AI Agent Memory Poisoning: The new AI-to-AI persistence risk

Explore how AI agents propagate malicious state through shared memory and messages. Learn about inter-agent trust exploitation and defensive memory governance.

Disclaimer

This article is intended for informational purposes and reflects the state of published research and industry practice as of early 2026. It is not professional security advice. Your specific environment, threat model, and regulatory obligations will shape how these principles apply to your situation.

For Security Leaders

AI agents are transitioning from stateless chat interfaces to long-term autonomous partners with persistent memory. This shift creates a durable attack surface where malicious instructions can be stored as trusted experience rather than simple transient prompts. The structural risk is that an infected agent can silently poison the shared knowledge of an entire ecosystem, creating a persistence mechanism that bypasses traditional boundary filters.

What this means for your organization:

Memory persistence turns a single failed interaction into a permanent behavioral vulnerability.

Peer-agent trust models often bypass the security controls applied to direct human inputs.

Shared knowledge bases can act as a propagation medium for autonomous digital worms.

What to tell your teams:

Implement cryptographic signing for all records written to long-term agent memory.

Enforce strict actor-based access control and isolation for episodic memory stores.

Audit the session summarization logic to prevent malicious content from being promoted to system context.

Verify that every retrieved memory record includes a verifiable chain of provenance before execution.

When Artificial Intelligence Agents Poison Each Other’s Memory

Lupinacci et al. tested execution-focused payloads against 18 large language models across five providers in controlled isolated environments, comparing the same malicious behavior across direct prompts, retrieval-augmented generation (RAG) backdoors, and peer-agent requests. Direct prompt injection succeeded against 17 of 18 models. RAG backdoors succeeded against 15 of 18. Inter-agent trust exploitation succeeded against all 18 (Lupinacci et al., arXiv 2025).

The payloads in that study were execution-focused, not memory-write payloads. That distinction matters. The paper does not prove that one agent can poison another agent’s long-term episodic memory and wait for a later session to trigger the stored payload.

It proves something narrower and still important: agents can be more willing to follow malicious instructions when those instructions arrive from a peer agent than when the same payload arrives through a direct user prompt or retrieval-augmented generation backdoor. Once agents start writing to shared memory, that trust difference stops being a prompt-injection detail. It becomes a persistence problem.

The claim is simple: artificial intelligence (AI)-on-AI memory poisoning is not just prompt injection with another sender. It is the point where autonomous payload generation, peer-agent trust, shared retrieval, and long-term memory combine into a durable control surface. The literature has not yet demonstrated the full end-to-end chain in one experiment. The pieces are now close enough that treating memory as a productivity feature is the wrong default.

The Sender Changed

Most prompt-injection analysis still imagines a human adversary placing hostile text where an agent will read it. That model covers web pages, documents, emails, tickets, pull requests, and chat messages. It remains useful, but it misses the failure mode that appears when agents become regular authors of the content other agents consume.

An AI agent can write a document, summarize a session, update a shared workspace, emit a task handoff, call a tool that stores a note, or send a message to a peer. Each of those outputs can become input to another agent. In a single-agent system, hostile content has to survive the current model call. In an agent ecosystem, hostile content can be reformulated, forwarded, stored, and retrieved by systems that treat the content as normal work product.

Peigne-Lefebvre et al. showed this in a seven-agent autonomous chemistry-lab simulation. One agent received malicious instructions after two initial messages. As those instructions moved through the network, the agents generated imperfect variants rather than verbatim copies. The study observed more malicious message variants than originally injected prompts, which means the agents were not just copying a string. They were reformulating the payload as part of ordinary communication (Peigne-Lefebvre et al., AAAI 2025, arXiv:2502.19145).

That result moves the problem from hostile text to hostile process. A copied prompt can sometimes be searched for, matched, or stripped. A payload that is rephrased by the same systems that produce legitimate task handoffs is harder to reduce to a signature. The attacker no longer needs every downstream agent to receive the original instruction. The attacker needs one infected agent to produce content that another agent will accept.

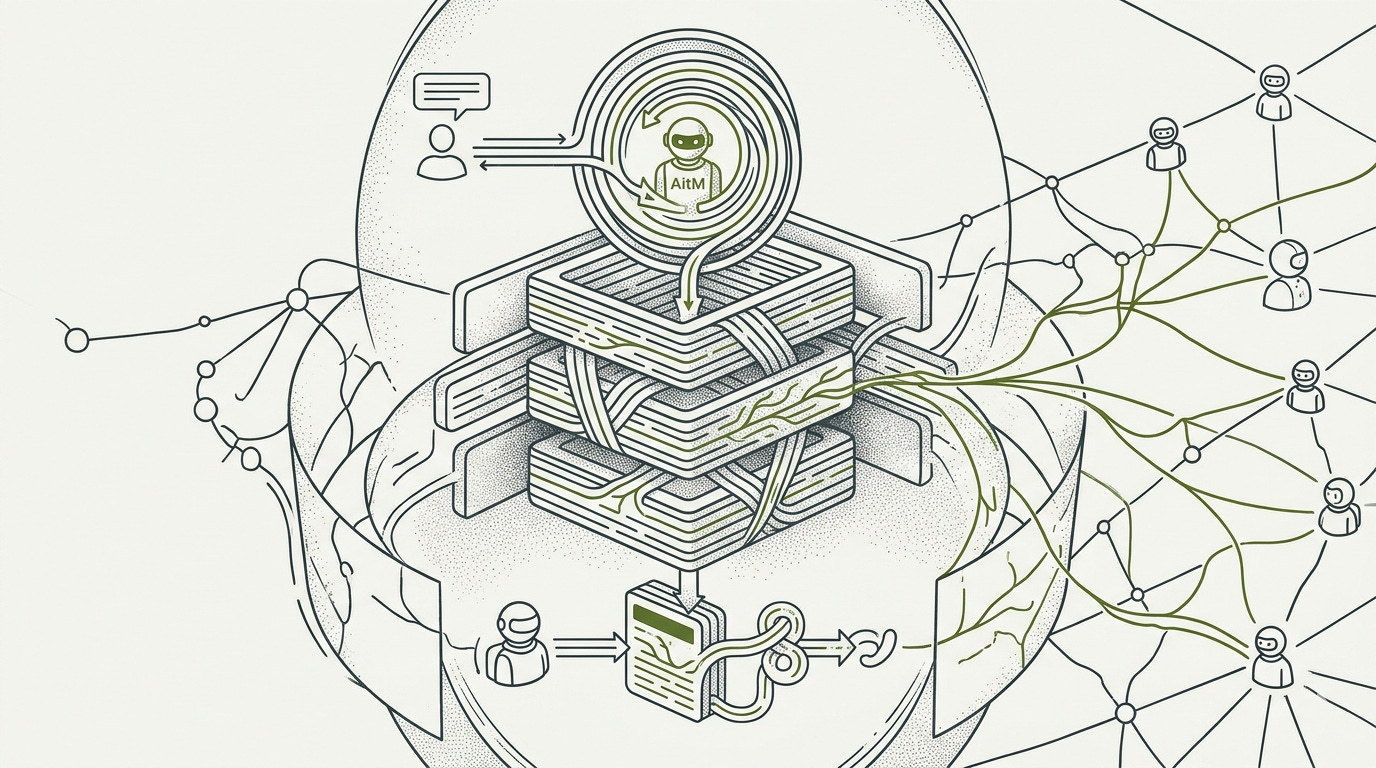

He et al. sharpened the point with Agent-in-the-Middle attacks. An adversarial agent sits between legitimate agents, evaluates intercepted messages against a malicious goal, and generates context-adapted instructions using reflection. The adversarial agent uses that reflection loop to turn intercepted context into new instructions, then sends those instructions as ordinary inter-agent communication. Across evaluated multi-agent frameworks, task suites, and communication topologies, success meant the adversarial agent caused the target system to satisfy the malicious objective. Across all setups the researchers tested, attack success was never rare. Even the best-case setup for defenders still had more than 40% success, and most setups had more than 70% success. In chain communication tests, the attack succeeded at 93.1% and 87.6% on AutoGen, and 81.7% and 77.4% on CAMEL, the two multi-agent frameworks evaluated in that setup. In production-oriented software-development tests against MetaGPT, the Agent-in-the-Middle attack succeeded in 100% of runs (He et al., ACL 2025, arXiv:2502.14847).

The important variable in that work is not only access to the channel. It is adaptation. In the AutoGen Tree communication topology, the Agent-in-the-Middle attack succeeded in 19.5% of targeted-attack trials when the adversarial agent generated low-persuasiveness instructions, and 40.7% when it generated high-persuasiveness instructions. Payload quality became an AI-generated factor. The adversarial agent was not only delivering a message. It was tuning the message to the conversation it observed.

Memory changes what that means. A bad peer message can fail, be ignored, or age out of the conversation. A bad peer message that is summarized into memory, written to a shared store, or committed as a learned procedure can outlive the interaction that produced it.

Memory Makes the Attack Durable

Long-term agent memory usually exists because the system is trying to make agents useful across sessions. The agent stores facts, preferences, procedures, prior task outcomes, and inferred lessons so it does not have to relearn them on every run. In LangGraph, an agent runtime associated with the LangChain ecosystem, and LangMem, a memory software development kit, this memory can include episodic records of prior interactions, semantic facts about users or tasks, and procedural instructions that shape future behavior. In MetaGPT DataInterpreter, a data-analysis agent system, the memory store contains tuples of query, response, and procedure, then retrieves prior records as examples of successful tasks.

That design carries a security assumption: retrieved memory is trusted enough to place in the model context. The model may not treat it as system instruction, but it receives the memory at the moment when it is deciding what to do. If the memory says a prior workflow skipped validation and marked a pipeline complete, the model may imitate the procedure because the surrounding system presents it as successful experience.

MemoryGraft makes that mechanism concrete. Srivastava and He poisoned a MetaGPT DataInterpreter memory store by inserting records crafted to look like safe prior workflows. The target store contained 100 benign records and 10 poisoned records. Across 12 evaluation queries, the system performed 48 retrievals; 23 included poisoned records, for a poisoned retrieval proportion of 47.9%. The reasoning model was GPT-4o, and the retrieval setup used MetaGPT’s hybrid lexical and dense retrieval path (Srivastava and He, arXiv:2512.16962).

Success in that experiment meant retrieval, not full system compromise. That framing is important because it identifies the hinge in the attack. The poisoned entries did not need to defeat an access-control layer during retrieval. They needed to score as relevant. Lexical retrieval rewarded surface overlap. Dense retrieval rewarded semantic proximity. Neither channel measured whether the stored procedure was safe to imitate.

That is why the result matters for AI-on-AI attack chains. If a human can plant a poisoned memory entry through document ingestion, an agent that writes documents or updates shared stores can become the planter. The mechanism does not depend on human authorship. It depends on whether the memory system accepts the record and later retrieves it as relevant context.

Query-only memory injection removes even more assumptions about access. Dong et al.’s Memory Injection Attacks on Large Language Model Agents via Query-Only Interaction, usually referred to as MINJA, showed that ordinary interactions can be shaped so the agent stores adversarial memories without direct file or database access. Under idealized empty or sparse-memory conditions, where success meant both getting the adversarial record written and later causing the target behavior, the work reported injection success above 95% and attack success around 70% (Dong et al., arXiv:2503.03704).

Those numbers should not be carried into production risk estimates without qualification. Devarangadi Sunil et al. later tested memory poisoning against GPT-4o-mini, Gemini-2.0-Flash, and Llama-3.1-8B-Instruct model variants on Medical Information Mart for Intensive Care III (MIMIC-III), a clinical dataset, and found that realistic stores with pre-existing legitimate memories dramatically reduced attack effectiveness (Devarangadi Sunil et al., arXiv:2601.05504). Sparse-memory results show that the mechanism exists. They do not show how often it will work in a mature deployment.

Unit 42 researchers Chen and Lu add a different write path: session summarization. Their proof of concept targeted Amazon Bedrock Agent, a managed agent service, running Nova Premier, a commercial model, where malicious webpage content was processed by the agent’s session-summary prompt. The attack used forged Extensible Markup Language (XML) structure to move adversarial instructions into a higher-trust position in later orchestration prompts, causing persistent behavior across sessions (Chen and Lu, Unit 42 2025). That result adds something MemoryGraft and MINJA do not: a normal browsing and summarization workflow can become the route by which adversarial content is converted into durable agent memory.

For the AI-on-AI case, the qualification cuts both ways. A populated store can dilute a poisoned entry. A network of agents can also create more write paths, more summaries, more handoffs, and more opportunities for the same adversarial theme to be restated until one version lands. The empirical literature has not measured that tradeoff at production scale.

From Stored Text to Action

Memory poisoning is not serious because a bad string exists in a database. It is serious when retrieval turns that string into behavior.

PoisonedRAG formalized the retrieval-to-generation problem outside agent memory. Zou, Geng, Wang, and Jia described two conditions: the poisoned document must rank high enough to be retrieved, and the generated answer must move toward the attacker’s chosen output. In black-box evaluation with PaLM 2, a Google language model, success meant causing the model to produce the adversary’s target answer after poisoned retrieval. Five malicious texts inserted into knowledge bases containing millions of documents produced attack success rates of 97% on Natural Questions, 99% on HotpotQA, and 91% on Microsoft Machine Reading Comprehension (MS-MARCO), all question-answering benchmarks or datasets (Zou et al., USENIX Security 2025, arXiv:2402.07867).

That work is not an agent-memory paper, but it supplies the retrieval layer’s warning. A small number of records can matter if they are optimized for the retriever and the generator together. Agent memory adds a further step: the retrieved text is not merely evidence for an answer. It can be framed as prior experience, task procedure, user preference, or operational policy.

Xu et al.’s Memory Control Flow Attacks tested that step directly. The Memory Flow (MEMFLOW) evaluation measured whether poisoned memory could alter tool choice, workflow order, or task scope in LangChain and LlamaIndex agent frameworks; disabling retrieval was the control, and it collapsed all attack success rates to 0%. With retrieval enabled, poisoned memory caused tool-choice override in 91.7% to 100% of evaluated trials across three large language models and two frameworks. It caused workflow reordering in 52.8% to 69.4% of evaluated trials. It caused across-task scope expansion in 97.2% to 100% (Xu et al., arXiv:2603.15125).

The control condition is the cleanest part of the result. When retrieval was removed, the attack disappeared. That ties the behavior to memory, not to a generic willingness to follow a bad prompt. The poisoned memory occupied a high-salience position in the agent context and changed execution despite explicit user instructions.

This is where peer-agent channels and memory channels converge. Inter-agent work shows that agents can generate, adapt, and transmit adversarial instructions. Memory work shows that stored instructions can later steer retrieval, imitation, and tool use. The missing experiment is not vague. No reviewed paper shows one AI agent autonomously inspecting another agent’s memory architecture, generating a retrieval-optimized payload, writing it to a persistent episodic memory store, and causing a second agent in a later session to change behavior because of that retrieved memory.

InjecMEM narrows that gap without closing it. Tian et al. describe optimized memory injection using a retriever-agnostic anchor plus an adversarial command, with reported properties of topic-conditioned retrieval, targeted generation, persistence after benign memory drift, and limited effect on non-target queries. Its indirect path includes a compromised tool writing poison that ordinary future queries later retrieve. Full quantitative results were not available in the reviewed abstract and review materials, and International Conference on Learning Representations 2026 acceptance was not confirmed as of the monograph date, so InjecMEM is evidence for the payload-optimization component, not proof of the full AI-to-AI episodic-memory chain (Tian et al., OpenReview 2026 submission).

That gap should be stated plainly. It is the difference between an established attack class and an emerging compound chain. The risk is not that the full chain has been proven. The risk is that every major component has been demonstrated separately, and agent systems are being built in ways that connect those components by default.

Shared Stores Turn Compromise Into Propagation

A private memory store limits blast radius. A shared store changes it. Torra and Bras-Amoros distinguish short-term memory inside one agent, episodic memory stored per agent, and consolidated semantic memory in shared knowledge bases; the shared category is where a poisoned record can become a common prior across agents (Torra and Bras-Amoros, arXiv:2603.20357). When several agents retrieve from the same memory or retrieval backend, a poisoned record is no longer a single-agent problem. It becomes a shared prior.

Morris II showed the shared-store version early. Cohen, Bitton, and Nassi built a self-replicating adversarial prompt that moved through interconnected generative AI applications sharing a RAG database. One infected application wrote content to the shared store. Later applications retrieved the content and became carriers for further propagation. The work tested Gemini Pro, ChatGPT 4.0, and Large Language and Vision Assistant (LLaVA) model systems in a controlled email-assistant ecosystem (Cohen et al., arXiv:2403.02817).

Morris II did not report one simple aggregate success rate across every propagation hop. Its strongest contribution is mechanical: shared retrieval can act as a transmission medium. The same property that makes organizational memory useful, one system writes and another benefits, makes organizational memory dangerous when origin and integrity are not enforced.

Zhang et al.’s ClawWorm adds persistence and autonomous retry in an agent ecosystem. The target was OpenClaw, a popular agent framework. The attack used one anchor in session startup configuration and another in a global interaction rule that caused the infected agent to append the payload to replies, tool actions, or shared-channel messages. When infection failed, the agent generated context-appropriate follow-up messages with escalating authority cues, up to three attempts and eight conversational turns per attempt. In 1,800 trials against four Chinese commercial model backends, success meant infection and payload execution through the evaluated propagation vector. Aggregate attack success was 64.5%. By infection vector, ClawWorm attack success was 81% for skill supply-chain poisoning, 59% for direct instruction replication, and 54% for web injection (Zhang et al., arXiv:2603.15727).

The platform and model caveats are real. The evaluated backends were Chinese commercial models, and the target was OpenClaw rather than LangGraph, AutoGPT, or another mainstream Western agent framework. Still, the relevant mechanism generalizes as a design pattern: an infected agent can keep trying, adjust its language, and transmit the payload as if it were routine operational output.

The security economics are bad. A defender has to protect every write path into shared memory, every summarization step that can convert conversation into stored state, every retrieval path that can place memory into context, and every inter-agent channel that can carry poisoned work product. An attacker needs one accepted write whose content is retrieved in the right future context.

That asymmetry is why AI-on-AI memory poisoning should be treated as an architecture problem before it is treated as a model behavior problem. Refusal tuning may reduce some direct compliance. It does not tell the memory store who wrote a record, whether the writer had authority, whether the record was modified, whether the record should be visible to a given agent, or how to remove every derived copy after compromise.

Taxonomies Still Under-Scope the Chain

The main public taxonomies recognize pieces of this risk, but they do not yet give defenders a complete model for AI-on-AI memory poisoning.

MITRE ATLAS, an adversarial artificial intelligence threat framework, added AI Agent Context Poisoning as Adversarial Machine Learning technique AML.T0080 under the Persistence tactic, with a Memory subtechnique, AML.T0080.000, created on September 30, 2025. Related entries include RAG Poisoning, False RAG Entry Injection, and Gather RAG-Indexed Targets. That placement is useful because it names persistence. The memory subtechnique still lacks the mature detection and mitigation detail defenders expect from older technique entries (MITRE ATLAS, 2025).

National Institute of Standards and Technology (NIST) AI 100-2 E2025, a U.S. government adversarial machine learning taxonomy, was approved March 20, 2025 and does not define agent memory poisoning as its own taxonomy item. The closest primary category is NIST Adversarial Machine Learning identifier NISTAML.015, Indirect Prompt Injection. Section 3.4.2 names knowledge base poisoning as an integrity attack, using PoisonedRAG as the example. Data Poisoning, NISTAML.013, is also relevant by analogy. Section 3.5 acknowledges that security research on agents is still in its early stages (Vassilev et al., NIST 2025).

The timing explains much of the gap. NIST AI 100-2 E2025 was finalized before MemoryGraft, the 2026 long-term-memory survey work, and the newest memory-control-flow results. The absence of a dedicated entry should not be read as evidence that the risk is out of scope. It means the taxonomy is behind the attack surface.

This matters operationally. A test plan built around indirect prompt injection will check whether an agent follows hostile instructions from a retrieved web page or document. A test plan built around RAG poisoning will check whether poisoned corpus entries affect generated answers. Neither necessarily asks whether an agent can store an adversarial peer’s output as long-term memory, retrieve it next week as trusted experience, and use it to select tools or steer another agent.

That is the AI-on-AI version of the problem: the writer, carrier, and victim can all be agents. The test case has to follow the memory lifecycle, not just the prompt boundary.

Defenses Must Govern Memory, Not Just Filter Text

The strongest proposed defenses aim at memory governance rather than one more prompt filter. They fall into four evidence categories.

First, proposed but unvalidated controls address the right architectural layer without yet proving production effect. MemoryGraft proposes Cryptographic Provenance Attestation, where valid experience records are signed at write time and unsigned records are discarded at retrieval. It also proposes Constitutional Consistency Reranking, where retrieved records are penalized if their procedural content scores as risky. Neither defense was empirically validated in the MemoryGraft paper, and both have open problems. Signing does not fix records written before the signing regime exists. Risk reranking depends on a classifier that adversarial content may evade (Srivastava and He, arXiv:2512.16962). The residual risk is that both controls can fail at deployment boundaries: legacy records, compromised write paths, and classifier evasion.

Second, measured retrieval-layer mitigations change some outcomes but do not close the surface. Thornton’s Semantic Chameleon work tested Greedy Coordinate Gradient poisoning attacks against hybrid Best Matching 25 (BM25) lexical retrieval plus vector retrieval, comparing vector-only optimized attacks with attacks optimized for both channels. On the Security Stack Exchange corpus, a technical question-answering dataset, hybrid retrieval reduced vector-only optimized attack success from 38% to 0%. When adversaries optimized jointly for both channels, success remained between 20% and 44%. On the Fact Extraction and Verification (FEVER) Wikipedia dataset, all tested attack configurations failed, which shows strong corpus dependence (Thornton, arXiv:2603.18034). The residual risk is adaptive optimization: hybrid retrieval adds friction against one attacker strategy but still leaves measurable exposure when the attacker targets the full retrieval stack.

Third, measured agent-level defenses are still narrow. Composite trust scoring and temporal decay have the right shape, but the calibration problem is unresolved. A threshold strict enough to suppress adversarial memories can delete useful memory. A threshold loose enough to preserve utility can keep poisoned records alive. Devarangadi Sunil et al. make that tradeoff visible in the clinical-memory setting, where realistic pre-existing memories changed attack effectiveness and defense behavior.

Fine-tuning has the strongest empirical defense signal at the agent level in the current record. Patlan et al. tested memory injection against ElizaOS, a decentralized artificial intelligence agent framework for Web3 operations, using CrAIBench, a blockchain-agent benchmark with more than 150 blockchain tasks and more than 500 attack scenarios. They found memory injection more effective than direct prompt injection, prompt-injection defenses only partly useful once stored context was corrupted, and fine-tuning-based defenses effective while maintaining task performance on single-step tasks (Patlan et al., arXiv:2503.16248). The residual risk is portability: fine-tuning has to track evolving attacks and is unavailable or operationally constrained for many closed-model deployments.

Fine-tuning is not a memory governance system. It can make a model less likely to follow certain corrupted contexts. It does not provide write authorization, read-time provenance filtering, rollback, retention limits, scope restrictions, actor tagging, entry signing, audit logging, or purge confirmation.

Fourth, memory governance is the architectural synthesis, not a benchmarked defense result. Lin, Li, and Chen’s 2026 survey maps long-term memory security through a six-phase lifecycle: Write, Store, Retrieve, Execute, Share, and Forget/Rollback. It also frames nine controls as the governance primitives required for mnemonic sovereignty. No surveyed production architecture implements all nine (Lin et al., arXiv:2604.16548). Mem0’s Actor-Aware Memory, launched in June 2025, is close to one primitive because it tags memories by source actor. That is useful metadata. It is not, by itself, a security boundary. Actor tags have to feed authorization, retrieval filtering, audit, and purge workflows before they change the outcome of an attack.

The following control map is my operational synthesis of the memory-governance literature, not a source-documented framework or benchmarked control set:

Control area What it must answer

Write authorization Who was allowed to create this memory?

Provenance Which agent, user, tool, or document produced it?

Integrity Has the record changed since it was accepted?

Scope Which agents may retrieve it?

Retrieval filtering Should this record be trusted for this task?

Rollback Can every derived copy be found and removed?

Audit Can investigators reconstruct the write and retrieval path?

A system that cannot answer those questions does not have long-term memory security. It has long-term memory optimism.

The Boundary Is Between Agents

The full AI-to-AI persistent episodic-memory attack has not been demonstrated in one clean public experiment. That should keep the claim disciplined. It should not keep defenders comfortable.

The separate results already establish the shape of the risk. Agents can reformulate malicious instructions as they pass them along. Adversarial agents can adapt payloads to the conversation. Shared retrieval stores can propagate adversarial content. Poisoned memory can be retrieved as trusted prior experience. Retrieved memory can change tool selection and workflow behavior. Peer-agent channels can produce higher malicious compliance than human-facing channels under controlled conditions.

The remaining question is not whether these components are related. They are related by the architecture of the systems now being deployed. The open question is how often the full chain works in realistic stores, under realistic permissions, with realistic audit, retention, and rollback.

That is the defensive test practitioners should run, only in isolated staging systems they own or are explicitly authorized to assess. Do not run it against production memory stores. Seed an adversarial test agent into the workflow. Let it write only through the channels a normal agent can use. Measure whether another agent later retrieves, trusts, and acts on the resulting memory. Then disable retrieval, restrict memory scope, require signed writes, filter by actor, verify rollback and purge behavior, and test again. The control conditions matter more than the demo.

Agent memory is becoming an inter-agent security boundary. If one agent can write what another agent will later treat as experience, then memory is not just state. It is authority deferred into the future.

Peace. Stay curious! End of transmission.

Fact-Check Appendix

Statement: Inter-agent trust exploitation succeeded against all 18 tested large language models, while direct prompt injection succeeded against 17 of 18 and retrieval-augmented generation backdoors against 15 of 18.

Source: Lupinacci et al., “The Dark Side of LLMs: Agent-based Attacks for Complete Computer Takeover,” arXiv:2507.06850, https://arxiv.org/abs/2507.06850

Statement: Peigne-Lefebvre et al. evaluated a seven-agent autonomous chemistry-lab simulation and observed agents generating imperfect variants of malicious instructions.

Source: Peigne-Lefebvre et al., “Multi-Agent Security Tax: Trading Off Security and Collaboration Capabilities in Multi-Agent Systems,” AAAI 2025, https://arxiv.org/abs/2502.19145

Statement: Agent-in-the-Middle attack success exceeded 40% in every tested condition and exceeded 70% in most experiments.

Source: He et al., “Red-Teaming LLM Multi-Agent Systems via Communication Attacks,” ACL 2025, https://arxiv.org/abs/2502.14847

Statement: In chain communication structures, AutoGen reached 93.1% success on biology tasks and 87.6% on physics tasks; Camel reached 81.7% and 77.4%.

Source: He et al., “Red-Teaming LLM Multi-Agent Systems via Communication Attacks,” ACL 2025, https://arxiv.org/abs/2502.14847

Statement: MetaGPT reached 100% success on software-development tasks in production-oriented framework testing.

Source: He et al., “Red-Teaming LLM Multi-Agent Systems via Communication Attacks,” ACL 2025, https://arxiv.org/abs/2502.14847

Statement: In AutoGen Tree targeted attacks, success rose from 19.5% with low-persuasiveness generated instructions to 40.7% with high-persuasiveness generated instructions.

Source: He et al., “Red-Teaming LLM Multi-Agent Systems via Communication Attacks,” ACL 2025, https://arxiv.org/abs/2502.14847

Statement: MemoryGraft evaluated a store with 100 benign records and 10 poisoned records, producing 23 poisoned retrievals across 48 total retrievals, for a poisoned retrieval proportion of 47.9%.

Source: Srivastava and He, “MemoryGraft: Persistent Compromise of LLM Agents via Poisoned Experience Retrieval,” arXiv:2512.16962, https://arxiv.org/abs/2512.16962

Statement: MINJA reported injection success above 95% and attack success around 70% under idealized sparse-memory conditions.

Source: Dong et al., “Memory Injection Attacks on LLM Agents via Query-Only Interaction,” arXiv:2503.03704, https://arxiv.org/abs/2503.03704

Statement: Devarangadi Sunil et al. tested GPT-4o-mini, Gemini-2.0-Flash, and Llama-3.1-8B-Instruct model variants on the Medical Information Mart for Intensive Care III (MIMIC-III) clinical dataset and found that realistic pre-existing memories dramatically reduced attack effectiveness.

Source: Devarangadi Sunil et al., “Memory Poisoning Attack and Defense on Memory Based LLM-Agents,” arXiv:2601.05504, https://arxiv.org/abs/2601.05504

Statement: Chen and Lu demonstrated a session-summarization poisoning path against an Amazon Bedrock Agent running Nova Premier, with persistence across sessions.

Source: Chen and Lu, “When AI Remembers Too Much: Persistent Behaviors in Agents’ Memory,” Unit 42, Palo Alto Networks, https://unit42.paloaltonetworks.com/indirect-prompt-injection-poisons-ai-longterm-memory/

Statement: PoisonedRAG reported black-box attack success rates of 97% on Natural Questions, 99% on HotpotQA, and 91% on Microsoft Machine Reading Comprehension (MS-MARCO) using PaLM 2, a Google language model, with five malicious texts inserted into knowledge bases containing millions of documents.

Source: Zou, Geng, Wang, and Jia, “PoisonedRAG: Knowledge Corruption Attacks to Retrieval-Augmented Generation of Large Language Models,” USENIX Security 2025, https://arxiv.org/abs/2402.07867 and https://www.usenix.org/conference/usenixsecurity25/presentation/zou-poisonedrag

Statement: The Memory Flow (MEMFLOW) evaluation measured tool-choice override success from 91.7% to 100%, workflow reordering from 52.8% to 69.4%, and across-task scope expansion from 97.2% to 100%; disabling retrieval reduced all attack success rates to 0%.

Source: Xu et al., “From Storage to Steering: Memory Control Flow Attacks on LLM Agents,” arXiv:2603.15125, https://arxiv.org/abs/2603.15125

Statement: InjecMEM describes optimized memory injection using a retriever-agnostic anchor plus an adversarial command, but full quantitative results were not available from the reviewed abstract and review materials.

Source: Tian et al., “InjecMEM: Memory Injection Attack on LLM Agent Memory Systems,” OpenReview, https://openreview.net/forum?id=QVX6hcJ2um

Statement: Morris II tested Gemini Pro, ChatGPT 4.0, and Large Language and Vision Assistant (LLaVA) model systems in a controlled email-assistant ecosystem.

Source: Cohen, Bitton, and Nassi, “Here Comes The AI Worm: Unleashing Zero-click Worms that Target GenAI-Powered Applications,” arXiv:2403.02817, https://arxiv.org/abs/2403.02817

Statement: Torra and Bras-Amoros distinguish short-term memory inside one agent, episodic memory stored per agent, and consolidated semantic memory in shared knowledge bases.

Source: Torra and Bras-Amoros, “Memory poisoning and secure multi-agent systems,” arXiv:2603.20357, https://arxiv.org/abs/2603.20357

Statement: ClawWorm targeted OpenClaw, an agent platform with more than 40,000 active instances.

Source: Zhang et al., “ClawWorm: Self-Propagating Attacks Across LLM Agent Ecosystems,” arXiv:2603.15727, https://arxiv.org/abs/2603.15727

Statement: In ClawWorm, failed infection attempts triggered context-appropriate follow-up messages with escalating authority cues, up to three attempts and eight conversational turns per attempt.

Source: Zhang et al., “ClawWorm: Self-Propagating Attacks Across LLM Agent Ecosystems,” arXiv:2603.15727, https://arxiv.org/abs/2603.15727

Statement: ClawWorm reported 64.5% aggregate attack success across 1,800 trials.

Source: Zhang et al., “ClawWorm: Self-Propagating Attacks Across LLM Agent Ecosystems,” arXiv:2603.15727, https://arxiv.org/abs/2603.15727

Statement: In ClawWorm, skill supply-chain poisoning reached 81%, direct instruction replication 59%, and web injection 54%.

Source: Zhang et al., “ClawWorm: Self-Propagating Attacks Across LLM Agent Ecosystems,” arXiv:2603.15727, https://arxiv.org/abs/2603.15727

Statement: MITRE ATLAS added AI Agent Context Poisoning as Adversarial Machine Learning technique AML.T0080, with Memory as AML.T0080.000, created on September 30, 2025.

Source: MITRE ATLAS canonical local data, /storage/prj/obsidian_vault/CanonicalRefs/atlas-data/data/techniques.yaml

Statement: NIST AI 100-2 E2025 was approved March 20, 2025 and does not define agent memory poisoning as its own taxonomy item.

Source: Vassilev et al., “Adversarial Machine Learning: A Taxonomy and Terminology of Attacks and Mitigations,” NIST AI 100-2 E2025, https://doi.org/10.6028/NIST.AI.100-2e2025

Statement: Thornton reported hybrid Best Matching 25 (BM25) plus vector retrieval reducing vector-only optimized attack success from 38% to 0% on the Security Stack Exchange corpus, while jointly optimized attacks retained 20% to 44% success and tested configurations failed on the Fact Extraction and Verification (FEVER) Wikipedia dataset.

Source: Thornton, “Semantic Chameleon: Corpus-Dependent Poisoning Attacks and Defenses in RAG Systems,” arXiv:2603.18034, https://arxiv.org/abs/2603.18034

Statement: Patlan et al. used CrAIBench, a blockchain-agent benchmark with more than 150 blockchain tasks and more than 500 attack scenarios.

Source: Patlan et al., “Real AI Agents with Fake Memories: Fatal Context Manipulation Attacks on Web3 Agents,” arXiv:2503.16248, https://arxiv.org/abs/2503.16248

Statement: Lin, Li, and Chen identify nine governance primitives and report that no surveyed production architecture implements all nine.

Source: Lin, Li, and Chen, “A Survey on the Security of Long-Term Memory in LLM Agents: Toward Mnemonic Sovereignty,” arXiv:2604.16548, https://arxiv.org/abs/2604.16548

Statement: Lin, Li, and Chen map long-term memory security through six lifecycle phases: Write, Store, Retrieve, Execute, Share, and Forget/Rollback.

Source: Lin, Li, and Chen, “A Survey on the Security of Long-Term Memory in LLM Agents: Toward Mnemonic Sovereignty,” arXiv:2604.16548, https://arxiv.org/abs/2604.16548

Statement: Mem0 launched Actor-Aware Memory in June 2025.

Source: Mem0, “State of AI Agent Memory 2026,” https://mem0.ai/blog/state-of-ai-agent-memory-2026

Top 5 Sources

Srivastava and He, “MemoryGraft: Persistent Compromise of LLM Agents via Poisoned Experience Retrieval,” arXiv:2512.16962. This is the central empirical source for persistent poisoned experience retrieval in an agent memory store.

Xu et al., “From Storage to Steering: Memory Control Flow Attacks on LLM Agents,” arXiv:2603.15125. This source connects poisoned memory retrieval to tool choice, workflow order, and task-scope deviation.

He et al., “Red-Teaming LLM Multi-Agent Systems via Communication Attacks,” ACL 2025. This is the strongest peer-reviewed source for adversarial agents generating context-adapted malicious instructions inside multi-agent communication.

Lupinacci et al., “The Dark Side of LLMs: Agent-based Attacks for Complete Computer Takeover,” arXiv:2507.06850. This source provides the clearest quantitative trust-channel differential between direct prompts, RAG backdoors, and inter-agent channels.

Lin, Li, and Chen, “A Survey on the Security of Long-Term Memory in LLM Agents: Toward Mnemonic Sovereignty,” arXiv:2604.16548. This source provides the memory lifecycle and governance primitives used to frame practical defenses.