NIST, ISO 42001 & IEEE: Technical Standards for AI Governance

Master the technical standards layer of AI governance. Learn how to implement NIST AI RMF, ISO 42001, and IEEE 7000 to bridge engineering and compliance.

Disclaimer

This article is intended for informational purposes and reflects the state of published research and industry practice as of early 2026. It is not professional security advice. Your specific environment, threat model, and regulatory obligations will shape how these principles apply to your situation.

TL;DR

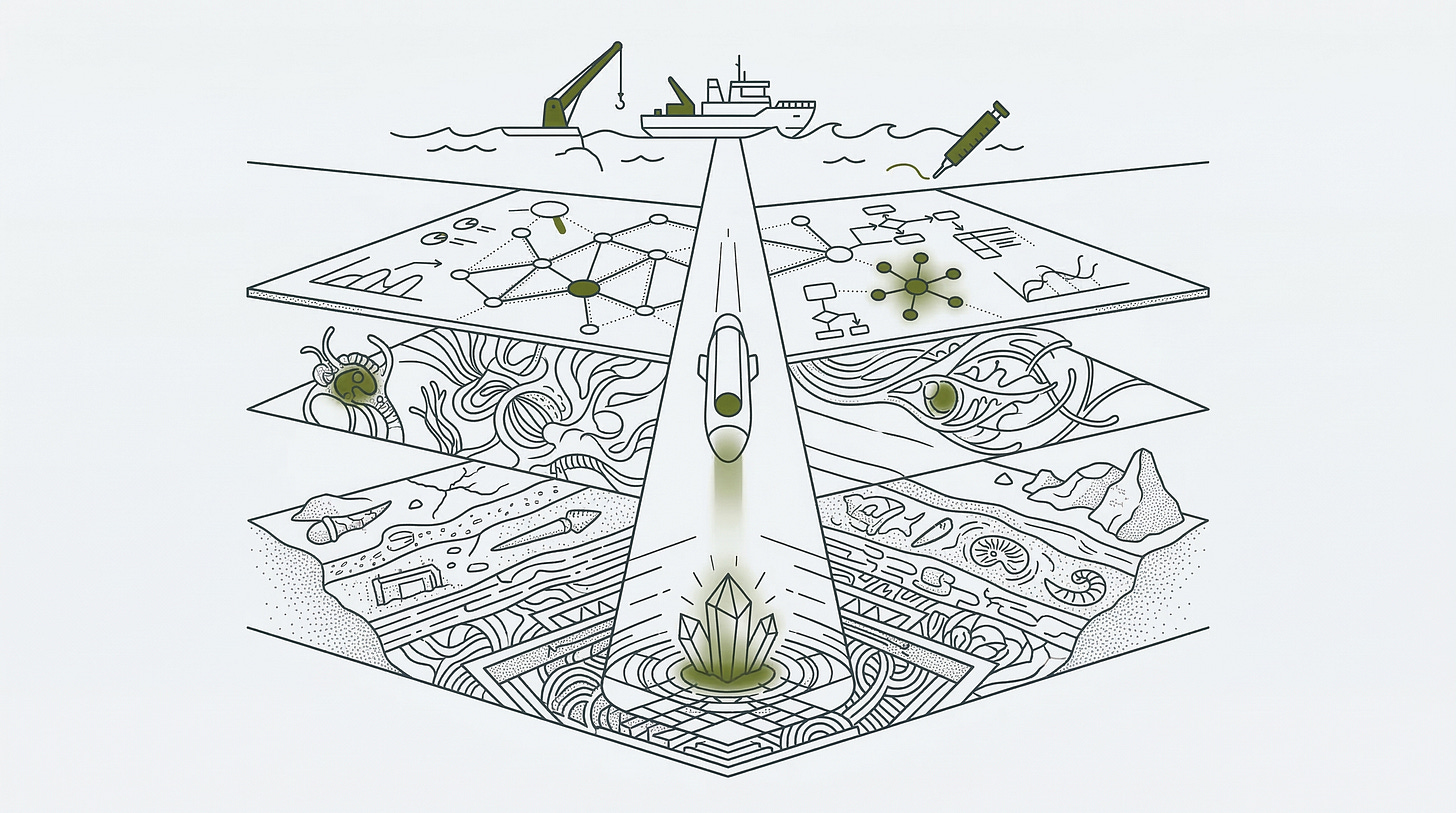

Vocabulary gaps destroy more compliance projects than any technical failure. On one side, engineers who have built a system for fourteen months; on the other, a compliance lead with an EU AI Act deadline on a whiteboard. They use the same words to mean completely different things. This article is your translation layer. I descend into the technical standards layer to show you exactly how NIST AI RMF, ISO 42001, and the IEEE 7000 series function as a sequence, not a list of options. NIST provides the risk management methodology: the field notes of your system’s life. ISO 42001 provides the receipts: the certifiable management system that external auditors actually accept. And the IEEE standards provide the upstream design values that make the whole stack coherent rather than retrofitted. If you reach for certification before the design-time and risk management work is in place, you are building documentation that satisfies auditors without reflecting reality. We cover the four functions of the NIST AI RMF, the certifiable controls of ISO 42001, and the specific role of the U.S. AI Safety Institute’s NIST AI 800-1 for foundation models. This is the operational backbone of your governance strategy.

The Itch: Why This Matters Right Now

In the last article, I handed you a map.

Three layers: technical standards at the base, binding law in the middle, international coordination at the top. The map tells you what territory exists. This article is about descending into the bottom layer, the one that belongs to engineers and risk managers, and figuring out which tool does what.

Here is the scenario I keep hearing about.

A compliance lead walks into a room with three engineers. The engineers have been building an AI system for fourteen months. The compliance lead has a regulatory deadline on a whiteboard. The EU AI Act’s high-risk system obligations land in August 2026. The organization has EU-facing deployments. Someone upstairs has decided that “ISO 42001 certification” is the answer.

The engineers have never heard of ISO 42001. They have heard of NIST, loosely, from a security context. One of them thinks the IEEE is where you submit conference papers.

The compliance lead cannot explain why ISO 42001 is the right tool without understanding what the engineers have actually built. The engineers cannot satisfy a certification audit without understanding what the compliance lead is actually measuring.

One engineer describes the system’s bias mitigation: “We ran fairness evals on the test set.” The compliance lead needs that to map to a documented AI system impact assessment under Clause 6.1.4. It does not. Not yet.

Nobody in that room is incompetent. The vocabulary is missing.

That vocabulary gap has a specific cost. Organizations that cannot bridge it drift toward two failure modes: engineers building well-functioning systems that fail conformity assessments because the documentation never matched the architecture; and compliance leads publishing responsible AI policies that satisfy internal reviewers but carry no weight with an external auditor. Both groups work hard and produce the wrong artifact.

The technical standards layer contains three core tools, and a fourth specialist document for a specific audience. Understanding what each one actually does, and in what sequence you reach for them, is the translation layer that makes both groups useful to each other.

The Deep Dive: The Struggle for a Solution

The first tool is a risk management methodology, not a compliance checklist.

The NIST AI Risk Management Framework 1.0 arrived January 26, 2023, built through an eighteen-month public process with input from more than 240 contributing organizations. Think of it as a highly organized field notebook for everyone involved in an AI system’s life: from the team that trains the model, to the organization that deploys it, to the users who depend on its outputs.

The framework organizes itself around four functions: Govern, Map, Measure, and Manage.

Govern does not mean “add a governance committee.” It means: establish the organizational conditions, the risk tolerance definitions, the accountability structures, and the cultural norms that make the other three functions possible. Without Govern, the remaining functions produce activity without ownership. Accountability blurs, incidents get absorbed rather than addressed, and documentation accumulates without anyone who is genuinely responsible for what it says.

Map is where you place context around a specific AI system. Who are the actual stakeholders? What are the intended uses, and what foreseeable misuses need to be surfaced early? What risk categories apply to this particular deployment context? Map produces no certificates and generates no audit trail in any formal sense. What it produces is shared understanding, the kind that prevents the engineering team and the compliance team from discovering, mid-audit, that they were describing the same system in completely different terms.

Measure is where that shared understanding becomes documented evidence. Testing, evaluation, validation, and verification activities, applied to the specific system, across reliability, fairness, safety, and security properties. The NIST AI 600-1 Generative AI Profile, published July 2024, extends this function explicitly to foundation models, adding confabulation, manipulation risk, and data privacy as measurable properties alongside the standard trustworthiness characteristics.

Manage is the response layer. Risk treatments get selected and implemented. Incident response protocols get built before they are needed. Decommissioning procedures get documented while the system is still healthy. The NIST AI RMF Playbook, a filterable companion resource updated approximately twice annually based on community feedback, provides suggested actions for every subcategory across all four functions.
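One way to make the four functions concrete is a small risk register in which every entry is tagged with the function it belongs to. The sketch below is illustrative only: the `RiskEntry` fields, the `unowned_entries` and `coverage` helpers, and the example system name are assumptions of this example, not structures defined by the NIST AI RMF or its Playbook.

```python
from dataclasses import dataclass
from enum import Enum


class Function(Enum):
    GOVERN = "Govern"
    MAP = "Map"
    MEASURE = "Measure"
    MANAGE = "Manage"


@dataclass
class RiskEntry:
    system: str
    function: Function
    description: str
    owner: str = ""      # named accountable person; empty means unowned
    evidence: str = ""   # pointer to a test report, decision log, etc.


def unowned_entries(register):
    """Return entries with no accountable owner (a Govern gap)."""
    return [e for e in register if not e.owner.strip()]


def coverage(register, system):
    """Return the set of functions that have at least one entry for a system."""
    return {e.function for e in register if e.system == system}


# Hypothetical register for a hypothetical system.
register = [
    RiskEntry("loan-scorer", Function.GOVERN, "Risk tolerance defined", owner="A. Rivera"),
    RiskEntry("loan-scorer", Function.MAP, "Foreseeable misuse listed", owner="J. Chen"),
    RiskEntry("loan-scorer", Function.MEASURE, "Fairness eval on test set"),
]

missing = set(Function) - coverage(register, "loan-scorer")
print("Functions with no entry:", sorted(f.value for f in missing))
print("Entries without an owner:", [e.description for e in unowned_entries(register)])
```

In this example the register has nothing under Manage, and the fairness evaluation has no owner. That is the "activity without ownership" pattern described under Govern, made visible in a few lines.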

Here is what the framework does not do: it does not generate a certificate. There is no external auditor, no conformity assessment body, no three-year certification cycle. The framework is free to download, voluntary in every jurisdiction, and flexible enough for a five-person startup and a global enterprise to use simultaneously. That flexibility is its primary strength for practitioners. And it is exactly why it cannot, by itself, satisfy an external compliance requirement.

The second tool closes that gap.

ISO/IEC 42001:2023 arrived in December 2023 as the first certifiable international AI management system standard. It follows the same Annex SL harmonized structure as ISO 27001 for information security and ISO 9001 for quality management. If your organization already holds either of those certifications, the documentation architecture, the internal audit cycles, and the management review processes are already familiar. ISO 42001 integrates with them rather than replacing them.

Think of ISO 42001 as the receipts that make your NIST field notes auditable by someone outside your organization. The NIST framework tells you what to think about. ISO 42001 specifies what to write down, what to demonstrate to an independent third party, and what a certification body verifies before issuing a certificate that another organization will actually accept.

The standard is organized into ten clauses, of which Clauses 4 through 10 carry the auditable requirements, and 38 controls in its normative Annex A. The controls span AI policy, organizational accountability, resource documentation, impact assessment, full AI system lifecycle management, data governance, transparency obligations, responsible use objectives, and third-party relationships. Not all 38 apply to every organization: the Statement of Applicability documents which controls you selected, and provides documented justification for each exclusion.
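The Statement of Applicability rule is simple enough to check mechanically: every excluded control needs a written justification. Here is a minimal sketch of that check. The `SoAEntry` structure and the control identifiers (`A.x.1` and so on) are placeholders invented for illustration; substitute the real Annex A identifiers from your licensed copy of the standard.

```python
from dataclasses import dataclass


@dataclass
class SoAEntry:
    control_id: str          # placeholder ID; use the real Annex A identifier
    applicable: bool
    justification: str = ""  # required whenever a control is excluded


def unjustified_exclusions(entries):
    """Return IDs of excluded controls that lack a written justification."""
    return [
        e.control_id
        for e in entries
        if not e.applicable and not e.justification.strip()
    ]


# Hypothetical entries for illustration only.
soa = [
    SoAEntry("A.x.1", applicable=True),
    SoAEntry("A.x.2", applicable=False,
             justification="No third-party model suppliers in scope"),
    SoAEntry("A.x.3", applicable=False),
]

print(unjustified_exclusions(soa))  # ['A.x.3']
```

A check like this does not replace the auditor's judgment about whether a justification is adequate. It only catches the exclusion that nobody wrote down.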

Two things distinguish ISO 42001 from its management system siblings.

The first is an obligation that ISO 27001 does not contain: the AI system impact assessment. This is a formal, documented process for evaluating the potential consequences of an AI system on individuals, groups, and societies throughout the system’s lifecycle. It is not a risk register in the cybersecurity sense. Its scope extends to fairness, human rights, financial consequences, psychological well-being, and societal impacts, properties that a traditional information security assessment does not reach.
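To see why "we ran fairness evals on the test set" does not yet amount to an impact assessment, it helps to lay the scope out as data. The sketch below assumes a simple dictionary keyed by the impact dimensions named above. The key names, the sample findings, and the report reference are hypothetical, and the standard does not prescribe this layout.

```python
# Impact dimensions taken from the paragraph above; the key names are this sketch's own.
DIMENSIONS = (
    "fairness",
    "human_rights",
    "financial",
    "psychological_wellbeing",
    "societal",
)


def missing_dimensions(assessment):
    """Return the dimensions that have no documented finding."""
    return [d for d in DIMENSIONS if not assessment.get(d, "").strip()]


# Hypothetical assessment: the team documented two dimensions out of five.
assessment = {
    "fairness": "Error-rate disparity evaluated on held-out test set; see report R-12.",
    "financial": "Incorrect denial may delay access to credit; appeal path documented.",
}

print(missing_dimensions(assessment))
```

Running it lists human rights, psychological well-being, and societal impact as undocumented. The fairness evaluation is real evidence, but it covers one dimension of a wider assessment.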

The second distinction is its regulatory positioning. Article 40 of the EU AI Act establishes a presumption of conformity for high-risk AI systems that conform to harmonised European standards referenced in the Official Journal of the European Union. ISO 42001 is under active consideration for that harmonization by the CEN-CENELEC standards bodies. If that harmonization formalizes, an ISO 42001 certificate becomes documented evidence that maps directly to AI Act conformity assessment requirements; until then, it supports a conformity case without creating the presumption. The certification earned under procurement pressure today may become the regulatory credit that closes an audit in 2026.

The certification pathway is substantive. A Stage 1 documentation review and a Stage 2 implementation audit, conducted by an accredited conformity assessment body. A three-year certification cycle with annual surveillance audits. Whether these audits can run as an integrated extension of an existing ISO 27001 engagement or require a separate certification body relationship depends on your accreditor and scope definition. Confirm this with your certification body before assuming shared audit cycles. The ANAB launched its ISO 42001 accreditation program in January 2024; within the first year, 15 certification bodies had applied for accreditation. AWS became the first major cloud provider to achieve accredited certification, covering Bedrock, Q Business, Textract, and Transcribe, with the certificate issued by Schellman Compliance LLC.

What ISO 42001 certification confirms: that your organization’s management system for AI is robust, documented, and independently verified. What it does not confirm: that any specific AI product is safe, unbiased, or compliant with any particular regulation. The certificate covers the process infrastructure. Whether the systems operating inside that infrastructure are trustworthy depends on what you build inside it.

The third tool is the one nobody explains in relation to the other two.

The IEEE 7000 series is built by engineers, for engineers, at the design stage, before either NIST or ISO becomes operationally relevant. IEEE Std 7000-2021 establishes a process for embedding ethical values into system design from the earliest concept phase. IEEE Std 7001-2021, the Transparency of Autonomous Systems standard, operationalizes transparency as a measurable, testable property, with pass-or-fail level definitions for five distinct stakeholder groups: end users, the general public and bystanders, safety certification agencies, accident investigators, and lawyers or expert witnesses.

A peer-reviewed survey of 84 AI ethics guidelines, drawn from 74 distinct AI ethics initiatives, found transparency to be the single most consistently prioritized value across the global AI governance ecosystem, appearing in 87% of all guidelines surveyed. IEEE Std 7001-2021 is the only document that translates that consensus into engineering specifications a developer can actually implement.
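Treating transparency as a testable property means you can compare a target level against an assessed level for each stakeholder group and list the shortfalls. The following is a rough sketch under stated assumptions: the group keys, the integer scale (higher means more transparent), and the `transparency_gaps` helper are illustrative, and the actual level definitions must come from IEEE Std 7001-2021 itself.

```python
def transparency_gaps(target, assessed):
    """Map each group to (target, assessed) where the assessed level falls short.

    Groups missing from `assessed` are treated as level 0 (not yet assessed).
    """
    return {
        group: (level, assessed.get(group, 0))
        for group, level in target.items()
        if assessed.get(group, 0) < level
    }


# Hypothetical target and assessed levels for three stakeholder groups.
target = {"end_users": 2, "accident_investigators": 3, "general_public": 1}
assessed = {"end_users": 2, "accident_investigators": 1}

print(transparency_gaps(target, assessed))
```

Here the end-user target is met, while accident investigators and the general public show gaps. A table like this is the kind of artifact an engineer can own and a compliance lead can read.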

The relationship between IEEE standards and the other two tools is where most practitioners go wrong: they treat these as parallel options rather than sequential layers. The IEEE standards belong upstream.

IEEE Std 7000-2021’s ethical values elicitation process produces the stakeholder-grounded ethical requirements that inform NIST’s MAP function. The transparency specifications generated by IEEE Std 7001-2021’s System Transparency Specification process feed directly into ISO 42001’s information obligations in Annex A.8 and its impact assessment requirements under Clause 6.1.4. When organizations encounter the IEEE standards after their architecture is frozen, those values have to be retrofitted into documentation that never anticipated them. Expensive. Often incomplete. Always visible to a careful auditor.

For organizations building AI systems, the IEEE standards are the design-time work that makes NIST documentation coherent and ISO 42001 audits defensible. Done in the right sequence, they remove the scramble. A practical starting point for any product team: at the moment you document a system’s intended use, ask explicitly which values the system must protect and which harms it must not produce, then write down both the answers and the names of the people who gave them.
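That starting point can be captured in a record small enough to live next to the design document. The sketch below is one possible shape, not a format prescribed by IEEE Std 7000-2021: the `ValueRecord` fields, the `is_traceable` check, and the sample names are all assumptions of this example.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class ValueRecord:
    system: str
    intended_use: str
    values_to_protect: list
    harms_to_avoid: list
    elicited_from: list  # names of the people who gave the answers
    recorded_on: date = field(default_factory=date.today)

    def is_traceable(self):
        """True when both answers exist and are attributed to named people."""
        return bool(
            self.values_to_protect and self.harms_to_avoid and self.elicited_from
        )


# Hypothetical record for a hypothetical system.
record = ValueRecord(
    system="loan-scorer",
    intended_use="Rank consumer loan applications for manual review",
    values_to_protect=["equal treatment", "explainability to applicants"],
    harms_to_avoid=["denial driven by proxy for protected attribute"],
    elicited_from=[],  # nobody recorded yet, so the record is not traceable
)

print(record.is_traceable())  # False
```

The record above has answers but no names, so it fails the check. Attribution is the part that gets lost first and the part an auditor asks about later.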

There is a fourth document for a specific audience.

NIST AI 800-1, “Managing Misuse Risk for Dual-Use Foundation Models,” was produced by the U.S. AI Safety Institute at NIST and was reported as finalized in mid-2025 after two rounds of public comment, though practitioners should verify current status at nvlpubs.nist.gov. It is not a general governance framework. It targets one population: organizations developing or deploying foundation models capable of contributing to CBRN weapons development, large-scale offensive cyber operations, or related national security threat categories.

Its seven objectives, covering anticipation of model capabilities, organizational planning, protection of model weights, red-teaming and misuse measurement, active mitigation, incident response, and transparency disclosure, extend the NIST AI RMF’s MEASURE and MANAGE functions into threat-profiling methodology that the parent framework does not specify.

If your organization is not developing or deploying foundation models at that capability threshold, NIST AI 800-1 is background reading. If it is, this document is the most technically detailed AI governance guidance currently in the NIST ecosystem for this specific surface area, and its supply chain role taxonomy, covering cloud service providers, model hosting platforms, downstream adapters, and third-party evaluators, is more granular than anything in ISO 42001 Annex A.10.

The Resolution: Your New Superpower

Here is the sequence that makes the translation layer operational.

The IEEE standards come first. At design time, before architectural decisions are locked, run the values elicitation process from IEEE Std 7000-2021. Identify the transparency obligations your system will carry toward each stakeholder group using IEEE Std 7001-2021’s System Transparency Specification process. Document those specifications. This is the upstream work that makes everything downstream coherent rather than retrofitted.

The NIST AI RMF comes second, running continuously across the full system lifecycle. Use it to build shared organizational vocabulary around risk, to document context for each AI system through the MAP function, and to structure your testing and evaluation activities as formal evidence rather than informal validation. The Playbook is free. Start with the Govern function: define your risk tolerance, assign accountability for each system, and work outward. No external auditor. No budget line beyond the time to do it properly.

ISO 42001 comes third, when the receipts need to be verified by someone outside your organization. The trigger points are procurement requirements from enterprise customers who need third-party assurance, regulatory readiness preparation for EU AI Act conformity assessments, or public accountability commitments that require independent validation. Treat your NIST implementation as the substantive foundation; ISO 42001 formalizes and certifies what you have already built.
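The ordering can be expressed as a tiny check that reports the earliest missing layer. This is a deliberately simplified sketch: the artifact keys and the `next_step` function are invented for illustration, and in practice the NIST work runs continuously rather than finishing before ISO 42001 begins.

```python
# Stages in the order described above; labels and keys are this sketch's own.
STAGES = [
    ("IEEE design-time values and transparency specification", "values_spec"),
    ("NIST AI RMF documentation", "rmf_docs"),
    ("ISO 42001 certification audit", "iso_cert"),
]


def next_step(artifacts):
    """Return the first stage whose artifact is missing, or None if all exist."""
    for label, key in STAGES:
        if not artifacts.get(key, False):
            return label
    return None


# An organization with NIST documentation but no upstream IEEE work
# is still pointed back to the design-time stage.
print(next_step({"rmf_docs": True}))
```

The point of the example is the first-missing-wins behavior: having later artifacts does not excuse the absence of earlier ones.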

The classification question from the first article in this series applies here with more precision: before beginning an ISO 42001 certification project, identify which of your EU-facing AI systems fall under the Act’s high-risk category. The certification effort should be scoped around the systems carrying the highest regulatory exposure, not applied uniformly across every AI deployment your organization operates. That scoping conversation is where legal, compliance, engineering, and product have to be in the same room, and it surfaces the vocabulary gap faster than any other exercise.
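Once the classification has been made, the scoping itself is a filter over your system inventory. The sketch below assumes the `high_risk` flag is the recorded outcome of your own legal analysis; nothing here computes that classification. The `AISystem` fields and the example inventory are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    name: str
    eu_facing: bool
    high_risk: bool  # result of legal classification, entered by hand


def certification_scope(systems):
    """Return names of EU-facing systems classified as high-risk, sorted."""
    return sorted(s.name for s in systems if s.eu_facing and s.high_risk)


# Hypothetical inventory.
inventory = [
    AISystem("resume-screener", eu_facing=True, high_risk=True),
    AISystem("internal-search", eu_facing=False, high_risk=False),
    AISystem("loan-scorer", eu_facing=True, high_risk=True),
    AISystem("marketing-copy-bot", eu_facing=True, high_risk=False),
]

print(certification_scope(inventory))  # ['loan-scorer', 'resume-screener']
```

Two of the four systems end up in scope. The useful part is filling in the `high_risk` column together, because that is where the disagreement about what each system actually does comes out.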

If you are in compliance or legal, the sequencing above belongs in front of your engineering leads today. The August 2026 deadline for full high-risk system compliance is inside your planning horizon. A gap assessment is not a documentation project; it is a conversation about what evidence your organization can actually produce. Start that conversation with the classification question rather than a certification roadmap, and the roadmap will follow more cleanly.

If you are an engineer, the IEEE standards are worth dedicated time regardless of whether your organization has a certification initiative in motion. The values elicitation and transparency specification processes are the upstream work that prevents the downstream situation where compliance requirements arrive after the architecture is frozen and the only remaining options are expensive retrofits or documented exceptions.

The translation layer is not abstract. It is three tools, used in sequence, each one reinforcing the others. The organizations that internalize that sequence will spend the next two years building durable systems. The ones that reach for ISO 42001 first, without the NIST foundation and IEEE upstream work already in place, will spend them producing documentation that satisfies auditors without reflecting reality.

The next article in this series descends into the binding law layer: specifically, how the EU AI Act’s risk classification system works in practice, what a conformity assessment actually requires of high-risk system providers, and how the voluntary certifications covered in this article connect to the mandatory obligations that are already running.

An organization that arrives at an August 2026 conformity assessment without NIST documentation, without ISO 42001 in place, and without upstream IEEE values traceability does not fail quietly. It fails in front of a regulator, with a system already in production and users already affected.

The translation layer exists. The sequence is documented. The tools are available. What happens next depends on whether your organization treats governance as infrastructure or as paperwork. Only one of those survives an audit.

Fact-Check Appendix

Statement: NIST AI RMF 1.0 was released January 26, 2023, developed through an 18-month public comment process with more than 240 contributing organizations. | Source: NIST AI 100-1 (NIST AI RMF 1.0), January 2023 | https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf

Statement: NIST AI 600-1, the Generative AI Profile, was published July 26, 2024. | Source: NIST AI 600-1, July 2024 | https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.600-1.pdf

Statement: ISO/IEC 42001:2023 contains 38 reference controls, grouped under nine control objectives, in its normative Annex A. | Source: ISO/IEC 42001:2023, Annex A (normative), Table A.1 | https://www.iso.org/standard/42001

Statement: The ANAB launched its ISO/IEC 42001 accreditation program in January 2024, with 15 certification bodies applying within the first year. | Source: ANAB, “ISO/IEC 42001: Artificial Intelligence Management Systems (AIMS)” | https://blog.ansi.org/anab/iso-iec-42001-ai-management-systems/

Statement: AWS became the first major cloud provider to achieve accredited ISO/IEC 42001 certification, covering Amazon Bedrock, Amazon Q Business, Amazon Textract, and Amazon Transcribe, with the certificate issued by Schellman Compliance LLC. | Source: AWS Blog, “AWS achieves ISO/IEC 42001:2023 Artificial Intelligence Management System accredited certification” | https://aws.amazon.com/blogs/machine-learning/aws-achieves-iso-iec-420012023-artificial-intelligence-management-system-accredited-certification/

Statement: IEEE Std 7000-2021 was published September 15, 2021, under the IEEE Systems and Software Engineering Standards Committee. | Source: IEEE Std 7000-2021 | https://doi.org/10.1109/IEEESTD.2021.9536679

Statement: A survey of 84 AI ethics guidelines, drawn from 74 distinct AI ethics initiatives, found transparency to be the most frequently included ethical principle, appearing in 87% of all guidelines surveyed. | Source: Jobin, Ienca, and Vayena (2019), “The Global Landscape of AI Ethics Guidelines,” Nature Machine Intelligence | https://doi.org/10.1038/s42256-019-0088-2

Statement: Article 40 of the EU AI Act establishes a presumption of conformity for high-risk AI systems that conform to harmonised European standards referenced in the Official Journal; ISO 42001 is under active consideration for harmonization by CEN-CENELEC. | Source: DLA Piper, “The Role of Harmonised Standards as Tools for AI Act Compliance,” January 2024 | https://www.dlapiper.com/en/insights/publications/2024/01/the-role-of-harmonised-standards-as-tools-for-ai-act-compliance

Statement: NIST AI 800-1 (Second Public Draft), “Managing Misuse Risk for Dual-Use Foundation Models,” was released January 2025 with a public comment period closing March 15, 2025; a final version is reported as published mid-2025. | Source: NIST AI 800-1 (Second Public Draft), U.S. AI Safety Institute | https://doi.org/10.6028/NIST.AI.800-1.2pd

Statement: The EU AI Act’s high-risk system full compliance deadline is August 2026. | Source: European Commission, EU AI Act Official Summary | https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

Top 5 Prestigious Sources

NIST AI Risk Management Framework 1.0 (NIST AI 100-1), U.S. Department of Commerce / NIST, January 2023 | https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf

ISO/IEC 42001:2023, Information technology: Artificial intelligence: Management system, ISO/IEC JTC 1/SC 42, December 2023 | https://www.iso.org/standard/42001

IEEE Std 7000-2021, Model Process for Addressing Ethical Concerns During System Design, IEEE Systems and Software Engineering Standards Committee | https://doi.org/10.1109/IEEESTD.2021.9536679

Jobin, A., Ienca, M., and Vayena, E. (2019), “The Global Landscape of AI Ethics Guidelines,” Nature Machine Intelligence | https://doi.org/10.1038/s42256-019-0088-2

European Commission, Regulation (EU) 2024/1689 (EU AI Act), Official Summary | https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

Peace. Stay curious! End of transmission.