AI Governance Framework - The Three-Layer Compliance Stack

Navigate global AI regulation with the Three-Layer Stack. Learn how the EU AI Act, NIST AI RMF, and ISO 42001 define the future of AI compliance and security.

Disclaimer

This article is intended for informational purposes and reflects the state of published research and industry practice as of early 2026. It is not professional security advice. Your specific environment, threat model, and regulatory obligations will shape how these principles apply to your situation.

TL;DR

In the past, security professionals would chase the latest exploit while ignoring the structural floor beneath them. AI governance is currently that floor, and it is shifting faster than most teams can track. We have spent two years blurring ‘safety,’ ‘regulation,’ and ‘responsible AI’ into a single, vague obligation. This conflation is an operational trap. Picture a compliance lead discovering mid-audit that their ‘Responsible AI’ policy does not meet the EU AI Act’s conformity assessment requirements. The gap between intent and evidence carries real legal liability, and several of these obligations are already active. I built the Three-Layer Stack to stop this discovery from happening to you. From the technical standards of NIST AI RMF to the binding extraterritoriality of the EU AI Act and the international consensus of Bletchley Park, this is your map to the new regulatory landscape. We move from tool-based sectoral rules to infrastructure-level governance. If you are building AI that touches EU users, the deadlines are already inside your planning horizon. It is time to stop reacting and start architecting for compliance.

The Itch: Why This Matters Right Now

You have probably noticed the word “governance” appearing in every AI conversation you have had in the last few years.

In strategy decks. In compliance audits. In job titles that did not exist in 2021. In regulatory filings from agencies that, until recently, had nothing to say about machine learning.

Here is the uncomfortable part: most of those conversations are using the same word to mean four different things. AI governance, AI safety, AI regulation, and responsible AI get collapsed into a single vague obligation that nobody in the room can define with precision. And when the terms blur, the accountability blurs with them.

Picture the moment a compliance lead discovers, mid-audit, that the responsible AI policy their organization published two years ago does not constitute a conformity assessment under the EU AI Act. The policy documented intent. The Act requires documented evidence. Those are not the same thing, and the gap between them carries legal liability. That conflation of “responsible AI” with “regulatory compliance” is the specific failure mode this map is designed to prevent. The three-layer stack tells you, in advance, exactly which document satisfies which obligation, so that discovery never happens mid-audit.

Several of these obligations are already active, and others arrive within eighteen months. If you are building, deploying, or advising on AI systems that touch users in the European Union, some of those obligations became mandatory in August 2025.

The map exists. You just have not been handed it yet.

The Deep Dive: The Struggle for a Solution

Let me take you back to late 2021.

The dominant mental model for AI in regulatory circles was sectoral. Credit scoring AI sat under financial services regulation. Diagnostic AI sat under healthcare frameworks. Hiring algorithms sat under employment law. The technology was treated as a tool, powerful in specific domains, governable through existing domain-specific rules.

That model broke in a very specific way in November 2022.

The public release of a large language model that could draft legal documents, synthesize medical protocols, write executable code, and respond to emotional distress simultaneously did something that no previous AI deployment had done: it collapsed the sectoral categories. You could not regulate it as a financial tool, because it was also a healthcare tool. You could not regulate it as a communication platform, because it was also a development environment.

AI moved from tool to infrastructure. And the regulatory frameworks built for tools were structurally inadequate for infrastructure.

The institutional response, by governance standards, was fast. In January 2023, the U.S. National Institute of Standards and Technology published its AI Risk Management Framework 1.0: a voluntary, sector-agnostic structure for managing AI trustworthiness across the full system lifecycle. In November 2023, twenty-eight countries and the European Union gathered at Bletchley Park to commission an international scientific report on AI safety, chaired by Turing Award winner Yoshua Bengio, with the resulting expert panel drawing on nominees from 30 nations plus the UN and OECD. In March 2024, the European Parliament adopted the EU AI Act, the world’s first comprehensive binding legal framework for artificial intelligence, which entered into force on August 1, 2024. The Bengio report, synthesizing contributions from 100 AI experts, was delivered in final form in January 2025.

The numbers attached to this moment are striking. Across 75 countries, AI mentions in legislative proceedings rose 21.3% in 2024 alone, reaching 1,889 documented instances, up from 1,557 in 2023. Since 2016, that figure has grown more than ninefold. In the United States, state-level AI laws jumped from 49 in 2023 to 131 in 2024. U.S. federal agencies issued 59 AI-related regulations in 2024, more than double the 25 recorded the year before, originating from 42 distinct agencies. These are not policy conversations. These are institution-building events.
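If you want to sanity-check those growth figures before quoting them in a deck, the arithmetic is short. The sketch below uses only the numbers cited in the paragraph above; the function and variable names are my own, chosen for illustration.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100.0


# Figures as quoted above (Stanford HAI AI Index Report 2025, Chapter 6).
legislative_mentions = {"2023": 1557, "2024": 1889}
state_laws = {"2023": 49, "2024": 131}
federal_regulations = {"2023": 25, "2024": 59}

mentions_growth = pct_change(legislative_mentions["2023"], legislative_mentions["2024"])
state_ratio = state_laws["2024"] / state_laws["2023"]
federal_ratio = federal_regulations["2024"] / federal_regulations["2023"]

print(f"Legislative mentions: {mentions_growth:+.1f}%")  # +21.3%
print(f"State laws: {state_ratio:.2f}x the 2023 count")
print(f"Federal regulations: {federal_ratio:.2f}x the 2023 count")
```

The 21.3% figure reproduces from the raw counts, and the federal ratio comes out above 2, which matches the "more than double" wording.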

Now here is where the conflation problem becomes operationally dangerous.

AI safety is a technical property. It is the engineering problem of making systems behave as intended under adversarial conditions, distributional shift, and high-stakes deployment contexts. It lives in alignment research, robustness testing, and interpretability work. Safety is something you build into a model.

AI governance is an institutional property. It is the apparatus of decisions, accountabilities, and oversight mechanisms that determine how AI systems are developed, deployed, and constrained. Governance is something that organizations and states build around models.

AI regulation is a legal subset of governance: binding rules with enforcement mechanisms, penalties, and jurisdictional scope.

Responsible AI is an organizational posture: principles and practices aimed at ethical, non-discriminatory outcomes. It operates at the company level and rarely carries external enforcement weight.

A compliance officer treating responsible AI commitments as a substitute for regulatory compliance will miss a legal deadline. An engineer treating safety research as a substitute for governance documentation will produce well-aligned models that fail conformity assessments. The conflation has real costs, and the costs accrue at predictable points in the deployment lifecycle.

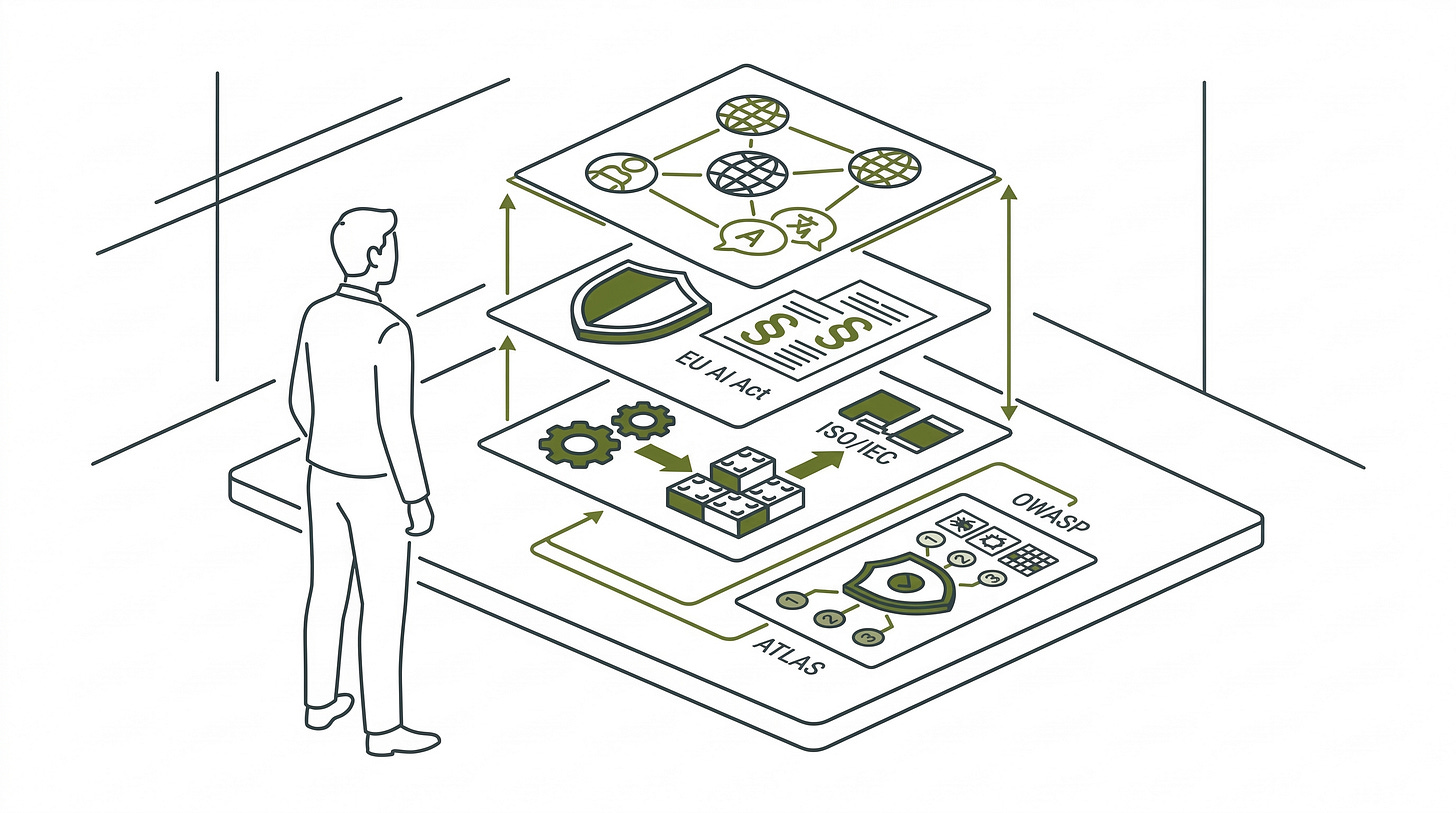

The three-layer stack is how you stop conflating them.

Layer One is technical standards: voluntary, jurisdiction-agnostic frameworks that translate governance principles into operational practice. The NIST AI RMF 1.0 is the anchor document here, organizing risk management into four functions (Govern, Map, Measure, and Manage) that work across any sector and any organizational size. ISO/IEC 42001, the first certifiable international AI management system standard, provides the third-party certification pathway for organizations that need to demonstrate governance maturity externally. This layer speaks primarily to engineers and risk managers. It carries no penalties for non-adoption, but it is increasingly referenced by regulators writing the documents that do.

Layer Two is national binding law. This is where principles become obligations. The EU AI Act is the most fully realized example in operation today. It classifies AI systems into four risk tiers, with prohibited practices (social scoring, subliminal manipulation, real-time biometric surveillance in public spaces) banned since February 2025, and full high-risk system compliance required by August 2026. The Act applies extraterritorially: if your AI touches EU users, it applies to you regardless of where your organization is headquartered. As of August 2025, the General-Purpose AI model obligations are already in effect, requiring adversarial testing and cybersecurity protections for systemic-risk models. No binding U.S. federal equivalent exists as of March 2026, though the 131 state-level laws and 59 federal agency regulations recorded in 2024 demonstrate that obligations are accreting fast below the level of federal statute.

Layer Three is international coordination: non-binding agreements, scientific consensus bodies, and multilateral partnerships building interoperability across national regimes. The OECD AI Principles, now endorsed by 47 jurisdictions and updated in May 2024 to address generative AI and data provenance, function as the shared vocabulary of this layer. The Bengio-chaired International AI Safety Report was explicitly modeled on the Intergovernmental Panel on Climate Change: separate the scientific assessment from the policy recommendation, so that 30 nations with different political systems can participate in the same evidence base without being bound to the same policy conclusions.
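One way to make the stack usable in a meeting is to write it down as data. What follows is a minimal sketch, not an official taxonomy: the `Layer` and `Instrument` types and the `binding` flag are my own illustrative names, and the entries are limited to the instruments named in the three paragraphs above.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    TECHNICAL_STANDARDS = 1
    NATIONAL_BINDING_LAW = 2
    INTERNATIONAL_COORDINATION = 3


@dataclass(frozen=True)
class Instrument:
    name: str
    layer: Layer
    binding: bool  # True only where the article describes legal enforcement


# Only instruments named in the text; extend with your own jurisdictions.
STACK = [
    Instrument("NIST AI RMF 1.0", Layer.TECHNICAL_STANDARDS, binding=False),
    Instrument("ISO/IEC 42001", Layer.TECHNICAL_STANDARDS, binding=False),
    Instrument("EU AI Act", Layer.NATIONAL_BINDING_LAW, binding=True),
    Instrument("OECD AI Principles", Layer.INTERNATIONAL_COORDINATION, binding=False),
    Instrument("International AI Safety Report", Layer.INTERNATIONAL_COORDINATION, binding=False),
]


def layer_of(name: str) -> Layer:
    """Return the layer an instrument belongs to, or raise KeyError if unknown."""
    for instrument in STACK:
        if instrument.name == name:
            return instrument.layer
    raise KeyError(f"{name!r} is not in the stack")


def instruments_in(layer: Layer) -> list[str]:
    """List instrument names for one layer, in the order they were declared."""
    return [i.name for i in STACK if i.layer is layer]


print(layer_of("EU AI Act").name)
print(instruments_in(Layer.TECHNICAL_STANDARDS))
```

The design choice worth noting is the separate `binding` flag: layer membership and enforceability are different questions, and keeping them as separate fields stops the two from blurring again.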

Beneath all three layers is the operational security layer. This is where governance intent meets actual adversarial reality, and it is the layer that most governance frameworks gesture at without fully descending into.

The OWASP Top 10 for Large Language Model Applications is a community-driven open-source document built by more than 500 international experts identifying the ten most critical security vulnerabilities in LLM-based applications. Think of it as a field-tested attack surface map: Prompt Injection sits at the top, followed by Sensitive Information Disclosure, Supply Chain vulnerabilities, Data and Model Poisoning, and six more categories that cover the specific ways LLMs fail under adversarial conditions. It is not a governance framework. It creates no legal obligations. But when the EU AI Act requires adversarial testing for GPAI models with systemic risk, the OWASP Top 10 is the document that tells you what adversarial testing actually looks like.

MITRE ATLAS (Adversarial Threat Landscape for Artificial-Intelligence Systems) is a living knowledge base of adversary tactics, techniques, and procedures targeting AI and machine learning systems. As of October 2025, it documented 15 tactics, 66 techniques, 46 sub-techniques, 26 mitigations, and 33 real-world case studies. Think of ATLAS as ATT&CK’s younger sibling, built specifically for the attack surfaces that exist when the target is a model rather than a network. Where OWASP tells you what vulnerabilities exist, ATLAS tells you how adversaries have actually exploited them.

A vulnerability can be discovered in one of two ways: by your own red team or by someone else’s audit. The difference between those two discovery paths is the difference between finding a problem on your terms and having it found for you. A vulnerability surfaced during an internal red team exercise gives your organization time to remediate before it becomes a compliance disclosure. The same vulnerability surfaced during a regulatory audit under the EU AI Act becomes an incident report, a potential enforcement action, and a timeline you no longer control.

Practitioners who stay exclusively at the governance layer will satisfy paper compliance without operational security. Practitioners who stay exclusively at the OWASP/ATLAS level will secure systems without understanding the legal accountabilities that define liability when those systems fail. The two layers are complementary, not substitutable.
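To show how the two layers meet in practice, here is a small sketch of a coverage check: which vulnerability categories have at least one recorded internal red team finding, and which have none. It is an illustration under assumptions, not an OWASP or MITRE tool. The `RedTeamFinding` type, the `evidence_ref` field, and the sample records are hypothetical, and the category list is limited to the four names given above rather than the full ten.

```python
from dataclasses import dataclass

# The four categories named in this article; the remaining six are
# deliberately left out here rather than guessed at.
NAMED_CATEGORIES = [
    "Prompt Injection",
    "Sensitive Information Disclosure",
    "Supply Chain",
    "Data and Model Poisoning",
]


@dataclass(frozen=True)
class RedTeamFinding:
    category: str
    description: str
    evidence_ref: str  # hypothetical pointer to a report or ticket


def coverage_gaps(findings, categories=NAMED_CATEGORIES):
    """Return the categories that have no recorded test evidence yet."""
    covered = {f.category for f in findings}
    return [c for c in categories if c not in covered]


# Hypothetical records from an internal exercise.
findings = [
    RedTeamFinding("Prompt Injection", "Indirect injection via uploaded file", "RT-001"),
    RedTeamFinding("Sensitive Information Disclosure", "System prompt echoed to user", "RT-002"),
]

gaps = coverage_gaps(findings)
print("Untested categories:", gaps)
```

The output of a check like this is the artifact that matters for governance: a list of gaps found on your own schedule, with evidence references attached to everything already tested.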

There is a dissent case worth sitting with, because it is not one argument, it is three, and they point in different directions.

The first dissent holds that most current AI governance is too weak: industry-shaped, voluntary in practice, and structurally incapable of constraining the entities it nominally regulates. The AI Now Institute has argued in congressional testimony and public statements that absent enforceable law, governance energy gets diverted into roadmaps and principles that function as stalling mechanisms rather than constraints. The Stanford 2025 AI Index provides quantitative texture here: AI-related incidents grew 56.4% in a single year, reaching 233 formally reported cases in 2024, while the gap between risk acknowledgment and active mitigation remained pronounced.

The second dissent holds that fragmentation is not structurally neutral but actively harmful: that sophisticated actors with legal resources can arbitrage regulatory differences, housing their riskiest AI operations in low-enforcement jurisdictions while maintaining commercial presence in high-value regulated markets. On this view, Chinese data localization requirements are structurally incompatible with EU adequacy determinations, which makes a single unified compliance architecture practically unachievable for multinationals operating across both.

The third dissent holds that the governance wave itself may be misdirected: that institutional energy consumed by compliance frameworks and legal definitions diverts attention from the harder unsolved technical safety problems, and that premature binding regulation may entrench incumbent organizations that can absorb compliance costs without improving underlying model safety.

These three critiques do not resolve into a synthesis. Governance is simultaneously too weak, structurally harmful in its fragmentation, and potentially counterproductive in its timing. Holding all three is analytically uncomfortable. It is also accurate.

None of that contradicts the value of having a map. Navigating a governance system that is simultaneously under-enforced, structurally fragmented, and possibly premature is the actual practitioner condition, not a hypothetical one. The map does not resolve those contradictions; it tells you which layer each contradiction belongs to, and which team in your organization owns it.

The Resolution: What the Map Gives You

Here is what the map gives you.

You now have a vocabulary that does not blur. When someone in a meeting says “AI governance,” you can ask which layer they mean: the technical standards layer, the binding law layer, or the international coordination layer. The answer determines which team owns it, which deadlines are live, and which tools apply.

You know that the EU AI Act is not a future concern. The prohibited practices ban has been active since February 2025. The GPAI model obligations have been in effect since August 2025. The full high-risk system compliance deadline is August 2026, roughly five months from the March 2026 date of this writing. If your organization has EU-facing AI deployments and has not begun a conformity assessment, the deadline is already inside your planning horizon.
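A deadline list is easier to act on when it computes itself. The sketch below takes the EU AI Act milestones from this article's fact-check appendix and reports, for a given date, which are already in effect and how many whole months remain on the rest. Two things are assumptions on my part: the reference date of March 1, 2026 (the article only says March 2026) and the day of the month for the high-risk deadline, set to the 2nd to match the earlier milestones.

```python
from datetime import date

MILESTONES = [
    ("Entry into force", date(2024, 8, 1)),
    ("Prohibited practices ban", date(2025, 2, 2)),
    ("GPAI model obligations", date(2025, 8, 2)),
    ("High-risk system compliance", date(2026, 8, 2)),  # day of month assumed
]


def months_until(deadline: date, today: date) -> int:
    """Whole calendar months remaining from today to a future deadline."""
    months = (deadline.year - today.year) * 12 + (deadline.month - today.month)
    if deadline.day < today.day:
        months -= 1  # the final month is not yet complete
    return months


def status(today: date) -> list[tuple[str, str]]:
    """Label each milestone as in effect or N months away, relative to today."""
    report = []
    for name, deadline in MILESTONES:
        if deadline <= today:
            report.append((name, "in effect"))
        else:
            report.append((name, f"{months_until(deadline, today)} months away"))
    return report


REFERENCE_DATE = date(2026, 3, 1)  # assumed; swap in date.today() for live use

for name, state in status(REFERENCE_DATE):
    print(f"{name}: {state}")
```

Swapping `REFERENCE_DATE` for `date.today()` turns this into a live check you can run at the start of each planning cycle.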

The most accessible first step is a classification question, not a documentation project. Can you identify, right now, which of your EU-facing AI systems fall into the Act’s four risk tiers: unacceptable, high, limited, or minimal risk? If that question surfaces uncertainty or disagreement across your legal, engineering, and product teams, you have found your starting point. The gap assessment begins there.
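The classification question can be run as a simple exercise: ask each team to assign a tier to each system independently, then look for blanks and mismatches. Here is a minimal sketch of that comparison. The system names and team answers are hypothetical, and nothing here encodes the Act's legal criteria for a tier; it only surfaces where your teams are uncertain or disagree.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


TEAMS = ("legal", "engineering", "product")


def needs_review(assessments: dict[str, dict[str, Optional[RiskTier]]]) -> dict[str, str]:
    """Flag systems where any team has no answer or the teams disagree."""
    flagged = {}
    for system, by_team in assessments.items():
        tiers = [by_team.get(team) for team in TEAMS]
        if any(tier is None for tier in tiers):
            flagged[system] = "uncertain: at least one team has no answer"
        elif len(set(tiers)) > 1:
            flagged[system] = "disagreement: teams chose different tiers"
    return flagged


# Hypothetical inventory of EU-facing systems and each team's independent answer.
assessments = {
    "resume-screener": {
        "legal": RiskTier.HIGH,
        "engineering": RiskTier.LIMITED,
        "product": RiskTier.HIGH,
    },
    "support-chatbot": {
        "legal": RiskTier.LIMITED,
        "engineering": RiskTier.LIMITED,
        "product": RiskTier.LIMITED,
    },
    "spam-filter": {
        "legal": None,
        "engineering": RiskTier.MINIMAL,
        "product": RiskTier.MINIMAL,
    },
}

flagged = needs_review(assessments)
for system, reason in flagged.items():
    print(f"{system}: {reason}")
```

Every system this flags is a concrete agenda item for the gap assessment; every system it does not flag still needs legal confirmation that the agreed tier is the right one.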

You know that OWASP Top 10 for LLMs and MITRE ATLAS are not governance frameworks in competition with the three-layer stack. They are the implementation layer beneath it: the tools that turn a governance obligation to conduct adversarial testing into a structured, reproducible process that a security engineer can execute. This series will devote dedicated coverage to both in later installments.

And you know something more structurally important: fragmentation is not going away. The EU, the United States, China, and the UK are building regulatory regimes from different constitutional traditions, different value hierarchies, and different industrial stakes. The Brussels Effect means that EU standards are becoming the effective global floor for any organization with EU-facing operations, not through treaty harmonization but through market access logic. That is a planning input, not a complaint.

The governance wave is not a bureaucratic interference with AI development. It is AI development’s institutional infrastructure catching up to its technical capabilities. The organizations that treat governance literacy as a core competency, not a compliance checkbox, will spend the next three years building durable systems while others spend them reacting to enforcement actions.

If you are an engineer, the deadlines in this article belong in front of your legal team; if you are in legal or compliance, the OWASP and ATLAS section belongs in front of your security lead.

The next installment goes one layer deeper into the technical standards layer: specifically, how NIST AI RMF 1.0 and ISO/IEC 42001 function as the operational backbone that connects governance intent to engineering practice. If you manage engineers who are being asked to satisfy compliance requirements they did not design for, or a compliance team that needs to speak credibly to a technical audience, that installment is the translation layer between those two conversations.

Fact-Check Appendix

Statement: AI mentions in legislative proceedings increased 21.3% across 75 countries in 2024, rising to 1,889 from 1,557 in 2023. | Source: Stanford HAI AI Index Report 2025, Chapter 6 | https://hai.stanford.edu/assets/files/hai_ai-index-report-2025_chapter6_final.pdf

Statement: Since 2016, total AI legislative mentions have grown more than ninefold. | Source: Stanford HAI AI Index Report 2025, Chapter 6 | https://hai.stanford.edu/assets/files/hai_ai-index-report-2025_chapter6_final.pdf

Statement: U.S. state-level AI laws jumped from 49 in 2023 to 131 in 2024. | Source: Stanford HAI AI Index Report 2025, Chapter 6 | https://hai.stanford.edu/assets/files/hai_ai-index-report-2025_chapter6_final.pdf

Statement: U.S. federal agencies issued 59 AI-related regulations in 2024, more than double the 25 recorded in 2023, from 42 unique agencies compared to 21 in 2023. | Source: Stanford HAI AI Index Report 2025, Chapter 6 | https://hai.stanford.edu/assets/files/hai_ai-index-report-2025_chapter6_final.pdf

Statement: AI-related incidents grew 56.4% in a single year, reaching 233 formally reported cases in 2024. | Source: Stanford HAI AI Index Report 2025 (full report, arXiv) | https://arxiv.org/abs/2504.07139

Statement: The OECD AI Principles are now endorsed by 47 jurisdictions including the European Union, last updated May 2024. | Source: OECD.AI Policy Observatory, Background and 2024 Principles Update | https://oecd.ai/en/wonk/evolving-with-innovation-the-2024-oecd-ai-principles-update

Statement: The GPAI-OECD integrated partnership, formalized in July 2024, brings 44 countries under a shared implementation infrastructure. | Source: OECD.AI Policy Observatory Background | https://oecd.ai/en/about/background

Statement: The EU AI Act entered into force on August 1, 2024; prohibited practices applied from February 2, 2025; GPAI obligations from August 2, 2025; high-risk full compliance required August 2026. | Source: European Commission, EU AI Act Official Summary | https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

Statement: The International AI Safety Report was chaired by Yoshua Bengio and drew on 100 AI experts from 30 nations plus the UN and OECD; final version delivered January 29, 2025. | Source: arXiv, International AI Safety Report (final) | https://arxiv.org/abs/2501.17805

Statement: As of October 2025, MITRE ATLAS documented 15 tactics, 66 techniques, 46 sub-techniques, 26 mitigations, and 33 real-world case studies. | Source: MITRE ATLAS Official Knowledge Base | https://atlas.mitre.org/

Statement: The OWASP Top 10 for LLM Applications was built by more than 500 international experts and 150+ active contributors. | Source: OWASP Top 10 for LLMs 2025 (PDF) | https://owasp.org/www-project-top-10-for-large-language-model-applications/assets/PDF/OWASP-Top-10-for-LLMs-v2025.pdf

Statement: The NIST AI RMF 1.0 was released January 26, 2023, developed through an 18-month public comment process with 240+ contributing organizations. | Source: NIST AI RMF 1.0 (NIST AI 100-1) | https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf

Top 5 Prestigious Sources

Stanford Institute for Human-Centered AI (HAI), AI Index Report 2025 | https://hai.stanford.edu/assets/files/hai_ai-index-report-2025_chapter6_final.pdf

International AI Safety Report (final), chaired by Yoshua Bengio; arXiv | https://arxiv.org/abs/2501.17805

NIST AI Risk Management Framework 1.0 (NIST AI 100-1), U.S. Department of Commerce | https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf

European Commission, Regulation (EU) 2024/1689 (EU AI Act), Official Summary | https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

OECD.AI Policy Observatory, OECD AI Principles and 2024 Update | https://oecd.ai/en/about/background

Peace. Stay curious! End of transmission.