What Each AI Security Role Actually Expects From You

Break down the 10 emerging AI security roles. Learn the skills, mandates, and transition paths from AppSec, Data Science, and GRC into AI/ML security.

Disclaimer

This article is intended for informational purposes and reflects the state of published research and industry practice as of early 2026. It is not professional security advice. Your specific environment, threat model, and regulatory obligations will shape how these principles apply to your situation.

TL;DR

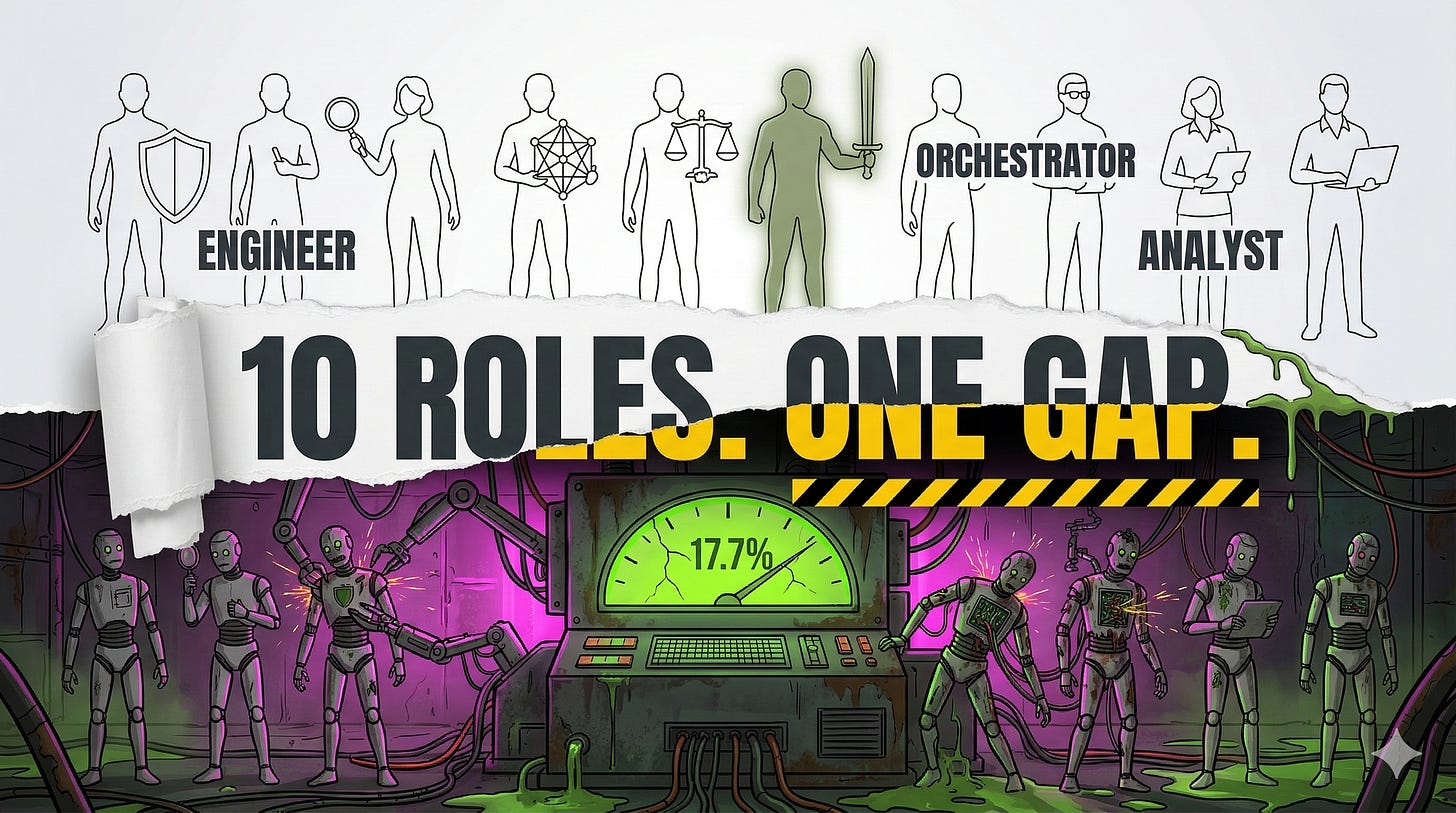

I spent weeks analyzing the data, and one number kept me up at night: 17.7%. Caleb Sima’s analysis of 243 AI security incidents reveals a jarring truth: the vast majority are just the “same old” vulnerabilities we’ve been fighting for decades. But that remaining 17.7%? That is the frontier. These are the novel, adversarial threat classes that traditional tools simply cannot see. They are the reason we are seeing an explosion of new job titles that didn’t exist three years ago. If you’re looking at this field and feeling overwhelmed by the alphabet soup of NIST, OWASP, and MITRE, you aren’t alone. I wrote this guide to be the “missing manual” for the ten roles defining the AI security stack. Whether you’re coming from AppSec, Data Science, or GRC, there is a path for you, but the ceiling requires a different set of muscles. This isn’t just a career move; it is a fundamental shift in how we defend autonomous systems. Let’s map your route to the 17.7%.

The Itch: Why This Matters Right Now

If "Nobody Knows What to Call This Job Yet. But Everyone Is Hiring for It." was a map of the storm, this guide is the compass for navigating it. We are shifting focus from the industry-wide identity crisis toward the granular DNA of the ten roles defining the AI security frontier. A massive thank you to Tox from the ToxSec substack for the collaboration; our deep-dives into the shifting requirements of the AI workforce were pivotal in translating vague job descriptions into the tactical mandates you see below. My hope is that this breakdown brings much-needed clarity to the specific skills and the fundamental mindset shift required to thrive in these new roles before the market catches up.

If you find this level of analysis valuable, I highly recommend signing up for his newsletter—it is where some of the most rigorous thinking on the frontier of AI security is happening right now.

You are probably carrying two conflicting signals, and both are accurate.

The first signal: most AI security work is just security. The Sima incident analysis found that 82.3% of documented AI security events traced back to traditional vulnerabilities in AI-adjacent software. Nearly 89% were researcher demonstrations rather than attacks by malicious actors operating in the wild. If you read those numbers and feel a kind of relief, that is reasonable. The floor is familiar.

The second signal: MITRE’s adversarial AI threat catalogue now documents 15 tactics, 66 techniques, and 46 sub-techniques drawn from real incidents against real AI systems. Fourteen of those techniques were added in October 2025 alone, specifically because autonomous AI agents had entered production environments fast enough to generate their own incident record. NIST published a formal adversarial machine learning taxonomy in March 2025 classifying 22 or more distinct attack types with their own identifier scheme, covering attacks that bypass application security controls entirely.

The confusion in this field, for practitioners evaluating a career move and for hiring managers building job descriptions, comes from treating those two signals as contradictory. They are not. They describe the same discipline at different altitudes. The 82.3% sits at the floor. The 17.7% sits at the ceiling. A practitioner who only knows one altitude is perpetually exposed at the other.

What follows maps that split to each of the ten roles currently taking shape. Three of those roles (AI SOC Orchestrator, AI Incident Response Orchestrator, and AI Prompt Engineer for Security) do not yet have a single framework codifying their competency requirements. Those sections are labeled explicitly as editorial synthesis. The other seven are grounded in NIST, OWASP, MITRE ATLAS, and the EU AI Act, with real obligations and real hiring signals behind them.

Before the role-by-role breakdown, one question deserves a direct answer: are these roles real, or are they rebadged traditional security titles with AI vocabulary? Seven of the ten have clear answers. AI/ML Security Engineer, AI Governance Lead, and AI Ethics and Compliance Officer map directly to binding regulatory obligations under the EU AI Act and certifiable controls under ISO 42001. AI Offensive Orchestrator, AI Threat Intelligence Analyst, AI Security Specialist, and Quantum-AI Security Specialist are grounded in frameworks (MITRE ATLAS, NIST AI 100-2, NIST FIPS 203/204/205) with documented real-world incident bases. These roles require materially different skills from their traditional counterparts.

The remaining three (AI SOC Orchestrator, AI Incident Response Orchestrator, AI Prompt Engineer for Security) are real functions inside organizations, but their boundaries are still being negotiated and no single standard yet defines them. Preparing for the first seven is a defensible investment. Preparing for the latter three is a bet on where the field is heading, not where it already stands.

The Deep Dive: The Struggle for a Solution

Role 1: AI/ML Security Engineer

This is the load-bearing role. Everything else in the AI security function depends on whether this one is done well.

The core mandate is securing AI/ML systems throughout the development lifecycle, from data ingestion through model deployment and post-deployment monitoring. NIST’s secure software development guidance, extended for generative AI systems in July 2024, defines a practice called PW.3 that has no equivalent in classical software development: confirming the integrity of training, testing, fine-tuning, and alignment data before it is used. A traditional security engineer knows how to validate software dependencies. PW.3 requires validating what a model learned from. That is a different trust chain pointing at a different attack surface.

Day to day, this role involves securing model weight storage and transfer, validating data pipeline integrity, conducting threat modeling of ML inference endpoints, hardening MLOps infrastructure (container orchestration, model registries, feature stores), and implementing access controls on training data. Verified hiring requirements at AI-native organizations specify at least five years of hands-on application and infrastructure security experience alongside production fluency in Python, Kubernetes, Docker, and cloud platforms.

The bridge from AppSec: you need to internalize model serialization risks (pickle deserialization in ML pipelines maps to known attack paths), supply chain compromise at the model layer (distinct from software dependency compromise), and the shared responsibility model across AI model producers, AI system producers, and AI system acquirers.

The failure mode is treating ML infrastructure as a standard software deployment. Practitioners who do this miss training data poisoning and model extraction attempts, because those attack vectors do not appear in classical vulnerability scanners. They require adversarial ML tooling and a threat model built from NIST AI 100-2, not from OWASP Top 10.

Role 2: AI SOC Orchestrator

Editorial synthesis: no single standard currently codifies this role’s competency requirements.

The function is real. The title is not yet stable.

An AI SOC Orchestrator integrates AI-driven threat detection into security operations workflows while managing the specific failure modes of AI-based detection systems. That second part is where the role diverges from traditional SOC work. AI-based detectors do not fail like signature-based systems. They drift. Their confidence distributions shift. An alert that fires reliably in week one may be producing false positives by week eight because the underlying model has drifted against a shifted data distribution.

Day to day, this role involves tuning AI-powered detection models, managing false positive rates structurally different from signature-based systems, orchestrating handoffs between automated AI triage and human analysts, and maintaining awareness of adversarial attacks against the detection infrastructure itself. MITRE ATLAS techniques covering LLM jailbreak and prompt injection become directly relevant when SOC tooling incorporates LLM-based analysis.

The bridge from traditional SOC: you need to understand model drift, the stochastic behavior of ML classifiers, and the specific risk that adversaries may attempt evasion attacks against your detection models, targeting the detector rather than the monitored system.

The failure mode is decision boundary blindness. An adversary who has studied an ML-based detection model does not trigger alerts by crossing thresholds; they stay fractionally below them, generating activity that registers as noise rather than signal. Standard SOC escalation criteria are calibrated to signature hits and threshold breaches. They have no mechanism for escalating “the model’s confidence distribution shifted by 4% over 72 hours in a way that correlates with reconnaissance patterns.” A practitioner who relies on alert-based escalation alone will never see the adversary who learned to treat the detection model as the first target rather than the obstacle.

Handoff note: when that sub-threshold pattern does eventually confirm as an incident, ownership transitions to the AI Incident Response Orchestrator. The escalation criteria and telemetry standards that make that handoff functional have to be negotiated before an incident tests them.

Role 3: AI Offensive Orchestrator

This is the red team function, and it is the thinnest talent pool in the field.

The core mandate is adversarial testing of AI systems using structured attack taxonomies to identify vulnerabilities before adversaries do. MITRE ATLAS provides the operational playbook. The framework’s 15 tactics and 66 techniques, delivered in STIX 2.1 format for machine-readable integration, define the scope of adversarial emulation. ATLAS Arsenal, a plugin for the CALDERA automated adversary emulation platform, is the primary tooling.

NIST AI 100-2 E2025 supplies the theoretical taxonomy. Attacks are classified along five dimensions: AI system type, ML lifecycle stage, attacker goals, attacker capabilities, and attacker knowledge. Day to day, this role executes evasion attacks, prompt injection campaigns, model extraction attempts, and data poisoning simulations. It also conducts dangerous capability evaluations, a structured assessment framework for identifying whether a model exhibits extreme risk capabilities including cyber-offense, deception, and self-proliferation.

The bridge from traditional penetration testing: you need to learn the ATLAS tactic chain from Reconnaissance through Impact, understand that attack success in adversarial ML is measured statistically rather than as a binary exploit outcome, and develop fluency in model probing methodology. The adversarial mindset transfers. The tooling and the metrics do not.

The failure mode is scope narrowing: restricting testing to prompt injection while ignoring supply chain compromise and training data integrity attacks. Those latter vectors require longer-term adversarial access and more patient methodology than a standard penetration testing engagement allows. Organizations that set time-boxed red team engagements without accommodating ML-specific timelines get prompt injection findings and miss the deeper architectural exposure.

Handoff note: findings from the Offensive Orchestrator feed directly into the AI/ML Security Engineer’s remediation backlog. The tension between “acceptable residual risk” and “active exploitation path” lives at that boundary. ATLAS Arsenal’s STIX 2.1 output format makes findings machine-readable and importable into the Engineer’s threat modeling workflow, which is the structural solution to findings that would otherwise get rationalized rather than remediated.

Role 4: AI Threat Intelligence Analyst

This role sits at the intersection of documented incident data, structured attack taxonomies, and organizational AI deployment profiles.

The core mandate is tracking, contextualizing, and disseminating intelligence on adversarial ML threats. MITRE ATLAS’s 33 case studies, drawn from real incidents against real AI systems, are the primary empirical source. Each case study maps to ATLAS technique IDs, making the incident record queryable against an organization’s specific AI stack. NIST AI 100-2 supplies the classification vocabulary. OWASP LLM 2025 supplies the application-layer vulnerability taxonomy.

Day to day, this role correlates observed attack patterns against ATLAS technique IDs, tracks the evolution of GenAI-specific attack vectors (indirect prompt injection, embedding poisoning), and produces intelligence products mapping emerging threats to organizational AI deployments. The STIX 2.1 data format ATLAS uses allows direct integration with existing threat intelligence platforms (OpenCTI, MISP, and Splunk’s TAXII-compatible feeds all ingest STIX 2.1 natively) once an analyst has the ML-specific vocabulary. A concrete daily workflow step: when an inference anomaly surfaces in monitoring (unexpected output distribution, atypical retrieval patterns from a vector store), the analyst pulls the ATLAS case study library and queries against the observed technique signature to determine whether the anomaly maps to a documented adversarial pattern or remains unclassified noise. That classification decision determines whether the finding gets escalated as a potential incident or logged as a drift event for the AI SOC function.

The calibrating lens for this role is the Sima finding. Of 243 documented incidents, only 17.7% were attributable to AI-specific threat classes rather than conventional vulnerabilities. A threat intelligence analyst who inflates the AI-specificity of incidents, attributing standard software vulnerabilities to novel adversarial ML techniques, produces intelligence products that misdirect defensive investment. Conversely, an analyst who dismisses novel attack classes because most incidents are conventional misses the specific 17.7% that existing security tooling cannot detect.

The failure mode is binary thinking at either end of that spectrum. Good AI threat intelligence requires holding both frequencies simultaneously and being precise about which is operating in each observed event.

Role 5: AI Security Specialist (Generalist)

This is the cross-functional connective tissue of an AI security function.

The core mandate is serving as the security practitioner accountable for AI risk across an organization’s portfolio, coordinating between engineering, governance, and operations. The NIST AI Risk Management Framework positions this role at the nexus of all four functions: GOVERN, MAP, MEASURE, and MANAGE. Day to day, the work involves conducting risk assessments against AI RMF subcategories, coordinating red team findings with governance reporting, maintaining AI system inventories, and translating framework requirements into engineering tickets. The NIST AI RMF Playbook, the companion resource to AI 100-1, maps each RMF subcategory to specific suggested actions and measurable outcomes; practitioners new to this role use it as a structured assessment checklist rather than working directly from the framework narrative.

NIST AI 600-1, the generative AI profile published in July 2024, extends this to 12 specific risk categories with over 200 suggested actions. A practitioner in this role needs operational fluency across all 12, including confabulation, harmful bias, and data privacy considerations that are absent from traditional security risk frameworks.

The bridge from generalist AppSec or cloud security: practitioners moving from those backgrounds already hold the assessment and coordination muscles this role requires. The gap is AI-specific risk vocabulary. You need to internalize the AI RMF’s seven trustworthiness characteristics (valid and reliable; safe; secure and resilient; accountable and transparent; explainable and interpretable; privacy-enhanced; fair with harmful bias managed) and understand how each maps to technical controls rather than policy language. Cloud security engineers who have managed shared responsibility models across provider and consumer boundaries will find the AI supply chain accountability structure the most transferable concept from their existing work.

The failure mode is breadth without depth: producing compliance matrices that satisfy auditors but miss active exploitation paths. A practitioner who can cite NIST AI RMF subcategories fluently but cannot identify a training data poisoning campaign in a pipeline audit report is performing the documentation of security rather than the practice of it.

Role 6: AI Incident Response Orchestrator

Editorial synthesis: no single standard currently codifies this role’s competency requirements.

The function diverges from traditional IR at the point of evidence.

The core mandate is leading containment, investigation, and recovery for incidents affecting AI systems: model compromise, training data breach, adversarial manipulation of production models. NIST SP 800-218A’s Respond to Vulnerabilities practice group and the AI RMF’s MANAGE 4 function define the framework substrate. NIST AI 600-1 identifies incident disclosure as one of four primary generative AI compliance considerations.

Day to day, this role triages AI-specific incidents (model poisoning discovered in production, prompt injection resulting in data exfiltration, supply chain compromise of a model dependency), coordinates rollback of compromised model versions, preserves forensic evidence from ML pipelines, and manages disclosure obligations. OWASP LLM06 (Excessive Agency) defines a class of incidents where autonomous AI actions produce cascading harm, requiring response procedures that account for agentic behavior propagating across connected systems.

The bridge from traditional IR: you need to understand model versioning and the difference between retraining and rollback, and you need to develop forensic methodology for inference logs. Standard digital forensics looks for file system artifacts. ML incident forensics looks for statistical signatures in inference patterns, subtle parameter drift invisible to conventional tooling.

The failure mode is playbook transplant: applying traditional IR procedures (isolate host, reimage, restore from backup) to model compromise scenarios where the compromise exists as parameter manipulation that survives redeployment of the same compromised model weights.

Handoff note: escalations arrive upstream from the AI SOC Orchestrator once a sub-threshold anomaly pattern confirms as an incident. Downstream, confirmed incidents involving high-risk AI systems trigger disclosure obligations under NIST AI 600-1 and EU AI Act Article 9, making the AI Governance Lead the required downstream recipient of incident findings that carry regulatory consequence.

Role 7: AI Governance Lead

This role has the clearest regulatory mandate of any in this field.

The core mandate is operationalizing AI risk management frameworks and regulatory obligations across the AI lifecycle. The EU AI Act’s Article 17 explicitly mandates an accountability framework setting out the responsibilities of management and staff across 13 distinct quality management system requirements. Article 9 requires a continuous iterative risk management process that runs across the entire lifecycle of a high-risk AI system and includes testing against prior-defined metrics and probabilistic thresholds. These are not aspirational obligations. Organizations deploying high-risk AI systems face enforcement timelines extending through August 2027.

ISO/IEC 42001:2023, the first certifiable AI management system standard, follows the Plan-Do-Check-Act methodology and requires leadership accountability, structured risk identification and mitigation, documented operational controls, continuous monitoring, and improvement cycles. Certification requires independent third-party audits valid for three years with annual surveillance.

Day to day, this role maintains the quality management system required by Article 17, oversees conformity assessment preparation for high-risk AI systems, manages the risk management system mandated by Article 9, tracks compliance against ISO 42001 control objectives, and coordinates cross-functional AI risk committees.

The bridge from traditional GRC: you need to understand AI-specific risk categories that have no classical analog (confabulation, recursive pollution, harmful bias amplification) and the shared responsibility model in AI supply chains, where security obligations are distributed across AI model producers, AI system producers, and AI system acquirers.

The failure mode is governance by document: producing policy artifacts that satisfy audit checklists without embedding measurable risk reduction into engineering workflows. The EU AI Act’s Article 9(8) specifically requires testing against probabilistic thresholds, not policy documentation. An AI Governance Lead who cannot specify what those thresholds should be, or verify that testing is actually happening against them, is performing the form of compliance rather than the substance.

Role 8: AI Ethics and Compliance Officer

This role sits adjacent to the Governance Lead but diverges in orientation.

Where the Governance Lead owns the management system infrastructure, the Ethics and Compliance Officer focuses on the substantive content flowing through that infrastructure: fundamental rights impact assessments, fairness evaluations, transparency obligations, and interpretability standards.

The EU AI Act’s Article 9 requires identification and analysis of risks to health, safety, and fundamental rights. The NIST AI RMF defines fairness with harmful bias managed as one of seven trustworthiness characteristics, and its MEASURE subcategories require evaluation of AI systems across fairness, explainability, and privacy dimensions. The ENISA Multilayer Framework for AI Cybersecurity explicitly incorporates transparency, fairness, accuracy, explainability, and accountability as Layer II security considerations, placing ethical dimensions inside the security framework rather than alongside it.

Day to day, this role conducts fundamental rights impact assessments for high-risk AI systems, monitors for discriminatory model outputs, maintains documentation required for conformity assessment, and advises product teams on ethical deployment constraints.

The bridge from traditional compliance: you need technical fluency in how bias manifests in ML systems, specifically training data skew, representation gaps, and feedback loops that amplify initial imbalances through production cycles. Understanding that algorithmic discrimination is a security concern, not only an ethical one, is the mindset shift this role requires.

The failure mode is ethical abstraction: producing principles documents disconnected from the technical mechanisms that produce harm. An organization with a published AI ethics framework and no process for detecting fairness metric degradation in production models has the documentation of an ethical AI program and not the practice of one.

Handoff note: the Ethics and Compliance Officer populates the risk register that the Governance Lead’s quality management system is built to process. When both roles exist in the same organization, their outputs need a shared schema. Overlap on accountability for the same deliverable is the most common structural failure auditors identify under ISO 42001 surveillance reviews.

Role 9: AI Prompt Engineer (Security)

Editorial synthesis: no single standard currently codifies this role’s competency requirements.

This function is sometimes dismissed as a soft role. It is not.

The core mandate is designing, testing, and hardening system prompts and prompt-based security controls for LLM-integrated applications. OWASP LLM01 (Prompt Injection) and LLM07 (System Prompt Leakage) define the primary threat surface. LLM07 establishes that assumptions about system prompt isolation have proven incorrect across documented incidents. System prompts, which often contain business logic, operational constraints, and proprietary instruction sets, can be extracted through adversarial conversation. LLM01 acknowledges that no fool-proof prevention methods currently exist for prompt injection, which is a structurally honest statement: a language model processes instructions and user content through the same channel, with no deterministic parser distinguishing them.

Day to day, this role designs system prompts that minimize leakage surface, tests prompt injection resistance across direct and indirect vectors (NIST AI 100-2 classifies these as distinct attack types, NISTAML.018 and NISTAML.015), implements layered prompt architectures separating instruction from user input, and validates that security-relevant instructions cannot be overridden through adversarial inputs.

The bridge from traditional AppSec: you need to develop empirical testing methodology rather than relying on deterministic validation. Standard security testing asks whether a control works. Prompt security testing asks how often a control works under adversarial pressure, across a distribution of inputs, measured statistically. BIML-81 documented that the same prompt can produce substantially different responses across invocations. That variability is not a bug in the testing methodology; it is a property of the system being tested.

The failure mode is false confidence in system prompt guardrails: treating a prompt-based safety instruction as a security boundary when it is a probabilistic suggestion to a stochastic system. A practitioner who validates prompt security with a single pass of test inputs has not tested the system. They have tested one sample from the system’s output distribution.

Role 10: Quantum-AI Security Specialist

The urgency for this role is not coming from the future. It is already here.

Adversaries are harvesting encrypted AI communications today, storing them against the day when quantum computing makes decryption feasible. That operational posture, harvest now, decrypt later, means the migration timeline belongs to the defender while the harvest timeline belongs to the adversary. Model weights, training data, inference API traffic, and provenance signatures protected by classical cryptography right now are already in adversary storage. Whether that data retains sensitivity in five years or ten is the calculation this role exists to make.

The core mandate is preparing AI infrastructure for the post-quantum cryptographic transition. NIST finalized three post-quantum cryptographic standards in August 2024 as the tools for that transition. FIPS 203 (ML-KEM) provides key encapsulation at three security levels, from AES-128 equivalent to AES-256 equivalent, based on the Module Learning with Errors problem. FIPS 204 (ML-DSA) provides digital signatures across three parameter sets using Fiat-Shamir with Aborts on module lattices. FIPS 205 (SLH-DSA) provides stateless hash-based signatures across 12 parameter sets, serving as the conservative backup whose security rests entirely on hash function hardness rather than lattice assumptions.

Day to day, this role conducts cryptographic inventory of AI systems, identifies encryption protecting model weights and inference data that requires post-quantum migration, implements ML-KEM for API communications, deploys ML-DSA or SLH-DSA for model signing and provenance verification, and manages transition timelines. SLH-DSA’s larger signature sizes (up to 49,856 bytes at its highest security parameter set) make it better suited for model release signing than high-throughput API authentication, which is a practical implementation decision with no equivalent in pre-PQC system design.

The bridge from traditional cryptography: you need to understand the distinction between lattice-based and hash-based security assumptions and evaluate which AI assets face quantum risk based on their data sensitivity lifetime. A model weight file with a five-year useful life faces a different threat calculus than a training dataset containing ten years of sensitive user interactions.

The failure mode is temporal displacement: treating post-quantum migration as a 2030 problem. The harvest is already in progress. The question is not whether adversaries will eventually be able to decrypt what they are collecting. The question is whether your AI assets will still be sensitive when they do.

The Resolution: Your New Superpower

The floor and the ceiling are both real. The 82.3% that looks familiar is where current skills buy entry. The 17.7% that does not look familiar is where irreplaceability is built.

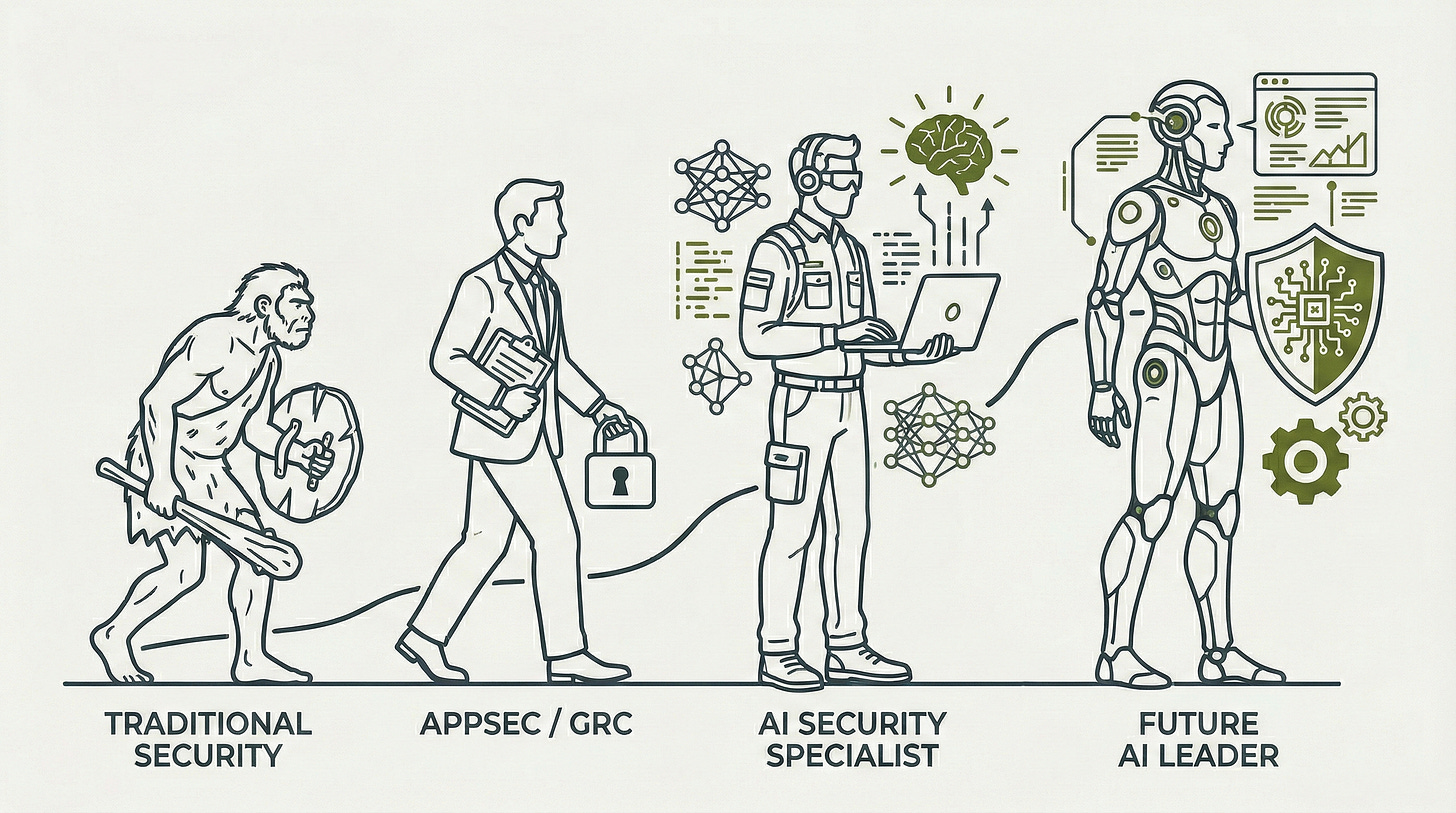

Here is where each background maps to highest-probability entry roles right now, given what is codified versus what is still being negotiated.

AppSec engineers have the shortest bridge. The three-step sequence (MITRE ATLAS case studies first, then NIST AI 100-2 E2025 for vocabulary, then OWASP LLM 2025 for assessment methodology) takes roughly three months of deliberate study and opens the door most directly to AI/ML Security Engineer, AI Offensive Orchestrator, and AI Threat Intelligence Analyst. These are the three roles with the deepest alignment between offensive security tradecraft and AI-specific threat knowledge. The Offensive Orchestrator role sits at the highest premium and thinnest talent supply of the three.

Practitioners who want cross-functional accountability before committing to an offensive or engineering specialization will find AI Security Specialist the most accessible initial landing, given its direct mapping to the NIST AI RMF assessment skills AppSec engineers already carry.

Data scientists arrive with ceiling knowledge already built. The gap is adversarial orientation: learning to ask how a system fails under attack rather than how it performs under normal conditions. BIML-81 is the entry point, because it speaks model architecture while reframing every design decision as an attack surface. From there, AI/ML Security Engineer is the natural landing role, given that practitioners from this background already understand what is inside the systems they are now learning to defend. AI Threat Intelligence Analyst is the secondary path for those whose strength is pattern recognition over implementation; for that track, the MITRE ATLAS case study library read as an incident classification exercise (matching observed anomaly to documented technique) rather than a technique reference is the right starting resource.

GRC and compliance practitioners have the governance track, but it carries a technical floor requirement most underestimate. The EU AI Act’s Article 9(8) mandates testing against probabilistic thresholds. Overseeing that obligation without understanding what probabilistic thresholds mean in a production ML system creates a compliance structure no auditor will accept at face value. The NIST AI RMF’s MEASURE function specifies quantitative evaluation across 13 subcategories. Governing those evaluations requires enough technical literacy to distinguish meaningful metrics from performative ones. For practitioners who close that gap, AI Governance Lead and AI Ethics and Compliance Officer are the two roles with the clearest regulatory mandate and the most acute talent shortage relative to enforcement timelines.

Traditional cryptographers and PKI engineers have a distinct path that the three background tracks above do not cover. Quantum-AI Security Specialist is the natural landing role for that cohort, and the NIST PQC migration guidance (FIPS 203, 204, and 205, published August 2024) constitutes both the starting resource and the primary ongoing reference for the work. AI training data and model weights with long sensitivity windows are already being collected by adversaries; the migration window narrows with every month that classical cryptography remains in place across AI infrastructure.

Three roles remain without single-standard codification as of February 2026: AI SOC Orchestrator, AI Incident Response Orchestrator, and AI Prompt Engineer for Security. CompTIA SecAI+ launched on February 17, 2026 as the first vendor-neutral AI security certification. No certification yet addresses AI red teaming or offensive AI security. For practitioners who want to position for these functions as they codify: traditional SOC experience is the strongest preparation for the AI SOC Orchestrator, traditional IR experience for the AI Incident Response Orchestrator, and AppSec for the AI Prompt Engineer. The practitioners who develop deep competency in those tracks now will have defined the role before the credential body captures it. That has historically been how the most valuable specializations in this field formed, not by waiting for the credential, but by becoming the reason the credential eventually exists.

Fact-Check Appendix

Statement: Of 243 documented AI security incidents analyzed, 82.3% were caused by traditional vulnerabilities rather than novel adversarial ML techniques. | Source: Caleb Sima, “Mythbusting AI Security Incidents,” November 6, 2024 | https://calebsima.com/2024/11/06/mythbusting-ai-security-incidents/

Statement: Nearly 89% of documented AI security incidents were researcher demonstrations rather than attacks by malicious actors operating in the wild. | Source: Caleb Sima, “Mythbusting AI Security Incidents,” November 6, 2024 | https://calebsima.com/2024/11/06/mythbusting-ai-security-incidents/

Statement: MITRE ATLAS documents 15 tactics, 66 techniques, 46 sub-techniques, and 33 real-world case studies; 14 new agent-focused techniques were added in October 2025. | Source: MITRE ATLAS |

https://atlas.mitre.org

Statement: NIST AI 100-2 E2025 classifies 22 or more distinct attack types with formal NISTAML identifiers across predictive and generative AI systems. | Source: NIST AI 100-2 E2025, March 2025 | https://csrc.nist.gov/pubs/ai/100/2/e2025/final

Statement: NIST’s secure software development guidance extended for generative AI systems in July 2024 defines practice PW.3 requiring confirmation of training, testing, fine-tuning, and alignment data integrity before use. | Source: NIST SP 800-218A, July 2024 | https://nvlpubs.nist.gov/nistpubs/SpecialPublications/NIST.SP.800-218A.pdf

Statement: The Berryville Institute of Machine Learning identified 78 architectural risks specific to ML systems in January 2020. | Source: BIML Architectural Risk Analysis, January 2020 | https://berryvilleiml.com/results/ara.pdf

Statement: BIML extended that catalogue to 81 risks specific to large language models in January 2024. | Source: BIML LLM Architectural Risk Analysis, January 2024 | https://berryvilleiml.com/results/BIML-LLM24.pdf

Statement: Verified hiring requirements at AI-native organizations specify at least five years of hands-on application and infrastructure security experience for AI/ML Security Engineer functions. | Source: Anthropic career pages, verified postings February 2026 | https://anthropic.com/careers

Statement: OWASP LLM01 acknowledges that no fool-proof prevention methods currently exist for prompt injection. | Source: OWASP Top 10 for LLM Applications 2025 | https://owasp.org/www-project-top-10-for-large-language-model-applications/assets/PDF/OWASP-Top-10-for-LLMs-v2025.pdf

Statement: OWASP LLM07 (System Prompt Leakage) establishes that assumptions about system prompt isolation have proven incorrect across documented incidents. | Source: OWASP Top 10 for LLM Applications 2025 | https://owasp.org/www-project-top-10-for-large-language-model-applications/assets/PDF/OWASP-Top-10-for-LLMs-v2025.pdf

Statement: EU AI Act Article 9 requires a continuous iterative risk management process including testing against prior-defined metrics and probabilistic thresholds; Article 17 mandates 13 quality management system requirements including an accountability framework at paragraph (m); enforcement timelines extend through August 2027. | Source: EU AI Act, Regulation 2024/1689 | https://eur-lex.europa.eu/eli/reg/2024/1689/oj/eng

Statement: ISO/IEC 42001:2023 is the first certifiable AI management system standard, following Plan-Do-Check-Act methodology; certification requires independent third-party audits valid for three years with annual surveillance. | Source: ISO/IEC 42001:2023 | https://www.iso.org/standard/42001

Statement: NIST AI 600-1 (GenAI Profile) extends the AI RMF to 12 specific risk categories with over 200 suggested actions and identifies incident disclosure as a primary generative AI compliance consideration. | Source: NIST AI 600-1, July 2024 | https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.600-1.pdf

Statement: NIST’s AI RMF MEASURE function specifies quantitative evaluation across 13 subcategories. | Source: NIST AI 100-1 (AI RMF 1.0), January 2023 | https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf

Statement: ENISA’s Multilayer Framework for AI Cybersecurity explicitly incorporates transparency, fairness, accuracy, explainability, and accountability as Layer II security considerations. | Source: ENISA Multilayer Framework for Good Cybersecurity Practices for AI, June 2023 | https://www.enisa.europa.eu/publications/multilayer-framework-for-good-cybersecurity-practices-for-ai

Statement: NIST finalized three post-quantum cryptographic standards in August 2024: FIPS 203 (ML-KEM), FIPS 204 (ML-DSA), and FIPS 205 (SLH-DSA); SLH-DSA supports signature sizes up to 49,856 bytes at its highest security parameter set. | Source: NIST FIPS 203, 204, 205, August 2024 | https://nvlpubs.nist.gov/nistpubs/fips/nist.fips.203.pdf; https://nvlpubs.nist.gov/nistpubs/fips/nist.fips.204.pdf; https://nvlpubs.nist.gov/nistpubs/fips/nist.fips.205.pdf

Statement: NIST’s preliminary Cyber AI Profile addressing DETECT and RESPOND AI-specific considerations was released in December 2025. | Source: NIST IR 8596, preliminary draft, December 2025 | https://nvlpubs.nist.gov/nistpubs/ir/2025/NIST.IR.8596.iprd.pdf

Statement: CompTIA SecAI+ launched on February 17, 2026 as the first vendor-neutral AI security certification. | Source: CompTIA SecAI+ launch, PR Newswire, February 2026 | https://www.prnewswire.com/news-releases/where-ai-and-cybersecurity-converge-introducing-comptia-secai-302689399.html

Top 5 Prestigious Sources

MITRE ATLAS | https://atlas.mitre.org The authoritative adversarial threat taxonomy for AI systems, maintained by MITRE Corporation (a U.S. federally funded R&D center) with contributions from 16 member organizations. The 33-case study library represents the field’s primary empirical incident record.

NIST AI 100-2 E2025 (Adversarial Machine Learning Taxonomy) | https://csrc.nist.gov/pubs/ai/100/2/e2025/final The U.S. federal government’s formal classification of adversarial ML attack types, published by the National Institute of Standards and Technology. The definitive reference for attack vocabulary in this discipline.

OWASP Top 10 for LLM Applications 2025 | https://owasp.org/www-project-top-10-for-large-language-model-applications/assets/PDF/OWASP-Top-10-for-LLMs-v2025.pdf The OWASP Foundation’s practitioner-level vulnerability classification for LLM-integrated applications. The community standard for application-layer AI security assessment.

EU AI Act (Regulation 2024/1689) | https://eur-lex.europa.eu/eli/reg/2024/1689/oj/eng Legally binding regulation from the European Parliament with the most specific workforce accountability mandates of any current AI governance framework, covering role obligations from Article 4 through Article 31.

BIML LLM Architectural Risk Analysis | https://berryvilleiml.com/results/BIML-LLM24.pdf The Berryville Institute of Machine Learning’s January 2024 catalogue of 81 risks specific to large language model architectures. The most rigorous architectural risk analysis of the LLM stack currently available outside of NIST.

Peace. Stay curious! End of transmission.

Awesome follow up! Role 9 fan right here!