AI Security Theater: Why The Policy Isn't The Control

Expose AI security theater in vendor reviews. Learn why SOC 2 and AI policies fail to stop breaches, and how to implement true AI security controls.

Disclaimer

This article is intended for informational purposes and reflects the state of published research and industry practice as of early 2026. It is not professional security advice. Your specific environment, threat model, and regulatory obligations will shape how these principles apply to your situation.

TL;DR

We are witnessing a dangerous era of AI security theater. Every time a vendor flashes a SOC 2 Type II report or a set of responsible AI principles as proof of their AI security posture, they are substituting paperwork for actual protection. I have seen this happen repeatedly in procurement reviews where critical questions about prompt injection testing or model weight extraction are met with blank stares. The data backs this up: a staggering 97% of organizations that experienced AI-related breaches lacked proper access controls. This is not an accident. It is a structural failure where policies are mistaken for technical controls. We sign leases with black-box tenants without building the necessary monitoring infrastructure. From shadow AI tools bypassing standard network filters to the absence of scheduled adversarial testing, the gap between having a rule and enforcing it is where the most expensive breaches originate. In this article, I break down the four acts of this security theater and explain exactly how to replace checkbox compliance with verifiable, automated security engineering.

The Itch: Why This Matters Right Now

Picture a security engineer in a vendor review call, thirty minutes into a standard procurement checkpoint. The vendor is confident. The deck has the right slides. SOC 2 Type II. A responsible AI framework published six months ago. A policy document titled “AI Governance Principles,” referenced in the last board report.

The engineer asks one question off-script.

“Can you show me your prompt injection test results from the last 90 days?”

Silence.

Not the silence of someone reaching for a file. The silence of someone who has never been asked that before.

That pause, the specific distance between a compliance artifact and an operational control, is AI security theater in its most recognizable form. The vendor is not lying. The slides are real. The SOC 2 report covers access management, encryption, and monitoring across five Trust Services Criteria the AICPA established in 2017. None of those criteria were designed for AI-native risks. None of them ask about prompt injection. None of them ask about model weight protection, training data provenance, output integrity monitoring, or shadow AI detection.

The vendor passes the review. The contract is signed. The access controls that would have caught the breach are never built.

IBM’s 2025 Cost of a Data Breach Report, based on 3,470 interviews across 600 organizations, found that 97% of organizations that experienced AI-related breaches lacked proper AI access controls at the time of the incident. That number does not describe a few unlucky outliers. It describes a structural condition. And the condition has a name.

The Deep Dive: The Struggle for a Solution

Think of an AI system deployed inside your organization as a black-box tenant.

The tenant moved in fast, referred by a well-credentialed building manager (the foundation model vendor with a SOC 2 report). The lease was signed without a full inspection. The tenant operates behind closed doors. You know roughly what they do. You have a house rules document they agreed to. But you cannot see inside the rooms where the consequential work happens, you do not know what the tenant’s suppliers are delivering through the service entrance, and your building’s existing fire inspection schedule was designed for a different kind of occupant entirely.

That is the structural condition. Now let me show you the four ways organizations pretend the inspection happened.

Act one: the checkbox policy

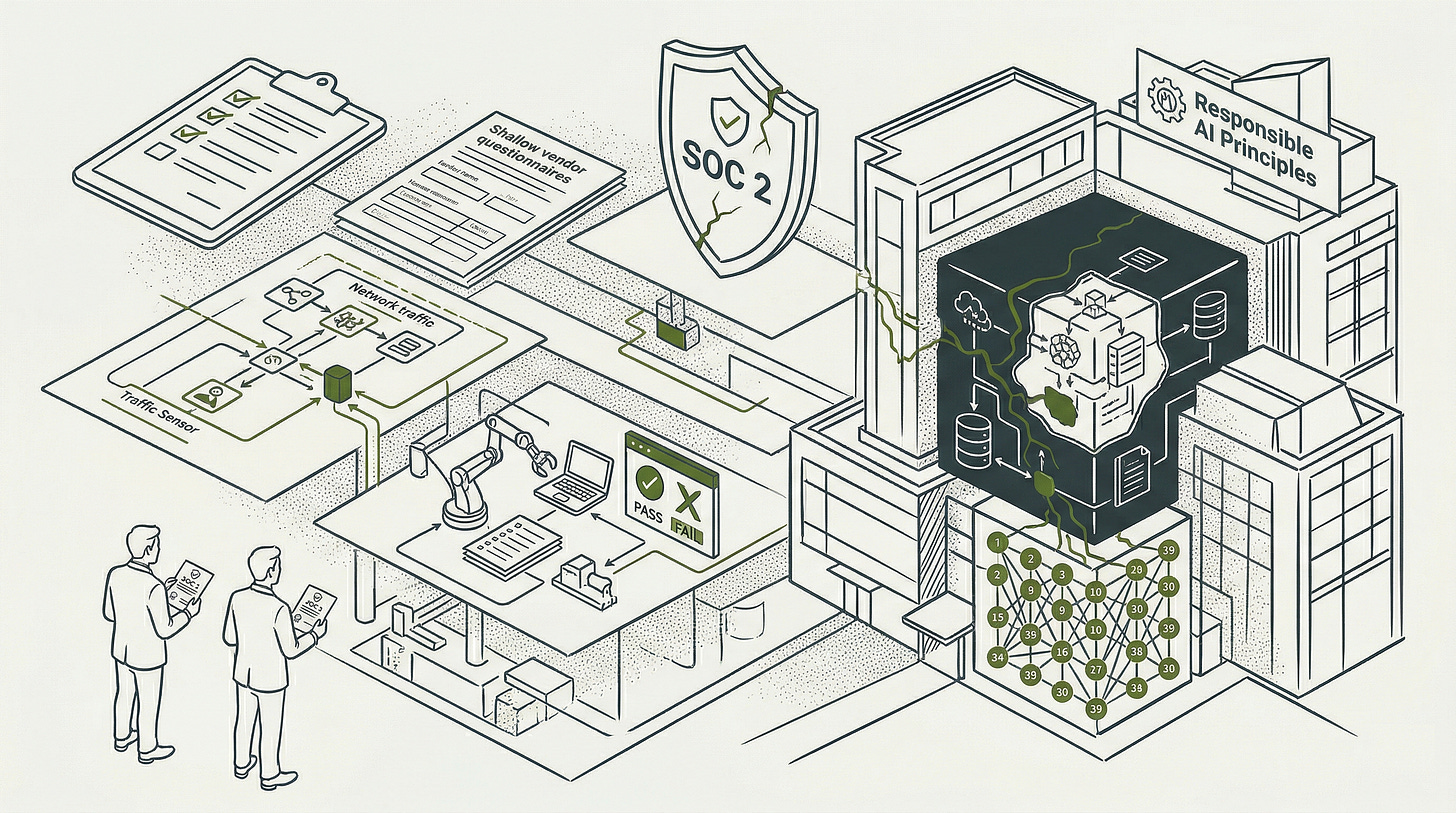

The house rules document. Your organization publishes an AI use policy: prohibited activities, acceptable use categories, an approval process for new tools. Leadership signs it. Legal reviews it. It gets filed and cited in the next board report. No detection layer is built beneath it. Employees continue using AI writing assistants, code completion tools, and transcription services through browser extensions that route over standard HTTPS connections your monitoring stack cannot distinguish from normal web traffic.

The tenant is using the service entrance the policy never mentioned.

IBM’s 2025 research found that shadow AI was a factor in 20% of all breaches studied. Shadow AI incidents carried a $670,000 premium above the average breach cost of $4.44 million. The policy exists. The detection capability does not. Those are two different things, and procurement treats them as one.

Act two: the vendor questionnaire pass-through

A partner sends a security questionnaire. Someone in procurement assembles the response in an afternoon: SOC 2 report attached, NIST CSF alignment noted, responsible AI principles document hyperlinked. The questionnaire moves through the approval queue. Nobody checks whether those artifacts address the specific risk surface the questionnaire was designed to probe.

Here is the concrete difference between a policy and a control, because this is where the gap becomes visible. A policy states that a condition must hold. A control is the mechanism that causes the condition to hold and produces evidence that it has held.

Consider the phrase: “Human reviewers will assess AI outputs before consequential decisions are made.”

That sentence does not build a confidence score display. It does not create an override button. It does not write a dual-authorization workflow. It does not generate a log entry that an auditor can pull. The policy asserts the intent; the control produces the event. In a questionnaire response, both look identical. In an incident investigation, only one of them is present.

Only 22% of organizations in IBM’s 2025 dataset conduct adversarial testing on their AI models at all. Questionnaire submission rates are presumably much higher.

Act three: SOC 2 as a shield

This form of theater is the most widely misunderstood because SOC 2 is a real framework. A Type II report means a licensed CPA firm examined your controls over a period of three to twelve months and found them operating effectively. That matters. The five Trust Services Criteria it covers, Security, Availability, Processing Integrity, Confidentiality, and Privacy, represent genuine baseline assurance for how your organization handles data.

Here is what the black-box tenant’s building inspection does not cover. Training data provenance and poisoning risk: not on the checklist. Model weight protection and extraction attacks: not on the checklist. Prompt injection and jailbreaking: not on the checklist. Inference logging and output integrity monitoring: not on the checklist. Non-human identity management for AI agents and automated pipelines: not on the checklist. Shadow AI detection and governance: not on the checklist.

Six categories of AI-specific risk. Zero coverage in the framework organizations cite most frequently as evidence of their posture. Schellman, an accredited SOC 2 certification body, documented these six gap categories directly from its own audit practice. IBM’s data confirmed the consequence: SOC 2 compliance did not close the access control gap in a single AI breach case it studied.

The building inspection certified the plumbing and the fire exits. The tenant is running a server farm in the basement.

Act four: responsible AI principles as a substitute for controls

This is the act that looks most like security and is furthest from it. Your organization invests genuine effort in a fairness, transparency, accountability, and explainability document. It passes legal review, ethics committee approval, and external communications polish. It satisfies board reporting requirements. It appears in sales conversations as evidence of AI maturity.

It has no mapping to the NIST AI Risk Management Framework’s Govern, Map, Measure, and Manage functions. It has no connection to the 39 normative control objectives in ISO 42001’s Annex A. A 2024 peer-reviewed study that tracked whether AI companies followed through on voluntary safety commitments they had publicly signed found that third-party reporting compliance averaged 34.4% across assessed organizations, with eight companies scoring zero on that dimension.

Principles without enforcement architecture are house rules for a tenant who knows you cannot check the rooms.

The four acts above are symptoms. The cause sits one level up, in the incentives that make theater the rational choice for every actor in the system.

The system that produces all four acts is not broken. It is working exactly as designed.

Procurement teams are measured on onboarding velocity. A vendor questionnaire answered with a SOC 2 citation closes faster than one requiring a full AI controls review. The procurement function does not own the downstream incident.

Compliance leads are measured on audit outcomes and deadline adherence. A responsible AI policy satisfies an internal audit request. The NIST MAP function, which requires inventorying every AI system’s context, intended purpose, potential negative impacts, and deployment environment before any risk measurement begins, requires technical vocabulary most compliance teams were not hired to develop. So they produce the artifact they know how to produce.

Vendors benefit from an ecosystem in which certification language substitutes for substantive control testing. IBM found that 46% of enterprise software buyers prioritize security certifications during vendor evaluation. The market rewards the artifact, not the control.

Boards receive responsible AI framework slides and SOC 2 citations without the vocabulary to distinguish them from operational controls, because nobody in the room is incentivized to ask the off-script question the engineer asked in the opening. The Proofpoint 2025 Voice of the CISO Report found that boardroom alignment with security leadership fell from 84% to 64% in a single year. The executive mandate that would be required to close the theater gap is moving in the wrong direction at precisely the moment AI risk is moving in the other.

The SANS 2025 AI Survey found that 100% of respondents planned to incorporate generative AI into their security functions within the year, while formal AI risk management programs were absent in the majority of organizations surveyed. Every organization intends to reach genuine posture. The implementation never catches up to the intention.

And the EU AI Act’s August 2026 high-risk system compliance deadline does not flex for intention.

The Resolution: Your New Superpower

Go back to that vendor call.

Same engineer. Same procurement checkpoint. Same off-script question about prompt injection test results from the last 90 days.

This time, the vendor does not pause. The security lead on the call shares a screen. A dashboard loads. Test run timestamps, attack categories, pass or fail by model version, remediation tickets linked to the failures, the most recent run from eleven days ago. The answer takes eight seconds. The prompt injection test results the engineer asked about are in that dashboard. They were always going to be there. The only question was whether anyone built the schedule that put them there.

That eight seconds is what genuine AI security posture feels like from the outside. Here is what produced it from the inside.

Your team has built log pipelines before. It has built access control layers before. It has built monitoring dashboards before. The two moves below apply those existing skills to a specific AI risk surface, with specific field requirements and specific detection properties. The novelty is the compliance specification, not the engineering category.

The first move is a shadow AI detection capability, not a policy prohibiting shadow AI, but a technical mechanism that identifies unsanctioned AI tool usage before a breach rather than after. IBM’s data shows that only 37% of organizations with AI governance policies have any audit mechanism for unsanctioned AI. The gap between having a rule and being able to enforce it is where the 20% of breaches involving shadow AI originate. A traffic inspection tool (CASB, or Cloud Access Security Broker) configured to classify requests reaching known AI service endpoints, combined with periodic OAuth grant audits and DLP rules detecting sensitive data patterns en route to AI domains, closes the detection gap that a policy document cannot close by definition.

The second move is adversarial testing on schedule. Not a red team exercise against the underlying infrastructure: adversarial testing against the model itself, covering prompt injection, jailbreaking, and data extraction attempts. IBM found that only 22% of organizations run this. Your NIST AI RMF MEASURE function output is the documentation that makes this testing a formal, repeatable activity rather than a one-time exercise that disappears into a Confluence page.

Both moves feed into ISO 42001 Annex A when the time for external certification arrives. The Annex A controls become verifiable because the underlying testing and detection infrastructure already produces evidence. The certification formalizes what is already running rather than constructing documentation for an auditor to accept without corresponding reality.

The black-box tenant metaphor completes here. The building inspection now has an actual schedule. The log store is the tamper-proof surveillance record that runs regardless of whether the tenant cooperates. The detection capability is the sensor on the service entrance. You are no longer a landlord who trusts a house rules document. You are a landlord with evidence.

The vendor who can answer the off-script question in eight seconds earned that capability. And in a market where 97% of AI-breached organizations had no access controls in place, eight seconds is a competitive advantage.

Fact-Check Appendix

Statement: 97% of organizations that experienced an AI-related breach lacked proper AI access controls. | Source: IBM / Ponemon Institute, Cost of a Data Breach Report 2025 | https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications,-97-of-which-reported-lacking-proper-ai-access-controls

Statement: Shadow AI was a factor in 20% of all breaches studied; shadow AI incidents carried a $670,000 premium above the average breach cost of $4.44 million. | Source: IBM / Ponemon Institute, Cost of a Data Breach Report 2025 | https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications,-97-of-which-reported-lacking-proper-ai-access-controls

Statement: Only 22% of organizations conduct adversarial testing on their AI models. | Source: IBM / Ponemon Institute, Cost of a Data Breach Report 2025 | https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications,-97-of-which-reported-lacking-proper-ai-access-controls

Statement: Only 37% of organizations with AI governance policies have any mechanism to detect unsanctioned AI. | Source: IBM / Ponemon Institute, Cost of a Data Breach Report 2025 | https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications,-97-of-which-reported-lacking-proper-ai-access-controls

Statement: 46% of enterprise software buyers prioritize security certifications during vendor evaluation. | Source: IBM / Ponemon Institute, Cost of a Data Breach Report 2025 | https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications,-97-of-which-reported-lacking-proper-ai-access-controls

Statement: Organizations using AI and automation extensively in security saved $1.9 million per breach and reduced the breach lifecycle by 80 days. | Source: IBM / Ponemon Institute, Cost of a Data Breach Report 2025 | https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications,-97-of-which-reported-lacking-proper-ai-access-controls

Statement: A 2024 peer-reviewed study tracking AI company follow-through on voluntary safety commitments found that third-party reporting compliance averaged 34.4% across assessed organizations, with eight companies scoring zero. | Source: AAAI AIES 2024 Proceedings, “Do AI Companies Make Good on Voluntary Commitments to the White House?” | https://ojs.aaai.org/index.php/AIES/article/download/36743/38881/40818

Statement: 100% of respondents planned to incorporate generative AI within the year; formal AI risk management programs were absent in the majority of organizations surveyed. | Source: SANS 2025 AI Survey: Measuring AI’s Impact on Security Three Years Later | https://www.sans.org/white-papers/sans-2025-ai-survey-measuring-ai-impact-security-three-years-later

Statement: Boardroom alignment with CISOs fell from 84% in 2024 to 64% in 2025. | Source: Proofpoint, 2025 Voice of the CISO Report | https://www.proofpoint.com/us/resources/white-papers/voice-of-the-ciso-report

Statement: SOC 2 Trust Services Criteria cover five categories: Security, Availability, Processing Integrity, Confidentiality, and Privacy; criteria unchanged since 2017. | Source: AICPA, 2017 Trust Services Criteria (With Revised Points of Focus, 2022) | https://www.aicpa-cima.com/resources/download/2017-trust-services-criteria-with-revised-points-of-focus-2022

Statement: ISO 42001 contains 39 normative control objectives in Annex A. | Source: ISO/IEC 42001:2023 | https://www.iso.org/standard/42001

Statement: EU AI Act high-risk system full compliance deadline is August 2, 2026. | Source: European Commission, Regulation (EU) 2024/1689 | https://eur-lex.europa.eu/eli/reg/2024/1689/oj/eng

Top 5 Prestigious Sources

IBM / Ponemon Institute, Cost of a Data Breach Report 2025 | https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications,-97-of-which-reported-lacking-proper-ai-access-controls

AICPA, 2017 Trust Services Criteria for SOC 2 (With Revised Points of Focus, 2022) | https://www.aicpa-cima.com/resources/download/2017-trust-services-criteria-with-revised-points-of-focus-2022

SANS Institute, 2025 AI Survey: Measuring AI’s Impact on Security Three Years Later | https://www.sans.org/white-papers/sans-2025-ai-survey-measuring-ai-impact-security-three-years-later

European Commission, Regulation (EU) 2024/1689 (EU AI Act) | https://eur-lex.europa.eu/eli/reg/2024/1689/oj/eng

AAAI AIES 2024 Proceedings, “Do AI Companies Make Good on Voluntary Commitments to the White House?” | https://ojs.aaai.org/index.php/AIES/article/download/36743/38881/40818

Peace. Stay curious! End of transmission.