EU AI Act High-Risk Classification & Liability Guide

Navigate the EU AI Act's high-risk tiers and avoid the liability trap. Learn the difference between AI providers and deployers under Article 25.

Disclaimer

This article is intended for informational purposes and reflects the state of published research and industry practice as of early 2026. It is not legal or professional security advice. Your specific environment, threat model, and regulatory obligations will shape how these principles apply to your situation.

TL;DR

I have spent the last few months watching product teams walk into a liability trap they didn’t even know existed. They build a hiring tool on a third-party model, put their name on it, and assume the model vendor owns the compliance. They are wrong. Under Article 25 of the EU AI Act, that team just became the ‘provider,’ inheriting the full weight of a conformity assessment. This article is a surgical dive into the binding law layer. I break down the four risk tiers, the eight prohibited practices that are already banned, and the specific triggers that shift liability from model makers to application developers. We move past the ‘responsible AI’ principles and into the specific requirements that attach to Annex III systems: risk management systems, data governance evidence, and human oversight design. If you are building AI for hiring, credit scoring, or critical infrastructure, the August 2026 deadline is already inside your planning horizon. This is not a documentation exercise; it is a construction project. I provide the triage sequence you need to determine if your AI system owns your roadmap, or if you still own the system.

The Itch: Why This Matters Right Now

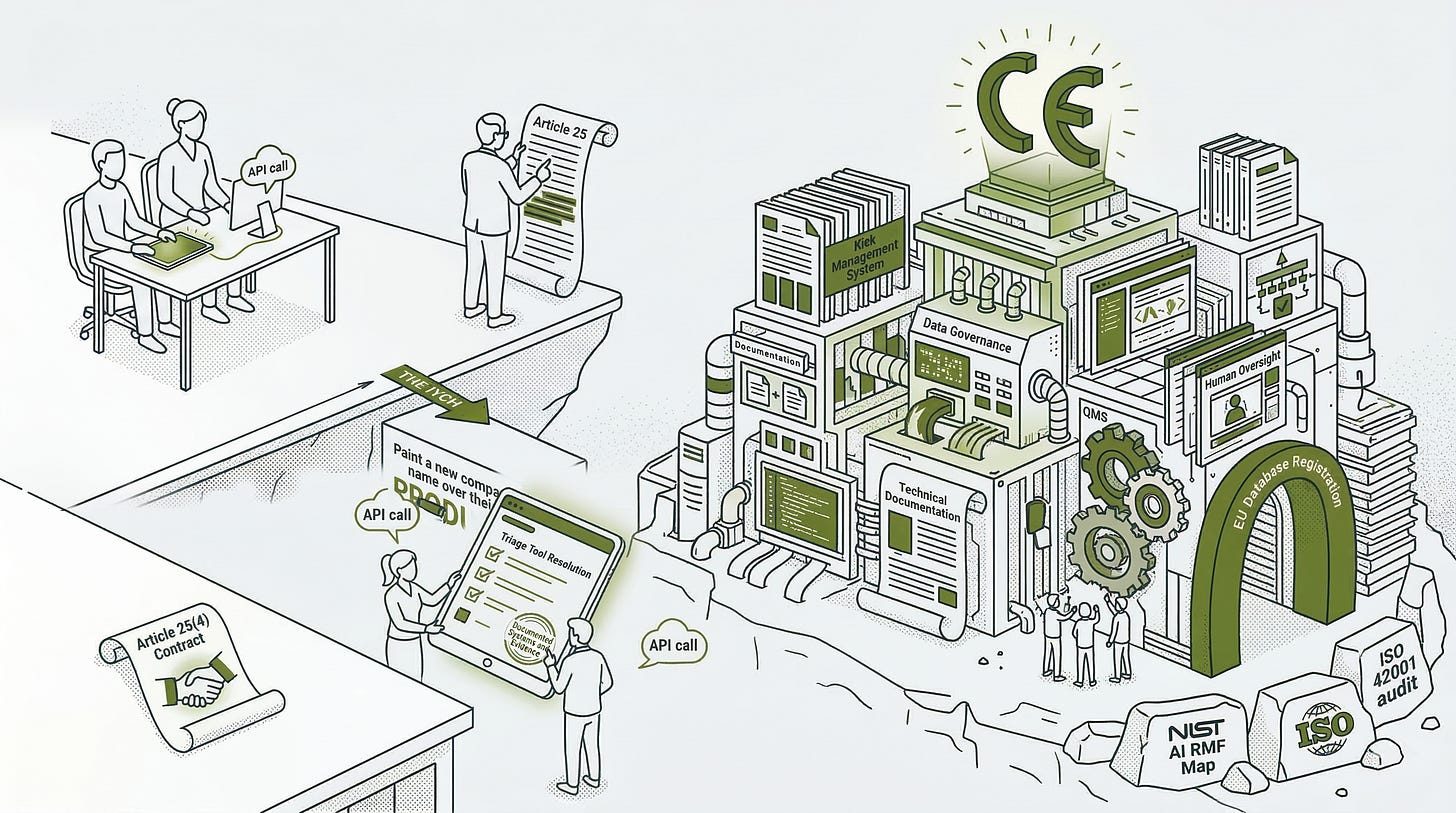

Picture a three-person product team.

They spent eleven months building a hiring screening tool on top of a third-party foundation model. They call the model through an API. They fine-tuned it on their industry’s job descriptions. They put their company name on the product. They are six weeks from customer launch.

Then someone in legal reads Article 25 of the EU AI Act.

The question that follows is short and uncomfortable: are we the provider of this system, or are we the deployer?

The answer determines everything. Providers of high-risk AI systems carry the full conformity assessment burden: risk management documentation, data governance evidence, human oversight design, quality management systems, CE marking, EU database registration, and post-market monitoring. Deployers carry considerably less: follow the provider’s instructions, implement human oversight, monitor performance, and report risks back upstream.

The difference between those two lists is not a documentation inconvenience. It is the distance between a manageable compliance workload and one that requires dedicated legal and engineering resources to execute properly before a regulatory deadline that is already inside your planning horizon.

The binding law layer, which is where this series has been heading since Article 1 of the series, is not abstract. It is a specific piece of legislation, with specific classification rules, specific compliance obligations, and specific penalties. And it contains at least one tripwire that a significant number of teams building on third-party foundation models do not know they have already crossed.

The Deep Dive: The Struggle for a Solution

Start with the risk architecture, because everything else branches from it.

The EU AI Act (Regulation (EU) 2024/1689, published July 12, 2024) sorts AI systems into four tiers. At the base is minimal risk: the majority of AI applications in commercial use today, which the Act leaves unregulated beyond voluntary codes of conduct. One tier up is limited risk, where transparency obligations apply. If your system presents as human to a user, the user must know they are interacting with AI. Under the Act’s staged timeline, that obligation applies from August 2, 2026, the same date as the high-risk requirements.

High risk carries the full compliance burden. General-purpose AI with systemic risk sits at a separate governance level altogether, managed by the European AI Office rather than national authorities. That distinction matters for teams building on third-party foundation models in a specific way. If the model you are building on carries GPAI systemic-risk designation under Article 51, its provider is already subject to Article 55 obligations: adversarial testing, incident reporting, and cybersecurity protections maintained at the model level. When Article 25(1) makes you the new provider of the downstream high-risk system, the cooperation duty in Article 25(2), together with the written contract obligation in Article 25(4), gives you a basis to demand that technical foundation. The documentation exists because Article 55 required the upstream provider to produce it; the right to receive it comes from Article 25. The cooperation duty runs both directions: you owe the conformity assessment; the upstream provider owes you the technical foundation to execute it.

And above all of it, not really a tier at all, sit the eight prohibited practices. These are not compliance obligations. They are outright bans. Article 5 lists them: subliminal or manipulative techniques operating below the threshold of consciousness; exploitation of vulnerabilities tied to age, disability, or socioeconomic circumstance; social scoring that leads to detrimental or disproportionate treatment; predictive policing targeting individuals solely through profiling; facial recognition databases built from untargeted scraping of the internet or CCTV footage; emotion recognition in workplaces and educational institutions; biometric categorization inferring race, political opinions, religious beliefs, or sexual orientation; and real-time remote biometric identification in publicly accessible spaces for law enforcement, outside narrow exceptions.

These bans became legally enforceable on February 2, 2025. Not a proposal. Not guidance. A prohibition with penalties attached.

Now the classification question that Article 2 of this series put in front of you.

Article 6 of the Act defines high-risk through two independent gates.

The first gate: does your AI system serve as a safety component of a regulated product that already requires third-party conformity assessment under EU law? Medical devices, aviation systems, industrial machinery, motor vehicles. If yes, the AI component inherits the same regulatory weight as the product it sits inside.

The second gate: does your system’s intended use fall within any of the eight sectors listed in Annex III? Biometrics in sensitive contexts. Critical infrastructure management. Education and vocational training decisions. Employment and workforce management. Essential services including credit scoring, health insurance pricing, and emergency dispatch. Law enforcement. Migration, asylum, and border control. Administration of justice and democratic processes.

If your hiring screening tool filters job applications or evaluates candidates, it sits in Annex III sector 4(a). Article 6(2) puts it in the high-risk category. This is not a gray area.

One refinement matters. Article 6(3) allows a provider to argue their Annex III system is not actually high-risk, if it does not pose a significant risk of harm to health, safety, or fundamental rights, including by not materially influencing the outcome of decision-making. That argument requires a documented assessment before the system goes to market. It has one absolute exception: any Annex III system performing profiling of natural persons is always high-risk. No argument available.

If a provider does take the Article 6(3) position for a different system, that documented assessment is not optional paperwork. It must exist before the system reaches the market. Under Article 6(4), the provider is also subject to the registration obligation in Article 49(2), which means registering itself and the system in the EU database. If a national competent authority requests the documentation, it must be produced on demand. The Article 6(3) argument is available, but taking it creates its own paper trail. A compliance lead considering this route should treat the documented assessment as a live regulatory artifact, not a one-time filing.
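The two gates and the Article 6(3) carve-out described above can be sketched as a small decision function. This is a minimal illustration, not a legal test: the class name, field names, and result labels are my own assumptions, and a real assessment turns on facts that a handful of booleans cannot capture.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RiskClass(Enum):
    HIGH_RISK = "high-risk"
    NOT_HIGH_RISK_DOCUMENTED = "not high-risk, Article 6(3) assessment on file"
    NOT_HIGH_RISK = "not high-risk under Article 6"


@dataclass
class SystemProfile:
    # Hypothetical field names; none of these are terms defined by the Act.
    safety_component_of_regulated_product: bool  # first gate
    annex_iii_use_case: Optional[str]            # second gate, e.g. "4(a)"
    performs_profiling: bool
    materially_influences_decisions: bool
    art_6_3_assessment_documented: bool


def classify(profile: SystemProfile) -> RiskClass:
    # Gate 1: safety component of a product that already needs
    # third-party conformity assessment.
    if profile.safety_component_of_regulated_product:
        return RiskClass.HIGH_RISK
    # Gate 2: intended use listed in Annex III.
    if profile.annex_iii_use_case is None:
        return RiskClass.NOT_HIGH_RISK
    # Profiling of natural persons closes off the Article 6(3) argument.
    if profile.performs_profiling:
        return RiskClass.HIGH_RISK
    # The Article 6(3) argument only counts once it is documented.
    if (not profile.materially_influences_decisions
            and profile.art_6_3_assessment_documented):
        return RiskClass.NOT_HIGH_RISK_DOCUMENTED
    return RiskClass.HIGH_RISK


# The hiring screening tool: Annex III 4(a), evaluating individual candidates.
hiring_tool = SystemProfile(False, "4(a)", True, True, False)
print(classify(hiring_tool).value)
```

Note the ordering: profiling is checked before the Article 6(3) branch, so no amount of documentation rescues a profiling system in this sketch.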

Here is where the three-person product team discovers they have a problem.

Article 25 is the value chain responsibility provision. Think of it as a regulatory succession mechanism: a piece of legislation that does not let compliance obligations disappear simply because a downstream operator, not the original developer, is the one who shaped what the system became.

The article is short. Its logic is not.

Any distributor, importer, deployer, or other third party becomes the legal provider of a high-risk AI system, with the full obligations of Article 16, in three specific circumstances. First: they put their name or trademark on a high-risk system already on the market. Second: they make a substantial modification to a deployed high-risk system. Third: they modify the intended purpose of a non-high-risk system in a way that pushes it into the high-risk category under Article 6.

The hiring screening tool team has triggered at least two of those three.

They put their company name on the product. They fine-tuned the foundation model on domain-specific data, which almost certainly constitutes a substantial modification. Their intended use, candidate evaluation in employment, is an explicit Annex III sector. Under Article 25(2), the original foundation model provider is no longer considered the provider of this specific system for regulatory purposes. The product team is.

The foundation model provider is not released entirely. Article 25(2) requires the original provider to cooperate, handing over technical documentation, access, and assistance so the new provider can execute the conformity assessment. Article 25(4) requires both parties to formalize that cooperation by written contract. But the conformity assessment now belongs to the product team. Not the model vendor.
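The three circumstances can be written down as a checklist that a product team runs against its own changes. The sketch below is illustrative only: the field names are hypothetical, and whether a given change counts as a "substantial modification" is a legal judgment the code simply takes as an input.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DownstreamChanges:
    # One hypothetical flag per circumstance described in the text.
    name_or_trademark_on_system: bool
    substantial_modification: bool
    purpose_changed_to_high_risk: bool


def fired_triggers(changes: DownstreamChanges) -> List[str]:
    """Return a label for every Article 25 circumstance that applies."""
    checks = [
        ("name or trademark placed on the system",
         changes.name_or_trademark_on_system),
        ("substantial modification",
         changes.substantial_modification),
        ("intended purpose changed into a high-risk use",
         changes.purpose_changed_to_high_risk),
    ]
    return [label for label, fired in checks if fired]


def becomes_provider(changes: DownstreamChanges) -> bool:
    # A single fired trigger is enough to take on the provider role.
    return len(fired_triggers(changes)) > 0


# The hiring team as described above: branded and fine-tuned.
hiring_team = DownstreamChanges(True, True, False)
for label in fired_triggers(hiring_team):
    print("trigger:", label)
print("provider:", becomes_provider(hiring_team))
```

The useful output is the list, not the boolean: the fired triggers are what the Article 25(4) contract negotiation with the model vendor will need to reference.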

What a conformity assessment actually requires.

For most high-risk AI systems under Annex III, the assessment is a self-assessment: no external notified body required. The provider works through Annex VI, producing documented evidence across four obligation clusters.

The first cluster is system design and risk evidence: a documented risk management system running continuously across the full lifecycle, data governance procedures demonstrating that training datasets are representative and monitored for error, and technical documentation detailed enough for a regulator to assess compliance without access to the system itself.

The second cluster is operational integrity: automatic event logs recording incidents and anomalies throughout deployment, and demonstrated accuracy, robustness, and cybersecurity properties appropriate to the system’s intended use.

The third cluster is human accountability: transparency materials giving deployers everything they need to implement the system correctly, and documented human oversight mechanisms designed into the system’s architecture so that operators can intervene when outputs warrant it.

The fourth cluster is organizational governance: a quality management system showing that the provider’s internal processes, responsibilities, and corrective action procedures are sufficient to maintain compliance across the system’s full commercial life.

Once those clusters are documented, the provider issues an EU Declaration of Conformity, registers the system in the EU database under Article 71, and affixes CE marking.
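One way to keep the four clusters honest is to track them as a gap list rather than a status slide. The sketch below assumes the evidence items can be named as plain strings; the item names paraphrase this article and are not wording taken from the Act or from Annex VI.

```python
from typing import Dict, List, Set

# Cluster names and evidence items paraphrase the four clusters above.
# They are illustrative labels, not statutory terms.
CLUSTERS: Dict[str, List[str]] = {
    "system design and risk evidence": [
        "risk management system",
        "data governance procedures",
        "technical documentation",
    ],
    "operational integrity": [
        "automatic event logs",
        "accuracy, robustness and cybersecurity evidence",
    ],
    "human accountability": [
        "transparency materials for deployers",
        "human oversight mechanisms",
    ],
    "organizational governance": [
        "quality management system",
    ],
}


def missing_evidence(completed: Set[str]) -> Dict[str, List[str]]:
    """Return, per cluster, the evidence items not yet documented."""
    gaps: Dict[str, List[str]] = {}
    for cluster, items in CLUSTERS.items():
        missing = [item for item in items if item not in completed]
        if missing:
            gaps[cluster] = missing
    return gaps


def ready_for_declaration(completed: Set[str]) -> bool:
    # Only an empty gap list supports moving to the Declaration of Conformity.
    return not missing_evidence(completed)


done = {
    "risk management system",
    "technical documentation",
    "automatic event logs",
    "human oversight mechanisms",
}
for cluster, items in missing_evidence(done).items():
    print(cluster, "->", ", ".join(items))
```

Run against a partially complete set, it prints one line per cluster that still has open items, which is the shape a gap assessment takes in practice.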

Here is where the work from Article 2 of this series stops being theoretical.

The NIST AI RMF documentation your team has been building is the substantive foundation for the risk management and testing evidence a conformity assessment requires. Specifically, the Map function’s outputs, documenting intended use, stakeholder context, and foreseeable misuse, form the backbone of the Article 11 technical documentation your assessment demands. The Measure function’s testing and evaluation records, covering fairness, reliability, and safety properties, produce the evidence your Article 15 accuracy and robustness obligations require. The ISO 42001 certification your organization has been pursuing supplies the management system audit trail that an external reviewer can accept as evidence of governance maturity. Without those artifacts already in place, the conformity assessment is not a documentation exercise. It is a construction project, undertaken under regulatory pressure, for a system already in production.

The August 2, 2026 deadline for high-risk system compliance is inside your planning horizon. For high-risk AI embedded in regulated products, the first gate described above, the deadline extends to August 2, 2027, but that extension does not reach software-only Annex III systems.

Now the honest part.

The EU AI Act’s penalty structure is formidable on paper. Article 99 establishes three tiers. Violations of the Article 5 prohibitions carry penalties up to EUR 35 million or 7% of total worldwide annual turnover, whichever is higher. Violations of provider and deployer obligations carry penalties up to EUR 15 million or 3% of turnover. Supplying incorrect or misleading information to competent authorities carries a maximum of EUR 7.5 million or 1% of turnover. For SMEs, fines apply at whichever figure in each tier is lower, not higher.
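The arithmetic behind those tiers is simple enough to write out. The sketch below uses the figures from the paragraph above and assumes the "whichever is higher" rule for every tier, flipped to "whichever is lower" for SMEs; the tier keys and function name are my own, and amounts are whole euros to avoid floating-point rounding.

```python
from typing import Dict, Tuple

# (fixed cap in EUR, percentage of total worldwide annual turnover)
# Tier keys are hypothetical labels for the three tiers described above.
TIERS: Dict[str, Tuple[int, int]] = {
    "prohibited_practice": (35_000_000, 7),
    "operator_obligation": (15_000_000, 3),
    "misleading_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: int,
                 is_sme: bool = False) -> int:
    """Upper bound of the fine for a tier, in whole euros."""
    fixed_cap, percent = TIERS[tier]
    turnover_cap = worldwide_turnover_eur * percent // 100
    # SMEs face the lower of the two figures; everyone else the higher.
    if is_sme:
        return min(fixed_cap, turnover_cap)
    return max(fixed_cap, turnover_cap)


# A company with EUR 2 billion turnover breaching a provider obligation:
print(max_fine_eur("operator_obligation", 2_000_000_000))
# An SME with EUR 10 million turnover in the same tier:
print(max_fine_eur("operator_obligation", 10_000_000, is_sme=True))
```

For the large company the 3% figure (EUR 60 million) dominates the EUR 15 million cap; for the SME the same 3% (EUR 300,000) is the ceiling. These are maximums, and the actual amount is set case by case by the authority.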

Penalty provisions became applicable August 2, 2025. The EU AI Office and national competent authorities have investigation and enforcement powers. Several Member States were still in early stages of designating national competent authorities as of mid-2025. No named enforcement action with a confirmed penalty had been publicly documented against a specific operator under the Act at the time of this writing.

The GDPR is the instructive precedent here. It became enforceable May 25, 2018, following a two-year implementation window from its 2016 publication. The first significant fine, EUR 50 million issued by France’s data protection authority against a technology company, arrived in January 2019. Consistent cross-Member State enforcement took considerably longer to materialize. The AI Act will warm up along the same trajectory: early actions will target high-profile respondents, egregious violations, visible harms. The window between “rules in force” and “enforcement at scale” is not an exemption. It is a narrowing grace period.

One structural point that the U.S. experience makes concrete.

Executive Order 14110, the Biden administration’s comprehensive federal AI governance framework, was signed October 30, 2023. It lasted fourteen months. On January 20, 2025, the incoming administration revoked it. OMB Memorandum M-24-10, the operational instrument that mandated Chief AI Officers, use-case inventories, and minimum risk practices across federal agencies, was superseded by OMB M-25-21 in April 2025. Organizations that built compliance infrastructure oriented around M-24-10’s specific requirements absorbed the full cost of that investment when the framework changed underneath them.

The EU AI Act is not an executive order. It is a directly applicable Regulation adopted under Article 114 of the Treaty on the Functioning of the European Union. It survives elections. It is enforceable across 27 Member States without further national transposition. Amending it requires the full EU legislative procedure. The Brussels Effect argument from Article 1 of this series rests on exactly this structural fact: the Act’s durability is institutional, not rhetorical. Organizations planning AI infrastructure around EU compliance are not betting on a political preference. They are betting on binding legislation.

The Resolution: Your New Superpower

Here is the triage tool this article was building toward. Apply it to every EU-facing AI product in your portfolio, in order.

Does it land in an Annex III sector? Name the sector and the specific use case. If yes, high-risk classification is presumed. If no, and the system is not a safety component caught by the first Article 6 gate, you carry transparency obligations at most and the rest of this sequence does not apply.

Can you document the Article 6(3) non-high-risk argument? Only pursue this if the system does not materially influence individual decision-making and does not perform profiling. If you cannot document it cleanly before market placement, the high-risk path is your default: full high-risk providers register under Article 49(1), while providers taking the non-high-risk position register under Article 49(2); either way, a registration obligation exists.

Have any Article 25 triggers fired? Check three things: your name on the product, substantial modification of the upstream model, redirection toward an Annex III use case the original provider did not design for. One yes makes you the provider. Whichever trigger fired, you need a written contract under Article 25(4) specifying the technical documentation and access your conformity assessment will need from your model vendor.

Does that contract exist? If not, it is the first document your legal team should draft. Without it, the cooperation duty Article 25(2) places on your upstream provider has no enforceable mechanism attached.

Is your NIST and ISO evidence ready? Map function outputs ground your Article 11 technical documentation. Measure function outputs satisfy Article 15. ISO 42001’s Annex A controls produce the quality management system evidence your fourth obligation cluster requires. If those artifacts are incomplete, your conformity assessment becomes a construction job with the clock already running.
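The five questions above run in a fixed order, and the answer to each decides whether the next one matters. A minimal sketch of that ordering follows, assuming each answer can be reduced to a single field; the class, the field names, and the finding strings are all hypothetical, and the early exit assumes the first Article 6 gate does not apply.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class TriageAnswers:
    # Hypothetical fields, one per question in the sequence above.
    annex_iii_sector: Optional[str]   # e.g. "4(a)", or None if not listed
    art_6_3_position_documented: bool
    art_25_trigger_fired: bool
    art_25_4_contract_exists: bool
    nist_iso_evidence_ready: bool


def triage(answers: TriageAnswers) -> List[str]:
    """Walk the questions in order and collect findings and open actions."""
    # Assumes the system is not a safety component under the first gate.
    if answers.annex_iii_sector is None:
        return ["Not in Annex III: transparency obligations at most."]
    findings = [
        f"Annex III {answers.annex_iii_sector}: high-risk presumed."
    ]
    if answers.art_6_3_position_documented:
        findings.append(
            "Article 6(3) position documented: register under Article 49(2)."
        )
        return findings
    findings.append("High-risk path: register under Article 49(1).")
    if answers.art_25_trigger_fired:
        findings.append("Article 25 trigger fired: you are the provider.")
        if not answers.art_25_4_contract_exists:
            findings.append("Action: draft the Article 25(4) contract.")
    if not answers.nist_iso_evidence_ready:
        findings.append("Action: complete NIST AI RMF and ISO 42001 evidence.")
    return findings


hiring_team = TriageAnswers("4(a)", False, True, False, False)
for line in triage(hiring_team):
    print(line)
```

Running it per product gives a short, ordered list a compliance lead can hand to legal and engineering; the value is in forcing every product through the same questions in the same order.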

The enforcement window is open and narrowing. The U.S. demonstrated what AI governance infrastructure looks like when it is built on a reversible executive instrument: fourteen months, then gone. The EU demonstrated what it looks like when it is anchored in a directly applicable Regulation.

The organizations that treat August 2026 as an infrastructure question, starting with this triage sequence and grounding their conformity assessments in NIST and ISO work already underway, will arrive at that deadline with documented systems and defensible evidence.

The ones that wait for an enforcement action to make it urgent will arrive with a gap assessment they should have run eighteen months earlier, a model vendor contract that does not address Article 25(4), and a conformity assessment that is a construction project rather than a verification exercise.

The map was handed to you in Article 1. The translation layer was built in Article 2. This article is where the map becomes a deadline and the translation becomes evidence.

Fact-Check Appendix

Statement: EU AI Act (Regulation (EU) 2024/1689) was published in the Official Journal on July 12, 2024, and entered into force August 1, 2024. | Source: EUR-Lex, Regulation (EU) 2024/1689 | https://eur-lex.europa.eu/eli/reg/2024/1689/oj/eng

Statement: Article 5 prohibited practices became legally enforceable on February 2, 2025. | Source: European Commission, EU AI Act Regulatory Framework | https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

Statement: GPAI model obligations became applicable on August 2, 2025. | Source: European Commission, EU AI Act Regulatory Framework | https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

Statement: High-risk AI system full compliance deadline is August 2, 2026; systems embedded in regulated products have an extended deadline of August 2, 2027. | Source: European Commission, EU AI Act Regulatory Framework | https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

Statement: Article 99 penalty tiers: up to EUR 35 million or 7% of worldwide annual turnover for prohibited practice violations; up to EUR 15 million or 3% for other operator obligation violations; up to EUR 7.5 million or 1% for supplying incorrect or misleading information. For SMEs, fines apply at whichever figure is lower within each tier. | Source: EU AI Act Article 99, AI Act Service Desk (European Commission) | https://ai-act-service-desk.ec.europa.eu/en/ai-act/article-99

Statement: Annex III lists eight sectors triggering high-risk classification, including biometrics, critical infrastructure, education, employment, essential services, law enforcement, migration, and administration of justice. | Source: EU AI Act Annex III, AI Act Service Desk (European Commission) | https://ai-act-service-desk.ec.europa.eu/en/ai-act/annex-3

Statement: Article 25 specifies three triggers under which a deployer, distributor, importer, or third party becomes the legal provider of a high-risk AI system: rebranding, substantial modification, or modification of intended purpose to bring a system into the high-risk category. | Source: EU AI Act Article 25, artificialintelligenceact.eu (Future of Life Institute, OJ version) | https://artificialintelligenceact.eu/article/25/

Statement: Article 25(4) requires providers of high-risk AI systems and their third-party AI component suppliers to specify obligations by written contract. | Source: EU AI Act Article 25, AI Act Service Desk (European Commission) | https://ai-act-service-desk.ec.europa.eu/en/ai-act/article-25

Statement: Under Article 49(2), a provider who determines their Annex III system is not high-risk under Article 6(3) must register themselves and that system in the EU database before placing it on the market or putting it into service. | Source: EU AI Act Article 49, artificialintelligenceact.eu (Future of Life Institute, OJ version) | https://artificialintelligenceact.eu/article/49/

Statement: Under Article 49(1), providers of high-risk AI systems must register in the EU database before placing their system on the market or putting it into service. | Source: EU AI Act Article 49, artificialintelligenceact.eu (Future of Life Institute, OJ version) | https://artificialintelligenceact.eu/article/49/

Statement: GPAI models designated as systemic-risk under Article 51 are subject to Article 55 obligations including adversarial testing, serious incident reporting, and cybersecurity protections. | Source: EU AI Act Article 55, artificialintelligenceact.eu (Future of Life Institute, OJ version) | https://artificialintelligenceact.eu/article/55/

Statement: The GDPR became enforceable May 25, 2018, following a two-year implementation window from its 2016 publication. The first significant fine under GDPR, EUR 50 million issued by France’s CNIL against Google LLC, was issued January 21, 2019. | Source: EUR-Lex, Regulation (EU) 2016/679 (GDPR) | https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX%3A32016R0679

Statement: Executive Order 14110 was signed October 30, 2023, and rescinded on January 20, 2025, after fourteen months. | Source: Federal Register, EO 14110 | https://www.federalregister.gov/documents/2023/11/01/2023-24283/safe-secure-and-trustworthy-development-and-use-of-artificial-intelligence and Federal Register, EO 14179 | https://www.federalregister.gov/documents/2025/01/31/2025-02172/removing-barriers-to-american-leadership-in-artificial-intelligence

Statement: OMB Memorandum M-24-10 was superseded by OMB M-25-21 in April 2025. | Source: OMB M-25-21 | https://www.whitehouse.gov/wp-content/uploads/2025/02/M-25-21-Accelerating-Federal-Use-of-AI-through-Innovation-Governance-and-Public-Trust.pdf

Top 5 Prestigious Sources

European Commission, Regulation (EU) 2024/1689 (EU AI Act), Official Journal | https://eur-lex.europa.eu/eli/reg/2024/1689/oj/eng

EU AI Act Service Desk (European Commission), Articles 25, 49, 55, and 99, Annex III | https://ai-act-service-desk.ec.europa.eu/en/ai-act/article-99

Federal Register, Executive Order 14110, Vol. 88, No. 210 | https://www.federalregister.gov/documents/2023/11/01/2023-24283/safe-secure-and-trustworthy-development-and-use-of-artificial-intelligence

Federal Register, Executive Order 14179 | https://www.federalregister.gov/documents/2025/01/31/2025-02172/removing-barriers-to-american-leadership-in-artificial-intelligence

Office of Management and Budget, Memorandum M-25-21 | https://www.whitehouse.gov/wp-content/uploads/2025/02/M-25-21-Accelerating-Federal-Use-of-AI-through-Innovation-Governance-and-Public-Trust.pdf

Peace. Stay curious! End of transmission.