International AI Governance: OECD, G7 & Bletchley Standards

Explore the global AI governance landscape. Learn how the OECD, G7, and Bletchley Process shape the standards that eventually become binding law.

Disclaimer

This article is intended for informational purposes and reflects the state of published research and industry practice as of early 2026. It is not professional security advice. Your specific environment, threat model, and regulatory obligations will shape how these principles apply to your situation.

TL;DR

I have spent the earlier articles in this series showing you the tools with teeth: the EU AI Act’s penalties and ISO’s audit cycles. This final installment is about the layer above them: the one with no penalties and no auditors that nonetheless shapes every deadline you own. The international coordination layer is where the vocabulary of regulation is manufactured. By the time a term like ‘systemic risk’ reaches your compliance checklist, it has already been debated by 47 governments and stress-tested in G7 summits. I break down the OECD AI Principles update, the Bletchley Process laboratory, and Singapore’s Model Framework. We look at why the G7 Hiroshima Code of Conduct is an eleven-item checklist that is harder to grade than it looks, and how the UK’s AI Safety Institute is building the evidentiary infrastructure that binding law will eventually reference. This layer doesn’t announce itself with a regulatory notice; it arrives through market access logic and vendor contracts. I explain why a global AI treaty is a fantasy while conceptual convergence is a reality you can’t ignore. This is your advance signal on the next regulatory cycle.

The Itch: Why This Matters Right Now

You have spent two articles learning about tools that carry teeth.

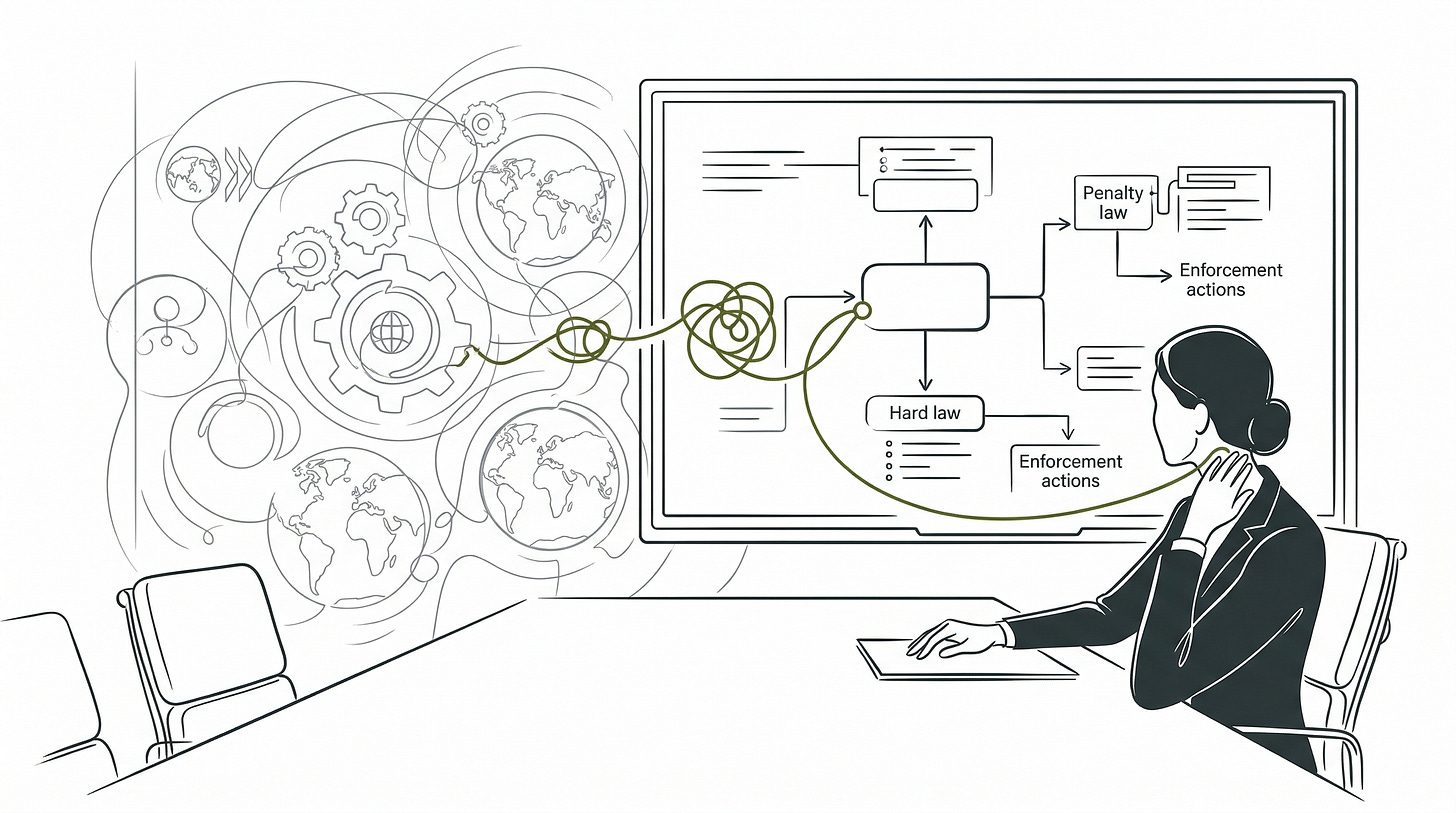

The EU AI Act has penalties reaching 7% of worldwide annual turnover. ISO/IEC 42001 requires a certification body to sign off. NIST AI RMF has been cited by name in federal procurement requirements. These instruments produce paper trails, audit cycles, and, eventually, enforcement actions. They are the layer that keeps compliance leads awake at night.

This article is about the layer above them: the one with no penalties, no auditors, and no August 2026 deadline.

Here is why you should care about it anyway.

Every vocabulary term your regulators used when drafting that binding law came from somewhere. The definition of an AI system that appears in the EU AI Act, the NIST framework, and Japan’s governance guidance did not materialize simultaneously in three separate rooms. It traveled. The CBRN risk categories and the AI evasion-of-oversight concern that appear in both the Seoul Ministerial Statement and Article 55 of the EU AI Act did not originate in a legislative chamber. They were stress-tested in the international coordination layer before either document was finalized.

The international coordination layer is where governance vocabulary gets manufactured. By the time a term reaches your compliance checklist, it has already been debated, revised, and adopted by 47 governments, a G7 summit process, and at least two multilateral scientific panels. You did not participate in that process. But you are subject to its output.

There is a second reason. The floor this layer is building does not announce itself with a regulatory notice. It arrives through market access logic. And that logic is already running.

The Deep Dive: The Struggle for a Solution

Start with the oldest instrument, because it is the one doing the most structural work without receiving much credit for it.

The OECD AI Principles are not a governance framework. They are the shared dictionary.

I want to start here because this is the one most compliance teams have filed under “background reading” and never opened again. That is a mistake, and the 2024 update is the reason why.

Adopted in May 2019 as the first intergovernmental standard on AI, and updated in May 2024 to address generative systems specifically, the Principles sit underneath every governance document covered in this series. They organize five value commitments: inclusive growth and sustainability, human rights and democratic values, transparency and explainability, robustness and safety, and accountability. Forty-seven governments have adopted them: all 38 OECD member countries, the EU, and several non-member partner economies.

What the Principles actually do is not enforcement. What they do is normalization. The definitions of an AI system and its lifecycle that appear in the OECD Recommendation have been cited or directly adopted in the EU AI Act, the NIST AI Risk Management Framework, and Japan’s national AI guidelines. When your legal team debates what counts as an AI system under the Act, they are arguing about a definition that was field-tested across 47 jurisdictions before a single compliance deadline was written.

The May 2024 update is worth your attention for three specific additions. Environmental sustainability entered Principle 1.1 for the first time, a signal that the energy footprint of large language models is now a governance variable, not just an engineering footnote. The obligation to address AI-amplified misinformation and disinformation entered Principle 1.2, a direct response to what generative systems produce at scale. And Principle 1.5, Accountability, received a significant expansion: it now explicitly covers harmful bias, intellectual property rights, and labor market consequences as dimensions of accountability that organizations and AI actors must address cooperatively across the supply chain.

That last addition is not abstract. It is the conceptual foundation for the supply chain liability logic that Article 25 of the EU AI Act operationalizes with enforcement teeth.

The Bletchley Process is the laboratory that identified the danger but cannot pull the fire alarm.

Here is what I think most people miss about this process: its value is not what it can stop. It is what it can document.

In November 2023, 28 countries and the EU gathered at Bletchley Park and signed a declaration establishing the first international safety-focused governance process for frontier AI systems. The Bletchley Declaration is not a treaty. It carries no legal liability, no penalties, and no secretariat with binding authority. What it carries is something more durable in the short term: a shared scientific mandate.

The Declaration commissioned Yoshua Bengio, Turing Award winner, to chair an international scientific report on AI safety. The final report arrived in January 2025, drawing on contributions from 100 AI experts across 30 nations plus the UN and OECD. It was explicitly designed on the model of the Intergovernmental Panel on Climate Change: separate the scientific assessment from the policy recommendation, so that nations with different political systems can participate in the same evidence base without being bound to the same policy conclusions.

That structure matters for your organization because it determines how quickly technical risk language moves from research consensus to regulatory requirement. The CBRN risk categories, the AI evasion-of-oversight concern, and the autonomous replication risk that now appear in the Seoul Ministerial Statement all passed through this scientific layer first.

The UK AI Safety Institute (AISI), established at Bletchley as the operational successor to the UK’s Frontier AI Taskforce and initially backed with £100 million in government funding, performs the technical evaluation work that underpins the process. AISI describes itself explicitly as not a regulator. When it evaluates a frontier model and identifies a dangerous capability, it provides feedback to the developer. It cannot halt a release, require a remediation, or impose any consequence. Its authority is entirely reputational. Its methodology is kept confidential to prevent gaming.

That combination, credible technical assessment with zero enforcement authority, is frustrating if you expect international governance to work like domestic regulation. It is intelligible if you understand that AISI is building the evidentiary infrastructure that binding law will eventually reference. The Seoul Declaration formalized a network of national AI safety institutes across ten countries and the EU. Singapore’s NTU Digital Trust Centre joined as the APAC node. The evaluation methodology being standardized across that network today is the methodology regulators will cite when they write the next generation of conformity assessment requirements.

The Singapore Model Framework is the practical field manual that the whole region carries.

Of the four instruments in this article, this is the one I most often see Western compliance teams underweight. If your organization has any APAC footprint, that is a gap worth closing.

Where the OECD Principles operate at the vocabulary level and the Bletchley Process operates at the frontier risk assessment level, Singapore’s Model AI Governance Framework operates at the level your engineers actually work at: organizational implementation.

Published in its second edition in January 2020 by Singapore’s PDPC and IMDA, the Framework translates ethical principles into four operational domains: internal governance structures and accountability assignment; human oversight calibration; operations management covering data quality, model explainability, and testing; and stakeholder communication. It was developed early enough to establish organizational muscle memory before the generative AI wave arrived, and it was backed with implementation tooling rather than principles alone.

Its regional influence is structural. The ASEAN Guide on AI Governance and Ethics, endorsed by all ten ASEAN member states in February 2024, draws directly from Singapore’s Framework architecture, applying its seven principles as the regional baseline for economies ranging from Singapore’s full governance readiness to Cambodia and Lao PDR, which had no national AI governance policy in place at all at the time of endorsement. Singapore’s Framework is not just one country’s approach. It is the practical floor for one of the world’s fastest-growing digital economies.

Project Moonshot, launched in open beta in May 2024, extends this infrastructure into the generative AI evaluation domain. It integrates red-teaming, benchmarking, and baseline testing in a single open-source platform with automated attack modules, over 100 benchmark datasets, and CI/CD pipeline integration. The AI Verify Foundation, which governs the toolkit, had grown to over 120 members by its first anniversary, including AWS, Dell, IBM, Microsoft, Meta, and Huawei. That membership spans the US-China technology divide. It is a testing framework that Chinese and American cloud providers participate in simultaneously, which is a form of technical coordination that no multilateral treaty has achieved.

In October 2023, IMDA and US NIST completed a joint mapping exercise between AI Verify and the NIST AI RMF. That exercise is the kind of governance harmonization that reduces your compliance cost when operating across multiple jurisdictions. It does not produce a certificate. It produces interoperability.

The G7 Hiroshima Code is the eleven-item checklist that looks comprehensive until you try to grade it.

I am going to be direct about this one. Not because it is unimportant, but because the gap between what it promises and what it actually requires of organizations is wide enough to trip over.

Adopted October 30, 2023, the Hiroshima Process International Code of Conduct is voluntary, directed at frontier AI developers, and explicitly grounded in the OECD AI Principles. Its eleven commitments cover lifecycle risk evaluation, post-deployment vulnerability monitoring, transparency reporting, incident information sharing, AI governance policy disclosure, security controls for model weights, content provenance mechanisms like watermarking, safety research prioritization, SDG-aligned development priorities, international technical standards adoption, and data quality and intellectual property protections.

The operationalizability gap is real and worth naming directly. Commitments 1, 2, 3, and 6, the ones covering lifecycle risk evaluation, post-deployment monitoring, transparency reporting, and model weight security, describe specific technical activities that can be observed, documented, and audited. They map onto procedures your engineering team can implement. Singapore’s Project Moonshot directly implements two of them as automated tooling.

Commitments 8 and 9, which cover safety research prioritization and developing AI to address global challenges, contain no measurable targets, no reporting timelines, and no definition of what prioritization means in practice. Any organization can claim compliance with both at zero marginal cost of behavioral change.

The remaining five commitments (4, 5, 7, 10, and 11) sit in a genuine middle tier: technically specific enough to audit in principle, but not yet auditable in practice. Commitment 7 (watermarking and content provenance) depends on technology that does not yet work reliably at the scale generative systems require. Commitments 5, 10, and 11 (governance policy disclosure, international standards adoption, and data quality measures) lack any defined standard for what qualifying compliance looks like. Commitment 4, responsible incident information sharing across competing commercial organizations, is structurally the hardest of all eleven: it requires cross-industry trust infrastructure that no formal institution currently provides.
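The three tiers described above can be written down as a small lookup table, which is one way a governance team might track them internally. The sketch below is a minimal Python illustration. The tier names (`auditable`, `middle`, `unmeasurable`), the short labels, and the helper functions are my own shorthand for the grouping in this article, not terminology from the Code itself.

```python
from collections import Counter

# Tier assignments follow the grouping described in this article.
# Labels are paraphrased shorthand, not the Code's official wording.
HIROSHIMA_COMMITMENTS = {
    1: ("lifecycle risk evaluation", "auditable"),
    2: ("post-deployment vulnerability monitoring", "auditable"),
    3: ("transparency reporting", "auditable"),
    4: ("incident information sharing", "middle"),
    5: ("AI governance policy disclosure", "middle"),
    6: ("security controls for model weights", "auditable"),
    7: ("content provenance and watermarking", "middle"),
    8: ("safety research prioritization", "unmeasurable"),
    9: ("AI for global challenges", "unmeasurable"),
    10: ("international technical standards adoption", "middle"),
    11: ("data quality and IP protections", "middle"),
}


def commitments_in_tier(tier: str) -> list[int]:
    """Return the commitment numbers assigned to a tier, in ascending order."""
    return sorted(n for n, (_, t) in HIROSHIMA_COMMITMENTS.items() if t == tier)


def tier_counts() -> dict[str, int]:
    """Count how many of the eleven commitments fall into each tier."""
    return dict(Counter(t for _, t in HIROSHIMA_COMMITMENTS.values()))


if __name__ == "__main__":
    for tier in ("auditable", "middle", "unmeasurable"):
        print(tier, commitments_in_tier(tier))
```

A table like this is only a starting point: the useful work is attaching an evidence source to each "auditable" entry and an explicit "no standard yet" note to each "middle" one.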

A peer-reviewed analysis of companies’ voluntary AI commitments to the White House, published in the AAAI AIES 2024 proceedings, gives a sense of how comparable voluntary pledges perform. Third-party reporting, one of the most directly verifiable dimensions of those commitments, scored an average of 34.4% compliance across the companies assessed, with eight organizations scoring zero on that dimension. The absence of a monitoring mechanism is not a design flaw. It is the design.

The coordination picture is better than the institutional fragmentation suggests, and worse than the coordination language implies.

All four frameworks trace their definitional ancestry to the OECD Principles. The Hiroshima Code states this explicitly. Singapore’s Framework cross-references OECD accountability and transparency principles throughout. The Seoul Declaration acknowledged the OECD’s 2024 update. The definitional coherence of the ecosystem is genuine.

The institutional coherence is not. The G7 Code’s transparency reporting obligation and the AISI’s evaluation disclosure regime describe overlapping requirements with no joint implementation mechanism. An AI developer could satisfy one without the other. The OECD policy observatory and Singapore’s Compendium of Use Cases are parallel evidence repositories with no data-sharing architecture.

There is also a structural gap nobody has fully resolved. The OECD Principles and Singapore’s Framework address the full organizational AI stack. The Bletchley Process and the G7 Code address only frontier AI: large-scale foundation models trained by a small number of well-resourced organizations. A startup deploying a fine-tuned open-source model sits inside Singapore’s Framework scope and almost entirely outside the Bletchley Process’s frontier focus. The governance attention is concentrated at the top of the capability distribution. The deployment risk is distributed everywhere else.
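The scope split in the paragraph above can be sketched as a simple filter. This is an illustrative simplification under my own assumptions: the `frontier_only` flag and the `frameworks_in_scope` helper are hypothetical names, and a yes/no flag flattens what is really a matter of degree (the startup in the example sits "almost entirely", not absolutely, outside the frontier processes).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Framework:
    name: str
    frontier_only: bool  # True if the instrument addresses only frontier AI


# Scope flags follow the distinction drawn in this article.
FRAMEWORKS = (
    Framework("OECD AI Principles", frontier_only=False),
    Framework("Singapore Model AI Governance Framework", frontier_only=False),
    Framework("Bletchley Process", frontier_only=True),
    Framework("G7 Hiroshima Code of Conduct", frontier_only=True),
)


def frameworks_in_scope(develops_frontier_models: bool) -> list[str]:
    """List the frameworks whose stated focus covers this kind of organization."""
    return [
        f.name
        for f in FRAMEWORKS
        if develops_frontier_models or not f.frontier_only
    ]


if __name__ == "__main__":
    # A startup deploying a fine-tuned open-source model.
    print(frameworks_in_scope(develops_frontier_models=False))
```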

The Resolution: Your New Superpower

The binding law layer gives you deadlines. The international coordination layer gives you something the Act cannot: advance signal on what the next version of those deadlines will require.

The Bletchley Process, the OECD Principles, and the G7 Code are the forums where the technical language of the next regulatory cycle is being drafted right now.

The shared risk threshold language from the Seoul Ministerial Statement describes AI capability categories that 27 governments agreed, for the first time, could constitute “severe risks.” That language is not in the EU AI Act today. The probability that it informs the next revision of the Act, or a new regulatory instrument from the European AI Office, is not low. Organizations that treat the Seoul risk categories as future regulatory signal today will have documented risk assessments ready when those categories arrive with enforcement mechanisms attached.

One conflation causes real planning errors, and it is worth naming before you carry this article into a strategy conversation.

The Brussels Effect belongs to the binding law layer, not this one. The EU AI Act is what forces any organization with EU-facing operations to comply or lose market access. That coercive force comes from primary legislation, not from voluntary forums. The OECD Principles and the G7 Code do not produce that pressure directly.

What the international coordination layer produces is something different: conceptual convergence. The vocabulary and risk categories being standardized in OECD and G7 forums today are what the next revision of the Act, or a new instrument from the European AI Office, will be written in. Organizations that dismiss this layer as advisory miss the signal it is carrying. The Brussels Effect makes EU standards the operational floor. The international layer determines what the floor looks like in the revision cycle after this one.

The floor is being poured. The pour does not announce itself in a regulatory filing. It moves through market access conditions, through procurement requirements from enterprise customers who demand ISO 42001 certification, through vendor contracts that reference NIST AI RMF documentation, through model API terms of service that incorporate G7 Code commitments.

One more calibration before the three questions.

A binding global AI treaty is not a realistic near-term outcome. Three structural facts explain why. First, US-China technology competition makes any enforcement mechanism that could constrain either power’s AI development almost certainly unachievable through negotiation. Second, the Council of Europe Framework Convention on Artificial Intelligence, opened for signature in 2024 and the closest existing instrument to a binding international AI treaty, covers 46 members and excludes most of the world’s largest AI-developing economies outside Europe. It is a significant regional commitment that cannot carry the weight of a global standard. Third, the precedents from GDPR and the OECD global minimum tax both show that voluntary norm-setting in exclusive forums can eventually produce global behavioral change, but both took over a decade, and neither involved two nuclear-armed powers competing for strategic technological dominance. The more probable path is the one already visible: voluntary framework convergence producing a de facto global standard through market access logic rather than negotiated obligation. The organizations that treat governance as infrastructure rather than a pending global agreement will be building durable systems while others are still waiting for an instrument that will not arrive on the timeline they are assuming.

That calibration has a practical output.

Three questions to run through your organization now.

Do you know which international frameworks your upstream vendors have committed to? The 16 companies that signed the Frontier AI Safety Commitments at Seoul have taken on documented commitments covering adversarial testing, incident reporting, and cybersecurity protections. If your model vendor is one of them and you are a provider of a high-risk system subject to Article 25 of the EU AI Act, the Article 25(4) contract discussed in the third article of this series should reference those commitments and specify your right to the technical documentation they require the vendor to produce.

Does your governance team track OECD AI Principles updates? The May 2024 update added harmful bias, intellectual property rights, and labor market consequences as explicit accountability dimensions, to be addressed cooperatively across the supply chain, and it brought environmental sustainability into Principle 1.1. Those additions are already informing how national regulators interpret existing accountability obligations. Your responsible AI policy should be reviewed against the updated Principle 1.5 language before your next compliance cycle.

Is your APAC team using Singapore’s Model Framework or Project Moonshot as baseline? If your organization operates in Southeast Asia and your AI governance posture is built on EU and US frameworks only, you are missing the practical reference architecture that the entire ASEAN regulatory conversation is built around. The AI Verify Foundation’s ISO 42001 mapping means Project Moonshot test outputs can feed directly into the conformity evidence your certification body will want to see.
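The three questions above can be turned into a repeatable self-check. The sketch below is one hypothetical way to do that in Python. The `GovernancePosture` fields, the vendor names, and the wording of each gap message are assumptions of mine for illustration, not part of any framework or tool named in this article.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GovernancePosture:
    # Vendor name -> frameworks the vendor has committed to,
    # or None if nobody has checked yet (hypothetical structure).
    vendor_commitments: dict[str, Optional[list[str]]] = field(default_factory=dict)
    tracks_oecd_updates: bool = False
    operates_in_southeast_asia: bool = False
    uses_singapore_baseline: bool = False


def open_questions(posture: GovernancePosture) -> list[str]:
    """Return one message per question from the article that is still unanswered."""
    gaps = []
    unchecked = sorted(
        name
        for name, commitments in posture.vendor_commitments.items()
        if commitments is None
    )
    if unchecked:
        gaps.append("Vendor commitments not yet verified: " + ", ".join(unchecked))
    if not posture.tracks_oecd_updates:
        gaps.append("No owner tracking OECD AI Principles updates")
    if posture.operates_in_southeast_asia and not posture.uses_singapore_baseline:
        gaps.append(
            "APAC operations lack a Singapore Model Framework or Project Moonshot baseline"
        )
    return gaps


if __name__ == "__main__":
    posture = GovernancePosture(
        vendor_commitments={
            "vendor-a": ["Frontier AI Safety Commitments"],
            "vendor-b": None,  # not yet checked
        },
        tracks_oecd_updates=True,
        operates_in_southeast_asia=True,
    )
    for gap in open_questions(posture):
        print("-", gap)
```

The point of the structure is the `None` value: an unverified vendor is recorded as a distinct state rather than being silently treated as "no commitments".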

The map from the first article in this series has three layers. You now have all three.

The technical standards layer gives you the operational tools: NIST for shared vocabulary and risk documentation, ISO 42001 for external verification, IEEE for upstream values traceability.

The binding law layer gives you the deadlines: February 2025 for prohibited practices, August 2025 for GPAI obligations, August 2026 for full high-risk system compliance.

The international coordination layer gives you the signal: the conceptual vocabulary your next compliance cycle will be written in, the risk categories likely to acquire enforcement mechanisms, and the interoperability architecture that determines how much of your compliance work can carry across jurisdictions.

None of the three layers is optional if you are building or deploying AI systems that touch users at scale. The question is only which layer you read first, and whether you read it before or after the deadline it is pointing toward arrives.

Fact-Check Appendix

Statement: 47 governments have adhered to the OECD AI Principles as of May 2024, including the EU and all 38 OECD member countries. | Source: OECD, “OECD Updates AI Principles to Stay Abreast of Rapid Technological Developments,” 3 May 2024 | https://www.oecd.org/en/about/news/press-releases/2024/05/oecd-updates-ai-principles-to-stay-abreast-of-rapid-technological-developments.html

Statement: The Bletchley Declaration was signed by 28 countries plus the EU on 1 November 2023. | Source: UK Government (DSIT), “The Bletchley Declaration by Countries Attending the AI Safety Summit, 1-2 November 2023” | https://www.gov.uk/government/publications/ai-safety-summit-2023-the-bletchley-declaration/the-bletchley-declaration-by-countries-attending-the-ai-safety-summit-1-2-november-2023

Statement: The International AI Safety Report was chaired by Yoshua Bengio, drew on 100 AI experts from 30 nations plus the UN and OECD, and was delivered in final form in January 2025. | Source (final report): arXiv, International AI Safety Report (final), January 2025 | https://arxiv.org/abs/2501.17805 | Source (Bengio chairing commission): UK Government Chair’s Statement, 2 November 2023 | https://www.gov.uk/government/publications/ai-safety-summit-2023-chairs-statement-2-november/chairs-summary-of-the-ai-safety-summit-2023-bletchley-park

Statement: The UK AI Safety Institute was initially backed with £100 million in government funding. | Source: UK Government (DSIT), “Introducing the AI Safety Institute,” January 2024 | https://www.gov.uk/government/publications/ai-safety-institute-overview/introducing-the-ai-safety-institute

Statement: AISI is not a regulator and is ultimately not responsible for release decisions made by the parties whose systems it evaluates. | Source: UK Government (DSIT), “AI Safety Institute Approach to Evaluations,” February 2024 | https://www.gov.uk/government/publications/ai-safety-institute-approach-to-evaluations/ai-safety-institute-approach-to-evaluations

Statement: The Seoul Declaration was signed by 10 countries and the EU on 21 May 2024; the Seoul Ministerial Statement was signed by 27 countries and the EU on 22 May 2024. | Source: Republic of Korea MoFA, Seoul Declaration; UK Government, Seoul Ministerial Statement | https://www.mofa.go.kr/eng/brd/m_5674/view.do?page=1&seq=321007 and https://www.gov.uk/government/publications/seoul-ministerial-statement-for-advancing-ai-safety-innovation-and-inclusivity-ai-seoul-summit-2024

Statement: 16 AI technology companies signed the Frontier AI Safety Commitments at the Seoul Summit. | Source: UK Government press release, “New Commitment to Deepen Work on Severe AI Risks Concludes AI Seoul Summit,” 22 May 2024 | https://www.gov.uk/government/news/new-commitmentto-deepen-work-on-severe-ai-risks-concludes-ai-seoul-summit

Statement: China did not sign the Seoul Ministerial Statement. | Source: UK Government, Seoul Ministerial Statement signatory list, 22 May 2024 | https://www.gov.uk/government/publications/seoul-ministerial-statement-for-advancing-ai-safety-innovation-and-inclusivity-ai-seoul-summit-2024

Statement: The ASEAN Guide on AI Governance and Ethics, which draws on Singapore’s Model Framework, was endorsed by all 10 ASEAN member states at the 4th ASEAN Digital Ministers’ Meeting on 2 February 2024. | Source: ASEAN Secretariat, “ASEAN Guide on AI Governance and Ethics,” February 2024 | https://asean.org/book/asean-guide-on-ai-governance-and-ethics/

Statement: The AI Verify Foundation grew to over 120 members by May 2024, including AWS, Dell, IBM, Microsoft, Meta, and Huawei. | Source: Singapore IMDA, “Singapore Launches Project Moonshot” (press release), 31 May 2024 | https://www.imda.gov.sg/resources/press-releases-factsheets-and-speeches/press-releases/2024/sg-launches-project-moonshot

Statement: IMDA and US NIST completed a joint mapping exercise between AI Verify and the NIST AI RMF in October 2023. | Source: Singapore IMDA, “Project Moonshot, powered by AI Verify, and AI Collaborations” (factsheet), 31 May 2024 | https://www.imda.gov.sg/resources/press-releases-factsheets-and-speeches/factsheets/2024/project-moonshot

Statement: Third-party reporting voluntary commitment compliance scored an average of 34.4% across AI companies assessed, with eight companies scoring zero on that dimension. | Source: AAAI AIES 2024 Proceedings, “Do AI Companies Make Good on Voluntary Commitments to the White House?” | https://ojs.aaai.org/index.php/AIES/article/download/36743/38881/40818

Statement: The Hiroshima Process International Code of Conduct contains 11 commitments and explicitly states it builds on the existing OECD AI Principles. | Source: G7 (Japan Presidency), “Hiroshima Process International Code of Conduct for Organizations Developing Advanced AI Systems,” 30 October 2023 | https://www.mofa.go.jp/files/100573473.pdf

Statement: The Council of Europe Framework Convention on Artificial Intelligence, Human Rights, Democracy, and the Rule of Law was opened for signature in 2024 and covers 46 Council of Europe member states. | Source: Council of Europe, Framework Convention on AI | https://www.coe.int/en/web/artificial-intelligence/the-framework-convention-on-artificial-intelligence

Top 5 Prestigious Sources

OECD, Recommendation of the Council on Artificial Intelligence (OECD AI Principles, 2019, updated May 2024) | https://www.oecd.org/en/topics/ai-principles.html

UK Government (DSIT), “The Bletchley Declaration,” 1 November 2023 | https://www.gov.uk/government/publications/ai-safety-summit-2023-the-bletchley-declaration/the-bletchley-declaration-by-countries-attending-the-ai-safety-summit-1-2-november-2023

G7 (Japan Presidency), “Hiroshima Process International Code of Conduct for Organizations Developing Advanced AI Systems,” 30 October 2023 | https://www.mofa.go.jp/files/100573473.pdf

Singapore PDPC/IMDA, “Model AI Governance Framework, Second Edition,” January 2020 | https://www.pdpc.gov.sg/help-and-resources/2020/01/model-ai-governance-framework

AAAI AIES 2024 Proceedings, “Do AI Companies Make Good on Voluntary Commitments to the White House?” | https://ojs.aaai.org/index.php/AIES/article/download/36743/38881/40818

Peace. Stay curious! End of transmission.