LiteLLM Supply Chain Attack: Why Your AI Stack Is Vulnerable

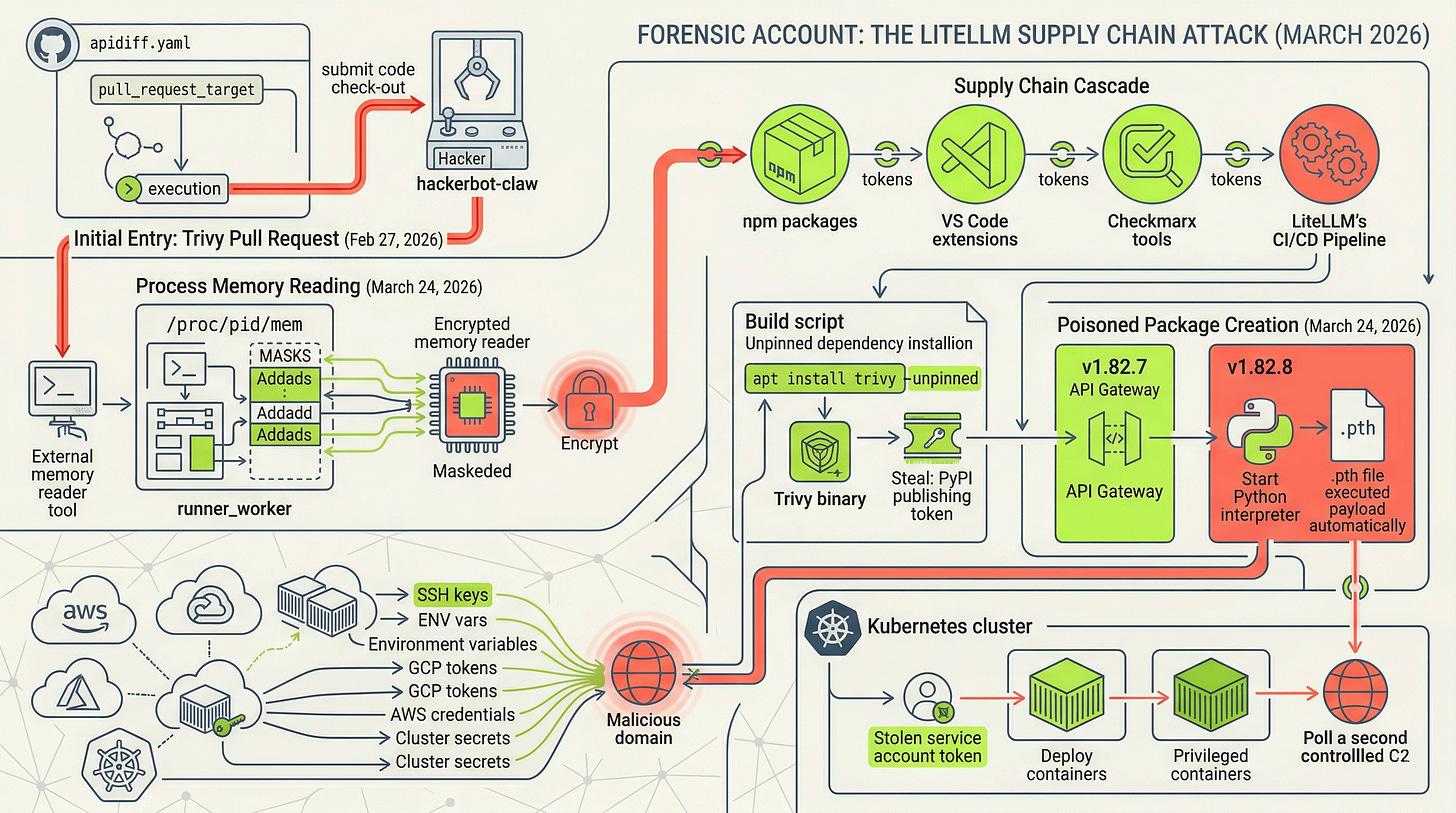

An analysis of the March 2026 LiteLLM supply chain breach. Learn why model security fails when the infrastructure and supply chain remain unexamined.

Disclaimer

A note before we start: I use LiteLLM daily. I have recommended it in previous articles. I think it is genuinely good software built by a team that responded to this incident with transparency and speed, and I will continue using it. None of that changes the analysis below. If anything, it sharpens it: the organizations most exposed to this attack are exactly the ones that adopted LiteLLM because it is good at what it does. Popularity and architectural centrality are what made it a target. This piece is not a verdict on the project. It is a forensic account of what happened to it, and what that means for everyone building on top of it.

TL;DR

Your AI agent is locked down. You have spent months on prompt injection filters, inference guardrails, and red-teaming the model. But while you were watching the front door, the attacker walked through the loading dock. In March 2026, the LiteLLM supply chain attack proved that the most dangerous threat to your AI stack does not need to understand a single line of AI code. By compromising a routine security scanner and exploiting unpinned dependencies, threat actors gained the keys to every major LLM provider simultaneously. This was not an AI problem: it was a boring, traditional, and devastating infrastructure failure. We are moving at a competitive pace that treats supply chain hygiene as overhead, creating a dependency graph that is wide, shallow, and entirely unexamined. If you are protecting the model but ignoring the pipeline that delivers it, you are defending the wrong fortress.

The Itch: Why This Matters Right Now

It started with a frozen laptop.

Callum McMahon, a research scientist at FutureSearch AI, was working through a routine session when his 48GB Mac began behaving badly. Processes that should have opened in milliseconds were taking ten seconds. CPU was pegged. The machine was drowning in work it hadn’t been asked to do.

He hard reset it, opened a Docker container, and started pulling the thread.

What he found was that a Cursor MCP plugin had pulled in a version of LiteLLM as a transitive dependency, and that version was running a fork bomb. A malicious file installed alongside the package was spawning child Python processes that each spawned more child Python processes, each triggering the same file. Exponential. Uncontrolled. The entire machine was the collateral damage.

The fork bomb was a bug in the malware. A side effect the attacker hadn’t intended. Which means that without that bug, the credential stealer running underneath would have executed silently, shipped its payload, and left no visible symptom. You would never have known.

McMahon reported it to PyPI. The packages were quarantined within 46 minutes of the first upload. But quarantine on PyPI does not reach into pip caches, Docker image layer histories, or CI pipeline artifact stores that had already downloaded the package before that action fired. LiteLLM’s own incident report puts the full exposure window at 10:39 UTC to 16:00 UTC, a little over five hours. FutureSearch’s analysis of PyPI’s public download logs shows approximately 47,000 downloads of the malicious versions inside the quarantine window alone. The broader exposure is still being measured.

Here is the thing that matters for you, whether you are building AI applications, running security for an organization that uses them, or advising teams that do: not a single line of AI code was involved in this attack. No model was touched. No prompt was injected. No inference endpoint was exploited. The attacker needed to understand nothing about large language models to get inside the infrastructure managing them.

That is the point of this piece. And the question it raises is not what your AI security stack does to protect the model. It is what it does to protect the infrastructure the model never sees.

The Deep Dive: The Struggle for a Solution

LiteLLM is the universal translator of the AI development stack.

Give it a request, and it routes it to whichever LLM provider you have configured: OpenAI, Anthropic, Azure OpenAI, Google Vertex AI, AWS Bedrock. It normalizes the API formats, tracks costs, handles retries, and manages the keys for all of them simultaneously. Wiz Research reports that LiteLLM appears in 36% of monitored cloud environments. But that figure needs unpacking before it becomes real.

A compromised LiteLLM deployment does not hand an attacker a single set of cloud credentials. It hands them the API keys for every LLM provider the organization uses, in one grab. OpenAI, Anthropic, Azure OpenAI, Google Vertex AI, AWS Bedrock: simultaneously, from one package, in one environment. For a CISO, the blast radius question is not “did someone get into our cloud account?” It is “did someone get into all of our AI providers at once?”

That is the target. Now let’s follow the attackers.

This incident belongs to a recognizable lineage. In 2020, NOBELIUM compromised SolarWinds’ Orion build pipeline to distribute a backdoor inside a signed, legitimate software update. In 2024, a threat actor spent two years building maintainer trust in the XZ Utils compression library before inserting a backdoor into the build artifacts, not the source code. In 2026, TeamPCP harvested a PyPI publishing token from a CI/CD pipeline and uploaded malicious packages directly to the official registry. The structural constant across all three: the attacker’s target was not application code. It was the mechanism by which trusted code reaches production. The attack surface was the supply chain, and the entry point was the infrastructure organizations trust automatically.

The Entry Point Nobody Fixed

The campaign did not begin with LiteLLM. It began twenty-five days earlier with Trivy, Aqua Security’s open-source vulnerability scanner. Trivy is the tool that tells you whether your containers and code have security problems. The irony is surgical.

On February 27, 2026, an autonomous bot registered under the name hackerbot-claw submitted a pull request to Trivy’s GitHub repository. The pull request exploited a GitHub Actions workflow trigger called pull_request_target. To understand why that matters, you need to understand what that trigger actually does, and more importantly, what it does when combined with two other completely ordinary workflow steps.

GitHub Actions workflows respond to different events. For a pull request from a fork, the standard pull_request trigger runs the workflow with a read-only token and no access to the base repository’s secrets. It is, by design, unprivileged. The pull_request_target trigger is different. It runs workflow code in the context of the base repository, granting access to everything the repository owns: its secrets, its tokens, its deployment credentials. The intended use case is legitimate: sometimes a workflow needs base repository permissions to post a comment or update a status check in response to a pull request from an external contributor.

The danger arrives in three steps, and all three have to be present.

Step one: a workflow uses pull_request_target, which grants base repository privilege to the workflow runtime.

Step two: that same workflow checks out code from the pull request head, fetching the submitter’s code into the runner environment.

Step three: the workflow executes that checked-out code, whether through a build step, a test runner, a linting script, or anything else that invokes it.

At that point, attacker-controlled code is running inside a privileged context it did not earn. The sandbox is gone. The keys are on the table. Trivy’s “API Diff Check” workflow (apidiff.yaml) had all three steps. Hackerbot-claw’s pull request supplied the malicious code that step three executed.
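The three-step combination can be checked for mechanically. What follows is a minimal sketch, not the workflow Trivy actually had: the function name, the regular expressions, and the example workflow text are all illustrative assumptions, and a text heuristic like this will miss variants that a real YAML-aware audit tool would catch.

```python
import re

def pwn_request_signals(workflow_text: str) -> dict:
    """Report which of the three risky ingredients appear in a workflow file."""
    # Step one: the privileged trigger.
    privileged_trigger = re.search(r"\bpull_request_target\b", workflow_text) is not None
    # Step two: the pull request head is checked out (heuristic: head ref or SHA is referenced).
    checks_out_head = re.search(
        r"github\.event\.pull_request\.head\.(sha|ref)|github\.head_ref", workflow_text
    ) is not None
    # Step three: some `run:` step exists that could execute the checked-out code.
    runs_code = re.search(r"^\s*(-\s*)?run\s*:", workflow_text, re.MULTILINE) is not None
    return {
        "privileged_trigger": privileged_trigger,
        "checks_out_head": checks_out_head,
        "runs_code": runs_code,
        "all_three": privileged_trigger and checks_out_head and runs_code,
    }

# Hypothetical workflow text, written for this example only.
EXAMPLE_WORKFLOW = """\
on: pull_request_target
jobs:
  diff:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          ref: ${{ github.event.pull_request.head.sha }}
      - run: make api-diff
"""

print(pwn_request_signals(EXAMPLE_WORKFLOW))
```

A hit on all three signals is a prompt for manual review, not proof of exploitability. The value is in making the combination visible in a repository where each step looks ordinary on its own.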

Now here is the detail most coverage of this incident skips, and it matters for how you think about your own exposure.

The malicious code did not simply read environment variables. That would have been bad enough. What it actually did was read GitHub Actions Runner Worker process memory directly, using the Linux /proc/<pid>/mem interface, which exposes a running process’s memory as a file. This is the memory space where the GitHub Actions runtime stores secrets internally, including secrets marked as masked in the UI. Masking prevents a secret from appearing in log output. It does nothing to prevent a process with sufficient permissions from reading the memory address where that secret lives.

The practical implication: organizations that rely on “we do not print secrets to logs” as a sufficient control are still fully exposed to this attack class. The attacker does not need the secret to appear in a log or to be explicitly referenced in the workflow. They need code execution in the right context, access to the runner’s process list, and the /proc/<pid>/mem read. That is it. A Personal Access Token was exfiltrated to a domain the attacker controlled within minutes of the workflow firing.
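To see why masking is not a confidentiality control, it helps to look at what a log masker does in principle. The sketch below is a toy model under stated assumptions, not GitHub's implementation: the function name and the placeholder secret are invented for illustration.

```python
def mask_for_logs(line: str, secrets: list[str]) -> str:
    """Replace each known secret value with *** before a line is written to a log."""
    for value in secrets:
        if value:
            line = line.replace(value, "***")
    return line

# Hypothetical placeholder, not a real credential.
secret_value = "example-secret-value"

logged = mask_for_logs(f"publishing with token {secret_value}", [secret_value])
print(logged)

# The masker only rewrote the output string. The original value is still held
# in this process's memory, which is exactly what a memory read targets.
print(secret_value in logged)
print(len(secret_value) > 0)
```

The filter sits on the output path. Anything that reads the process itself, rather than its logs, never passes through it.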

This attack class is documented. GitHub’s own security guidance covers it. The security research community calls it the “Pwn Request.” StepSecurity’s CI/CD analysis identifies it as one of the most common misconfigurations across public repositories, not because it is obscure but because pull_request_target is genuinely useful, its danger is non-obvious, and the three-step combination is easy to write without recognizing what you have built. Trivy, a security tool, had this pattern in its own codebase. That is not a criticism of the Trivy team. It is a statement about how normalized the misconfiguration has become.

Now, the detail that has not appeared in most coverage of this incident, and which carries a caveat worth stating plainly: multiple researchers who analyzed the early campaign reported that hackerbot-claw was not a human manually submitting pull requests. It was an autonomous AI agent, and some accounts specifically name the model behind it. If accurate, the implication lands with some weight. An AI-powered bot exploited a documented CI/CD misconfiguration to steal the credentials that ultimately compromised AI infrastructure. The security tool got owned. The tool that owned it may have been AI. The infrastructure it eventually reached manages AI. The irony is almost too neat, which is part of why the attribution deserves the caveat: it comes from sources whose methodology is not fully disclosed, and the claim has not been confirmed by any government advisory or law enforcement body. Treat it as reported, not settled.

What is settled: the account was created eight days before the attack. The exploit was precise, automated, and reused across at least seven other open-source repositories in the same campaign window. Whether the operator behind it used AI tooling or wrote the bot by hand, the capability gap between “knows about pull_request_target” and “can weaponize it at scale” is narrowing. That is the part worth carrying forward.

Aqua Security disclosed the breach on March 1 and rotated credentials. But the rotation was not atomic. Old and new credentials existed simultaneously for several days. The attacker retained access through that window.

Five Days of Cascading Trust

By March 19, the threat actor group that Datadog Security Labs tracks as TeamPCP had reactivated their access to Aqua’s infrastructure. They pushed a malicious Trivy binary to official distribution channels: GitHub Releases, Docker Hub, container registries, package repositories. In parallel, they force-pushed 76 of 77 version tags in the trivy-action GitHub Action repository. Any pipeline referencing Trivy by version tag now silently ran attacker code.

This is where something subtle matters. Git tags are not immutable. A tag like @v0.28.0 is a label. Anyone with push access can reassign that label to point to a completely different commit. No new release, no branch change, nothing visible in the workflow file itself. The pipeline looks identical. The code it runs is not.
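One way to find out how exposed a repository is to mutable tags is to list every action reference that is not pinned to a full commit SHA. This is a rough sketch: the function name, the regular expressions, and the example action names are assumptions made for illustration, and the check is a text heuristic rather than a full workflow parser.

```python
import re

USES_LINE = re.compile(r"^\s*(?:-\s*)?uses\s*:\s*([^\s#]+)", re.MULTILINE)
FULL_SHA = re.compile(r"^[0-9a-f]{40}$")

def unpinned_actions(workflow_text: str) -> list[str]:
    """Return `uses:` references that are not pinned to a 40-character commit SHA."""
    flagged = []
    for ref in USES_LINE.findall(workflow_text):
        if ref.startswith("./") or ref.startswith("docker://"):
            continue  # local actions and container images are out of scope for this sketch
        _, _, version = ref.partition("@")
        if not FULL_SHA.match(version):
            flagged.append(ref)
    return flagged

# Hypothetical workflow text; the organization and action names are made up.
EXAMPLE_WORKFLOW = """\
steps:
  - uses: example-org/scanner-action@v0.28.0
  - uses: example-org/pinned-action@0123456789abcdef0123456789abcdef01234567
  - uses: ./local-action
"""

print(unpinned_actions(EXAMPLE_WORKFLOW))
```

Anything this reports is a reference whose meaning can change without the workflow file changing, which is the property the tag rewrite depended on.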

The malicious Trivy action read GitHub Actions runner memory directly, sweeping for the pattern GitHub uses internally to store masked secrets. It collected everything, encrypted it, and shipped it out. When direct exfiltration failed, it created a public GitHub repository using the victim’s own token, uploaded the stolen data there, and walked away.

Over the next three days, those stolen tokens cascaded. Compromised npm credentials seeded a self-propagating worm across at least 64 packages. Stolen GitHub tokens reached Checkmarx’s scanning tools and VS Code extensions. Each victim’s infrastructure became the entry point for the next.

On March 24, LiteLLM’s build pipeline ran its routine security scan. The pipeline’s shell script installed Trivy using the apt package manager without a pinned version, pulling whatever was latest from Aqua Security’s distribution channel. The latest version was the compromised binary. It ran inside GitHub Actions with full runner permissions, found the PyPI publishing token in runner memory, and sent it home.

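With that token, the attacker uploaded the first malicious package, version 1.82.7, to PyPI at 10:39 UTC. Thirteen minutes later, a second malicious package, version 1.82.8, was uploaded.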

Two Versions, One Important Difference

Version 1.82.7 embedded its payload inside the module that runs LiteLLM’s API gateway. The malicious code executed whenever anything imported that module. If you were running LiteLLM as a proxy, the payload ran.

Version 1.82.8 was more aggressive. It added a .pth file to the Python site-packages directory. Python’s site module reads these files every time the interpreter starts and executes any line that begins with import, regardless of what the application itself imports. Any Python process, whether running LiteLLM, pip, pytest, or an IDE’s background language server, triggered the credential stealer. The file was correctly declared in the package’s own manifest with a matching checksum. Standard hash verification passed. The package was exactly what PyPI advertised. It was just not safe.
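Because .pth files run before any application code, it is worth knowing which ones in an environment contain executable lines at all. The following is a small audit sketch, assuming only the documented behavior of Python's site module (lines starting with import followed by a space or tab are executed). The function name and the demo file names are invented for the example.

```python
import tempfile
from pathlib import Path

def executable_pth_lines(site_dir: Path) -> dict[str, list[str]]:
    """Map each .pth file in site_dir to the lines the site module would execute."""
    findings = {}
    for pth in sorted(site_dir.glob("*.pth")):
        lines = pth.read_text(encoding="utf-8", errors="replace").splitlines()
        # Other non-comment lines are treated as paths to append to sys.path.
        hits = [ln for ln in lines if ln.startswith(("import ", "import\t"))]
        if hits:
            findings[pth.name] = hits
    return findings

# Demo against a throwaway directory so the example is deterministic.
with tempfile.TemporaryDirectory() as tmp:
    demo_dir = Path(tmp)
    (demo_dir / "paths_only.pth").write_text("/opt/example/lib\n")
    (demo_dir / "runs_code.pth").write_text("import os; os.getpid()\n")
    print(executable_pth_lines(demo_dir))
```

To audit a real environment, the same function can be called on each directory returned by site.getsitepackages(). Some legitimate packages do ship executable .pth lines, so a hit is something to read, not something to delete on sight.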

This is where the MCP angle connects directly to something I covered in an earlier article, “The Protocol That Wired Your AI Agent to the World (And Left the Door Unlocked)”, which examined the trust assumptions baked into MCP plugin architectures. McMahon’s machine was exposed not through a deliberate pip install litellm command. LiteLLM arrived as a transitive dependency through a Cursor MCP plugin. He never chose it. The plugin did. If you are building or using MCP servers, your dependency graph now includes everything those servers pull in, pinned or not. That surface is wider than most people realize. Another article, “Can You Trust That Skill?”, raised exactly this question from a different angle: what it means to trust a tool your AI system calls without verifying its integrity. When the tools your AI stack relies on are themselves unverified, the trust you extend to your AI system is only as sound as the weakest link in that chain.

What the Payload Actually Did

The three-stage payload followed a consistent pattern across both versions.

Stage one was collection. SSH keys, environment variables, AWS credentials, GCP service account tokens, Azure secrets, Kubernetes configuration files, database passwords, shell history, git credentials, cryptocurrency wallet files. It also queried cloud metadata endpoints directly, meaning workloads running on cloud instances handed over their instance credentials as well.

Stage two was exfiltration. The collected material was compressed, encrypted with AES-256-CBC using a session key protected by a hardcoded 4096-bit RSA public key, and shipped via HTTPS to a domain designed to look like legitimate LiteLLM infrastructure. It was not.

Stage three was persistence. If a Kubernetes service account token was present, the malware swept all cluster secrets across all namespaces and deployed a privileged container to every node in the cluster. Each container mounted the host filesystem and installed a backdoor service that polled a second attacker-controlled domain every 50 minutes for new instructions.
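The persistence stage leaves a recognizable shape in a cluster: a privileged container with the host filesystem mounted. A defensive check for that shape might look like the sketch below. It assumes pod manifests are already loaded as Python dictionaries, it treats a hostPath of "/" as "the host filesystem" (an assumption, since the exact mount path is not stated here), and the function and pod names are hypothetical.

```python
def looks_like_node_takeover(pod: dict) -> bool:
    """True if a pod has a privileged container and mounts the host root via hostPath."""
    spec = pod.get("spec", {})
    privileged = any(
        (container.get("securityContext") or {}).get("privileged") is True
        for container in spec.get("containers", [])
    )
    mounts_host_root = any(
        (volume.get("hostPath") or {}).get("path") == "/"
        for volume in spec.get("volumes", [])
    )
    return privileged and mounts_host_root

# Hypothetical manifests for illustration.
suspicious_pod = {
    "metadata": {"name": "example-node-agent"},
    "spec": {
        "containers": [{"name": "agent", "securityContext": {"privileged": True}}],
        "volumes": [{"name": "host", "hostPath": {"path": "/"}}],
    },
}
ordinary_pod = {
    "metadata": {"name": "example-app"},
    "spec": {
        "containers": [{"name": "app"}],
        "volumes": [{"name": "config", "configMap": {"name": "app-config"}}],
    },
}

print(looks_like_node_takeover(suspicious_pod))
print(looks_like_node_takeover(ordinary_pod))
```

Legitimate node agents sometimes match this pattern too, so the useful output is the list of pods nobody on the team can account for.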

Of the 2,337 packages on PyPI that listed LiteLLM as a dependency, 88% had version specifications broad enough to receive the compromised versions. And 23,142 pip installs of version 1.82.8 executed the malicious payload during the installation process itself, before any application code ran.
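Whether a given dependency declaration would have admitted the compromised releases is easy to test. The sketch below is deliberately simplified: it handles only plain numeric versions and the basic comparison operators, not the full Python version specifier grammar, and the function names are made up for this example. The 2,054 figure comes from the FutureSearch analysis cited in the appendix.

```python
import re

CLAUSE = re.compile(r"(==|!=|>=|<=|>|<)\s*([0-9]+(?:\.[0-9]+)*)")
COMPROMISED = ("1.82.7", "1.82.8")

def parse_version(text: str) -> tuple[int, ...]:
    return tuple(int(part) for part in text.split("."))

def admits(specifier: str, version: str) -> bool:
    """Check a comma-separated specifier such as '>=1.40,<2' against an X.Y.Z version."""
    target = parse_version(version)
    for clause in (c.strip() for c in specifier.split(",")):
        if not clause:
            continue  # an empty specifier places no constraint at all
        match = CLAUSE.fullmatch(clause)
        if match is None:
            raise ValueError(f"unsupported clause: {clause!r}")
        op, bound_text = match.groups()
        bound = parse_version(bound_text)
        # Pad with zeros so that 1.82 compares like 1.82.0.
        width = max(len(target), len(bound))
        t = target + (0,) * (width - len(target))
        b = bound + (0,) * (width - len(bound))
        ok = {"==": t == b, "!=": t != b, ">=": t >= b,
              "<=": t <= b, ">": t > b, "<": t < b}[op]
        if not ok:
            return False
    return True

def exposed(specifier: str) -> bool:
    return any(admits(specifier, version) for version in COMPROMISED)

for spec in (">=1.40", ">=1.0,<2.0", "==1.82.6", "<=1.82.6", ""):
    print(repr(spec), exposed(spec))

# The reported share: 2,054 of 2,337 dependent packages.
print(round(2054 / 2337 * 100))
```

The two declarations that stay safe in the loop are the ones that name an exact earlier version or cap below 1.82.7. Everything looser, including no constraint at all, resolves to whatever is newest on the day of install.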

The Organizational Failure Underneath

None of the individual failures here are exotic. An unpinned tool install in a shell script. A long-lived API token stored as an environment variable. A version tag reference in a GitHub Actions workflow. Non-atomic credential rotation after a known breach.

Each of these is documented. Each has a known fix. The reason they compounded is that AI development teams have been moving at a pace where supply chain hygiene is treated as overhead. Dependencies get added, version constraints get loosened, CI scripts get copy-pasted. In the AI ecosystem specifically, where iteration speed is treated as a competitive advantage, this has produced a dependency graph that is wide, shallow in its pinning, and almost entirely unexamined at the install boundary.

The Resolution: Your New Superpower

Start with the budget question, because that is where this incident actually lands.

Many organizations running AI applications have allocated security investment toward model-level risks: prompt injection, inference guardrails, safety tooling, red-teaming against the model itself. Those investments matter. They also did not touch a single layer of this attack. The shell script, the environment variable, the version tag in the GitHub Actions workflow: that is where the exposure happened. If your AI security posture is strong at the model layer and unexamined at the supply chain layer, you have protected the part of the stack that was not targeted.

The technical controls that would have broken each link in this chain fall into three categories: what you install and how you verify it, how you authenticate publishing credentials, and what runtime permissions you grant by default.

Pin your tools by hash, not by version number. A Trivy installation that specifies a version string still pulls whatever binary corresponds to that version from the configured repository. An installation that verifies a SHA-256 hash fails loudly if the binary has changed. The same logic applies to GitHub Actions: pin to a full 40-character commit SHA rather than a version tag. A SHA refers to a specific, immutable state of the repository. A version tag refers to whatever the maintainer last pointed it at.
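Hash pinning for a downloaded tool comes down to a few lines. This is a minimal sketch using only the standard library; the function names are illustrative. One caveat carries over from the 1.82.8 case above: the expected hash must be recorded from a release you already trust and stored separately, because a hash fetched from the same compromised channel as the file will match the compromised file.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path

def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-256 and return the hex digest."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_download(path: Path, expected_sha256: str) -> None:
    """Raise if the file on disk does not match the pinned digest."""
    actual = sha256_of(path)
    if not hmac.compare_digest(actual, expected_sha256.lower()):
        raise RuntimeError(f"hash mismatch for {path.name}: got {actual}")

# Demo with a throwaway file standing in for a downloaded binary.
with tempfile.TemporaryDirectory() as tmp:
    artifact = Path(tmp) / "example-tool.bin"
    artifact.write_bytes(b"known-good release contents")
    pinned = sha256_of(artifact)          # recorded once, from a trusted release
    verify_download(artifact, pinned)     # passes silently

    artifact.write_bytes(b"swapped contents")
    try:
        verify_download(artifact, pinned)
    except RuntimeError as error:
        print("blocked:", error)
```

In a CI script the verification step would sit between the download and the first execution of the tool, so a changed binary stops the job instead of running inside it.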

Replace long-lived publishing tokens with PyPI’s Trusted Publishers integration. Instead of storing a static secret, the workflow proves its identity with a short-lived OIDC token and receives a short-lived, project-scoped upload credential in exchange. A credential lifted from runner memory expires within minutes instead of staying valid until someone remembers to rotate it. The attacker found a long-lived static token in runner memory. Under Trusted Publishers, there is no long-lived token to find.

At the install boundary, automated scanning tools detected both malicious LiteLLM versions within seconds of publication. Organizations that had install-time tooling in the path were protected before they knew there was anything to be protected from.

For Kubernetes, the lateral movement stage depends entirely on finding an automounted service account token. Setting automountServiceAccountToken to false on service accounts by default, and granting API access only to workloads that provably require it, removes the mechanism the payload relied on.
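Kubernetes resolves the automount setting from two places, which makes it easy to believe a token is disabled when it is not. The sketch below models the documented precedence (the pod-level field overrides the service account field, and the default is to mount) over manifests loaded as dictionaries. The function name and the example manifests are assumptions for illustration.

```python
def token_is_automounted(pod_spec: dict, service_account: dict) -> bool:
    """Resolve automountServiceAccountToken: pod spec first, then service account, then default."""
    for source in (pod_spec, service_account):
        value = source.get("automountServiceAccountToken")
        if value is not None:
            return bool(value)
    return True  # Kubernetes mounts the token when neither object sets the field

# Hypothetical manifests.
hardened_account = {"automountServiceAccountToken": False}
default_account = {}

print(token_is_automounted({}, default_account))
print(token_is_automounted({}, hardened_account))
print(token_is_automounted({"automountServiceAccountToken": True}, hardened_account))
```

The third case is the one to watch for in review: a workload can opt back in at the pod level, so a hardened service account narrows the default but does not replace an audit of individual pod specs.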

If you do only one thing, replace static publishing tokens with OIDC-based Trusted Publishers. It removes the credential class the attacker relied on entirely, and it costs nothing to implement.

None of these controls are expensive. None require novel security research. What they require is treating the pipeline that delivers your AI infrastructure with the same scrutiny you apply to the AI infrastructure itself.

The model never knew a thing.

Fact-Check Appendix

Statement: LiteLLM is present in 36% of cloud environments. | Source: Wiz Security Research, “Three’s a Crowd: TeamPCP Trojanizes LiteLLM in Continuation of Campaign” | https://www.wiz.io/blog/threes-a-crowd-teampcp-trojanizes-litellm-in-continuation-of-campaign

Statement: PyPI quarantined the malicious packages within 46 minutes of the first upload; FutureSearch’s BigQuery analysis of PyPI download logs shows approximately 46,996 downloads within that quarantine window. | Source: FutureSearch AI, “LiteLLM Hack: Were You One of the 47,000?” | https://futuresearch.ai/blog/litellm-hack-were-you-one-of-the-47000/

Statement: LiteLLM’s official report places the full exposure window at 10:39 UTC to 16:00 UTC on March 24, 2026. | Source: LiteLLM / BerriAI, “Security Update: Suspected Supply Chain Incident” | https://docs.litellm.ai/blog/security-update-march-2026

Statement: Of 2,337 PyPI packages depending on LiteLLM, 2,054 (88%) had version specifications that allowed the compromised versions. | Source: FutureSearch AI, “LiteLLM Hack: Were You One of the 47,000?” | https://futuresearch.ai/blog/litellm-hack-were-you-one-of-the-47000/

Statement: Version 1.82.7 was uploaded at 10:39:24 UTC and version 1.82.8 at 10:52:19 UTC on March 24, 2026, a 13-minute gap indicating live attacker iteration. | Source: Endor Labs, “TeamPCP Isn’t Done” | https://www.endorlabs.com/learn/teampcp-isnt-done

Statement: 23,142 pip installs of version 1.82.8 executed the malicious payload during the installation process itself, before any application code ran. | Source: FutureSearch AI, “LiteLLM Hack: Were You One of the 47,000?” | https://futuresearch.ai/blog/litellm-hack-were-you-one-of-the-47000/

Statement: Version 1.82.8’s .pth file was correctly declared in the wheel’s RECORD file with a matching SHA-256 hash; pip install with hash verification would have passed without error. | Source: Snyk, “How a Poisoned Security Scanner Became the Key to Backdooring LiteLLM” | https://snyk.io/articles/poisoned-security-scanner-backdooring-litellm/

Statement: Trivy-action version tags, 76 of 77, were force-pushed to malicious commits on March 19, 2026. | Source: Datadog Security Labs, “LiteLLM compromised on PyPI: Tracing the March 2026 TeamPCP supply chain campaign” | https://securitylabs.datadoghq.com/articles/litellm-compromised-pypi-teampcp-supply-chain-campaign/

Statement: The non-atomic credential rotation on March 1 allowed the attacker to retain access through the rotation window, enabling the March 19 attack. | Source: Aqua Security, “Update: Ongoing Investigation and Continued Remediation” | https://www.aquasec.com/blog/trivy-supply-chain-attack-what-you-need-to-know/

Statement: At least 64 npm packages were compromised through the CanisterWorm self-propagating worm seeded by stolen npm tokens. | Source: Datadog Security Labs | https://securitylabs.datadoghq.com/articles/litellm-compromised-pypi-teampcp-supply-chain-campaign/

Statement: CVE-2026-33634 was assigned a CVSS score of 9.4 and classified under CWE-506 (Embedded Malicious Code). | Source: NVD/NIST, CVE-2026-33634 | https://nvd.nist.gov/vuln/detail/CVE-2026-33634

Top 5 Sources

Datadog Security Labs: “LiteLLM compromised on PyPI: Tracing the March 2026 TeamPCP supply chain campaign” | https://securitylabs.datadoghq.com/articles/litellm-compromised-pypi-teampcp-supply-chain-campaign/

FutureSearch AI (Callum McMahon): “Supply Chain Attack in litellm 1.82.8 on PyPI” | https://futuresearch.ai/blog/litellm-pypi-supply-chain-attack/

Wiz Security Research: “Three’s a Crowd: TeamPCP Trojanizes LiteLLM in Continuation of Campaign” | https://www.wiz.io/blog/threes-a-crowd-teampcp-trojanizes-litellm-in-continuation-of-campaign

Endor Labs (Kiran Raj): “TeamPCP Isn’t Done” | https://www.endorlabs.com/learn/teampcp-isnt-done

Snyk: “How a Poisoned Security Scanner Became the Key to Backdooring LiteLLM” | https://snyk.io/articles/poisoned-security-scanner-backdooring-litellm/

Peace. Stay curious! End of transmission.