MCP, the protocol that wired your AI agent to the world (and left the door unlocked)

Explore the security risks of Model Context Protocol (MCP). Learn how AI agents use MCP to access data and the critical vulnerabilities like tool poisoning.

Disclaimer

This article is intended for informational purposes and reflects the state of published research and industry practice as of early 2026. It is not professional security advice. Your specific environment, threat model, and regulatory obligations will shape how these principles apply to your situation.

TL;DR

I remember the day Anthropic dropped the Model Context Protocol. It felt like the “holy grail” moment for everyone building AI agents. We finally had a universal translator that could plug a single model into thousands of tools without the grinding manual labor of custom adapters. The industry went wild: OpenAI, Google, and Microsoft all jumped in within months. But as I started digging into the specification, that excitement turned into a familiar, cold realization: we were handing out universal keycards to every room in the building without checking if the locks were actually installed. This article is my deep dive into the “missing” security layers of MCP. I’ve spent weeks auditing server registries and tracking the first real-world exploits: from tool poisoning that whispers hidden instructions to your agent, to path-traversal breaches that expose thousands of downstream apps. If your agent speaks MCP, it already has the power to act. My goal is to make sure it doesn’t do something you can’t take back before the market wakes up to the risk.

The Itch: Why This Matters Right Now

Picture a new hire on their first day. They show up eager, capable, and ready to work. You hand them a keycard that opens every door in the building. Finance. Legal. The server room. The executive floor. You don’t tell them which rooms to avoid. You assume they’ll figure it out.

That’s essentially what happened when AI agents got MCP.

Launched on November 25, 2024, the Model Context Protocol is the standard that solved one of the most grinding problems in agentic AI: how do you connect one AI system to thousands of tools without writing a custom adapter for every single combination? Before MCP, every integration was a bespoke handshake. You needed a new connector for your calendar, a different one for your code repository, another for your database. Engineers call this the M x N problem. M models times N tools equals an integration sprawl that nobody could afford to maintain.

MCP collapsed that sprawl into a single, universal protocol. It’s built on JSON-RPC 2.0, a battle-tested message format, and it gives AI agents the ability to discover tools, request data, and trigger actions across virtually any service, all through one standardized conversation.

The response from the industry was immediate. OpenAI adopted it in March 2025. Google followed in April. Microsoft in May. Today, the ecosystem counts more than 97 million monthly SDK downloads and over 10,000 servers. The protocol is now governed by the Agentic AI Foundation, a Linux Foundation entity.

Your AI agent almost certainly speaks MCP already.

The question I’ve been sitting with is this: does it know when to keep its mouth shut?

The Deep Dive: The Struggle for a Solution

What MCP Actually Is

Before the villains walk on stage, you need to know who built the theater.

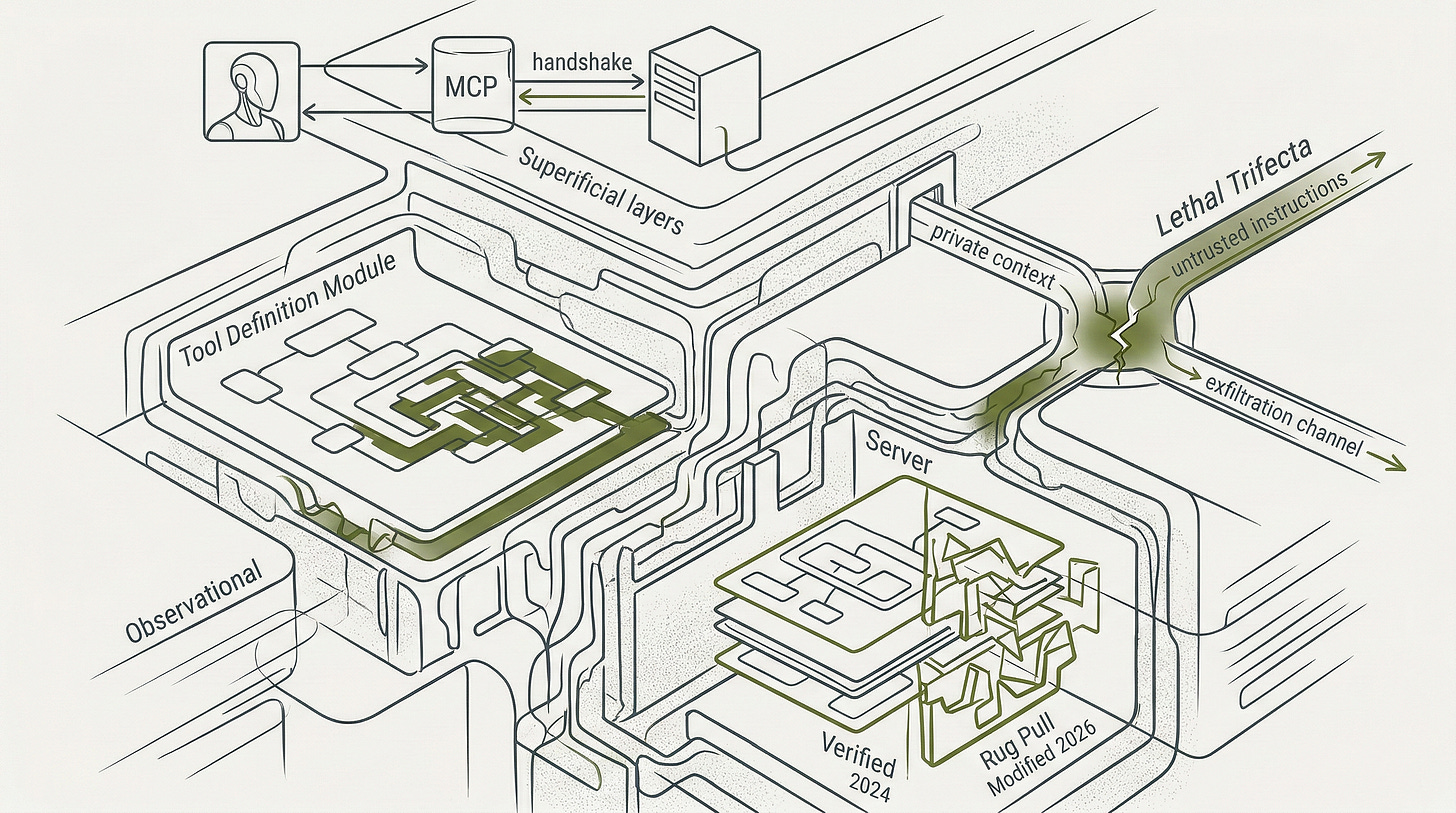

MCP has three characters with very different jobs. The host is the application you interact with: Claude Desktop, VS Code, a custom enterprise chatbot. It’s the director, coordinating everything. The client lives inside the host and manages one dedicated connection per external service. Think of it as a translator working on the director’s behalf, one per conversation partner. The server is the external system being connected: a calendar API, a GitHub repository, a company database. Servers are the ones with the keys to something real.

When an agent session starts, these three characters go through a formal introduction ritual. The client announces what it’s capable of and what protocol version it speaks. The server responds with its own capabilities, its name, and a set of instructions describing what it can do. Both sides shake hands and the session opens.

Here’s the thing about that handshake: the server introduces itself. The client has no independent way to verify the introduction is honest. The protocol records the exchange. It does not authenticate the participants. That asymmetry is where everything interesting, and everything dangerous, begins.

The Keycard That Trusts Everyone

Those three characters interact through three types of server capabilities. Tools are actions the AI model can invoke on its own, things like creating a file or sending a message. Resources are data sources the host application controls and surfaces to the model. Prompts are templated instructions the user explicitly selects. That hierarchy matters, because tools carry the highest autonomy and the most risk.

Here’s the structural problem. MCP’s specification, as of its November 2025 version, does not formally specify a principal hierarchy. The chain from human to orchestrator to subagent to tool is implicit in the design philosophy, but there is no protocol-level mechanism that enforces who can authorize what. Authorization using OAuth 2.1 was added to the spec in March 2025, which represented real progress. But OAuth is marked optional. Stdio transports, the most common local deployment method, are explicitly exempt from the OAuth framework entirely.

What this means in practice: a server can tell a host anything it wants about itself, and the host has no cryptographic way to verify whether the server is who it claims to be. There is no provenance mechanism. When a researcher audited the Glama.ai registry (one of the major MCP server directories), roughly 100 of the 3,500 listed servers linked back to repositories that did not exist.

That gap extends to a distinction that matters enormously in practice: the difference between a first-party and a third-party server has no formal protocol-level representation. When a user installs a third-party stdio server from an untrusted npm package, that server executes locally with full user privileges. The protocol treats it identically to a server built by the same team that built the host. There is no label, no flag, no cryptographic marker that distinguishes the two.

The protocol isn’t lying to you. It simply never promised to check.

Meet the Villains

Security researcher Johann Rehberger gave a name to one of the nastiest attack classes in this ecosystem: tool poisoning. Here’s how it works. A malicious MCP server embeds hidden instructions inside a tool’s description field, the text that tells the AI what the tool is for. The AI reads that description and treats it as legitimate instruction. Rehberger demonstrated this with Unicode tag characters that are invisible to human reviewers but fully readable to the model. The tool says “summarize emails” to the user. It whispers something else entirely to the agent.

Simon Willison, whose meticulous security writing covers this territory in close detail, identified what he calls the lethal trifecta: private data in context, untrusted instructions from an external source, and an available exfiltration channel. Any MCP-connected agent that has access to all three is a loaded scenario. The attacker doesn’t need to breach your perimeter. They need your agent to read a malicious document that contains an instruction, because the agent will often follow it.

Then there’s the rug pull. A server builds trust by behaving responsibly for weeks or months. Then it updates its tool definition to request something far more invasive. Because most MCP clients don’t re-verify server behavior after initial approval, the agent keeps trusting the server. The keycard was legitimate when you handed it out. Nobody checked whether the locks changed.

Cross-server shadowing adds another layer. If you run multiple MCP servers simultaneously, a malicious server can inject instructions telling the AI to use a different, compromised server for a sensitive operation. The AI reroutes without telling you.

The Observability Gap Nobody Talks About

There’s a quieter problem that makes every attack above harder to detect: you often can’t see what your agent did.

MCP’s native logging lets servers emit log messages to the client, following RFC 5424 severity levels, and that is where the protocol’s built-in capability ends. There are no persistent audit trails, no correlation IDs that tie a sequence of tool calls together, no user attribution for specific actions, and no tamper-evident storage. If your agent exfiltrated a document, the protocol won’t tell you. You would need to build that instrumentation yourself, on top of the protocol, in a way the spec does not guide you toward.

A forensic investigation of any of the incidents below would face this constraint first: the protocol itself has no memory of what the agent did.

That silence is the condition every attack below exploits.

The Counter-Positions, Examined Honestly

Four arguments get made in MCP’s defense, and they all deserve direct engagement.

The first is that OAuth 2.1 plus TLS is sufficient. The research says otherwise. Prompt injection through tool descriptions, sampling-based resource theft, and rug pull attacks have no analog in traditional API security. OAuth secures the connection. It does not secure the content of the tool description. These are different attack surfaces.

The second is that MCP’s trust model is fundamentally broken and cannot be fixed. This overstates the case. The protocol is young. Its authorization framework is robust when properly implemented. The Security Enhancement Proposal process is active. The fact that the spec makes authorization optional is a design debt, not an architectural death sentence.

The third is that MCP will be displaced by competitors. Google’s Agent-to-Agent protocol (A2A) was explicitly designed to complement MCP rather than replace it. IBM’s ACP merged into A2A. Both now sit under the same AAIF governance umbrella.

The fourth is that vendor adoption is overstated and the protocol isn’t ready for production. This one is worth taking seriously, because Thoughtworks’ Technology Radar placed MCP in “Trial” status as of November 2025. That designation means something specific: the protocol is worth pursuing for sophisticated teams, but enterprise-wide validation is incomplete. What is also true is that Block, Bloomberg, and Amazon are confirmed production deployers, not pilots or proofs-of-concept. They didn’t get there by treating MCP as production-ready by default. They got there by adding governance layers the spec doesn’t require: allow-lists, OAuth enforcement, external audit logging, and registry vetting. The honest verdict is that MCP is production-ready under governance, and a liability without it. The protocol didn’t ship with those controls. Your deployment has to.

The Resolution: Your New Superpower

The security community caught up to MCP faster than it did to most infrastructure-layer standards. That speed is your advantage.

Here is where to put it to work, structured as a decision you can make right now.

If you run third-party stdio servers, treat every installation as you would an open-source dependency entering a production codebase. Verify the repository exists, is actively maintained, and has a traceable publication history. Cross-reference against the Vulnerable MCP Project tracker before approving any server for use. GitHub’s Lockdown Mode, which strips invisible Unicode characters and sanitizes content from untrusted contributors, is worth enabling for any IDE-integrated MCP setup.

If you run remote HTTP-based servers, OAuth 2.1 is not optional for you, regardless of what the spec says. Implement Authorization Code Grant with PKCE. Enforce token audience binding so that tokens issued for one server cannot be replayed against another. Require Protected Resource Metadata so clients know exactly which authorization server to trust.

If you are deploying MCP without external audit logging, your exposure is real and silent. The protocol’s native log messages are session-scoped and ephemeral. They will not satisfy an incident investigation, a compliance audit, or an EU AI Act Article 12 requirement when that provision comes into force in August 2026. Build OpenTelemetry instrumentation that captures tool call sequences, the identity of the initiating user or process, and the data that was accessed or modified. Do this before your first production incident, not after.

If you have approved any tool descriptions without reviewing them as an adversary would, that review is overdue. Unusual Unicode, conditional logic that references other servers, or instructions that describe actions beyond the stated tool function: all of these are manipulation surfaces, not implementation quirks.

Here is the forward-looking reality. MCP is fourteen months old. The OWASP guides, the CVE disclosures, the governance frameworks, the regulatory alignment: this infrastructure is being built in real time, and security teams who engage now are shaping it. The teams who wait for the ecosystem to harden on its own will inherit whatever defaults get baked in by people who weren’t paying attention.

MCP gave your AI agent hands. It can write, read, search, schedule, send, and execute with a fluency that wasn’t possible twelve months ago. That capability is real and it earns its place in production.

The lock it came with was installed at manufacturing. The teams who understand that are already changing it.

Fact-Check Appendix

Statement: MCP launched on November 25, 2024, with Python and TypeScript SDKs and six reference servers. Source: MCP Blog (Anniversary post) | https://blog.modelcontextprotocol.io/posts/2025-11-25-first-mcp-anniversary/

Statement: The ecosystem counts more than 97 million monthly SDK downloads and over 10,000 servers. Source: MCP Blog (Anniversary post) | https://blog.modelcontextprotocol.io/posts/2025-11-25-first-mcp-anniversary/

Statement: OpenAI adopted MCP in March 2025, Google in April 2025, Microsoft in May 2025. Source: Anthropic AAIF announcement | https://www.anthropic.com/news/donating-the-model-context-protocol-and-establishing-of-the-agentic-ai-foundation

Statement: The protocol is governed by the Agentic AI Foundation (AAIF), a Linux Foundation entity, announced December 9, 2025. Source: Anthropic AAIF announcement | https://www.anthropic.com/news/donating-the-model-context-protocol-and-establishing-of-the-agentic-ai-foundation

Statement: Approximately 100 of 3,500 Glama.ai registry servers linked to nonexistent repositories. Source: Wiz Security Research, "MCP and LLM Security Research Briefing," April 17, 2025 | https://www.wiz.io/blog/mcp-security-research-briefing

Statement: OWASP published the Secure MCP Development Guide on February 16, 2026. Source: OWASP GenAI | https://genai.owasp.org/resource/a-practical-guide-for-secure-mcp-server-development/

Statement: NIST opened an AI Agent Standards Initiative in January 2026, with an RFI closing March 9, 2026. Source: NIST AI RMF | https://www.nist.gov/itl/ai-risk-management-framework

Statement: EU AI Act Articles 12 and 13 high-risk provisions apply from August 2, 2026. Source: EU AI Act (official text) | https://artificialintelligenceact.eu/article/12/ and https://artificialintelligenceact.eu/article/13/

Statement: MCP’s authorization specification uses OAuth 2.1 with Authorization Code Grant, PKCE, and Resource Indicators (RFC 8707), added in March 2025. Source: MCP Authorization Specification | https://modelcontextprotocol.io/specification/2025-11-25/basic/authorization

Statement: Thoughtworks placed MCP in “Trial” status on its Technology Radar as of November 2025. Source: MCP Blog (Anniversary post) | https://blog.modelcontextprotocol.io/posts/2025-11-25-first-mcp-anniversary/

Statement: Block, Bloomberg, and Amazon are confirmed production MCP deployers. Source: Anthropic AAIF Donation Announcement | https://www.anthropic.com/news/donating-the-model-context-protocol-and-establishing-of-the-agentic-ai-foundation

Top 5 Prestigious Sources

OWASP GenAI Working Group | OWASP MCP Top 10 and Agentic AI Top 10 for 2026 | https://genai.owasp.org

NIST | AI Risk Management Framework and AI Agent Standards Initiative (January 2026) | https://www.nist.gov/itl/ai-risk-management-framework

EU AI Act (Official Text) | Articles 12 and 13, transparency and logging obligations for high-risk AI systems | https://artificialintelligenceact.eu

JFrog Security Research | CVE-2025-6514 disclosure, mcp-remote critical vulnerability (CVSS 9.6) | https://jfrog.com/blog/2025-6514-critical-mcp-remote-rce-vulnerability/

Palo Alto Networks Unit 42 | MCP attack vectors: sampling-based resource theft, conversation hijacking, covert tool invocation | https://unit42.paloaltonetworks.com/model-context-protocol-attack-vectors/

Peace. Stay curious! End of transmission.