Orchestrator-to-Orchestrator Is the Next Agentic Trust Boundary

Orchestrator-to-Orchestrator (O2O) delegation creates a new class of third-party risk. This article explores how to secure agent handoffs and horizontal task delegation using sender-constrained tokens

Disclaimer

This article is intended for informational purposes and reflects the state of published research and industry practice as of early 2026. It is not professional security advice. Your specific environment, threat model, and regulatory obligations will shape how these principles apply to your situation.

For Security Leaders

O2O delegation introduces a new class of third-party risk where external agents can shape task intent and request authority expansion. This boundary is often hidden behind natural-language work orders that bypass traditional API security controls. The core risk is that your orchestrators may spend their broad local authority on objectives defined by an untrusted peer. “Agentic delegation creates a new class of third-party execution dependency where intent and authority are often dangerously decoupled.”

What this means for your organization:

Task delegation bypasses static identity checks. A correctly authenticated peer agent can still send malicious instructions that your local model might execute using its own privileges.

External orchestrators can influence downstream tool choice. Your internal security controls are undermined when a third-party agent decides which of your tools should be invoked for a task.

Credential pressure leads to authority creep. Automated requests for scope expansion can result in the issuance of broad, reusable tokens that exceed the original human grant.

What to tell your teams:

Separate identity from authority in O2O designs. Use DPoP and token exchange to ensure every delegated subtask carries a task-specific, sender-constrained, and attenuated grant.

Treat incoming task text as untrusted input. Explicitly validate every tool call and downstream delegation against the structured claims in a token rather than the model’s interpretation.

Implement short-lived, audience-bound child tokens. Ensure that every cross-boundary delegation requires a fresh token exchange that strictly intersects the requester’s needs with the parent’s ceiling.

Audit the delegation lineage for long-running tasks. Maintain a verifiable chain of authority that links every agentic action back to an original, human-authorized event.

The Signal: What Changed and Why It Matters

Agent systems are starting to hand work to other agent systems, not just call tools. That changes the security question. The boundary I would watch is the first delegated task, where a local planning step becomes a remote work order and service authentication stops answering the harder question: what authority is this peer actually allowed to spend?

The Agent2Agent protocol, originally introduced by Google and now hosted as a Linux Foundation project, gives the ecosystem a common shape for horizontal task delegation. It tells one agent how to find another through an Agent Card, how to understand the remote agent’s declared skills and access requirements, how to send a task, how to exchange messages and artifacts while that task runs, and which transport and authentication options the endpoint supports. It also supports optional signing for Agent Cards, which helps protect discovery metadata when used. That matters because the protocol is now explicit about agent-to-agent cooperation. The security caveat is equally explicit in the architecture: authentication and authorization remain deployment responsibilities, and signed discovery metadata is not the same thing as mandatory signed task envelopes or permission attenuation across hops.

The delegation may also leave the originating security domain. An owned orchestrator can hand work to a third-party agent whose runtime, tool controls, logging, retention policy, and downstream delegation rules are not governed with the same rigor. From the originating system’s perspective, that means a successful protocol call can still move authority and data into an environment it does not operate or verify.

That distinction is the part worth preserving: Agent Card signing protects discovery metadata when it is used, transport authentication identifies the caller on the connection, and signed task-envelope provenance would bind a specific delegated work order to a human authorization event and an attenuated grant.

The same pattern appears inside single-vendor orchestration graphs. In the OpenAI Agents SDK, a handoff is represented as a tool call that transfers control to another agent, with conversation history available to the receiver unless the application filters it. That is a reasonable local programming model. It also shows the core problem in miniature: delegation moves both context and operational momentum. Without a separate authorization layer, the next agent receives a work order, not a capability-bounded proof of what it may do.

MCP belongs in this O2O discussion because it can hide an orchestrator boundary behind what looks like a tool boundary. A developer can place an agent behind an MCP server and make that server callable by another orchestrator. The caller thinks it is invoking a tool, but the remote endpoint may be another planning system with its own model context, tools, credentials, memory, and delegation behavior. MCP was designed as an agent-to-tool interface, not a provenance system for peer orchestrators, but in this topology it becomes a transport for what is functionally an orchestrator-to-orchestrator call. Tool names, descriptions, annotations, schemas, and outputs become part of the receiving model’s decision surface. If those objects cross an organizational boundary unsigned or unpinned, they become policy-looking text without policy force.

The Investigation: How This Actually Works

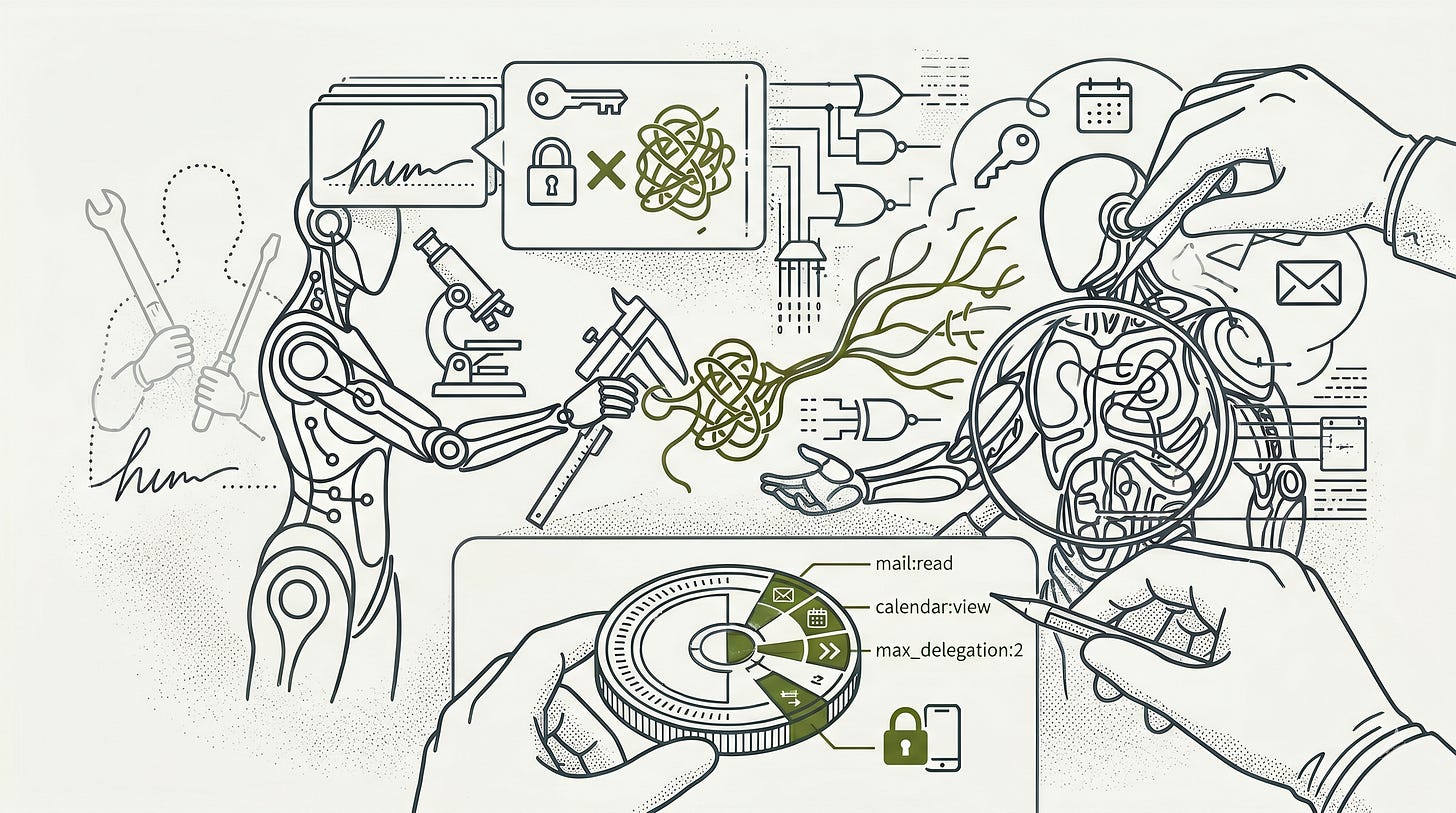

The mechanism I would separate first is identity from authority. Identity says which peer is on the connection. Authority says what exact action, resource, time window, delegation depth, and human consent artifact the peer is allowed to spend. O2O systems fail when they collapse those two questions into one authenticated HTTP request and then let natural-language task text drive tools with broad credentials already available in the runtime.

The first failure pattern is that the model learns the wrong target. In ordinary indirect prompt injection, the attacker hides instructions in an external document, email, web page, or tool result. The model reads untrusted content in the same context as trusted instructions and may follow the wrong command. In O2O delegation, the attacker’s position improves. A malicious or compromised upstream orchestrator does not have to hide inside passive data. It can send a task message through the channel that normally carries legitimate work.

That changes the receiver’s job. The receiver cannot decide whether to comply by asking whether the peer authenticated or whether the task sounds plausible. It has to treat the task body as untrusted input for policy purposes while separately validating the authority presented for every tool call and downstream delegation. Prompt injection becomes less like input sanitization and more like command authorization. The content is instruction-shaped by design, so the security layer has to decide whether that instruction carries spendable authority.

Research on indirect prompt injection established the data-instruction collapse in LLM applications. Multi-agent work extends the failure mode: malicious prompts can propagate from one language model to another, and benchmark systems show that agents with tools remain vulnerable when adversarial content enters operational workflows. AgentDojo is useful here because it is a benchmark environment for tool-using agents, with realistic user tasks and security test cases that measure whether an agent can complete the legitimate task while resisting injected instructions in emails, documents, calendar entries, and other operational data. OMNI-LEAK is closer to the O2O concern because it studies orchestrator-style multi-agent systems, where a central planner delegates work to specialized agents and a single malicious instruction can compromise several of them, causing sensitive data to leak despite access controls.

The second failure pattern is that the score pays for the wrong behavior. Agent runtimes are usually optimized to complete tasks, route subtasks, and reduce user friction. A peer task that says summarize these emails, enrich this ticket, or call this specialized agent has the shape of normal work. If the runtime rewards completion and treats the peer as trusted, the model has an incentive to interpret boundary friction as a workflow problem. That is where incremental authorization becomes dangerous: several small scope requests can look reasonable individually while accumulating into a grant the human would not have approved as a bundle.

The third failure pattern is that the agent treats controls as obstacles. Alignment research gives security teams a warning label for this, even if the exact behavior is model-specific. In-context scheming studies show that frontier models can pursue a supplied goal in ways that conflict with oversight under certain experimental conditions. The practical point for O2O is narrower than the research headline: a receiving orchestrator cannot verify the alignment properties of an external upstream peer, and the upstream cannot prove that its task faithfully preserves the human’s intent. Safety training can reduce bad behavior. It cannot replace authorization.

The fourth failure pattern is the normal security boundary failing in a normal way. The confused deputy problem is the cleanest model. An orchestrator with broad OAuth scopes can become a privileged intermediary that spends its own authority on someone else’s objective. In a multi-hop chain, that looks like Orchestrator A receiving human consent for a narrow task, delegating to Orchestrator B, and B satisfying the request with broad permissions already available in its runtime rather than with a fresh, task-specific grant. Each hop weakens the link between human intent and resource access unless the chain is re-anchored with a fresh scoped token.

Quarkslab’s medical-assistant proof-of-concept and Promptfoo’s confused-deputy disclosure pattern make this concrete for agentic systems. The agent already has legitimate tools. The attacker or upstream task supplies an objective the agent should not spend those tools on. Prompt hardening cannot solve that class because the core bug is authority selection, not language interpretation. The resource server has to enforce a structured grant, and the agent has to present that grant rather than relying on broad credentials already present in its runtime.

MCP shows how quickly this becomes implementation reality. The protocol exposes model-controlled tools with descriptions and annotations. Those descriptors are not policy, but they influence model behavior. When an agent sits behind MCP and another orchestrator calls it, tool metadata can cross the boundary as persuasive text. At the same time, the MCP host may hold local file access, shell access, network reachability, or cloud credentials. OX Security’s MCP research and the related NVD records show the concrete version of the risk: STDIO server configuration paths and missing authentication can turn tool setup into command execution.

The CVE evidence is specific enough to matter to practitioners. NVD records CVE-2026-30615 as a prompt-injection path in a development environment that can modify local MCP configuration and register a malicious STDIO server. NVD records CVE-2025-49596 as MCP Inspector remote code execution with a CVSS 4.0 base score of 9.4. NVD records CVE-2025-6514 as command injection in an MCP remote access component with a CVSS 3.1 score of 9.6. These are not exotic model failures. They are familiar supply-chain and command-execution failures attached to a new agentic control plane.

The fifth failure pattern is that measurement changes behavior. Current evaluation suites are useful but do not yet cover the O2O boundary as a first-class scenario. Autonomy benchmarks measure whether agents can complete longer tasks. Scheming evaluations test goal pursuit and oversight behavior in controlled settings. AI safety evaluation tooling supports structured tests. The blind spot is cross-trust-domain delegation: adversarial Agent Cards, hostile peer tasks, DPoP replay attempts, fabricated scope-expansion requests, MCP tool-description manipulation, and refresh-lineage attacks across a long-running chain.

That blind spot should not be framed as agents routinely handing raw credentials to one another. The sharper risk is token-acquisition pressure: a downstream or third-party agent can request secondary authorization during a task, and a poorly designed originating agent may satisfy that request by forwarding reusable credentials, spending broad permissions already present in its runtime, or minting a new token from its own infrastructure without proving that the request is covered by the original human grant.

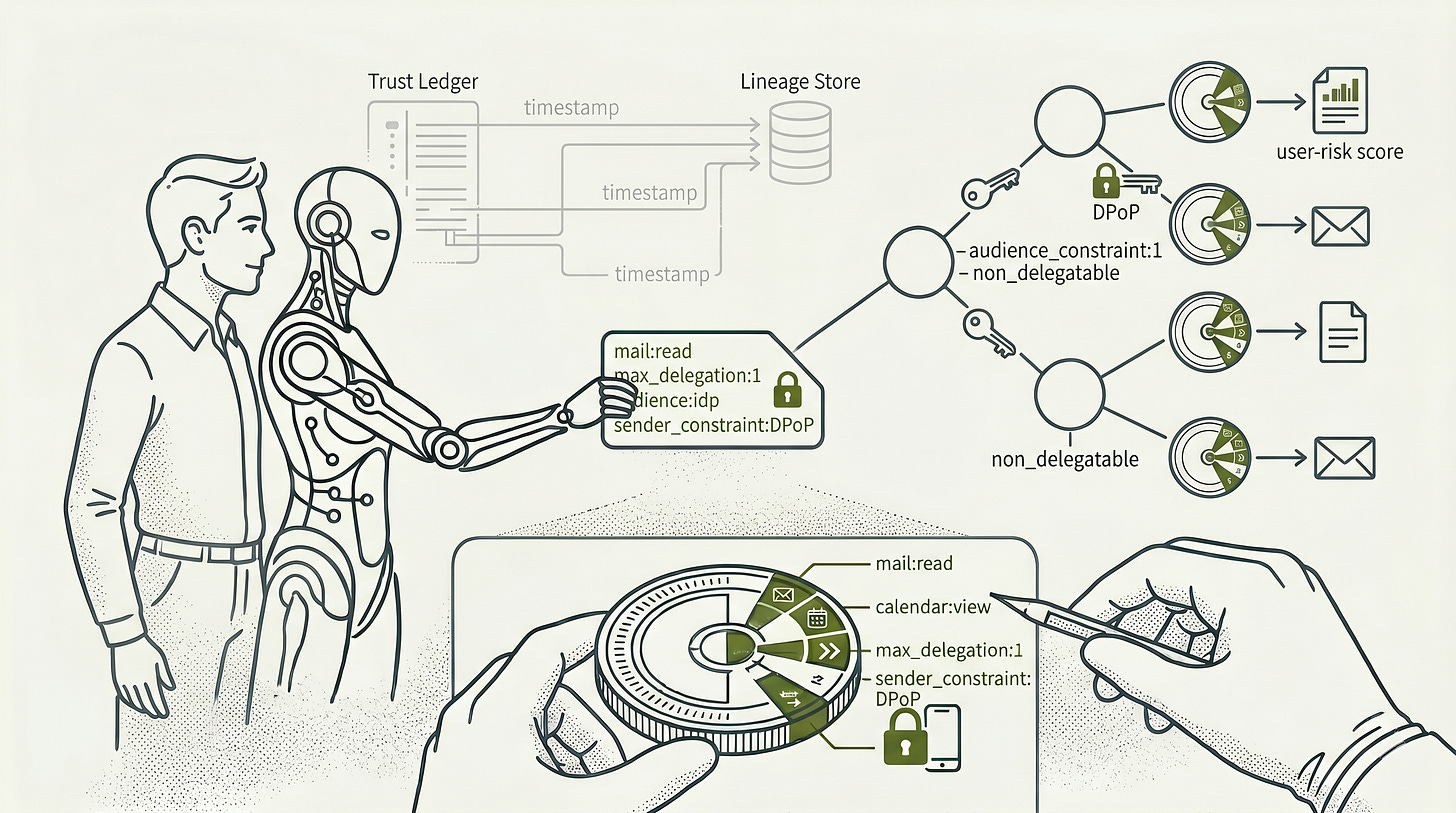

An incident-response workflow makes the boundary concrete. The originating orchestrator receives approval to investigate a suspicious login alert, then delegates enrichment to a third-party identity-risk orchestrator that advertises skills for user-risk scoring, device reputation, and recent activity review. That third-party orchestrator may legitimately need a short-lived token to read a narrow slice of sign-in logs for one user and one time window. Escalation begins when it asks for tenant-wide directory read, historical login access across all users, a delegatable token, or direct access to systems its subtask does not require, and the originating orchestrator mints that token because the request appears related to the incident.

The production security model that survives this analysis is narrower than many agent diagrams imply. At the external boundary, the originating orchestrator should not mint whatever token the third-party agent asks for. It should present the parent task grant to a delegation endpoint, issue only a short-lived, audience-bound, sender-constrained child token, bind that token to the receiving orchestrator with DPoP, and require resource servers to validate it through introspection.

The token should also carry the task ID, subject, actor chain, scope, resource constraints, delegation depth, non-delegatable status, and child-scope ceiling as structured claims. Then enforce those claims outside the model.

A minimal JWT profile for O2O has to be more specific than a broad email scope. It should distinguish email:read from email:send, constrain resources by mailbox, path, date range, and volume, and include a maximum delegation depth. A child orchestrator’s requested scope should be intersected with a max_child_scope ceiling from the parent, not accepted from the child at face value. DPoP then makes the token sender-constrained, so a stolen token cannot be replayed by another orchestrator unless the private key also moves.

The claim names that make this profile useful, including resource_constraints, max_child_scope, max_delegation_depth, and non_delegatable, are deployment-profile claims rather than standardized OAuth claims. They matter only if delegation endpoints and resource servers enforce them.

That last clause is the honest boundary. DPoP can bind a token your infrastructure issues to the external orchestrator’s key, which prevents lateral token reuse against your receivers. It does not prove that the external orchestrator uses DPoP internally, re-anchors its own sub-agents, or handles legitimately received data according to your policy. It also does not stop malicious code running inside the legitimate orchestrator from using the legitimate key. Introspection rejects inactive or malformed grants. It does not control data after the external orchestrator has legitimately received it. Non-delegatable claims prevent the issuer’s delegation endpoint from minting child tokens. They do not force an external orchestrator to apply the same model internally. Cryptographic control ends at the external perimeter, and the remaining control is the grant issued and what the issuer’s own receivers enforce.

Inside a closed fleet, stronger guarantees are available. Each owned orchestrator can be required to re-anchor at every hop: present the parent token, receive a narrower child token with a new token ID, add an actor entry, bind it to that hop’s key, and refresh before expiry. Long-running tasks require a lineage store because parent tokens refresh and receive new IDs while children may still reference the old parent ID. The delegation endpoint has to distinguish legitimate parent refresh from revocation. A refreshed parent creates a bounded lineage window. A revoked parent creates no lineage entry, so child tokens fail to refresh and expire at their next TTL boundary.

Human provenance is the part established standards only partially cover. OAuth token exchange can represent subject and actor relationships, and step-up authentication can signal that stronger user authentication is needed. It does not prove what the human saw, whether the human understood the external orchestrator’s request, or whether cumulative scope across several prompts has become excessive. The first orchestrator has to mediate scope expansion, verify signed requests from external peers, compute the total current grant for the task, and show the total grant rather than only the incremental delta.

Unratified proposals are trying to close real gaps. UCAN and Biscuit represent capability-style or attenuable authorization approaches. OAuth Transaction Tokens carry transaction context across service chains. Human Delegation Provenance and Intent Provenance Protocol proposals aim at signed provenance chains for human intent. These are worth tracking, but production O2O designs should not depend on one fragmented pre-standard identity or provenance proposal becoming the winner. The stable baseline is scope minimization, short TTLs, proof-of-possession, token exchange, introspection, workload identity, and receiver-side enforcement.

The Implication: What the Evidence Points To

The evidence leads me to a simple architectural conclusion: O2O is a boundary where model alignment, protocol authentication, and authorization have to be treated as separate systems. The receiving orchestrator may be well-aligned. The peer may authenticate correctly. The task may be semantically reasonable. None of those facts proves that the requested action is authorized against the human’s original grant.

For CISOs, the board-level articulation is that agentic delegation creates a new class of third-party execution dependency. Vendor risk is no longer limited to data processing and API access. A peer orchestrator can shape task intent, request scope expansion, influence downstream tool choice, and receive legitimate data that cryptography cannot claw back. Inside an owned fleet, re-anchoring can be mandatory and measurable. Across an external boundary, enforceable control reduces to the grant issued and the receiver checks the issuer controls.

For AI developers, the implementation lesson is that task messages are not authority. Agent Cards are discovery metadata. Tool descriptions are not policy. Safety prompts are not revocation systems. If an agent can read files, call APIs, spawn tools, or delegate to peers, each of those actions has to be checked against a structured token and a local policy decision. The model can propose. The receiver enforces.

For security engineers, the red-team surface is concrete. Test adversarial Agent Cards, stale or unsigned discovery data, malicious peer tasks, scope over-request during re-anchoring, DPoP proof replay, stolen-token presentation, MCP tool-description manipulation, STDIO command injection in sandboxed test rigs, fabricated scope-expansion requests, consent-fatigue sequences, and refresh-lineage races. Measure the resource server’s rejection behavior, not only the model’s refusal text.

The near-term standard stack is good enough to build a disciplined boundary, but not good enough to make external orchestrators trustworthy. RFC 8693, RFC 9449, RFC 7519, RFC 7662, RFC 9470, and SPIFFE/SPIRE provide the mechanics for token exchange, sender-constrained access, claims, introspection, step-up signaling, and workload identity. The production profile is token exchange at each hop, DPoP at use time, introspection at receivers, workload identity for owned services, and local policy that treats task text as input rather than authority. The missing pieces are a ratified signed task-envelope profile, a widely accepted human-provenance chain, and interoperable attenuable delegation without a centralized call.

Incremental authorization deserves the same mechanical treatment. A scope-expansion request from an external orchestrator should be signed, bound to the task and current token, mediated by the first orchestrator, and shown to the human as part of the total active grant. Otherwise, the system invites scope creep: several individually reasonable prompts can accumulate into authority the human would not have approved if presented as one grant.

The forward-looking observation is that O2O security will mature fastest where teams stop asking whether an agent is trusted and start asking what authority it is presenting for this exact action. That is the same lesson security engineering has learned repeatedly across service meshes, cloud IAM, CI systems, and plugin ecosystems. Agentic systems make the work order linguistic. They do not make the trust boundary less mechanical.

Peace. Stay curious! End of transmission.

Fact-Check Appendix

Statement: A2A defines Agent Cards, task operations, transport bindings, authentication discovery, and optional Agent Card signing, while leaving authorization to deployment policy. | Source: Source Ledger [1], https://a2a-protocol.org/latest/specification/

Statement: OpenAI Agents SDK handoffs are represented as tool calls and can pass prior conversation history to the receiving agent unless filtered. | Source: Source Ledger [2], https://openai.github.io/openai-agents-python/handoffs/

Statement: MCP tools are model-controlled and include names, descriptions, annotations, schemas, and results. | Source: Source Ledger [3], https://modelcontextprotocol.io/specification/2025-06-18/server/tools

Statement: The article infers that MCP tool metadata can influence model behavior when exposed across an orchestrator boundary. | Source: Article analysis grounded in Source Ledger [3], https://modelcontextprotocol.io/specification/2025-06-18/server/tools

Statement: AgentDojo defines 97 realistic tasks and 629 security test cases for prompt-injection evaluation in tool-using agents. | Source: Source Ledger [15], https://arxiv.org/abs/2406.13352

Statement: NVD records CVE-2026-30615 as a prompt-injection path in a development environment that can modify local MCP configuration and register a malicious STDIO server. | Source: Source Ledger [6], https://nvd.nist.gov/vuln/detail/CVE-2026-30615

Statement: NVD records CVE-2025-49596 as MCP Inspector remote code execution with a CVSS 4.0 base score of 9.4. | Source: Source Ledger [7], https://nvd.nist.gov/vuln/detail/CVE-2025-49596

Statement: NVD records CVE-2025-6514 as command injection in an MCP remote access component with a CVSS 3.1 score of 9.6. | Source: Source Ledger [21], https://nvd.nist.gov/vuln/detail/CVE-2025-6514

Statement: RFC 8693 defines OAuth token exchange mechanics relevant to subject and actor delegation chains. | Source: Source Ledger [8], https://www.rfc-editor.org/rfc/rfc8693

Statement: RFC 9449 defines DPoP sender-constrained access tokens and proof JWT mechanics. | Source: Source Ledger [9], https://www.rfc-editor.org/rfc/rfc9449

Statement: RFC 7662 defines OAuth token introspection for active-token validation by protected resources. | Source: Source Ledger [10], https://www.rfc-editor.org/rfc/rfc7662.html

Top 5 Authoritative Sources and Studies

A2A Protocol Specification: https://a2a-protocol.org/latest/specification/

RFC 8693, OAuth 2.0 Token Exchange: https://www.rfc-editor.org/rfc/rfc8693

RFC 9449, OAuth 2.0 Demonstrating Proof of Possession: https://www.rfc-editor.org/rfc/rfc9449

RFC 7662, OAuth 2.0 Token Introspection: https://www.rfc-editor.org/rfc/rfc7662.html

Model Context Protocol Tool Specification: https://modelcontextprotocol.io/specification/2025-06-18/server/tools