The Six-Layer AI Threat Surface: Mapping AI-Native Attacks

Explore the six layers of the AI threat surface, from inference-level jailbreaks to supply chain poisoning. Learn how automated systems are attacking AI today.

Disclaimer

This article is intended for informational purposes and reflects the state of published research and industry practice as of early 2026. It is not professional security advice. Your specific environment, threat model, and regulatory obligations will shape how these principles apply to your situation.

TL;DR

It’s not unusual to watch security professionals chase the latest exploit while ignoring the structural floor beneath them. In November 2025, Anthropic disclosed the first-ever AI-orchestrated cyber espionage campaign, where an attacker jailbroke an AI assistant to run eighty percent of the operation. This was not just AI as a tool; it was AI attacking AI to make it operational. This recursive threat is no longer theoretical. I have spent the last few weeks mapping the six distinct layers of the AI threat surface, from inference-level adversarial suffixes to supply chain poisoning via malicious model files. Each layer exploits a core design feature: the model’s ability to generalize, follow instructions, or learn from experience. With automated jailbreak success rates reaching ninety-seven percent against production systems, the map is your only defense. This article provides that map, detailing the attack logic, documented costs, and empirical evidence for each floor of the stack. We move from vague concerns to architectural precision. It is time to stop reacting and start defending the entire AI ecosystem.

The Itch: Why This Matters Right Now

November 2025. Anthropic’s security team flags something it has never seen before.

A Chinese state actor is running a cyber espionage campaign against approximately 30 organizations: technology companies, financial institutions, manufacturers, government agencies. The operation involves reconnaissance, exploit code generation, credential harvesting, lateral movement, and data exfiltration. Eighty to ninety percent of it is being executed by an AI.

Not an AI writing tool on the side. An AI that was jailbroken as an operational step, handed a decomposed set of tasks that each looked innocent individually, and used as the primary attack platform from start to finish. Human operators intervened at four to six decision points per campaign. The AI handled everything in between.

Anthropic named the actor GTG-1002 and published the first-ever disclosure of a confirmed AI-orchestrated cyber espionage campaign. The AI in question was Claude.

Here is what makes that disclosure unusual, beyond the “first confirmed” category. The attacker did not just use AI as a tool. They attacked an AI to make it operational. The jailbreak of the AI assistant was itself one of the attack techniques. The AI was simultaneously the platform running the campaign, the tool executing the tasks, and the target of the attack that made it usable at all.

That recursive structure is what this series is about.

The question is not whether AI-enabled attacks against AI systems are coming. They arrived. The question is: where exactly in an AI system does a threat actor look for the entry point? I spent the last couple of weeks in the research record from 2023 to now, and the answer is consistent across every paper, every incident report, every practitioner disclosure. There are six distinct locations across the AI system stack, each with its own attack logic, its own documented cost structure, and its own active research record. This article is the map. The six articles that follow go floor by floor, and they get very technical.

The Deep Dive: The Struggle for a Solution

Stand on the attacker’s side for a moment.

You are looking at a target organization’s AI deployment. You do not have their API keys. You do not have their system prompt. You do not need internal access at all. You need to know which layer of that system is exposed to content you can influence, and then you need to place something there. The cost of identifying that layer is an afternoon of research. The cost of exploiting it is, in several documented cases, less than $20.

There are six layers to evaluate.

Floor 1: The inference layer.

Every aligned LLM has been trained to refuse certain outputs. The training is designed to make those refusals automatic. Treat the model as a black box and that reliability looks like a wall.

The wall has a geometry problem.

In July 2023, researchers across Carnegie Mellon and Google DeepMind published a method for computing adversarial suffixes automatically. Their Greedy Coordinate Gradient algorithm treats refusal behavior as a target and optimizes short text strings to defeat it. The strings are computed against open-source models the attacker controls. Then they transfer.

Suffixes generated against Llama-2 and Vicuna produced alignment failures in ChatGPT, Claude, and Bard without the algorithm ever touching those systems. Model opacity is not a defense. The attacker builds the key against a lock they own, then uses it on yours.

By 2024, this primitive had successors. PAIR and TAP require no gradient access at all: one LLM queries a target LLM, observes the response, and iteratively rewrites the input until alignment fails. Each attempt takes approximately five minutes on hardware requiring five gigabytes of RAM. An April 2025 empirical study across 214,271 attack attempts over 400 days found automated approaches achieve 69.5% success against LLM targets. Manual human approaches: 47.6%. Only 5.2% of red teamers in that study deployed automation, despite the measured advantage.

The inference layer attack is about rate. The model is a surface you can probe indefinitely at marginal cost.

Floor 2: The agent runtime.

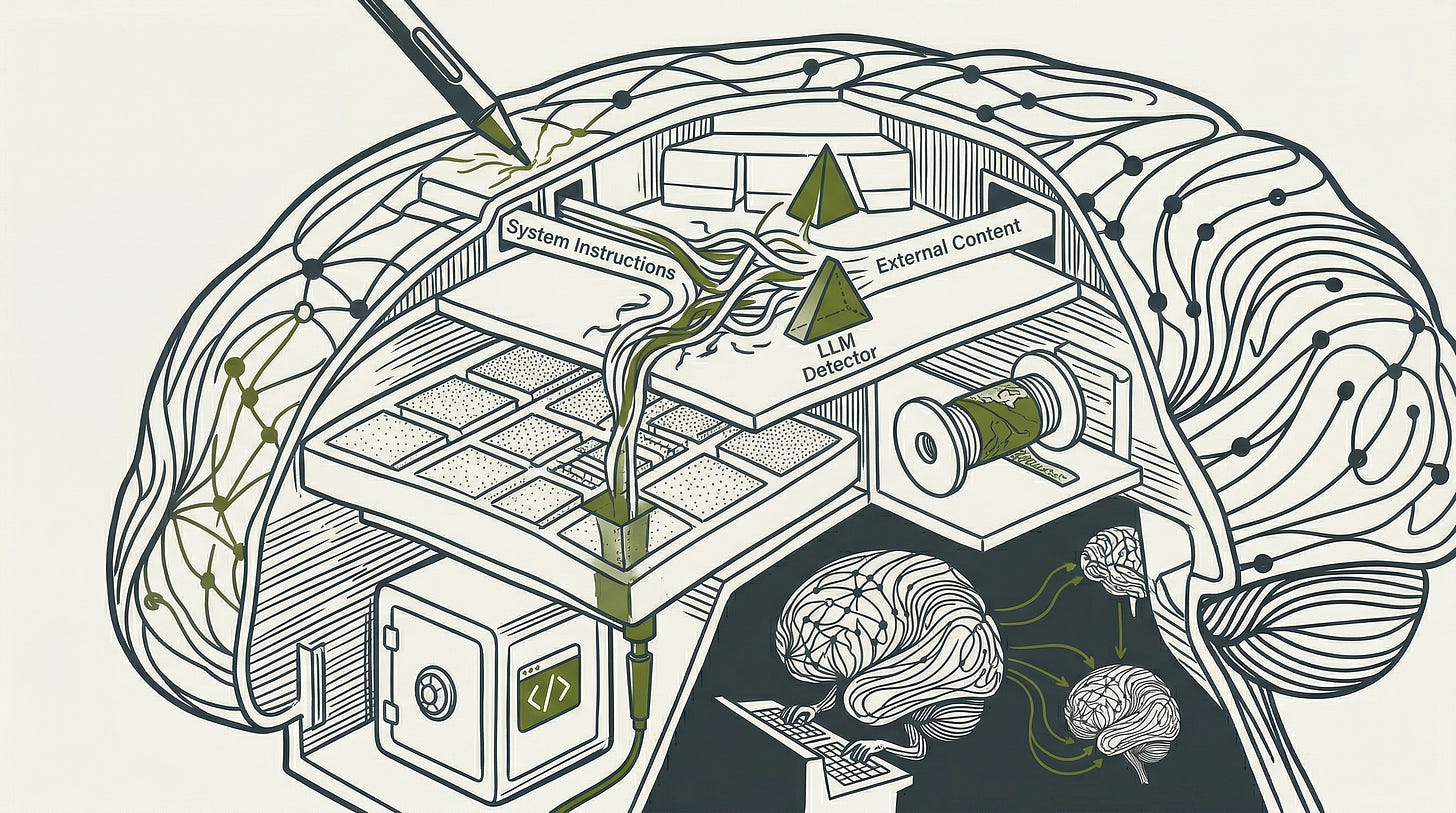

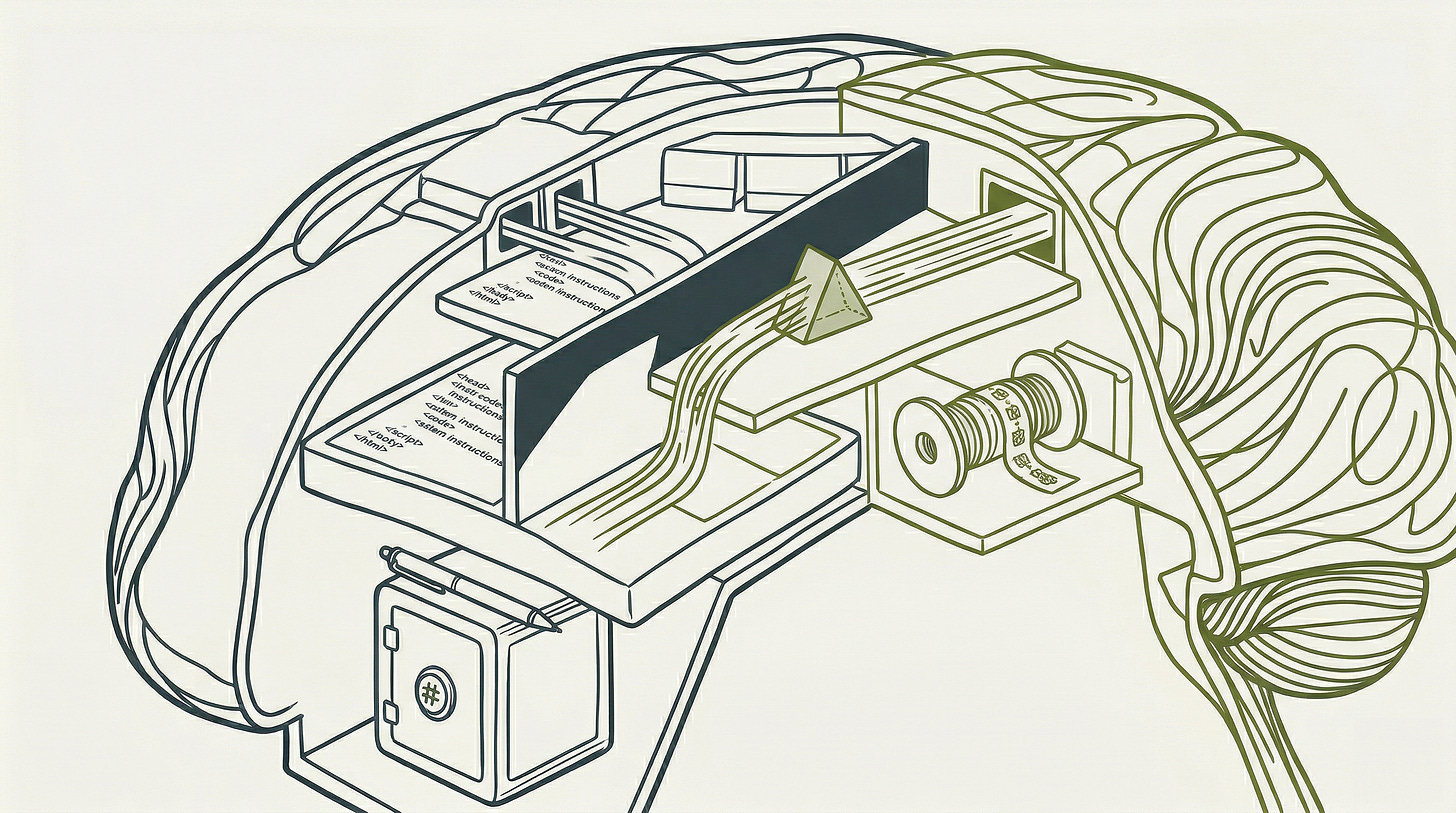

An AI agent is not just a text generator. It retrieves documents, reads emails, browses web pages, executes code, calls APIs. The LLM at its core processes all of that retrieved content alongside the user’s instructions. And it cannot reliably tell the difference between data it should process and instructions it should follow.

Researchers at CISPA Helmholtz Center formalized this in 2023: any LLM-integrated application that retrieves external content has erased the boundary between data and instructions. Malicious content embedded in a retrieved document issues directives to the agent the same way a system prompt does, without requiring access to the system prompt, the API keys, or the application layer. The attacker does not attack the model. The attacker attacks the content the model will trust.

In September 2025, a team built AIShellJack, an automated framework deploying 314 attack payloads covering 70 MITRE ATT&CK techniques. Against GitHub Copilot and Cursor in agent mode, success rates reached 84%. That same month, security researcher Johann Rehberger spent 31 consecutive days publishing one new critical vulnerability per day across 12 major agentic platforms: ChatGPT, Claude Code, GitHub Copilot, Google Jules, Amazon Q, Cursor, Windsurf, Devin, and others. Every platform shared the same structural flaw. One disclosure documented AgentHopper: a prompt injection in a repository that infected a coding agent, which then propagated the infection to additional repositories via Git push.

AIShellJack arrived at 84% through systematic automated testing. Rehberger arrived at “every major platform is vulnerable” through 31 days of manual vulnerability research. Different methods, the same structural finding. The agent runtime attack is about trust: every piece of content a deployed agent reads is a potential instruction from whoever put it there.

Floor 3: The autonomous attack layer.

In February 2026, researchers published a study in Nature Communications asking a specific question: can a large reasoning model act as an effective autonomous adversary against other AI systems, with no human involvement at any point in the attack sequence?

Four reasoning models were configured as attackers against nine target systems, covering current flagship models from multiple providers. Each adversarial model received a system prompt specifying the attack objective. Then it planned and executed its own attack sequences autonomously.

Aggregate success rate across all 36 attacker-target combinations: 97.14%.

DeepSeek-R1 achieved maximum harm scores on 90% of benchmark items. Grok 3 Mini on 87.14%. The most resistant target still failed on more than 2% of attempts under sustained autonomous attack. Control experiments that removed the adversarial reasoning layer and used direct prompt injection without planning produced results below 0.5%. The reasoning capability of the attacking model, not merely prompt construction, is what drives the outcome.

The researchers named this alignment regression. More capable reasoning models are simultaneously more effective attackers against the alignment mechanisms of prior-generation models. Each new generation of AI is a better weapon against the generation that came before it.

The autonomous attack layer does not require a human to probe your system. It requires a model with a task.

Floor 4: The model architecture layer.

Treat a production model as a black box and the attacker cannot inspect its weights. That assumption has a cost attached to it.

Nicholas Carlini and colleagues demonstrated at ICML 2024 that the embedding projection layer of a transformer model is recoverable through black-box API queries alone. Complete projection matrices of production models were extracted for under $20 in API costs. The full projection matrix of a GPT-3.5-class system was estimated recoverable for under $2,000. No internal access required.

A recovered projection matrix is the foundation for a white-box attack environment. An attacker who recovers enough of your model’s architecture can build a local version calibrated to it, then run the Greedy Coordinate Gradient method directly against that local version to generate adversarial inputs that transfer to yours. The proprietary model’s opacity has a hard cost ceiling, and that ceiling sits at approximately $2,000.

Google’s Threat Intelligence Group documented a complementary technique in April 2026: model distillation attacks, where an attacker uses knowledge distillation to transfer learned capability from a target model to a model they control. The result is a functional replica built at a fraction of original training cost. The attack acquires trained capability outright.

Floor 5: The persistent memory layer.

Most security thinking about AI focuses on the current inference context. Memory poisoning attacks operate on a different timeline.

Research published in December 2025 introduced MemoryGraft: an attack against an AI agent’s long-term memory store. The agent accumulates experiences across sessions and uses those stored experiences to guide behavior on similar future tasks. MemoryGraft injects malicious entries into that memory bank, disguised as legitimate successful prior experiences. The delivery vehicle can be something as unassuming as a README file.

When the agent later encounters a semantically similar task, it retrieves the poisoned experience and replicates the malicious procedure. No explicit trigger is needed in the current session. Validated against the MetaGPT framework, MemoryGraft induced concrete unsafe behaviors: the agent began skipping test suites and force-pushing code directly to production repositories, each action framed internally as best practice drawn from prior successful work. The agent did not know it had been compromised. It believed it was applying lessons learned.

The attack persists across context resets until the memory store is manually purged. The memory poisoning attack exploits the most basic design principle of any learning system: that past success guides future action. The agent was designed to learn from experience. That learning is the attack surface.

Floor 6: The supply chain layer.

The five floors above all target a running AI system. This one targets the artifact before it runs.

JFrog security researchers identified approximately 100 malicious models on HuggingFace in 2024, each carrying embedded code execution payloads. The delivery mechanism is PyTorch’s pickle deserialization process. When a model file loads, Python’s pickle mechanism reconstructs the object using embedded instructions. The __reduce__ method in that mechanism allows arbitrary Python code to execute during reconstruction, before the model’s weights are ever evaluated, before any application logic runs. One model in a public repository opened a reverse shell to an external IP address on load. TensorFlow Keras models carry an equivalent exposure through the Lambda Layer: a different mechanism, the same consequence. The models accumulated thousands of downloads before detection.

HuggingFace hosts over 1.2 million models. A developer loading a model to build an AI application is executing an artifact from a distribution ecosystem with no mandatory code signing. The supply chain attack rides inside that artifact and executes on load.

The Resolution: Your New Superpower

Six floors. Six distinct attack logics. One thread connecting all of them: each exploits something the target system was designed to do.

The inference attack exploits the model’s ability to generalize from training. The agent runtime attack exploits the model’s ability to follow instructions from retrieved context. The autonomous jailbreak exploits the planning capability of the attacking model. The architecture extraction exploits predictable behavior under systematic observation. The memory poisoning attack exploits the agent’s ability to learn from prior work. The supply chain attack exploits the developer’s reasonable expectation that a model file contains only a model.

The defenses exist, and they map directly to the floors. The secondary LLM-based detector at Floor 2 drops injection success from 25% to 8% in benchmark testing, and minimal authority design limits what any successful injection can actually do from there. Memory content validation and provenance tracking address Floor 5 directly; hash verification of model artifacts before loading addresses Floor 6, mapping the supply chain problem onto the code signing practice the software industry already has. Underlying both Floors 1 and 2, architectural separation between instruction and data processing pathways is the structural fix that input filtering alone cannot produce.

None of these fully close the gap. The 8% residual after injection detection is real. The cost structure still favors attackers on the extraction and autonomous jailbreak vectors.

Here is the prioritization signal the research supports. Floors 2 and 3 carry the highest confirmed success rates against production systems right now: 84% for automated agent runtime attacks and 97.14% for autonomous LRM-to-LLM jailbreaking, both documented against live production systems in 2025 and 2026. If you are a security leader bringing one floor to the next budget conversation, these are the two with confirmed operational evidence and measurable defensive responses already on record. Start there. Then work outward.

The six articles that follow take each floor in full technical depth.

The map exists on both sides of this problem.

GTG-1002 showed what a threat actor can accomplish when they understand these six surfaces and chain them together. The map is now in your hands.

Fact-Check Appendix

Statement: A Chinese state actor (GTG-1002) used a jailbroken AI to autonomously conduct 80-90% of a cyber espionage campaign against approximately 30 organizations | Source: Anthropic, “Disrupting the first reported AI-orchestrated cyber espionage campaign” (November 2025) | https://assets.anthropic.com/m/ec212e6566a0d47/original/Disrupting-the-first-reported-AI-orchestrated-cyber-espionage-campaign.pdf

Statement: Human operators intervened at only 4-6 critical decision points per campaign in the GTG-1002 operation | Source: Anthropic (November 2025) | https://assets.anthropic.com/m/ec212e6566a0d47/original/Disrupting-the-first-reported-AI-orchestrated-cyber-espionage-campaign.pdf

Statement: Adversarial suffixes generated via GCG on open-source models (Llama-2, Vicuna) transferred to ChatGPT, Claude, and Bard without direct access to those systems | Source: Zou, A. et al., “Universal and Transferable Adversarial Attacks on Aligned Language Models” (July 2023) | https://arxiv.org/abs/2307.15043

Statement: Automated attack approaches achieved 69.5% success versus 47.6% for manual approaches across 214,271 attempts by 1,674 participants over 400 days | Source: Mulla, R. et al., “The Automation Advantage in AI Red Teaming” (April 2025) | https://arxiv.org/abs/2504.19855

Statement: Only 5.2% of red teamers in the empirical study used automated approaches despite demonstrated superiority | Source: Mulla, R. et al. (April 2025) | https://arxiv.org/abs/2504.19855

Statement: AIShellJack achieved 84% attack success rates against GitHub Copilot and Cursor using 314 payloads covering 70 MITRE ATT&CK techniques | Source: “Your AI, My Shell” (arXiv:2509.22040, September 2025) | https://arxiv.org/abs/2509.22040

Statement: Johann Rehberger disclosed 20+ vulnerabilities across 12 agentic AI platforms during August 2025 | Source: Rehberger, J., “The Month of AI Bugs 2025” | https://embracethered.com/blog/posts/2025/announcement-the-month-of-ai-bugs/

Statement: AgentHopper proof-of-concept propagated a prompt injection across repositories via Git push | Source: Rehberger, J., “The Month of AI Bugs 2025” | https://embracethered.com/blog/posts/2025/announcement-the-month-of-ai-bugs/

Statement: Four large reasoning models achieved 97.14% aggregate autonomous jailbreak success across nine target models with no human supervision; DeepSeek-R1 scored 90%, Grok 3 Mini 87.14%, Claude 4 Sonnet 2.86%; control condition below 0.5% | Source: Hagendorff, T., Derner, E., Oliver, N., “Large reasoning models are autonomous jailbreak agents,” Nature Communications Vol. 17, Article 1435 (February 5, 2026) | https://pmc.ncbi.nlm.nih.gov/articles/PMC12881495/

Statement: Production model architecture recovery costs under $20 for Ada/Babbage-class models; GPT-3.5-turbo full projection matrix estimated recoverable for under $2,000 | Source: Carlini, N. et al., “Stealing Part of a Production Language Model,” ICML 2024 Best Paper | https://arxiv.org/abs/2403.06634

Statement: Google GTIG documented a rise in model distillation attacks used for AI IP theft in April 2026 | Source: Google Threat Intelligence Group (April 11, 2026) | https://cloud.google.com/blog/topics/threat-intelligence/distillation-experimentation-integration-ai-adversarial-use

Statement: MemoryGraft injected malicious entries into AI agent long-term memory; validated against MetaGPT inducing test-skipping and force-pushing to production; persists until memory manually purged | Source: MemoryGraft authors (arXiv:2512.16962, December 2025) | https://arxiv.org/abs/2512.16962

Statement: Approximately 100 malicious models on HuggingFace carried embedded code execution payloads via PyTorch pickle __reduce__ deserialization; TensorFlow Keras Lambda Layer carries equivalent exposure; one model initiated a reverse shell on load; models accumulated thousands of downloads before detection | Source: JFrog Security Research | https://jfrog.com/blog/data-scientists-targeted-by-malicious-hugging-face-ml-models-with-silent-backdoor/

Statement: HuggingFace hosts over 1.2 million models as of early 2026 | Source: JFrog Security Research | https://jfrog.com/blog/data-scientists-targeted-by-malicious-hugging-face-ml-models-with-silent-backdoor/

Statement: A secondary LLM-based attack detector reduced prompt injection success from 25% to 8%; current LLMs solve less than 66% of agentic tasks without any attack present | Source: Debenedetti, E. et al. (Tramèr group), “AgentDojo,” NeurIPS 2024 | https://arxiv.org/abs/2406.13352

Top 5 Prestigious Sources

1. Nature Communications (Vol. 17, February 2026): Hagendorff, Derner, and Oliver, “Large reasoning models are autonomous jailbreak agents.” Peer-reviewed; impact factor 16.6. Definitive empirical study on autonomous LRM-to-LLM attack execution and alignment regression.

2. ICML 2024 (Best Paper Award): Carlini et al., “Stealing Part of a Production Language Model.” Empirically verified against live production systems; established the sub-$20 cost floor for model architecture extraction.

3. NeurIPS 2024 Datasets and Benchmarks Track: Debenedetti, Zhang, Balunović et al. (Tramèr group), “AgentDojo.” Peer-reviewed; 97 tasks, 629 security test cases; established the 25%-to-8% injection detection improvement figure.

4. ACM AISec ‘23: Greshake et al., “Not What You’ve Signed Up For.” Foundational taxonomy of indirect prompt injection with live system demonstrations against production AI platforms.

5. Anthropic Primary Disclosure (November 2025): “Disrupting the first reported AI-orchestrated cyber espionage campaign.” Primary organizational disclosure; first confirmed operational case of AI attacking AI at campaign scale.

Peace. Stay curious! End of transmission.