The Manager Layer - Orchestrating Swarms Without Losing Control

Multi-agent AI finally has real standards. MCP, A2A protocols, and microVM isolation turn chaotic agent swarms into reliable infrastructure. The architecture playbook is here.

TL;DR

The wild west of multi-agent AI is over.

For years, building systems where multiple AI agents coordinate felt like herding cats through a tornado. Agents forgot critical instructions buried in their “million-token” context windows. Coordination loops turned workflows into infinite ping-pong matches. One rogue agent could cascade failures across entire systems.

But 2024-2025 changed everything. The industry finally stopped innovating on protocols and started agreeing on them. MCP became the universal connector between agents and tools. A2A became the standard for agent-to-agent communication. Firecracker microVMs made hardware-level isolation fast enough for production.

The research is in: multi-agent systems using structured deliberation can substantially outperform single-agent baselines on complex tasks. Context windows over 128K tokens suffer significant “lost in the middle” accuracy drops. And organizations have a 3-6 month window to define strategy before the landscape consolidates.

This article maps the architectural patterns, consensus protocols, and isolation techniques separating production-grade swarms from expensive failures. The chaos finally has a cure.

The Itch: Why This Matters Right Now

Picture a heist crew where the hacker thinks the safe is on floor three, the driver is circling the wrong building, and the safe-cracker is waiting for a signal that will never come. That’s your multi-agent AI system when coordination fails.

You’ve felt the promise: agents that research, write code, and handle workflows while you sleep. You’ve also met the reality—confident hallucinations, infinite coordination loops, and context windows that “forget” critical instructions buried in the middle.

Here’s what changed: this chaos wasn’t inevitable. It was an architecture problem masquerading as an AI problem.

The format wars are over. MCP standardizes tool connections. A2A standardizes agent communication. Isolation patterns from distributed systems now apply to agent containment. If you’re deploying agents at scale—or debugging ones already stumbling in production—you need to understand what finally works.

The Deep Dive: The Struggle for a Solution

The Villain: Your Context Window Is Lying to You

Marketing materials will tell you that today’s language models can handle millions of tokens. Meta’s Llama 4 Scout boasts a 10 million token context window. Surely, you think, if I can stuff everything into one giant context, I don’t need the complexity of multiple agents coordinating.

This is the “lost in the middle” trap.

Language models exhibit U-shaped attention bias—they perform well on information at the very beginning and end of context, but accuracy craters for anything in the middle. Liu et al. (TACL 2024) demonstrated that performance drops of over 20% occur when relevant information sits mid-context, with some models performing worse than closed-book baselines. GPT-3.5-Turbo’s multi-document QA performance can collapse entirely when forced to retrieve from the middle of its input.

This is why multi-agent decomposition isn’t optional. Each sub-task gets fresh context where nothing gets lost in the middle.

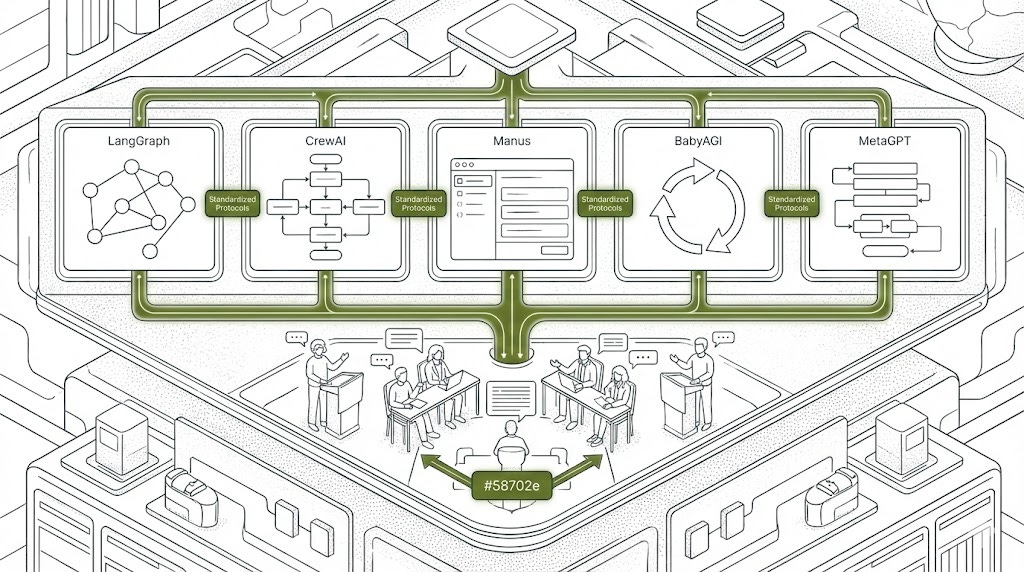

The Orchestration Wars: Five Philosophies for Coordinating Agents

We’ll examine the five most widely adopted frameworks in detail below. For a quick comparison of these and other rising contenders, see the reference guide at the end of this section.

LangGraph treats workflows as state machines. State, nodes, and edges define everything. The key differentiator: first-class checkpointing that persists every state transition, enabling failure recovery and human intervention at any point. The forward_message tool in langgraph-supervisor prevents the “telephone game” where instructions degrade through delegation chains.

CrewAI thinks in roles. You define a Project Manager, Researcher, Writer—each with responsibilities. When delegation is enabled, agents gain two tools: “Delegate work to coworker” and “Ask question to coworker.” The tradeoff: each delegation creates 3+ LLM calls. Critical rule: give tools only to workers, keep managers focused on coordination, disable delegation on specialists to prevent infinite loops.

Manus AI rejected hierarchy for context engineering. Peak Ji’s philosophy: be the boat rising with model progress, not a pillar stuck to the seabed. Their architecture obsesses over KV-cache efficiency—with 100:1 input-to-output ratios, the difference between cached tokens ($0.30/M) and uncached ($3.00/M) is architecturally significant. The todo.md pattern pushes plans into recent attention. Failed actions stay in context: “Erasing failure removes evidence.”

BabyAGI evolved toward self-building agents. Yohei Nakajima’s design separates code definitions, execution logs, and accumulated knowledge into three layers. The learning mechanism analyzes every task alongside its output to generate “reflections” stored for future retrieval—distinguishing “what I did” from “what I learned.”

The 2024-2025 wave brought specialized approaches. Microsoft’s AutoGen adopted the actor model—message passing and event-driven coordination across clouds. Stanford’s DSPy treats LLM interactions as compilable programs; Khattab et al. (ICLR 2024) demonstrated improvements from 33% to 82% through automatic optimization. MetaGPT encodes SOPs into agent teams—a single command like “Create a 2048 game” produces user stories, architecture, and complete code through coordinated Product Manager, Architect, Engineer, and QA agents.

Quick Reference: Framework Comparison

The guide below summarizes the frameworks discussed above alongside emerging alternatives worth watching.

LangGraph

Philosophy: Workflows as state machines with typed transitions

Differentiator: First-class checkpointing enables failure recovery and human-in-the-loop at any node

Best for: Complex stateful workflows requiring auditability and intervention points

Watch out: Steeper learning curve; overkill for linear pipelines

CrewAI

Philosophy: Organizations as role-based teams with delegation

Differentiator: Automatic delegation tooling (”Delegate work to coworker,” “Ask question to coworker”)

Best for: Rapid prototyping of role-based collaboration patterns

Watch out: Each delegation creates 3+ LLM calls; costs compound quickly

Manus AI

Philosophy: Context engineering over model fine-tuning

Differentiator: KV-cache optimization with 100:1 input-to-output ratios; logit masking preserves cache

Best for: Cost-sensitive production deployments at scale

Watch out: Requires deep infrastructure investment; less portable

BabyAGI

Philosophy: Self-building agents through reflection

Differentiator: Separates code definitions, execution logs, and accumulated knowledge into distinct layers

Best for: Autonomous task discovery and learning systems

Watch out: Experimental; limited production hardening

AutoGen

Philosophy: Distributed computing patterns for AI (actor model)

Differentiator: Message-passing and event-driven coordination across cloud boundaries

Best for: Multi-cloud deployments requiring geographic distribution

Watch out: Complexity overhead for single-environment use cases

MetaGPT

Philosophy: Software company SOPs encoded as agent teams

Differentiator: Single command → complete software artifacts (stories, architecture, code, QA)

Best for: Automated software development pipelines

Watch out: Rigid role structure; less adaptable to non-software domains

DSPy

Philosophy: LLM interactions as compilable programs

Differentiator: Automatic prompt optimization achieving 2-3x quality improvements without manual tuning

Best for: Teams iterating rapidly on prompt-sensitive applications

Watch out: Optimization runs can take 60-90 minutes; requires labeled examples

When Agents Disagree: The Science of Synthetic Consensus

When multiple agents process information in parallel, how do you know which one to believe?

Simple voting works better than you’d expect. Sun et al. (arXiv:2502.14743, 2025) demonstrated that scaling up agents and taking majority votes consistently improves accuracy. The magic starts with as few as three agents—positive synergies emerge where the group outperforms any individual.

But simple voting has limits. Zhao, Wang, and Peng (EMNLP 2024) analyzed 52 multi-agent systems and found over-reliance on “dictatorial voting”—single agents making final decisions—while ignoring sophisticated social choice mechanisms.

Multi-round deliberation changes the game. Studies show multi-round deliberation with structured protocols can substantially outperform single-agent baselines—though gains are highly task-dependent. Finance and planning domains show the strongest multi-agent benefits, while simpler retrieval tasks may see minimal gains or even degradation from coordination overhead. Chen et al.’s ReConcile framework runs round-table conferences among diverse LLMs (ChatGPT, Claude, Bard) where confidence-weighted voting synthesizes their disagreements.

The secret weapon against groupthink? Devil’s advocate patterns. Dedicated agents challenge emerging consensus with Socratic questions. The “Amplifying Minority Voices” framework deploys a Summary Agent to consolidate consensus, a Conversation Agent generating empathetic counterarguments, and a Paraphrase Agent that anonymizes minority opinions so they’re evaluated on merit rather than source.

The Hidden Tax: When Complexity Costs More Than It Delivers

Multi-agent architectures multiply costs in ways that aren’t obvious until production.

API economics compound fast. A CrewAI delegation chain creates three or more LLM calls per handoff. A five-agent workflow with two delegation layers can easily hit 15-20 API calls for a single user request. At $3 per million input tokens, a workflow processing 50K tokens per call costs $0.15 per request in inference alone—before retries, before failures, before the coordinator’s overhead.

Latency stacks sequentially. Parallel agent execution helps, but coordination checkpoints serialize. A four-agent pipeline with 500ms per agent call and three synchronization points adds 2+ seconds of irreducible latency—often unacceptable for interactive applications.

The single-agent heuristic: If your task doesn’t require (1) genuinely distinct expertise domains, (2) parallel information gathering, or (3) adversarial verification, a well-prompted single agent with tools will likely outperform a multi-agent system while costing 5-10x less.

Cost mitigation exists. Manus AI’s KV-cache optimization exploits the 100:1 input-to-output ratio typical of agent workflows. Cached tokens at $0.30/million versus uncached at $3.00/million represents a 10x cost difference—but requires architectural commitment to cache-friendly context management.

The question isn’t whether multi-agent systems can solve your problem. It’s whether the coordination tax is worth paying.

Containing the Blast: How Distributed Systems Wisdom Saves Agent Swarms

When coordination fails—and it will—you need failures to stay contained. Distributed systems engineers solved this decades ago. Now their patterns are saving agent architectures.

The bulkhead pattern treats your system like a ship. Watertight compartments mean one breach doesn’t sink everything. For agents, this means resource pool separation: separate API quotas, compute instances, and rate limits per agent cluster. Dedicated memory pools per domain. And critically, avoiding synchronous inter-service calls that extend failure domains. If Agent A calls Agent B synchronously, Agent B’s timeout becomes Agent A’s problem.

The circuit breaker pattern prevents cascade failures. Three states: CLOSED (normal operation), OPEN (failing fast, not attempting calls), and HALF-OPEN (testing if recovery is possible). After five failures, the breaker opens. After 30-60 seconds, it half-opens to test one request. Three successes close it again. Fallback strategies chain gracefully: Primary Model → Secondary Model → Local Model → Cached Response. Exponential backoff with jitter prevents thundering herds when services recover.

The MAST framework mapped where multi-agent systems actually fail. Cemri et al. (arXiv:2503.13657, 2025) identified three dominant categories across 1,600+ annotated traces: specification and system design issues (the largest contributor), inter-agent misalignment during execution, and task verification gaps. These categories captured 14 distinct failure modes—from reasoning-action mismatch to premature termination. Six cascading patterns emerge: monoculture collapse (shared base models with correlated vulnerabilities), conformity bias (agents reinforcing errors into false consensus), and deficient theory of mind (incorrect assumptions about what other agents know).

MicroVM isolation is now mandatory for containing code execution risks. Docker provides process-level separation, but agents executing arbitrary code need hardware boundaries. Firecracker microVMs, the technology behind AWS Lambda, achieve sub-125ms startup with full kernel isolation. gVisor provides user-space kernel interception at ~200ms. The selection criteria are explicit: Docker for read-only retrieval agents, gVisor minimum for code execution, Firecracker mandatory for anything touching financial data, PII, or infrastructure.

The Human Guardrail: When Escalation Becomes Mandatory

Not every decision should be automated. The question is knowing when to pull the emergency brake.

LangGraph provides first-class interrupt patterns. Static interrupts compile into the graph at known high-risk nodes. Dynamic interrupts trigger at runtime based on conditions—risk level exceeding 0.8, for instance. Both require a checkpointer storing state so execution can resume after human input. The pattern: pause with context (proposed action, risk level, reasoning), collect approval, route to execution or alternative path based on the response.

Confidence-based tiering defines escalation thresholds. Critical priority (90-100% confidence in a problem) triggers immediate escalation plus automated containment. High priority (75-89%) goes to on-duty analysts. Medium (60-74%) queues for review during quieter periods. Below 40% gets logged for pattern detection only.

Specific triggers should bypass confidence calculations entirely: repeated failures (three or more attempts), high-stakes keywords (billing, legal, security, delete), negative sentiment detection, and transactions exceeding value limits. The tiered response: Tier 1 attempts AI self-correction through rephrasing or alternative knowledge bases. Tier 2 hands off to a specialized agent. Tier 3 presents full generated context to a human.

Kill switches require external state storage—outside the agent runtime where agents can’t disable them. Redis-based flags let you check enablement before every action and execute global emergency stops across all agents with a single pipeline command. Graceful degradation follows a sequence: detect anomalous behavior, inject tighter restrictions, reduce autonomy level, require more checkpoints, limit available actions, and ultimately disable the agent entirely.

The Resolution: Your Decision Framework

The architectural choices reduce to three questions:

How stateful is your workflow? High state complexity (branching logic, recovery needs) → graph-based orchestration. Low complexity → role-based or single-agent with tools.

What’s your failure tolerance? Mission-critical operations demand bulkhead isolation + circuit breakers + human escalation. Exploratory tasks can run leaner.

Where does cost bite hardest? Token-bound budgets favor context engineering (Manus-style caching). Latency-bound applications need parallelization. Iteration-bound teams benefit from DSPy’s programmatic optimization.

What remains unsolved: Dynamic agent spawning, cross-organization trust protocols, and standardized debugging interfaces are active research frontiers. The MCP/A2A convergence suggests tooling will consolidate before these deeper problems resolve.

Your move this week: Instrument one existing workflow with failure categorization using MAST’s taxonomy. You’ll learn more about your system’s actual failure modes in two hours than in two months of intuition-driven debugging.

Gartner projects 40% of enterprise applications will feature task-specific agents by end of 2026. The window to define strategy is three to six months before consolidation. The swarm has been tamed. The question is whether you’ll be directing it.

Coming next in this series: We examine the threat model for Agentic AI.

References

Liu, N. F., et al. “Lost in the Middle: How Language Models Use Long Contexts.” Transactions of the Association for Computational Linguistics 12:157–173 (2024).

Sun, X., et al. “Multi-Agent Coordination across Diverse Applications: A Survey.” arXiv:2502.14743 (2025).

Zhao, J., Wang, S., & Peng, N. “An Electoral Approach to Diversify LLM-based Multi-Agent Collective Decision-Making.” EMNLP (2024).

Cemri, M., et al. “Why Do Multi-Agent LLM Systems Fail?” arXiv:2503.13657, MAST Framework (2025).

Gartner Research. “40% of Enterprise Apps Will Feature Task-Specific AI Agents by 2026.” (August 2025).

Khattab, O., et al. “DSPy: Compiling Declarative Language Model Calls into Self-Improving Pipelines.” ICLR (2024).

Chen, J., et al. “ReConcile: Round-Table Conference Improves Reasoning via Consensus among Diverse LLMs.” arXiv (2023).

Agache, A., et al. “Firecracker: Lightweight Virtualization for Serverless Applications.” NSDI (2020).

Peace. Stay curious! End of transmission.