The Secret That Shouldn't Exist - How Modern Agents Get API Access Without Carrying Keys

Stop giving agents long-lived secrets. Learn 5 credential injection patterns - dynamic secrets, workload identity, OAuth token exchange, confidential computing, and network-layer injection - that reduce breach exposure to minutes.

TL;DR:

Every credential your agent carries is a liability. Static API keys can land in logs within seconds of provisioning, leak to GitHub, or sit buried in container images for months. When the breach hits at 2 AM, you won’t know the blast radius - and rotation means downtime.

There’s a better way: credentials that don’t exist until the moment they’re needed, then vanish.

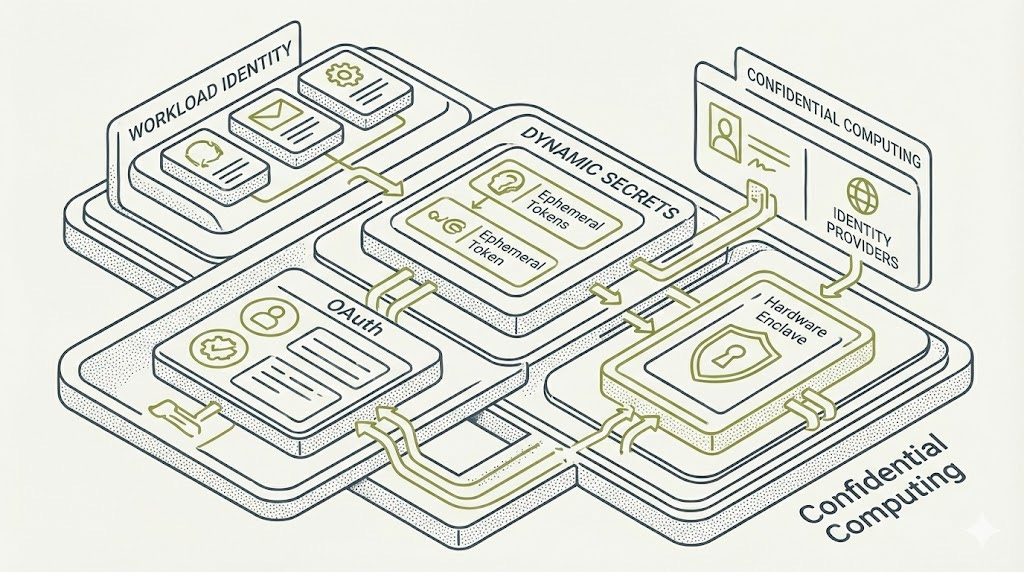

This article breaks down five patterns that eliminate long-lived secrets for autonomous agents. Dynamic secrets from HashiCorp Vault that expire in minutes. Workload identity (IRSA, GCP WI) where your agent is the credential - no keys required. OAuth token exchange for user-delegated access with per-operation scoping. Confidential computing (SGX, TDX, SEV-SNP) for hardware-enforced protection even the cloud provider can’t bypass. And network-layer injection for legacy systems that can’t be modernized.

You’ll get a decision framework for choosing between patterns, working code examples, and specific guidance for security architects, developers, and CISOs. The future belongs to agents that prove their identity - not those that carry their keys.

The Itch: Why This Matters Right Now

If you haven’t lived this yet, you eventually will (hopefully this article spares you the experience). It’s 2 AM, and your phone won’t stop buzzing.

Someone found an AWS access key in a GitHub commit. Or a Slack bot token in a CloudWatch log. Or - worse - a database credential hardcoded in a container image that’s been running in production for eight months.

The credential has been exposed for... how long, exactly? Days? Weeks? You don’t know. The blast radius? Also unclear. You start the painful process of rotation, but rotation means downtime, and downtime means angry customers, and angry customers mean explaining to leadership why your “sophisticated cloud architecture” can be brought down by a copy-paste error.

This is the tax we’ve been paying for decades: the static credential problem.

Here’s the uncomfortable truth. When we gave applications long-lived secrets - API keys that don’t expire, service account passwords rotated quarterly (if we’re lucky), database credentials shared across environments - we created a ticking time bomb. Industry experience consistently shows that credentials frequently appear in application logs shortly after provisioning - often inadvertently through debug statements, error handlers, or overly verbose SDK logging. The window between credential issuance and accidental exposure is alarmingly short, sometimes measured in seconds rather than days.

As Maya Kaczorowski, former security lead at Google, has emphasized: software supply chain security extends beyond code signing to how credentials are provisioned, rotated, and scoped. The credential lifecycle is part of the supply chain - and a weak link anywhere breaks the entire chain.

Now multiply this problem by autonomous agents.

We’re deploying AI agents that need access to APIs, databases, cloud services, and third-party integrations. Each agent is a new surface area for credential exposure. Each agent is another key that could be stolen, logged, or intercepted. The old playbook - “just rotate secrets more often” - doesn’t scale when you have dozens of agents spinning up and down dynamically.

The status quo is broken. The question is: what replaces it?

The Deep Dive: The Struggle for a Solution

The Villain: Turtles All the Way Down

Before we can fix the problem, we need to understand why it’s so stubborn.

Security architects call it the “bottom turtle” problem. Think about how your application currently authenticates to a database. It uses a password. Where does that password come from? An environment variable. Where does that come from? A Kubernetes secret. Where does that come from? Vault. And how does your application authenticate to Vault?

Another secret.

Eventually, you hit a secret with nothing beneath it - no authentication, no attestation, just... trust. That’s your bottom turtle. And attackers know to look for it.

The traditional answer has been to bury this turtle deeper: more encryption, more layers, more complexity. But complexity is the enemy of security. Every layer you add is another thing that can break, another thing to audit, another thing to explain to the compliance team.

We needed a different approach entirely.

The Breakthrough: What If Credentials Didn’t Exist Until You Needed Them?

This is where the paradigm shift happens.

Imagine credentials that are born the moment an agent requests them and die the moment the task completes. No storage. No rotation. No sprawl. If they leak, they’re already expired. This is the promise of dynamic secrets, and HashiCorp Vault pioneered the implementation.

Here’s how it works in practice. Your agent needs database access. Instead of looking up a stored password, it asks Vault: “I need to talk to the Postgres database.” Vault doesn’t hand over a shared credential. Instead, it talks directly to Postgres, creates a brand-new username and password just for this request, stamps an expiration on it - say, 30 minutes - and hands it to the agent.

The agent does its work. When the lease expires, Vault revokes the credential and drops the user from Postgres automatically. No long-lived secret ever reaches the agent.

The numbers are striking. A traditional static credential compromise exposes you for months. A dynamic credential compromise? Minutes. The blast radius shrinks from “catastrophic” to “manageable.”
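
The request-issue-expire cycle described above can be sketched with a toy in-memory broker. This is a simulation under stated assumptions, not Vault’s API: the class name, the `v-<role>-<random>` username format, and the injectable clock are all hypothetical, and a real backend would create and drop actual database users.

```python
import hmac
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Lease:
    username: str
    password: str
    expires_at: float  # seconds on the broker's clock


class ToyDynamicSecretsBroker:
    """In-memory stand-in for a dynamic-secrets backend (hypothetical, not Vault's API)."""

    def __init__(self, ttl_seconds=1800, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._active = {}  # username -> Lease

    def issue(self, role):
        # A brand-new username and password per request; nothing is shared or reused.
        username = f"v-{role}-{secrets.token_hex(4)}"
        lease = Lease(username, secrets.token_urlsafe(24), self._clock() + self._ttl)
        self._active[username] = lease
        return lease

    def is_valid(self, username, password):
        lease = self._active.get(username)
        if lease is None:
            return False
        if self._clock() >= lease.expires_at:
            # Expired: forget the account, as a real backend would on lease expiry.
            del self._active[username]
            return False
        return hmac.compare_digest(lease.password.encode(), password.encode())
```

Passing the clock in as a parameter is only there so the expiry behavior can be exercised without waiting 30 minutes.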

But dynamic secrets solve only part of the puzzle. The agent still needs to authenticate to Vault somehow. We’ve just moved the problem one level up. This is where workload identity enters the picture.

The Identity Revolution: Becoming What You Are

Think about how humans authenticate. When you walk into your office, the security guard doesn’t ask for a password. They look at your face, check it against your badge photo, and let you through. Your identity is derived from who you are, not from a secret you carry.

AWS IAM Roles for Service Accounts (IRSA) applies this principle to Kubernetes pods. Instead of giving your agent an AWS access key, you tell AWS: “Trust this Kubernetes service account as if it were this IAM role.” When the agent runs, it doesn’t carry credentials. It is the credential.

The mechanics are elegant. Kubernetes projects a signed service account token (a JWT) into the pod, and the cluster’s OIDC endpoint publishes the public keys needed to verify it - essentially cryptographic proof that “yes, this pod is really running with this service account.” AWS verifies the token’s signature and checks its claims against the IAM role’s trust policy. If everything matches, it issues temporary credentials that expire in an hour by default.

The agent code? It doesn’t change at all. The AWS SDK automatically detects it’s running in this environment, fetches the token, exchanges it for temporary credentials, and handles refresh. The developer never sees a key.
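
To make the trust check concrete, here is a minimal sketch of the claims a projected service account token carries and how a trust policy can pin them. It is illustrative only: it decodes the token without verifying the signature (which AWS does against the cluster’s published keys), and the function names and the issuer URL used in any example call are assumptions, not part of any AWS SDK.

```python
import base64
import json


def decode_jwt_claims(token):
    """Return the claims of a JWT WITHOUT verifying its signature (inspection only)."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("expected header.payload.signature")
    payload = parts[1]
    payload += "=" * (-len(payload) % 4)  # restore stripped base64url padding
    return json.loads(base64.urlsafe_b64decode(payload))


def matches_trust_policy(claims, issuer, subject, audience="sts.amazonaws.com"):
    """Mimic the issuer/subject/audience conditions a role trust policy can pin."""
    aud = claims.get("aud")
    audiences = aud if isinstance(aud, list) else [aud]
    return (
        claims.get("iss") == issuer
        and claims.get("sub") == subject
        and audience in audiences
    )
```

For a Kubernetes service account, the subject claim has the form `system:serviceaccount:<namespace>:<name>`, which is what lets a role trust exactly one service account rather than the whole cluster.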

Kelsey Hightower’s pioneering work on Kubernetes secrets management and workload identity helped establish many of these patterns as industry standard. His demonstrations of “keyless” authentication - where pods prove identity through platform attestation rather than stored secrets - shifted how the community thinks about credential bootstrapping.

Google Cloud has Workload Identity. Azure has Managed Identities. The pattern is universal: identity derived from platform attestation, not bootstrapped secrets.

IRSA in Practice: Zero-Credential Code

Here is what that looks like in code. The AWS SDK detects the injected service account token and handles the credential exchange on its own:

import boto3

# No AWS_ACCESS_KEY_ID or AWS_SECRET_ACCESS_KEY set anywhere.

# The SDK detects IRSA automatically via AWS_WEB_IDENTITY_TOKEN_FILE

# and AWS_ROLE_ARN environment variables injected by Kubernetes.

s3 = boto3.client('s3') # Credentials fetched transparently

buckets = s3.list_buckets()

for bucket in buckets['Buckets']:

    print(bucket['Name'])

That’s it. No secrets in environment variables. No credential files. No rotation logic. The SDK exchanges the Kubernetes service account token for temporary AWS credentials, refreshes them automatically, and your code simply works.

For comparison, here’s what you’d need without IRSA - storing long-lived credentials that could leak:

# ❌ DON'T DO THIS: Static credentials that can be logged, stolen, or forgotten

# This key will end up leaked in logs

aws_access_key = 'AKIAIOSFODNN7EXAMPLE'

# This secret will get committed to Git

aws_secret_key = 'wJalrXUtnFEMI/K7MDENG'

s3 = boto3.client(

    's3',

    aws_access_key_id=aws_access_key,

    aws_secret_access_key=aws_secret_key

)

The first example is secure by default. The second is a breach waiting to happen.

But IRSA only speaks AWS, and agents rarely stay inside one platform.

Universal Identity: SPIFFE and SPIRE

Workload identity solves the problem beautifully - if you’re in a single cloud. But what happens when your agents span AWS, GCP, and on-prem data centers? You can’t use IRSA to authenticate to a GCP service, and Azure Managed Identity doesn’t help you talk to AWS.

This is where SPIFFE (Secure Production Identity Framework for Everyone) enters the picture. SPIFFE is an open standard that defines a universal identity format for workloads, regardless of where they run. SPIRE is the reference implementation that issues and manages these identities.

The core concept is the SVID - a SPIFFE Verifiable Identity Document. Every workload gets a cryptographic identity, typically a short-lived X.509 certificate or JWT, that carries a SPIFFE ID: a URI of the form spiffe://trust-domain/workload-identifier. SPIRE agents running on each node attest workloads based on properties like Kubernetes service account, AWS instance metadata, or process attributes, then issue SVIDs automatically.

The elegance is that SPIRE solves the “bottom turtle” problem by anchoring trust in platform attestation rather than a bootstrap secret. Your agent running in Kubernetes gets an SVID based on its service account. Your agent running on an EC2 instance gets an SVID based on its instance identity document. No shared secrets. No manual provisioning. The platform is the root of trust.

Once workloads have SVIDs, they can authenticate to each other using mutual TLS (mTLS) or present JWTs to services that accept them. Vault, for example, can authenticate workloads via SPIFFE, closing the loop: your agent proves its identity to SPIRE, receives an SVID, presents it to Vault, and receives dynamic database credentials - all without a single long-lived secret in the chain.

For organizations running across multiple clouds or hybrid environments, SPIFFE/SPIRE provides the universal identity layer that makes everything else work.
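
As a small illustration of how a service might act on a peer’s SPIFFE identity once the SVID itself has been verified, the sketch below parses a SPIFFE ID and authorizes by trust domain and path. The function names and the example IDs are hypothetical, the validation is deliberately partial, and certificate or JWT verification is assumed to have happened elsewhere (for instance in the mTLS handshake).

```python
from urllib.parse import urlparse


def parse_spiffe_id(spiffe_id):
    """Split a SPIFFE ID into (trust_domain, path), rejecting obviously malformed input."""
    parsed = urlparse(spiffe_id)
    if parsed.scheme != "spiffe":
        raise ValueError("scheme must be 'spiffe'")
    if not parsed.netloc:
        raise ValueError("trust domain is required")
    if parsed.query or parsed.fragment or "@" in parsed.netloc or ":" in parsed.netloc:
        raise ValueError("query, fragment, userinfo, and port are not allowed")
    return parsed.netloc, parsed.path


def is_authorized(spiffe_id, trust_domain, allowed_paths):
    """Allow only workloads from our trust domain whose path is explicitly listed."""
    domain, path = parse_spiffe_id(spiffe_id)
    return domain == trust_domain and path in allowed_paths
```

Matching on an explicit allow-list of paths, rather than a prefix, keeps the authorization decision as narrow as the identity itself.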

The Delegation Problem: Acting on Someone Else’s Behalf

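So far the agent has acted as itself. But what happens when it needs to act on behalf of a user, say to reach their Google Drive or their Salesforce account? This is where OAuth 2.0 Token Exchange (RFC 8693) becomes essential.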

Picture this scenario: A user authorizes your AI agent to access their email. You receive an OAuth token for your application. But your agent needs to call a backend service that only accepts tokens issued for it, not for your frontend. And you want the scope limited - the agent should be able to read emails, not delete them.

Token exchange solves this by letting you trade one token for another with different properties. Your agent presents the user’s original token, proves its own identity, and receives back a new token scoped to exactly what it needs, with an expiration measured in minutes, not hours.
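
The trade described above maps onto a small set of form parameters defined by RFC 8693. The sketch below builds them; the parameter names and URN values come from the RFC, while the function name, the audience URL, and the scope string are hypothetical, and the HTTP call to the token endpoint (plus client authentication) is left out.

```python
from urllib.parse import urlencode

TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"


def token_exchange_params(subject_token, audience, scope, actor_token=None):
    """Build RFC 8693 request parameters for a narrower, audience-bound token."""
    params = {
        "grant_type": TOKEN_EXCHANGE_GRANT,
        "subject_token": subject_token,          # the user's original token
        "subject_token_type": ACCESS_TOKEN_TYPE,
        "requested_token_type": ACCESS_TOKEN_TYPE,
        "audience": audience,                    # the backend that will accept the new token
        "scope": " ".join(scope),                # only what this operation needs
    }
    if actor_token is not None:
        # Optional: the agent's own token, identifying who acts for the user.
        params["actor_token"] = actor_token
        params["actor_token_type"] = ACCESS_TOKEN_TYPE
    return params


# Hypothetical values; the result is the form-encoded body of a POST to the token endpoint.
body = urlencode(token_exchange_params("user-token", "https://mail.internal.example", ["mail.read"]))
```

Requesting `mail.read` alone is what keeps the agent able to read emails but not delete them; the authorization server decides whether to grant the narrower token.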

Auth0 built an implementation specifically for AI agents: a “Token Vault” that stores user refresh tokens encrypted at rest. The agent never sees the refresh token. When it needs access, it requests a token exchange, receives a short-lived access token, makes its API call, and the token expires. Fresh credentials for every operation, minimal permissions, clear audit trail.

This is the difference between giving someone your house key and letting them in through a video doorbell that requires approval each time.

The Hardware Guarantee: When Software Trust Isn’t Enough

Sometimes, software-level protections aren’t sufficient. Regulated industries, multi-tenant environments, zero-trust cloud deployments - these scenarios demand confidential computing.

The premise is radical: what if even the cloud provider couldn’t read your secrets?

Intel SGX creates hardware-enforced “enclaves” - regions of memory that the CPU encrypts and protects from everything else on the system, including the operating system and hypervisor. Your agent’s most sensitive operations happen inside this enclave. The API key is designed to be unreadable from outside, even by someone with physical access to the server, although published side-channel attacks show the guarantee is strong rather than absolute.

AMD SEV-SNP takes a different approach: the entire virtual machine becomes confidential. Memory is encrypted with keys managed by the CPU itself. The hypervisor can schedule your VM but cannot inspect its contents.

Intel TDX (Trust Domain Extensions) is Intel’s newest confidential computing technology. Like SEV-SNP, and unlike SGX’s granular enclave model, it protects a whole virtual machine. TDX creates hardware-isolated virtual machines called “Trust Domains” that are protected from the hypervisor and other VMs. Unlike SGX, TDX doesn’t require application rewrites - it operates at the VM level, requiring only OS and hypervisor support. TDX is available on 4th and 5th generation Xeon processors and is supported by Azure and GCP for confidential VM workloads.

All three technologies support remote attestation - a cryptographic proof that your code is running unmodified inside the secure environment. A secrets provisioning service can verify this attestation before releasing credentials, ensuring that secrets only reach legitimate, untampered agents.
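
The gating logic of attestation-based secret release can be sketched in a few lines. This is a simplified model under heavy assumptions: the `report` dict stands in for an attestation report whose hardware signature has already been verified, `measure` uses a plain SHA-256 digest in place of a hardware-produced measurement, and all names are hypothetical rather than taken from any SGX, TDX, or SEV-SNP SDK.

```python
import hashlib
import hmac


def measure(code_bytes):
    """Stand-in for a hardware-produced measurement: a SHA-256 digest of the code."""
    return hashlib.sha256(code_bytes).hexdigest()


def release_secret(report, expected_measurement, expected_nonce, secret):
    """Return the secret only if the report matches the expected measurement and nonce.

    ASSUMPTION: the report's hardware signature was verified before this call;
    that verification is vendor-specific and not shown here.
    """
    fresh = hmac.compare_digest(report.get("nonce", ""), expected_nonce)
    untampered = hmac.compare_digest(report.get("measurement", ""), expected_measurement)
    if fresh and untampered:
        return secret
    return None
```

The nonce check is there to stop a previously captured report from being replayed; the measurement check is what ties the secret to one exact build of the agent.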

The tradeoff is complexity and performance. Performance overhead varies significantly by workload and technology. AMD SEV-SNP typically adds 2-5% overhead for compute-bound tasks, since encryption happens transparently at the memory controller. Intel SGX overhead can reach 10-20% for memory-intensive applications due to enclave page cache (EPC) limitations and the cost of transitioning between trusted and untrusted code. Intel TDX generally falls between these extremes - offering VM-level protection with lower overhead than SGX but slightly more than SEV-SNP due to additional integrity checks. For most workloads, expect single-digit percentage impacts; for memory-heavy or enclave-intensive applications, plan for more.

This isn’t for every workload - but for high-value secrets in hostile environments, it’s the strongest guarantee available.

The Transparent Path: Network-Layer Injection

There’s one more pattern worth understanding, especially for legacy integrations: credential injection at the network layer.

The concept is simple. Your agent makes HTTP requests without any authentication headers. An intermediary - a service-mesh sidecar proxy such as Istio’s, an authenticating reverse proxy, or even an eBPF program in the kernel - intercepts those requests, fetches the appropriate credentials from a secure store, injects them into the request, and forwards it to the target API.

The agent code has no credentials. Not in memory, not in environment variables, not in logs. The proxy is the only component that touches secrets.
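
The injection step itself is small. The sketch below shows what a proxy might do to an outbound request’s headers; the function name, the dict-based credential store, and the bearer-token scheme are assumptions for illustration, and a real proxy would fetch the credential from a secrets backend at request time.

```python
def inject_credentials(host, headers, credential_store):
    """Return a copy of the outbound headers with credentials added by the proxy."""
    outbound = dict(headers)
    # Drop anything the agent tried to send itself; only the proxy sets auth.
    for name in list(outbound):
        if name.lower() == "authorization":
            del outbound[name]
    token = credential_store.get(host)
    if token is None:
        raise PermissionError(f"no credential configured for {host}")
    outbound["Authorization"] = f"Bearer {token}"
    return outbound
```

Because the function returns a new dict, the agent’s own view of the request never contains the secret, which mirrors the property described above.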

HashiCorp Boundary implements this pattern for SSH: you connect through Boundary without an SSH key, and Boundary fetches the key from Vault and injects it into the connection transparently. The user never sees the key. The key never touches their laptop.

For agents accessing legacy APIs that can’t support modern auth flows, network-layer injection provides a path forward without rewriting the agent.

Choosing Your Pattern: A Decision Framework

With five distinct approaches available, how do you choose? The answer depends on your deployment model, compliance requirements, and how much complexity you’re willing to absorb.

Workload Identity (IRSA, GCP Workload Identity, Azure Managed Identity) is your starting point for single-cloud Kubernetes deployments. It’s free, cloud-native, and requires minimal code changes - often zero. The security guarantee is strong: identity is platform-attested, and no secrets are stored. If you’re running in one cloud and your workloads are containerized, start here.

Dynamic Secrets via HashiCorp Vault fills the gaps that workload identity can’t cover. Database credentials, multi-cloud deployments, legacy systems that need automated rotation - Vault handles all of these. The complexity is moderate: you’re adding infrastructure (Vault clusters) and operational overhead (policies, audit logging). But the payoff is significant: blast radius measured in minutes rather than months, and a single control plane for secrets across environments.

OAuth Token Exchange is essential when your agent acts on behalf of users rather than on behalf of itself. If you’re building agents that access user email, calendars, or third-party SaaS applications, token exchange gives you scoped permissions, per-operation tokens, and a clear audit trail. Auth0’s Token Vault and similar solutions abstract away the complexity of refresh token management, letting you focus on agent logic rather than OAuth plumbing.

Confidential Computing (SGX, TDX, SEV-SNP) is reserved for your highest-stakes scenarios: regulated industries with strict compliance requirements, multi-tenant environments where tenant isolation is paramount, or zero-trust cloud deployments where you can’t fully trust the infrastructure provider. The complexity is high - you’re dealing with hardware dependencies, attestation flows, and potential application partitioning (for SGX). But the guarantee is unmatched: hardware-enforced memory encryption that even privileged attackers can’t bypass.

Network-Layer Injection solves a specific problem: legacy APIs and applications that can’t be modified to support modern authentication. If you’re integrating with systems that expect static API keys or basic auth, injecting credentials at the proxy layer keeps secrets out of application code entirely. It’s also useful for SSH and RDP access patterns where you want credentials to exist only for the duration of a session.

The heuristic is straightforward: start with workload identity wherever possible - it’s the lowest friction and highest security baseline. Layer in Vault for anything workload identity can’t reach. Add OAuth token exchange when users delegate access to agents. Reserve confidential computing for crown-jewel secrets in hostile or regulated environments. Use network-layer injection for legacy systems that can’t be modernized.
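
The heuristic can also be written down as a checklist function. This is only a restatement of the paragraph above in code; the parameter names are hypothetical, and real decisions will involve more nuance than five booleans.

```python
def recommend_patterns(*, workload_identity_available, uncovered_by_workload_identity,
                       acts_on_behalf_of_users, hostile_or_regulated, legacy_static_auth):
    """Return the credential patterns to layer, in the order the heuristic suggests."""
    patterns = []
    if workload_identity_available:
        patterns.append("workload identity")
    if uncovered_by_workload_identity:
        patterns.append("dynamic secrets")
    if acts_on_behalf_of_users:
        patterns.append("oauth token exchange")
    if hostile_or_regulated:
        patterns.append("confidential computing")
    if legacy_static_auth:
        patterns.append("network-layer injection")
    return patterns
```

The patterns are additive rather than exclusive: an agent that runs on Kubernetes, reads a database, and acts for users would get the first three back.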

The Resolution: Your New Superpower

The future of agent credentials isn’t about better secret storage or more frequent rotation. It’s about eliminating stored secrets entirely and deriving credentials from identity.

For multi-cloud deployments or organizations demanding uniform identity across environments, SPIFFE and SPIRE provide a foundation. Every workload gets a cryptographic identity - a short-lived X.509 certificate or JWT - issued based on platform attestation, automatically rotated, and usable for mTLS everywhere. The “bottom turtle” becomes the platform itself, not a secret.

The pattern emerging across all these approaches is consistent: short-lived credentials, platform-attested identity, zero trust by default.

Here’s what this means for you. Security architects: design for credential ephemerality from the start. Developers: lean on SDKs and workload identity; stop passing API keys through environment variables. CISOs: measure blast radius, not rotation frequency. The question isn’t “how often do we rotate?” It’s “how long does exposure last?”

The organizations investing in these patterns now aren’t just reducing incidents. They’re building infrastructure that enables agent deployment without fear. They’re accelerating innovation by removing security as a bottleneck.

The future belongs to agents that can prove their identity - not those that carry their keys.

Peace. Stay curious! End of transmission.

References

HashiCorp Engineering — Vault Dynamic Secrets Architecture and Implementation Patterns (https://developer.hashicorp.com/vault/)

AWS Containers Blog — “Diving into IAM Roles for Service Accounts” — Technical deep dive on IRSA mechanics

RFC 8693 — OAuth 2.0 Token Exchange (IETF Standard, https://datatracker.ietf.org/doc/html/rfc8693)

SPIFFE/SPIRE Project — Secure Production Identity Framework specifications (https://spiffe.io/docs/)

Confidential Computing Consortium — AMD SEV-SNP, Intel SGX, and Intel TDX attestation documentation (https://confidentialcomputing.io/)

Auth0 — Token Vault for AI Agents (https://auth0.com/ai/docs/intro/token-vault)

Intel — Trust Domain Extensions Overview (https://www.intel.com/content/www/us/en/developer/tools/trust-domain-extensions/overview.html)

Kelsey Hightower — “Kubernetes Secrets Management” (KubeCon keynotes) and GCP Workload Identity documentation contributions

Maya Kaczorowski — Software Supply Chain Security research (formerly Google)