AI Governance Engineering: Bridging the Policy-Control Gap

Discover the five essential engineering artifacts needed to comply with the EU AI Act, NIST AI RMF, and ISO 42001. Move from AI policy to actual security.

Disclaimer

This article is intended for informational purposes and reflects the state of published research and industry practice as of early 2026. It is not professional security advice. Your specific environment, threat model, and regulatory obligations will shape how these principles apply to your situation.

TL;DR

We have spent some time dissecting the regulatory landscape of AI governance, but here is the uncomfortable truth: frameworks like the EU AI Act, NIST AI RMF, and ISO 42001 only tell you what outcomes you need, not how to engineer them. Your compliance lead might feel secure with a stack of policy documents and signed agreements. However, when an auditor walks in on September 1, 2026, and asks for the cryptographically intact logs from a high-risk hiring system decision, a Confluence page will not save you. The gap between a policy and an operational security control is vast, and bridging it requires actual engineering work. I have mapped the essential requirements of the EU AI Act directly to the operational clauses of NIST and ISO to identify the five non-negotiable engineering artifacts you must build before the deadline. From an append-only log store to a technical Human-in-the-Loop interface, these are not paperwork exercises. They are structural components of a secure AI architecture. If you wait until the audit to discover this gap, the cost could be devastating.

The Itch: Why This Matters Right Now

Picture your compliance lead walking into a room on September 1, 2026.

The notified body’s review is wrapping up. Every policy maps to an article. The Statement of Applicability accounts for all 38 ISO 42001 Annex A controls. The NIST AI RMF gap assessment runs 47 pages. The responsible AI policy is framed and mounted on the wall behind the reception desk.

Then the auditor asks to see the logs from the hiring system’s decision run last Tuesday.

Not the logging policy. Not the logging architecture diagram. The actual log record: timestamped, cryptographically intact, showing which model version processed which input, what the confidence score was, and which named human reviewed and confirmed the output before the candidate was rejected.

Someone checks a Confluence page. Someone else sends a Slack message to the engineering team.

That pause is the gap. And once the high-risk obligations apply on August 2, 2026, that pause can cost you up to EUR 15 million or 3% of worldwide annual turnover, whichever is higher, rather than just an uncomfortable retrospective.

The governance series told you what the regulators want. It mapped three layers of obligation: the technical standards layer, the binding law layer, and the international coordination layer. What that series could not do, by design, was tell your engineers what to build. Governance frameworks specify outcomes. Engineering builds the mechanisms that produce those outcomes. Those are different activities, and treating documentation as implementation is the most expensive technical mistake the next eighteen months will surface.

I spent the last research cycle doing the translation work. What I found is that five engineering artifacts separate an organization that survives August 2026 from one that does not. None of them can be a Word document.

The Deep Dive: The Struggle for a Solution

The structural gap, stated plainly

Every major governance framework shaping AI compliance right now was designed as either a management system standard, a risk management methodology, or a binding essential-requirements regulation. None is a security engineering specification. That single sentence is the villain of this story, and if you read the governance series (if you haven’t yet, go check it out), you already feel it. The frameworks tell you what state your system must be in. They do not tell you what to build to get it there.

That gap is structural, not accidental. And it gets worse when you look at what sits inside the black box.

The tenant you cannot see

The Berryville Institute of Machine Learning published an architectural risk analysis of large language models in January 2024. It identifies 81 risks organized by system component, with 23 of those risks located inside the foundation model itself: the black box that sits at the center of most enterprise LLM deployments and hides its behavior from everyone building on top of it.

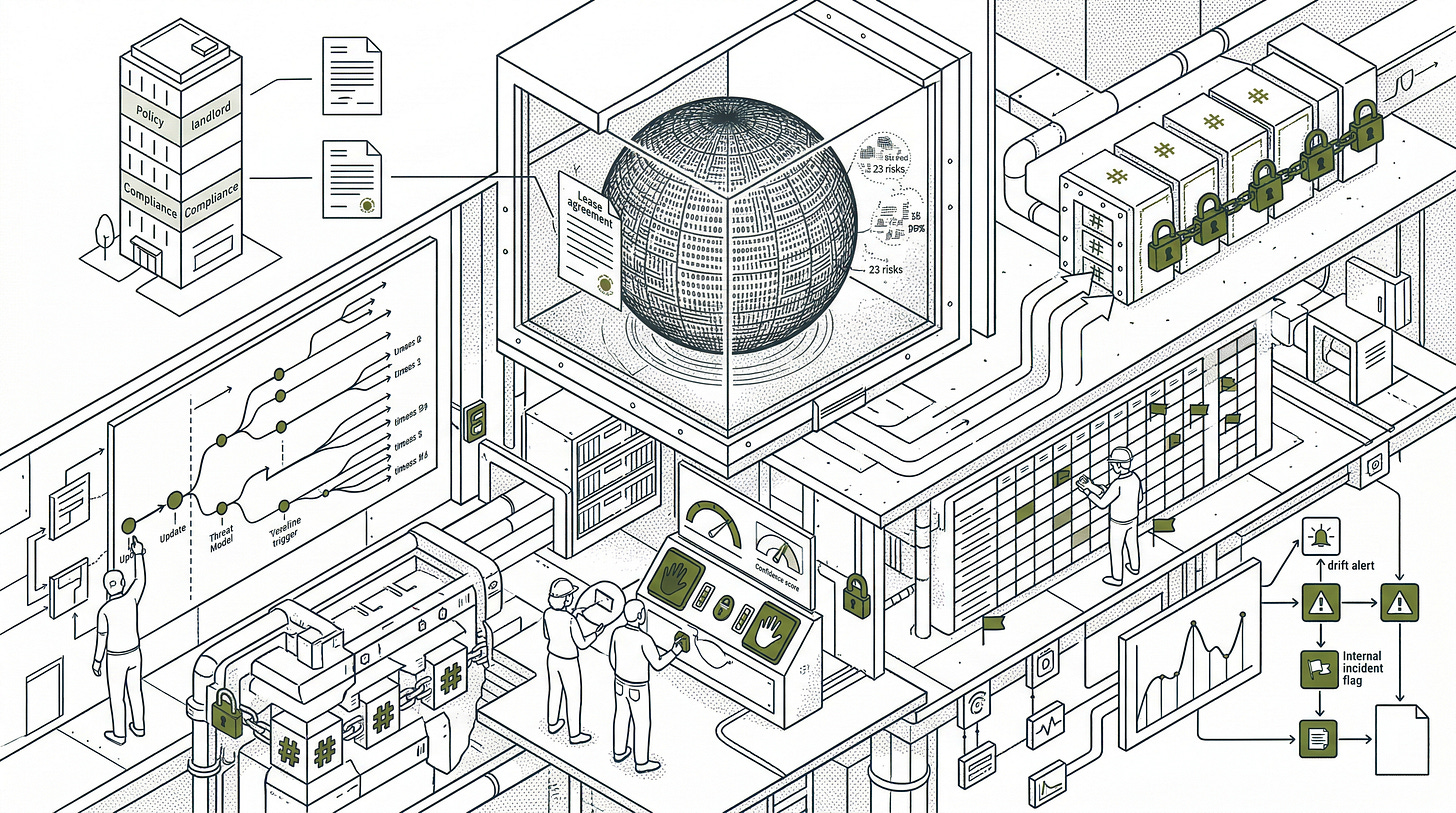

Think of your foundation model as a black-box tenant. You have a lease agreement (your acceptable use policy), a visitor log (your audit trail documentation), and an emergency exit procedure (your incident response plan). The tenant occupies the most consequential room in the building. It processes your most sensitive data. It produces the outputs that drive hiring decisions, credit assessments, and access determinations. And it has 23 documented habits you cannot observe or interrupt at the policy layer.

The BIML analysis puts it directly: securing a modern LLM system must involve diving into the engineering and design of the specific system itself. Security is an emergent property of a system. A tenant carrying 23 unobservable structural risk behaviors does not become safe because the lease agreement describes safe behavior. You need controls in the building, not clauses in the contract. CISA frames the organizational consequence: AI is the high-interest credit card of technical debt. The governance frameworks create the obligation to manage that tenant responsibly. They do not build the controls that make it possible.

The five artifacts

I mapped Articles 9, 12, 13, and 14 of the EU AI Act to the NIST AI RMF Playbook and ISO 42001’s operative clauses. Five engineering artifacts appear at every intersection. Each one maps back to the building.

The first is a versioned threat model with update triggers. Article 9 requires a risk management system that runs as a continuous iterative process across the entire system lifecycle, with regular systematic review and updating. The joint NSA/CISA guidance from April 2024 advises deploying organizations to require a threat model from the primary developer and to use it as their implementation guide. Think of this as the building inspection schedule: it does not matter how thorough the initial inspection was if the tenant renovates the interior every three months and nobody re-inspects. A threat model produced at project inception and filed away fails Article 9’s lifecycle continuity requirement. Every model version update, data distribution shift, and post-deployment incident is a renovation event. The threat model needs a revision history indexed to system versions, with update triggers defined in advance, not retrospectively.
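To make "revision history indexed to system versions" concrete, here is a minimal Python sketch. The class names, field names, and the three trigger values are my own illustrative assumptions drawn from the renovation events above; neither Article 9 nor the NSA/CISA guidance prescribes a schema.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum


class Trigger(Enum):
    """Hypothetical update triggers, one per 'renovation event' named above."""
    MODEL_VERSION_UPDATE = "model_version_update"
    DATA_DISTRIBUTION_SHIFT = "data_distribution_shift"
    POST_DEPLOYMENT_INCIDENT = "post_deployment_incident"


@dataclass(frozen=True)
class Revision:
    threat_model_version: int
    system_version: str  # the system version this review was performed against
    trigger: Trigger
    reviewed_on: date
    summary: str


@dataclass
class ThreatModel:
    system_name: str
    revisions: list = field(default_factory=list)

    def record_review(self, system_version, trigger, reviewed_on, summary):
        """Append a new revision; earlier revisions are never edited."""
        revision = Revision(len(self.revisions) + 1, system_version,
                            trigger, reviewed_on, summary)
        self.revisions.append(revision)
        return revision

    def is_current_for(self, deployed_version):
        """True only if the latest revision covers the deployed system version."""
        return bool(self.revisions) and \
            self.revisions[-1].system_version == deployed_version


threat_model = ThreatModel("hiring-screener")  # hypothetical system name
threat_model.record_review("1.0.0", Trigger.MODEL_VERSION_UPDATE,
                           date(2026, 1, 15), "Review for first production model")
print(threat_model.is_current_for("1.0.0"))  # True
print(threat_model.is_current_for("1.1.0"))  # False: 1.1.0 shipped without a re-review
```

A deployment pipeline could call something like `is_current_for` as a release gate, so that a new model version cannot ship until the threat model has a matching revision.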

The second is an append-only, cryptographically protected log store. Article 12 requires that high-risk AI systems technically allow for the automatic recording of events across the system’s lifetime. Article 19 requires providers to retain those logs for at least six months. This is the tamper-proof surveillance record the landlord keeps regardless of whether the tenant cooperates. The joint NSA/CISA guidance specifies encryption at rest with keys in a hardware security module (HSM). The draft standard ISO/IEC DIS 24970:2025 on AI system logging confirms append-only storage with strict access controls. The architecture: an event capture pipeline sitting between the inference layer and external services, writing structured log entries with deterministic identifiers (chain ID, model version, input hash, output hash, timestamp) to an append-only backend with cryptographic hash chaining. Zero modification access. Read restricted to authorized audit roles. Retroactive log reconstruction is explicitly insufficient. When the auditor asks about last Tuesday, the infrastructure answers, not a Confluence page.
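The hash-chaining idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a production log store: the in-memory list stands in for a real append-only backend, the class and method names are hypothetical, and there is no HSM or access control here. The field names follow the identifiers listed above.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def sha256_hex(text):
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def entry_hash(body):
    # Canonical JSON so the same record always hashes to the same value.
    return sha256_hex(json.dumps(body, sort_keys=True, separators=(",", ":")))


class HashChainedLog:
    """In-memory stand-in (assumption) for an append-only backend."""

    def __init__(self):
        self._entries = []

    def append(self, chain_id, model_version, input_text, output_text, timestamp=None):
        body = {
            "chain_id": chain_id,
            "model_version": model_version,
            "input_hash": sha256_hex(input_text),
            "output_hash": sha256_hex(output_text),
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
            # Each entry commits to the hash of the entry before it.
            "prev_hash": self._entries[-1]["entry_hash"] if self._entries else GENESIS,
        }
        entry = dict(body, entry_hash=entry_hash(body))
        self._entries.append(entry)
        return dict(entry)

    def entries(self):
        return [dict(e) for e in self._entries]  # copies; callers cannot mutate the log

    def verify(self, entries=None):
        """Recompute every hash and check every link in the chain."""
        entries = self._entries if entries is None else entries
        prev = GENESIS
        for e in entries:
            body = {k: v for k, v in e.items() if k != "entry_hash"}
            if e["prev_hash"] != prev or entry_hash(body) != e["entry_hash"]:
                return False
            prev = e["entry_hash"]
        return True


log = HashChainedLog()
log.append("chain-001", "screener-1.0.0", "candidate CV text", "reject")
log.append("chain-002", "screener-1.0.0", "another CV text", "advance")
print(log.verify())  # True

tampered = log.entries()
tampered[0]["model_version"] = "screener-0.9.0"
print(log.verify(tampered))  # False: the edited record no longer matches its hash
```

Because each entry includes the previous entry's hash, editing or deleting any historical record breaks verification for that record or the one after it. The chain only detects tampering; preventing it still depends on the storage backend and access controls described above.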

The third is a technical Human-in-the-Loop interface with override and stop controls. Article 14 requires that high-risk AI systems be designed with appropriate human-machine interface tools enabling effective oversight during the period of use. Article 14 then specifies six capabilities the overseer must be enabled to exercise: understanding the system’s capabilities and limitations, detecting and addressing anomalies, avoiding over-reliance, interpreting outputs, deciding not to use the system, and interrupting system operation.

This is the lockout mechanism on the rooms where consequential decisions happen. A policy stating “human reviewers will assess AI outputs before consequential decisions are made” describes the desired state. The phrase “will assess” does not build the confidence score display. It does not create the override button. It does not write the dual-authorization workflow. A policy is the lease clause requiring the tenant to behave; a control is the physical lock that enforces it.
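The difference between the clause and the lock can be sketched as a release gate. This is a minimal Python illustration under my own assumptions: the class names, the fields, and the exception types are hypothetical, and a real interface would also display the confidence score and explanation to the reviewer.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    CONFIRM = "confirm"
    OVERRIDE = "override"


@dataclass(frozen=True)
class ModelOutput:
    candidate_id: str
    recommendation: str  # e.g. "reject"
    confidence: float    # shown to the reviewer before they decide


@dataclass(frozen=True)
class Review:
    reviewer_id: str     # a named human, not a service account
    decision: Decision
    final_outcome: str   # used only when the reviewer overrides


class ReviewRequired(Exception):
    pass


class SystemStopped(Exception):
    pass


class HitlGate:
    """No output leaves the system without a recorded human decision."""

    def __init__(self):
        self._stopped = False

    def stop(self):
        # The stop control: once pressed, nothing is released.
        self._stopped = True

    def release(self, output: ModelOutput, review: Optional[Review]) -> str:
        if self._stopped:
            raise SystemStopped("system operation was interrupted by an overseer")
        if review is None or not review.reviewer_id:
            raise ReviewRequired(f"no named reviewer for {output.candidate_id}")
        if review.decision is Decision.CONFIRM:
            return output.recommendation
        return review.final_outcome  # the override replaces the model's output


gate = HitlGate()
output = ModelOutput("cand-42", "reject", 0.62)
print(gate.release(output, Review("reviewer-7", Decision.OVERRIDE, "advance")))  # advance
```

The design point is that the unreviewed path raises an error instead of falling through, so the policy sentence "human reviewers will assess AI outputs" is enforced by the code path itself.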

Here is the dependency most API builders miss: the six capabilities in Article 14 require interfaces the provider must expose. If your upstream model vendor does not surface a calibrated confidence score, an explanation output, and an override control in its API, you cannot build deployer-side oversight controls (the Article 14(3)(b) route, Path B here) regardless of your engineering investment. Before you write a line of HITL code, open your model vendor’s API documentation and check for those three surfaces. If they are absent, the Article 25 contract discussion is your next move, not a sprint ticket. Article 13 requires providers to supply deployers with sufficient information to implement human oversight downstream. That is a technical dependency, not a documentation courtesy.

For Annex III biometric identification systems, Article 14 adds a harder constraint: no consequential output may bypass confirmation by at least two trained and authorized natural persons. That four-eyes requirement must be enforced at the system level, not honored by process. A dual-authorization workflow that a determined operator can bypass with a single checkbox is not Article 14 compliance.
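A system-level four-eyes check might look like the following Python sketch. The class and method names are hypothetical, and the list of authorized reviewers is assumed to come from whatever identity system you already run; the point is only that the release path has no single-person shortcut.

```python
class DualAuthorization:
    """Four-eyes gate: release requires confirmations from distinct authorized people."""

    def __init__(self, authorized_reviewers, required=2):
        if required < 2:
            raise ValueError("four-eyes enforcement needs at least two reviewers")
        self._authorized = frozenset(authorized_reviewers)
        self._required = required
        self._confirmed = set()

    def confirm(self, reviewer_id):
        if reviewer_id not in self._authorized:
            raise PermissionError(f"{reviewer_id} is not an authorized reviewer")
        # A set, so the same person confirming twice still counts once.
        self._confirmed.add(reviewer_id)

    def release(self, output):
        if len(self._confirmed) < self._required:
            raise PermissionError(
                f"blocked: {len(self._confirmed)} of {self._required} confirmations")
        return output


gate = DualAuthorization({"alice", "bob", "carol"})
gate.confirm("alice")
gate.confirm("alice")        # a repeat confirmation does not count twice
gate.confirm("bob")
print(gate.release("match confirmed"))  # match confirmed
```

There is deliberately no bypass flag or admin override in this sketch. If one is added later, it becomes exactly the single checkbox the paragraph above warns about.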

The fourth is a data governance registry covering inference-time data. ISO 42001 control A.4.3 requires documentation of data resources at all lifecycle stages. Most initial implementations documented training datasets and stopped, because at certification time, RAG pipelines and persistent agent memory stores were not yet in production scope. They are now. The retrieval corpus for a RAG system is an inference-time data resource. An outdated or poisoned document retrieved at runtime influences a high-risk output, which is a risk event under Article 12’s logging trigger. NIST AI 600-1, the Generative AI Profile published July 2024, specifies controls for data provenance and retrieval integrity that map directly to A.4.3. The registry must cover source, version, ingestion date, and scheduled staleness review dates for every document in the retrieval pool. Agent memory requires snapshot versioning and a documented reset procedure. If your system’s behavior at inference time is a function of accumulated context nobody has reviewed, you have an unsupervised state change problem, not a documentation gap.
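As a rough Python sketch, a registry entry and its staleness check could look like this. The field names mirror the four attributes listed above; the document IDs, sources, and dates are invented purely for illustration, and a real registry would live in a database rather than a list.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RegistryEntry:
    doc_id: str
    source: str
    version: str
    ingested_on: date
    review_due: date  # scheduled staleness review date


def overdue_for_review(entries, today):
    """Return the entries whose staleness review date has arrived or passed."""
    return [e for e in entries if e.review_due <= today]


# Hypothetical retrieval-pool contents.
registry = [
    RegistryEntry("hr-policy-007", "intranet/hr", "v3", date(2025, 9, 1), date(2026, 3, 1)),
    RegistryEntry("job-spec-112", "ats-export", "v1", date(2026, 2, 10), date(2026, 8, 10)),
]

for entry in overdue_for_review(registry, today=date(2026, 4, 1)):
    print(f"staleness review overdue: {entry.doc_id} ({entry.version})")
# prints: staleness review overdue: hr-policy-007 (v3)
```

Running a check like this on a schedule, and routing its output to an owner, is what turns the registry from a document into a control.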

The fifth is a post-market monitoring pipeline with a documented escalation path. Article 72 requires a post-market monitoring plan. Article 73 requires serious incident reporting to competent authorities. The NIST AI RMF MANAGE function requires tracking of negative risks throughout deployment and defined incident response procedures. The artifact is an automated pipeline tracking model performance against baselines defined at deployment, alerting on statistical output drift, triggering a risk review when thresholds are crossed, and maintaining a versioned performance record indexed to model version. The escalation path from internal incident flag to Article 73 competent authority report must exist before deployment. It cannot be assembled during an incident.
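The drift-alert step can be illustrated with a deliberately simple Python check. Comparing a recent outcome rate with the deployment baseline against a fixed threshold is my stand-in for whatever statistical drift test you actually adopt; the function name, the record fields, and the numbers are all assumptions.

```python
from statistics import mean


def drift_review(model_version, baseline_rate, recent_outcomes, threshold):
    """Compare the recent positive-outcome rate with the deployment baseline.

    recent_outcomes is a list of 0/1 values (for example 1 = candidate rejected).
    Returns a record that can be stored in a versioned performance history.
    """
    if not recent_outcomes:
        raise ValueError("no recent outcomes to evaluate")
    recent_rate = mean(recent_outcomes)
    delta = abs(recent_rate - baseline_rate)
    return {
        "model_version": model_version,
        "baseline_rate": baseline_rate,
        "recent_rate": recent_rate,
        "delta": delta,
        # Crossing the threshold triggers a risk review, not an automatic fix.
        "risk_review_required": delta > threshold,
    }


# Hypothetical numbers: 30% rejection rate at deployment, 70% in the latest window.
record = drift_review("screener-1.0.0", 0.30, [1, 1, 1, 0, 1, 0, 1, 1, 0, 1], 0.10)
if record["risk_review_required"]:
    print(f"open risk review for {record['model_version']}")
```

The record carries the model version so that the performance history stays indexed to it. The step from "risk review opened" to an Article 73 report is an organizational path that still has to be defined separately.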

The Resolution: Your New Superpower

Picture the same room. September 1, 2026. Same notified body. Same auditor. Same question about last Tuesday’s logs.

This time the compliance lead opens a terminal. Eight seconds later, a structured log record appears: chain ID, model version, input hash, confidence score, the timestamp of the human reviewer’s confirmation, their identity, the override control they used. The auditor nods and moves on.

The tenant still has 23 habits you cannot observe at the policy layer. The building now has a tamper-proof surveillance record, a lockout mechanism on every consequential decision room, and an inspection schedule that updates every time the tenant changes anything. The lease agreement did not build those. Your engineers did.

That eight-second answer is not magic. It is the output of infrastructure that somebody built in the months before the deadline. A log pipeline with hash chaining. A HITL interface with a confirmation workflow. A threat model with a revision history. A data registry with a staleness alert that fired three weeks ago and got actioned.

The auditor’s question reveals nothing about compliance intent. It reveals only whether the infrastructure exists to answer it.

Your team has built log pipelines before. It has built access control layers before. It has built monitoring dashboards before. The five artifacts above apply those existing skills to a specific regulatory surface, with specific field requirements and specific retention properties. The novelty is the compliance specification, not the engineering category.

Two moves to make this week.

Take the five artifacts into a conversation with your engineering lead and identify which ones currently exist in production for your highest-risk Annex III system. For each artifact that does not exist, assign an owner and a build deadline before June 2026. That leaves two months for validation before August.

The log pipeline specifically requires six months of operational data before the deadline. If you are reading this in April 2026, that window closed in February. The question is no longer whether to start; it is how much ground you can recover between now and June.

For controls that do exist, check whether they were built to produce compliance evidence or adapted from operational observability tooling. A debugging dashboard that an engineer uses to investigate inference issues may not capture the right fields, with the right immutability guarantees, to answer an auditor’s question. Controls designed for compliance evidence production are different from controls adapted from monitoring infrastructure after the fact.

The organizations arriving at August 2026 with governance documentation and no infrastructure will face the scenario in the opening of this article. The organizations that treated the deadline as a construction project will face an auditor, pull up a log record, and move on.

The next article covers Article 15 (accuracy, robustness, and cybersecurity) and the NIST AI RMF MEASURE function’s adversarial testing requirements. If your system continues to learn after deployment, that article is the one your engineers need before the next sprint planning session.

Fact-Check Appendix

Statement: Article 12(1) of the EU AI Act requires that high-risk AI systems technically allow for the automatic recording of events (logs) over the lifetime of the system. | Source: EU AI Act (Regulation (EU) 2024/1689), Article 12 | https://artificialintelligenceact.eu/article/12/

Statement: Article 19(1) requires providers to retain automatically generated logs for a period of at least six months, unless applicable Union or national law provides otherwise. | Source: EU AI Act, Article 19 | https://artificialintelligenceact.eu/article/19/

Statement: Article 14(5) requires that for Annex III point 1(a) systems, no action or decision may be taken on the basis of the system’s identification output unless separately verified and confirmed by at least two natural persons with the necessary competence, training, and authority. | Source: EU AI Act, Article 14 | https://artificialintelligenceact.eu/article/14/

Statement: The BIML architectural risk analysis of LLMs identifies 81 risks organized by system component, including 23 risks inherent in the black-box foundation model. | Source: Berryville Institute of Machine Learning, “An Architectural Risk Analysis of Large Language Models” (BIML-LLM24), Version 1.0, January 24, 2024 | https://berryvilleiml.com/docs/BIML-LLM24.pdf

Statement: NIST AI 600-1 (Generative AI Profile) was published July 26, 2024. | Source: NIST AI 600-1 | https://nvlpubs.nist.gov/nistpubs/ai/NIST.AI.600-1.pdf

Statement: ISO/IEC 42001:2023 contains 38 reference controls in normative Annex A, organized across nine control objectives. | Source: ISO/IEC 42001:2023 | https://www.iso.org/standard/42001

Statement: The EU AI Act penalty for high-risk system obligation violations is up to EUR 15 million or 3% of worldwide annual turnover. | Source: EU AI Act, Article 99 | https://ai-act-service-desk.ec.europa.eu/en/ai-act/article-99

Statement: The full high-risk AI system compliance deadline under the EU AI Act is August 2, 2026. | Source: European Commission, EU AI Act Regulatory Framework | https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

Statement: The joint “Deploying AI Systems Securely” guidance was co-authored by NSA AISC, CISA, FBI, ACSC, CCCS, NCSC-NZ, and NCSC-UK, and published April 2024. | Source: NSA/CISA/FBI/ACSC/CCCS/NCSC-NZ/NCSC-UK, “Deploying AI Systems Securely,” April 2024, TLP:CLEAR | https://media.defense.gov/2024/apr/15/2003439257/-1/-1/0/csi-deploying-ai-systems-securely.pdf

Statement: NIST AI RMF 1.0 was released January 26, 2023, developed through an 18-month public comment process with more than 240 contributing organizations. | Source: NIST AI 100-1 | https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf

Top 5 Prestigious Sources

NIST AI Risk Management Framework 1.0 (NIST AI 100-1), U.S. Department of Commerce / NIST, January 2023 | https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf

European Parliament and Council of the European Union, Regulation (EU) 2024/1689 (EU AI Act), Articles 9, 12, 13, 14, Official Journal version | https://artificialintelligenceact.eu/

NSA/CISA/FBI et al., “Deploying AI Systems Securely,” Joint Cybersecurity Information Sheet, April 2024 | https://media.defense.gov/2024/apr/15/2003439257/-1/-1/0/csi-deploying-ai-systems-securely.pdf

Berryville Institute of Machine Learning (BIML), “An Architectural Risk Analysis of Large Language Models,” McGraw, Figueroa, McMahon, Bonett, January 2024 | https://berryvilleiml.com/docs/BIML-LLM24.pdf

ISO/IEC 42001:2023, Information Technology: Artificial Intelligence: Management System | https://www.iso.org/standard/42001

Peace. Stay curious! End of transmission.